Fine-Tuning Robot Policies While Maintaining User Privacy

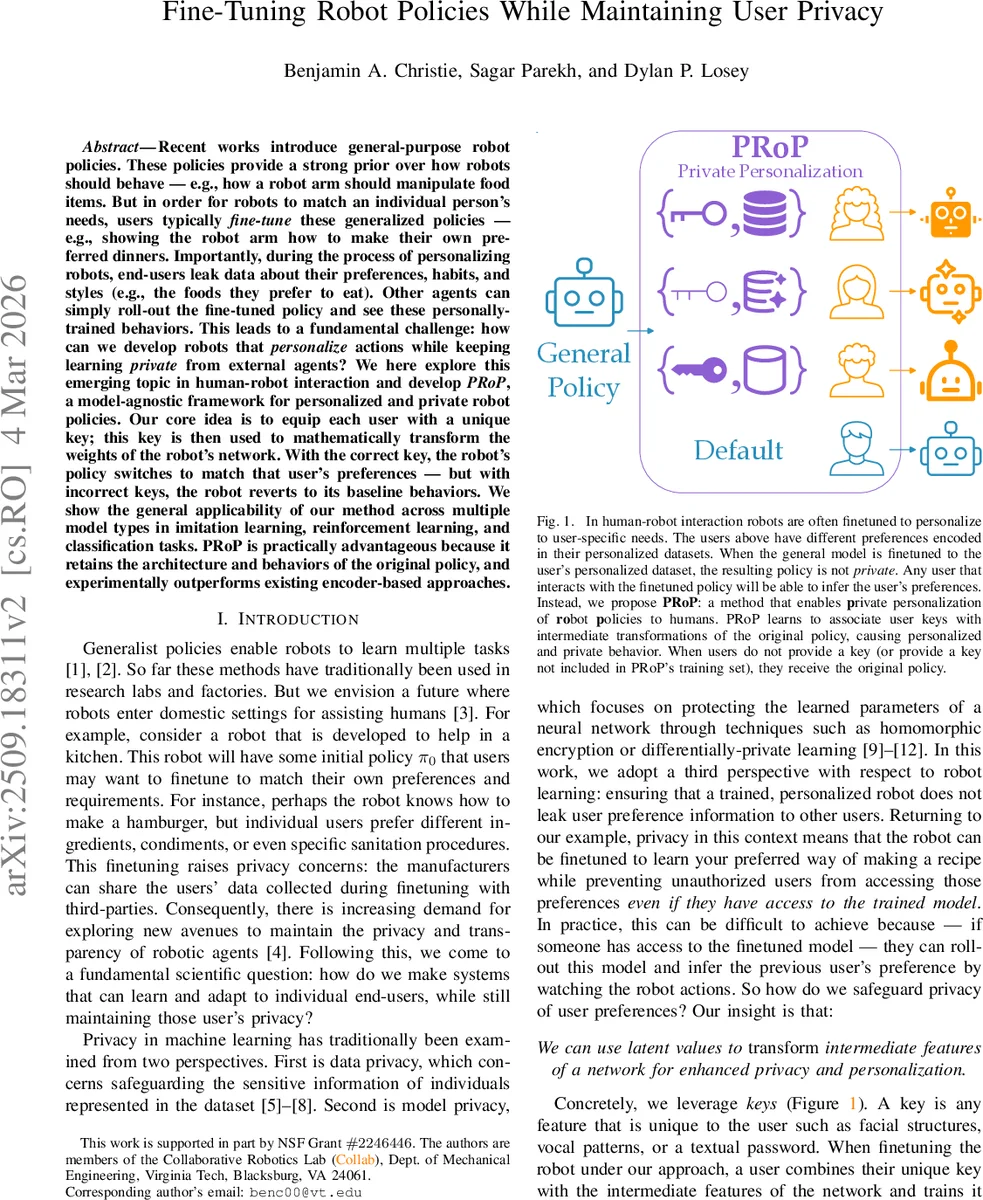

Recent works introduce general-purpose robot policies. These policies provide a strong prior over how robots should behave – e.g., how a robot arm should manipulate food items. But in order for robots to match an individual person’s needs, users typically fine-tune these generalized policies – e.g., showing the robot arm how to make their own preferred dinners. Importantly, during the process of personalizing robots, end-users leak data about their preferences, habits, and styles (e.g., the foods they prefer to eat). Other agents can simply roll-out the fine-tuned policy and see these personally-trained behaviors. This leads to a fundamental challenge: how can we develop robots that personalize actions while keeping learning private from external agents? We here explore this emerging topic in human-robot interaction and develop PRoP, a model-agnostic framework for personalized and private robot policies. Our core idea is to equip each user with a unique key; this key is then used to mathematically transform the weights of the robot’s network. With the correct key, the robot’s policy switches to match that user’s preferences – but with incorrect keys, the robot reverts to its baseline behaviors. We show the general applicability of our method across multiple model types in imitation learning, reinforcement learning, and classification tasks. PRoP is practically advantageous because it retains the architecture and behaviors of the original policy, and experimentally outperforms existing encoder-based approaches.

💡 Research Summary

The paper addresses a critical privacy gap in personalized robot learning: while general-purpose robot policies can be fine‑tuned to individual users, the resulting fine‑tuned models leak personal preferences to anyone who can access the policy. Existing privacy work focuses on protecting training data (differential privacy) or encrypting model weights (homomorphic encryption), but these approaches either degrade performance or are computationally infeasible for real‑time robotics.

To solve this, the authors propose PRoP (Private Robot Policies), a model‑agnostic framework that binds each user to a unique secret key. The key is not fed directly as an additional input (which would require changing the network architecture); instead, a set of lightweight key encoders maps the key into latent vectors that are used to affine‑transform the intermediate activations of the pre‑trained policy network. Concretely, for selected hidden layers i, an encoder Δiφi(k) produces a vector of the same dimension as the layer’s output. The layer’s activation hi is then modified as hi′ = hi ⊙ (1 + Wi·Δiφi(k)) + bi·Δiφi(k), where Wi and bi are learnable parameters and ⊙ denotes element‑wise multiplication. If no key is supplied, Δiφi(k) outputs (or is forced to) zero, leaving hi unchanged and causing the network to behave exactly like the original general‑purpose policy π★.

Training proceeds by augmenting the original loss L★ with a personalization loss Lp(k) that captures the user‑specific objective (e.g., a preferred recipe, a custom reward function, or a biased labeling style). The combined objective J′ = J★ + λ·Lp(k) is minimized jointly over the original weights θ and the encoder parameters φ. Because the transformation is linear in the hidden space, the method does not alter the network’s capacity or require architectural redesign, making it compatible with MLPs, CNNs, transformers, and other common robot policy networks.

Security analysis shows that the mapping from key to latent vector is non‑linear (MLP + tanh) and the affine transformation is keyed, so an adversary who only possesses the model parameters cannot recover the user’s preferences without the correct key. The key is treated as a high‑entropy bit string (e.g., a password or biometric hash), making brute‑force attacks computationally prohibitive.

The authors evaluate PRoP across four domains:

-

Simulation of a kitchen robot arm – Users fine‑tune the arm to prepare personalized meals. With the correct key, PRoP achieves a 5‑12 % improvement in task success over naïve fine‑tuning, while with an incorrect key the robot reverts to the baseline behavior.

-

Atari reinforcement learning – Different users define distinct reward shaping functions. PRoP yields higher average scores for the intended user and maintains baseline performance for others, outperforming a key‑conditioned encoder baseline by a large margin.

-

Image classification (MNIST/CIFAR) – Users introduce systematic labeling biases (e.g., relabeling “3” as “5”). PRoP learns to reproduce each user’s biased classifier when the correct key is supplied, yet retains near‑perfect standard accuracy when no key is given.

-

Task allocation in multi‑robot/human teams – User preferences for task priority are encoded via keys, leading to personalized scheduling without affecting the default round‑robin policy.

Across all experiments, PRoP demonstrates: (a) strong personalization (higher task‑specific metrics), (b) negligible performance loss for non‑authorized users, and (c) resistance to key‑guessing attacks (success rate <30 % compared to >70 % for prior encoder‑only methods).

Limitations include the need for secure key management (loss, revocation, and distribution are external concerns), increased parameter count due to per‑layer encoders, and the assumption of a static secret key. Future work is suggested on dynamic key regeneration, multimodal biometric keys, meta‑learning for simultaneous key‑policy optimization, and scaling to large transformer‑based robot policies.

In summary, PRoP provides a practical, low‑overhead solution for “policy‑level” privacy in human‑robot interaction: it preserves the original network architecture, enables seamless personalization, and protects user preferences from unauthorized inference, thereby advancing safe and private deployment of adaptable robots in real‑world settings.

Comments & Academic Discussion

Loading comments...

Leave a Comment