Difficult Examples Hurt Unsupervised Contrastive Learning: A Theoretical Perspective

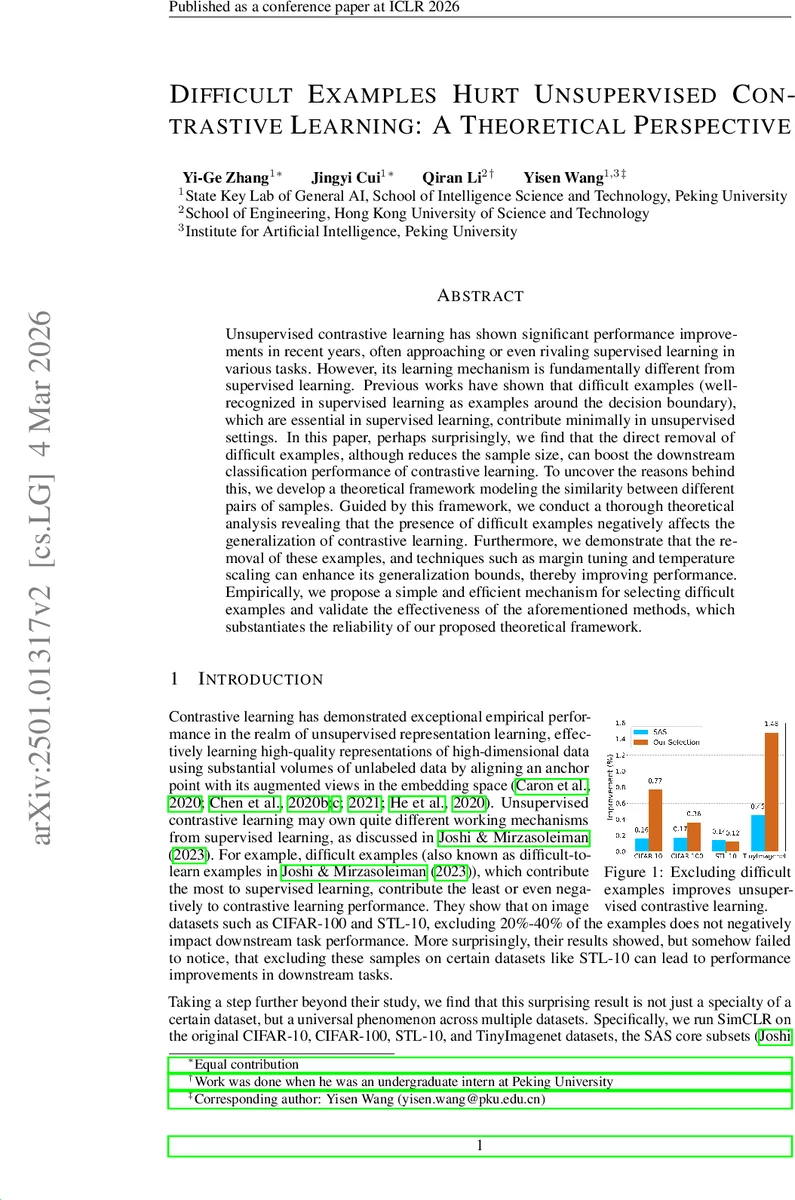

Unsupervised contrastive learning has shown significant performance improvements in recent years, often approaching or even rivaling supervised learning in various tasks. However, its learning mechanism is fundamentally different from supervised learning. Previous works have shown that difficult examples (well-recognized in supervised learning as examples around the decision boundary), which are essential in supervised learning, contribute minimally in unsupervised settings. In this paper, perhaps surprisingly, we find that the direct removal of difficult examples, although reduces the sample size, can boost the downstream classification performance of contrastive learning. To uncover the reasons behind this, we develop a theoretical framework modeling the similarity between different pairs of samples. Guided by this framework, we conduct a thorough theoretical analysis revealing that the presence of difficult examples negatively affects the generalization of contrastive learning. Furthermore, we demonstrate that the removal of these examples, and techniques such as margin tuning and temperature scaling can enhance its generalization bounds, thereby improving performance. Empirically, we propose a simple and efficient mechanism for selecting difficult examples and validate the effectiveness of the aforementioned methods, which substantiates the reliability of our proposed theoretical framework.

💡 Research Summary

**

This paper investigates a counter‑intuitive phenomenon in unsupervised contrastive learning: “difficult examples,” which are crucial for supervised learning because they lie near decision boundaries, actually hurt the performance of contrastive methods. The authors first provide empirical evidence across several standard vision benchmarks (CIFAR‑10/100, STL‑10, Tiny‑ImageNet, and SAS‑core subsets) that removing a modest fraction (20‑40 %) of training samples can improve downstream linear‑probe accuracy, even though the overall sample size is reduced. To make the notion of “difficulty” applicable without labels, they define difficult pairs as inter‑class sample pairs that exhibit unusually high similarity.

To explain this effect theoretically, the paper introduces a similarity graph (also called an augmentation graph) whose nodes are augmented views of the data and whose edge weights encode the joint probability of two views originating from the same natural image. Within this graph, three similarity levels are parameterized: α for same‑class pairs, β for easy inter‑class pairs, and γ for difficult inter‑class pairs, with β < γ < α. This abstraction captures the intuition that difficult examples are highly similar to samples from other classes, making them prone to being clustered incorrectly during self‑supervised pre‑training.

Using the spectral contrastive loss (Lₛₚₑc) and its equivalence to a matrix‑factorization objective, the authors derive linear‑probe error bounds under two scenarios: (1) training without difficult examples, and (2) training with n_d difficult examples per class. Assuming label recoverability from augmentations (error δ) and realizability of the loss minimum, they obtain

-

Theorem 3.3 (no difficult examples):

E₍wo₎ ≤ 4δ/(1‑α) + nα + nrβ + 8δ. -

Theorem 3.4 (with difficult examples):

E₍wd₎ ≤ 4δ/

Comments & Academic Discussion

Loading comments...

Leave a Comment