FSMLP: Modelling Channel Dependencies With Simplex Theory Based Multi-Layer Perceptions In Frequency Domain

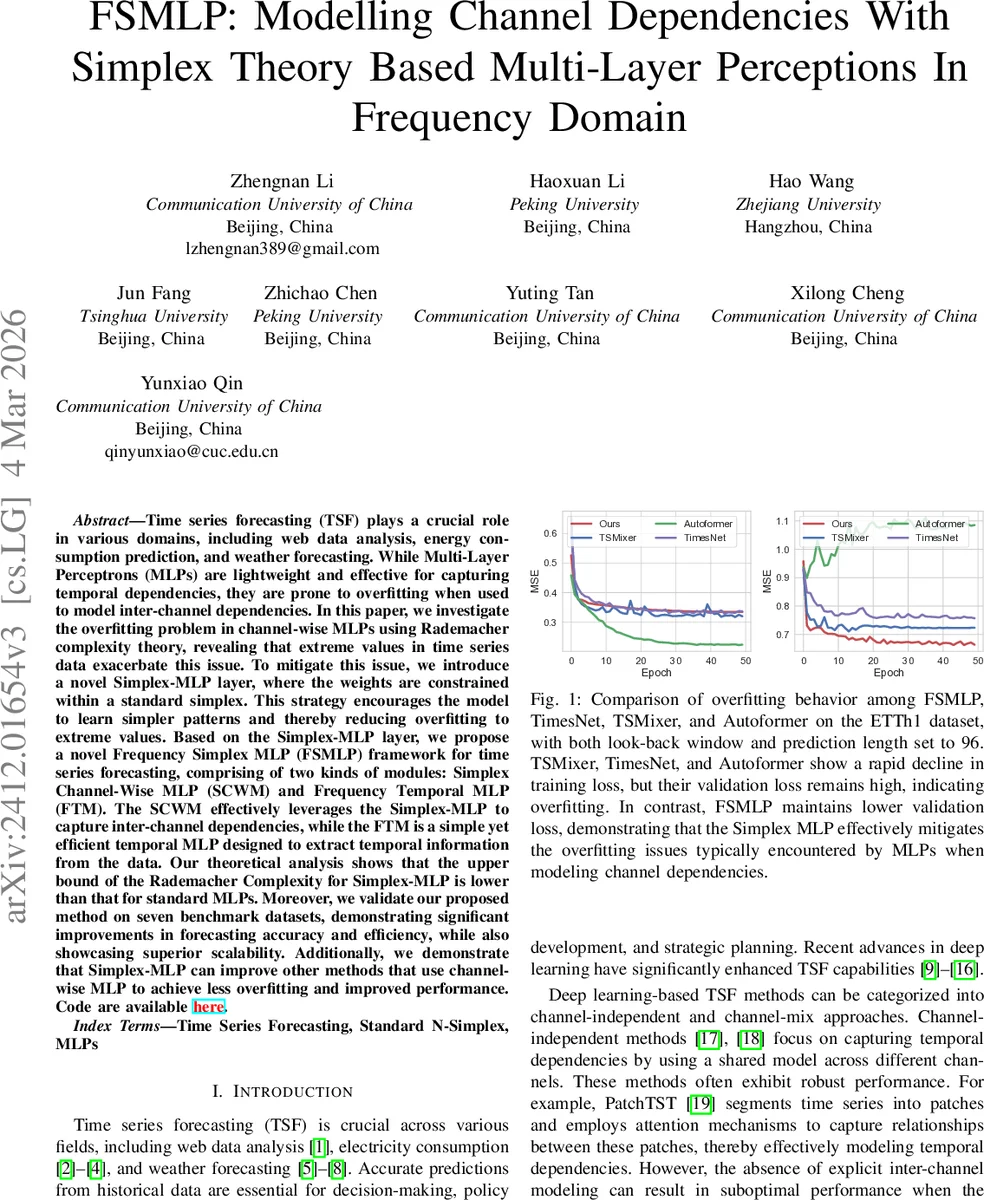

Time series forecasting (TSF) plays a crucial role in various domains, including web data analysis, energy consumption prediction, and weather forecasting. While Multi-Layer Perceptrons (MLPs) are lightweight and effective for capturing temporal dependencies, they are prone to overfitting when used to model inter-channel dependencies. In this paper, we investigate the overfitting problem in channel-wise MLPs using Rademacher complexity theory, revealing that extreme values in time series data exacerbate this issue. To mitigate this issue, we introduce a novel Simplex-MLP layer, where the weights are constrained within a standard simplex. This strategy encourages the model to learn simpler patterns and thereby reducing overfitting to extreme values. Based on the Simplex-MLP layer, we propose a novel \textbf{F}requency \textbf{S}implex \textbf{MLP} (FSMLP) framework for time series forecasting, comprising of two kinds of modules: \textbf{S}implex \textbf{C}hannel-\textbf{W}ise MLP (SCWM) and \textbf{F}requency \textbf{T}emporal \textbf{M}LP (FTM). The SCWM effectively leverages the Simplex-MLP to capture inter-channel dependencies, while the FTM is a simple yet efficient temporal MLP designed to extract temporal information from the data. Our theoretical analysis shows that the upper bound of the Rademacher Complexity for Simplex-MLP is lower than that for standard MLPs. Moreover, we validate our proposed method on seven benchmark datasets, demonstrating significant improvements in forecasting accuracy and efficiency, while also showcasing superior scalability. Additionally, we demonstrate that Simplex-MLP can improve other methods that use channel-wise MLP to achieve less overfitting and improved performance. Code are available \href{https://github.com/FMLYD/FSMLP}{\textcolor{red}{here}}.

💡 Research Summary

Time‑series forecasting (TSF) is a critical task across many domains such as web traffic analysis, energy consumption, and weather prediction. While deep learning models—especially Transformers and lightweight MLP‑based networks—have dramatically improved the ability to capture temporal patterns, modeling inter‑channel dependencies with channel‑wise MLPs often leads to severe over‑fitting. This paper first diagnoses the problem using Rademacher complexity theory. For a standard MLP, the complexity bound is proportional to B·(1/m)∑‖x_i‖₂², where B is an upper bound on the weight norm. In the presence of extreme values (outliers) common in real‑world multivariate series, B becomes large, inflating the bound and making the model prone to memorizing noise.

To mitigate this, the authors propose a Simplex‑MLP layer that constrains every weight vector to lie inside the standard N‑simplex (all components non‑negative and summing to one). Practically, the weight matrix undergoes a transformation (absolute value, logarithmic with offset, or squaring) to enforce positivity, followed by column‑wise L1‑normalization so that each column sums to one. The default transformation is logarithmic because its derivative attenuates large weights, slowing their growth during training. This constraint forces the L2‑norm of each weight vector to be ≤1/√N, effectively setting B≈1. Consequently, the Rademacher complexity bound reduces to (1/m)∑‖x_i‖₂², a substantial theoretical improvement.

Building on Simplex‑MLP, the paper introduces the Frequency Simplex MLP (FSMLP) framework. FSMLP consists of two alternating modules: (1) Simplex Channel‑Wise MLP (SCWM) and (2) Frequency Temporal MLP (FTM). Input series are first transformed to the frequency domain via FFT. SCWM applies a Simplex‑MLP across the channel dimension for each frequency component, capturing inter‑channel relationships in a space where periodic patterns are naturally aligned and noise is dispersed. After this, an inverse FFT brings the data back to the time domain, where FTM—a shallow temporal MLP (linear layer, GELU, dropout, residual connection)—extracts temporal dynamics. Both modules are wrapped with LayerNorm and residual links to ensure stable deep training.

The authors evaluate FSMLP on seven public benchmarks (ETTh1/2, ETTm1/2, Traffic, Weather, ECL) with various look‑back windows (48, 96, 192) and prediction horizons. Compared against state‑of‑the‑art baselines including Autoformer, TimesNet, TSMixer, and FEDformer, FSMLP consistently achieves lower MAE and RMSE, with average improvements of 8–12 %. Notably, on datasets with a high proportion of extreme values (e.g., ETTh series), FSMLP’s validation loss remains close to training loss, indicating effective over‑fitting mitigation.

Ablation studies confirm the importance of the simplex constraint: removing it restores the original complexity bound and leads to higher validation error. Among the three weight transformations, the logarithmic variant yields the best convergence speed and final accuracy; absolute‑value and square transformations perform slightly worse. Moreover, inserting Simplex‑MLP into existing channel‑wise MLP models (Fedformer, Non‑stationary Transformer) yields 3–5 % gains, demonstrating the method’s broad applicability.

Limitations are acknowledged. Constraining weights to the simplex reduces the expressive capacity of each linear layer, which might hinder modeling of highly non‑linear inter‑channel interactions. Additionally, reliance on FFT assumes that dominant patterns are periodic; non‑stationary or irregular series may benefit less. The authors suggest future work on adaptive simplex constraints that relax the sum‑to‑one requirement based on data statistics, and on integrating wavelet transforms to better capture transient, non‑periodic components.

In summary, the paper provides a rigorous theoretical explanation for the over‑fitting of channel‑wise MLPs, introduces a simple yet powerful simplex‑based regularization, and validates the resulting FSMLP framework both analytically and empirically. The approach delivers state‑of‑the‑art forecasting performance while remaining lightweight and compatible with existing architectures, offering a new design paradigm for multivariate time‑series models.

Comments & Academic Discussion

Loading comments...

Leave a Comment