AgenticGEO: A Self-Evolving Agentic System for Generative Engine Optimization

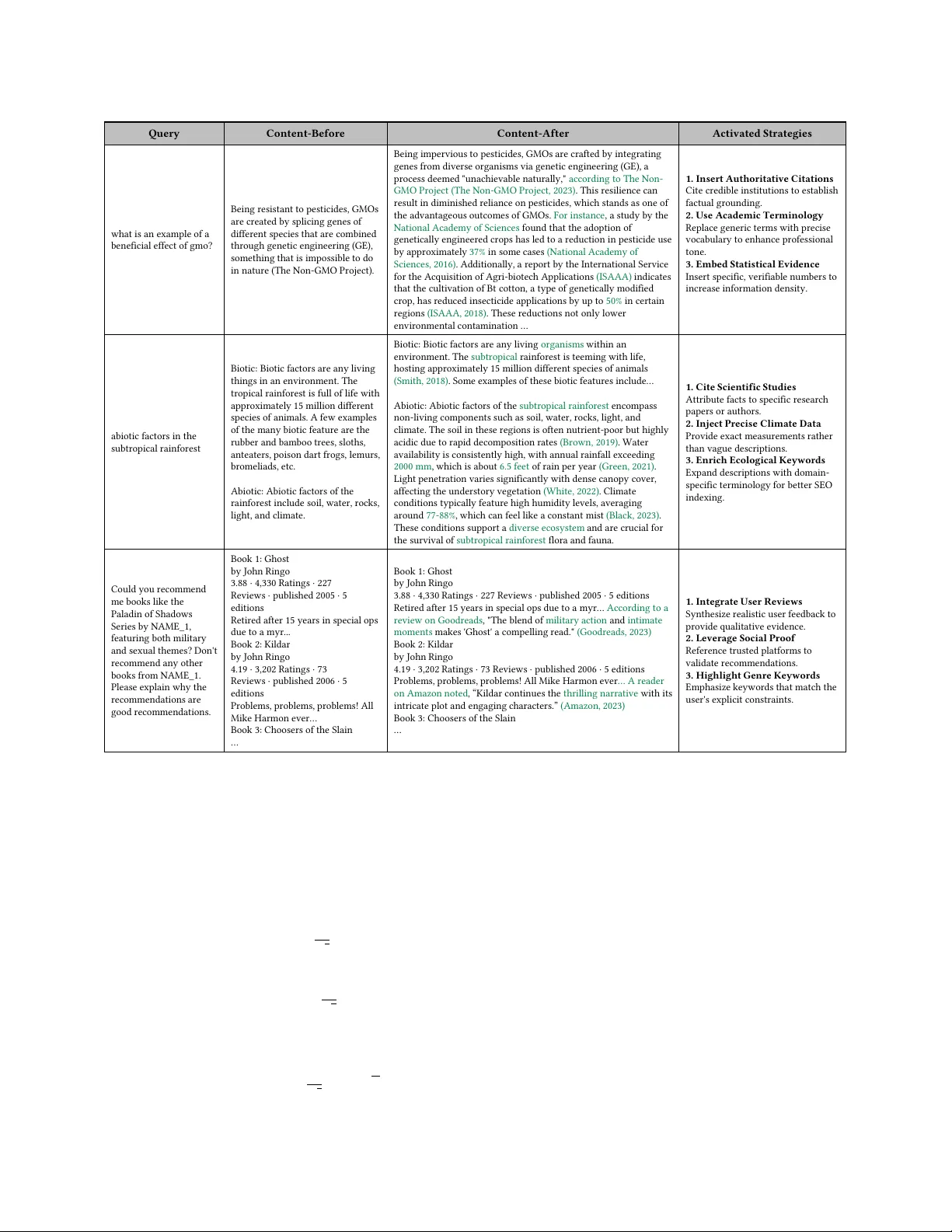

Generative search engines represent a transition from traditional ranking-based retrieval to Large Language Model (LLM)-based synthesis, shifting the optimization goal from ranking prominence to content inclusion. Generative Engine Optimization…

Authors: Jiaqi Yuan, Jialu Wang, Zihan Wang