Process Over Outcome: Cultivating Forensic Reasoning for Generalizable Multimodal Manipulation Detection

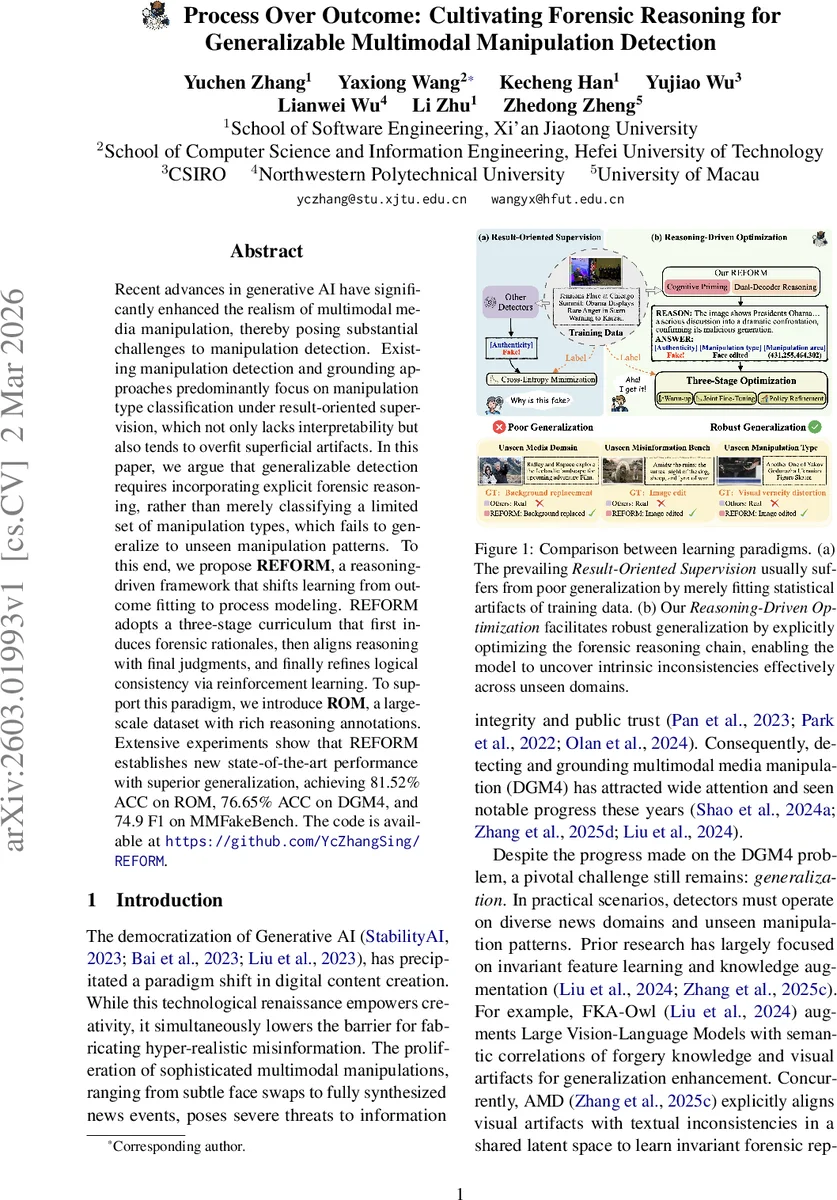

Recent advances in generative AI have significantly enhanced the realism of multimodal media manipulation, thereby posing substantial challenges to manipulation detection. Existing manipulation detection and grounding approaches predominantly focus on manipulation type classification under result-oriented supervision, which not only lacks interpretability but also tends to overfit superficial artifacts. In this paper, we argue that generalizable detection requires incorporating explicit forensic reasoning, rather than merely classifying a limited set of manipulation types, which fails to generalize to unseen manipulation patterns. To this end, we propose REFORM, a reasoning-driven framework that shifts learning from outcome fitting to process modeling. REFORM adopts a three-stage curriculum that first induces forensic rationales, then aligns reasoning with final judgments, and finally refines logical consistency via reinforcement learning. To support this paradigm, we introduce ROM, a large-scale dataset with rich reasoning annotations. Extensive experiments show that REFORM establishes new state-of-the-art performance with superior generalization, achieving 81.52% ACC on ROM, 76.65% ACC on DGM4, and 74.9 F1 on MMFakeBench.

💡 Research Summary

The paper tackles the growing difficulty of detecting multimodal media manipulations generated by advanced generative AI. Existing detection methods largely rely on result‑oriented supervision that trains models to map image‑text pairs directly to binary labels (real/fake) or manipulation‑type categories. This approach encourages the model to latch onto superficial statistical artifacts present in the training data, leading to poor generalization when faced with unseen manipulation patterns or new domains.

To overcome this limitation, the authors propose REFORM (Reasoning‑Enhanced Forensic Optimization via Reinforcement Modeling), a framework that shifts the learning objective from fitting outcomes to modeling the forensic reasoning process itself. REFORM is built around a three‑stage curriculum:

-

Cognitive Reasoning Warm‑up – Using large‑scale data distillation, the model is first taught to generate natural‑language forensic rationales for each multimodal sample. These rationales describe visual inconsistencies (lighting, shadows, boundary artifacts), textual‑visual semantic mismatches, metadata anomalies, and other forensic cues. This stage creates a latent “reasoning space” where the model learns to articulate why a sample is suspicious.

-

Reasoning‑Endowed Joint Fine‑Tuning – The model is then fine‑tuned jointly on the generated rationales and the final truth label. A consistency loss penalizes cases where the rationale contradicts the final decision, forcing the network to align its internal explanation with its verdict. This joint optimization moves the model beyond simple label prediction toward a coherent inference pipeline.

-

Constraint‑Aware Policy Refinement – Finally, the reasoning generation process is treated as a policy and optimized with reinforcement learning. The authors adopt Group Relative Policy Optimization (GRPO), rewarding policies that (i) achieve high final classification accuracy, (ii) maintain logical consistency between rationale and verdict, and (iii) produce linguistically coherent explanations. The group‑relative formulation ensures batch‑level consistency and mitigates exposure bias typical of supervised sequence generation.

To support this paradigm, the authors introduce ROM (Reasoning‑enhanced Omnibus Manipulation), a new large‑scale dataset containing over 704 k multimodal samples. Unlike prior benchmarks that focus mainly on face‑swap or simple text‑image mismatches, ROM spans a wide range of manipulation types—background replacement, scene‑level synthesis, semantic contradictions, and metadata tampering—and provides detailed human‑annotated forensic rationales for each sample.

Extensive experiments demonstrate that REFORM achieves state‑of‑the‑art performance: 81.52 % accuracy on ROM, 76.65 % on the DGM4 benchmark, and 74.9 F1 on MMFakeBench, surpassing strong baselines such as FKA‑Owl and AMD. Crucially, REFORM shows markedly better cross‑domain generalization; when the reasoning annotations are degraded or when the model is evaluated on unseen manipulation styles, performance degrades far less than result‑oriented baselines, confirming that the learned reasoning process captures intrinsic forensic logic rather than dataset‑specific quirks.

The paper also discusses limitations. Reason generation still depends heavily on large language models, which may struggle with highly complex logical chains. The reinforcement learning stage requires careful reward design; inappropriate weighting can lead to over‑optimizing for fluent text at the expense of forensic accuracy. Future work is suggested on incorporating structured logical representations (e.g., proof trees), multi‑agent collaborative reasoning, and model compression for real‑time deployment.

In summary, REFORM introduces a paradigm shift from outcome‑centric to process‑centric learning in multimodal manipulation detection. By explicitly cultivating forensic reasoning through a staged curriculum and reinforcement‑driven policy refinement, the framework achieves both higher detection accuracy and stronger interpretability, setting a new direction for robust, generalizable multimedia forensics.

Comments & Academic Discussion

Loading comments...

Leave a Comment