Dream2Learn: Structured Generative Dreaming for Continual Learning

Continual learning requires balancing plasticity and stability while mitigating catastrophic forgetting. Inspired by human dreaming as a mechanism for internal simulation and knowledge restructuring, we introduce Dream2Learn (D2L), a framework in which a model autonomously generates structured synthetic experiences from its own internal representations and uses them for self-improvement. Rather than reconstructing past data as in generative replay, D2L enables a classifier to create novel, semantically distinct dreamed classes that are coherent with its learned knowledge yet do not correspond to previously observed data. These dreamed samples are produced by conditioning a frozen diffusion model through soft prompt optimization driven by the classifier itself. The generated data are not used to replace memory, but to expand and reorganize the representation space, effectively allowing the network to self-train on internally synthesized concepts. By integrating dreamed classes into continual training, D2L proactively structures latent features to support forward knowledge transfer and adaptation to future tasks. This prospective self-training mechanism mirrors the role of sleep in consolidating and reorganizing memory, turning internal simulations into a tool for improved generalization. Experiments on Mini-ImageNet, FG-ImageNet, and ImageNet-R demonstrate that D2L consistently outperforms strong rehearsal-based baselines and achieves positive forward transfer, confirming its ability to enhance adaptability through internally generated training signals.

💡 Research Summary

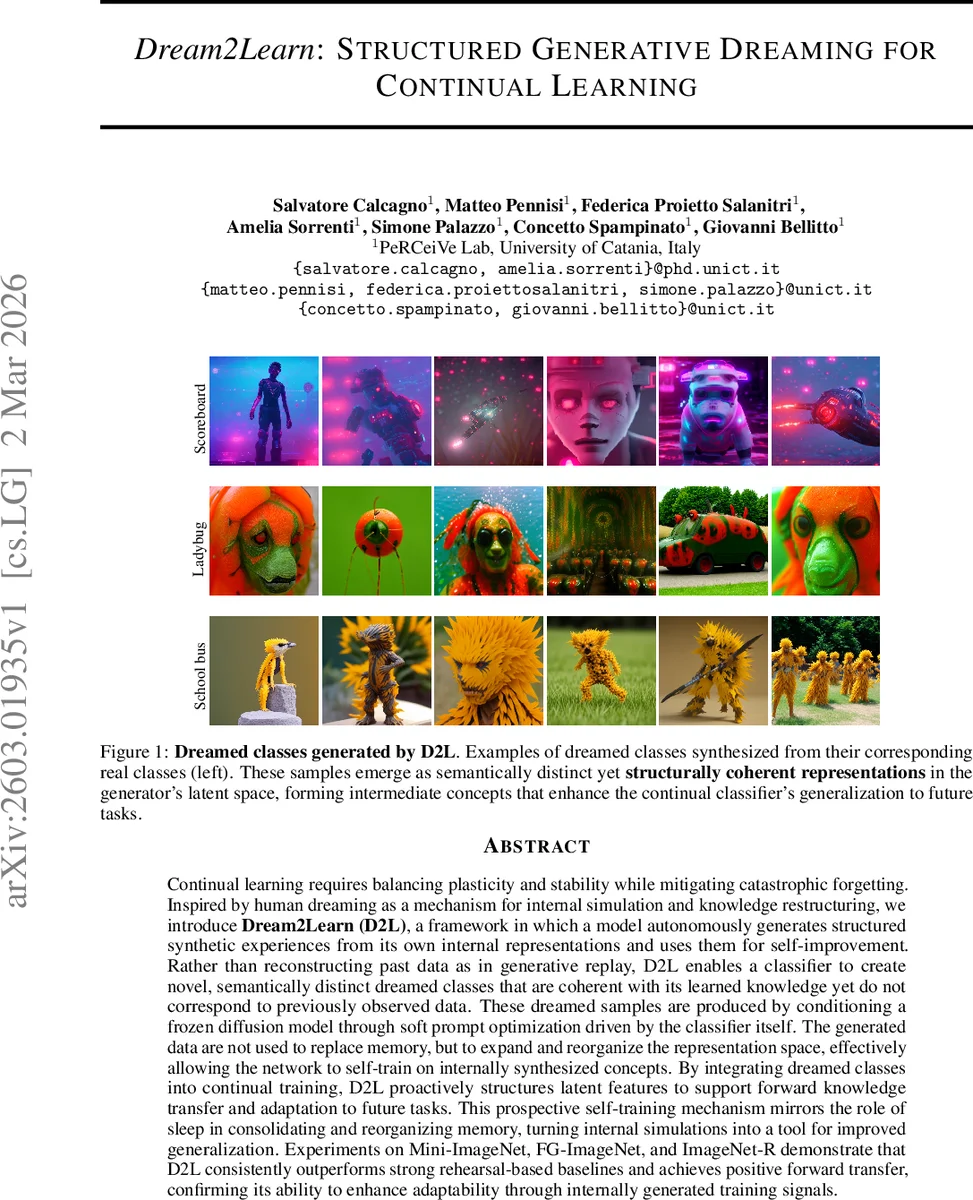

Dream2Learn (D2L) introduces a novel continual learning paradigm inspired by human dreaming. Instead of replaying stored exemplars or reconstructing past data via generative replay, D2L generates entirely new “dreamed” classes that are semantically distinct yet structurally coherent with the model’s existing knowledge. The core mechanism relies on a frozen latent diffusion model (LDM) conditioned by a combination of a fixed textual prompt and a learnable soft prompt for each class. During each task, the classifier’s parameters are fixed while the soft prompts are optimized so that the diffusion model, given any real image, produces a dreamed image that the classifier confidently assigns to the target class. Crucially, the optimization excludes real samples of that class, forcing the generator to explore a latent region that shares visual traits with the class but remains distinct enough to form a new intermediate concept.

These dreamed samples are never stored in the replay buffer; only their prompts are retained in a “dream inventory.” At the start of a new task, each incoming real class is mapped to the output neuron of the most similar existing dream class, encouraging feature reuse and smoothing gradient updates. The classifier is then trained on a mixture of real data and the residual dreamed data from the previous task, combined with a standard continual‑learning loss that leverages a small rehearsal buffer. An auxiliary “Oracle Net” monitors the similarity between real and dreamed images to stop prompt optimization before mode collapse, preserving diversity.

Experiments on three benchmarks—Mini‑ImageNet (5‑task split), FG‑ImageNet (10‑task split), and ImageNet‑R (10‑task split)—show that D2L consistently outperforms strong rehearsal‑based baselines (e.g., iCaRL, ER, CLS‑ER) and recent diffusion‑based generative replay methods (DiffClass, SDDR). Gains range from 2 % to 5 % absolute accuracy, and, importantly, D2L achieves positive forward transfer: the dreamed classes act as pre‑trained intermediate concepts that facilitate learning of future tasks. t‑SNE visualizations reveal that dreamed classes occupy distinct clusters between real classes, confirming that the method reshapes the latent space in a structured way.

The paper also discusses limitations. D2L depends on a large pre‑trained diffusion model, incurring computational overhead. Prompt optimization must be performed per class, which can become costly as the number of tasks grows. Moreover, the semantic quality of dreamed classes is evaluated only indirectly via downstream performance; a more explicit measure of their meaning alignment would be valuable.

In summary, Dream2Learn reframes generative replay from a retrospective memory‑reconstruction tool into a prospective “dreaming” mechanism that proactively structures representations for future learning. By leveraging frozen diffusion models and soft‑prompt optimization, D2Learn enables continual learners to self‑generate useful training signals, improving both stability and plasticity. Future work may explore lightweight generators, shared or meta‑learned prompts, multimodal dreaming, and explicit semantic evaluation of dreamed concepts to broaden the applicability and efficiency of the approach.

Comments & Academic Discussion

Loading comments...

Leave a Comment