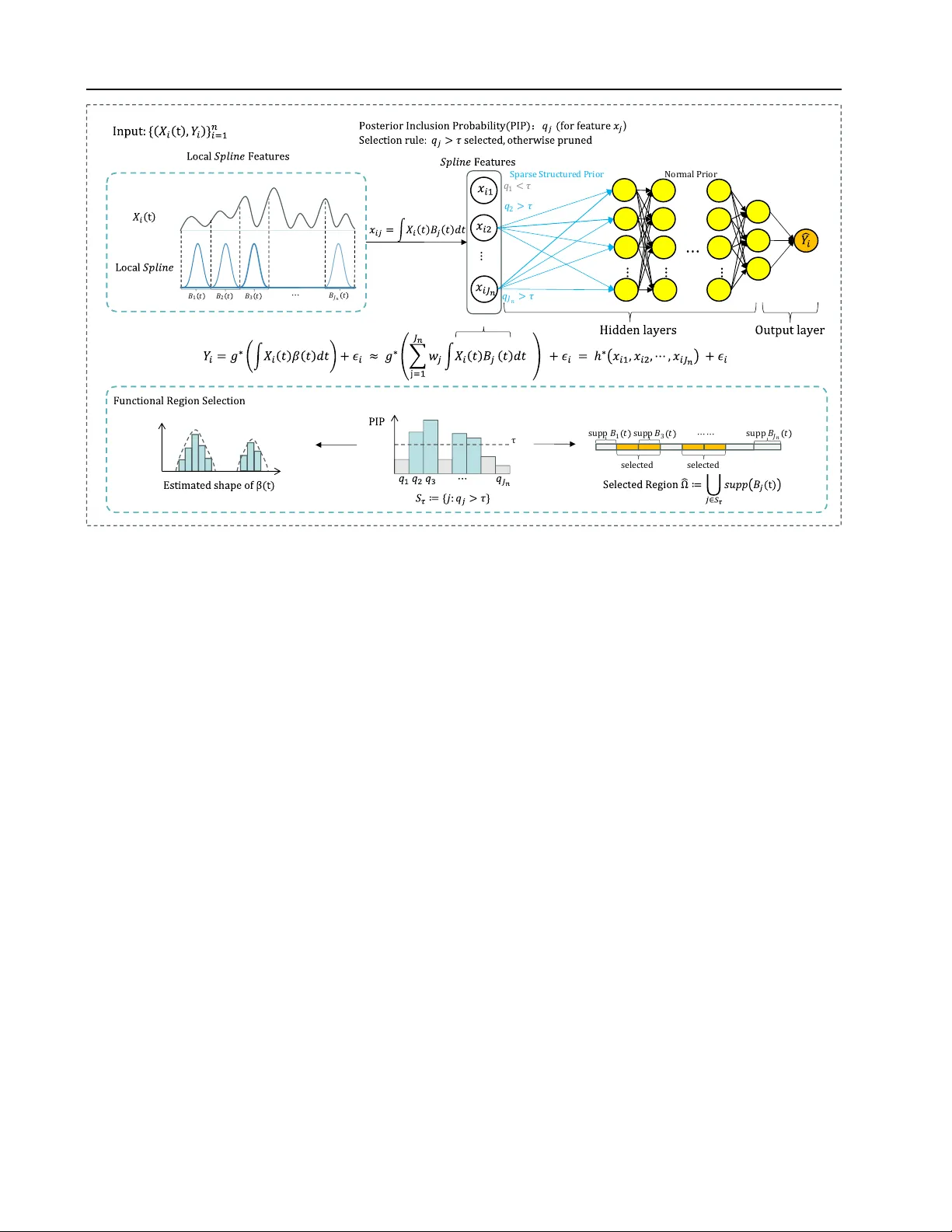

Sparse Bayesian Deep Functional Learning with Structured Region Selection

In modern applications such as ECG monitoring, neuroimaging, wearable sensing, and industrial equipment diagnostics, complex and continuously structured data are ubiquitous, presenting both challenges and opportunities for functional data analysis. H…

Authors: Xiaoxian Zhu, Yingmeng Li, Shuangge Ma