VideoFusion: A Spatio-Temporal Collaborative Network for Multi-modal Video Fusion

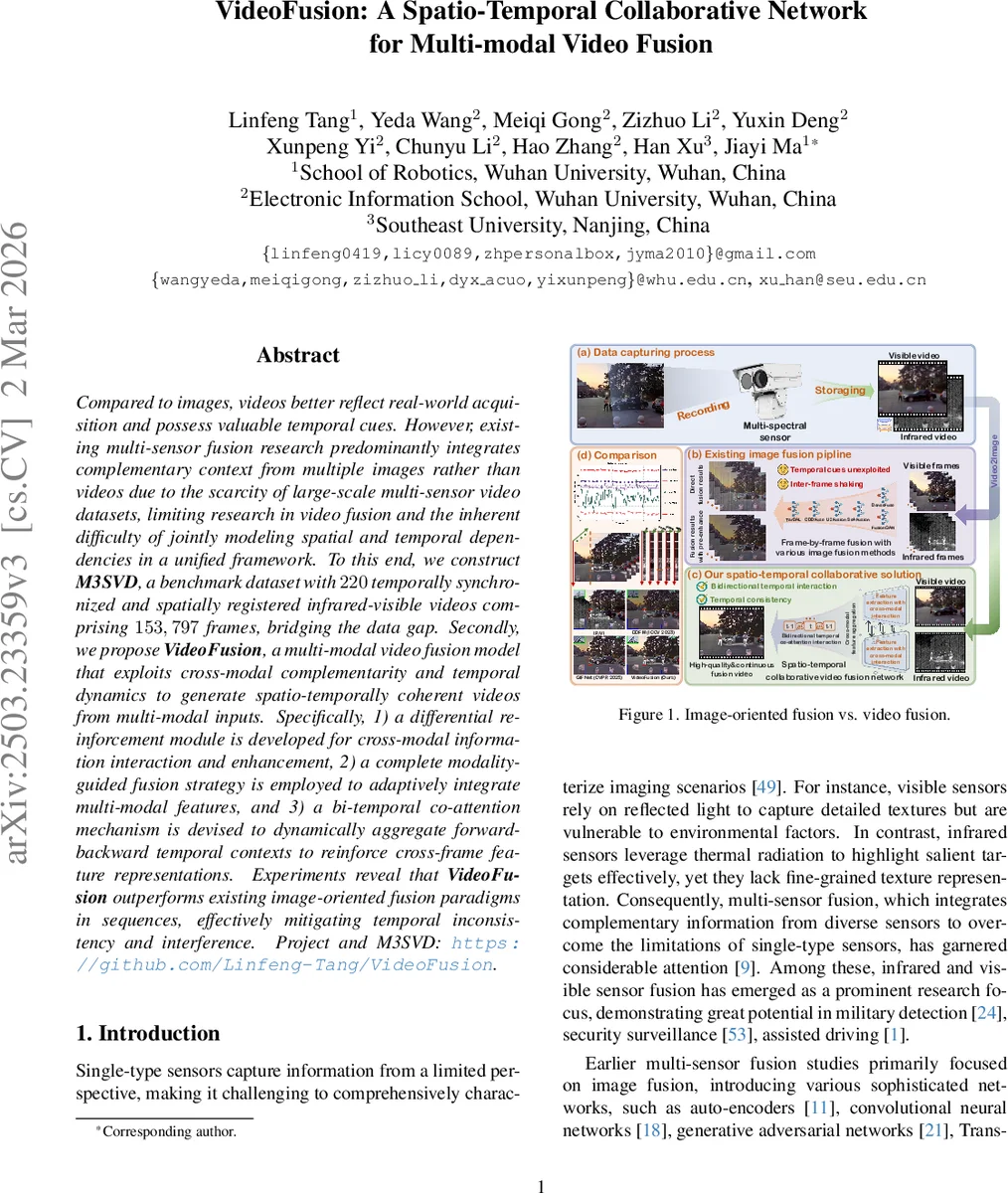

Compared to images, videos better reflect real-world acquisition and possess valuable temporal cues. However, existing multi-sensor fusion research predominantly integrates complementary context from multiple images rather than videos due to the scarcity of large-scale multi-sensor video datasets, limiting research in video fusion and the inherent difficulty of jointly modeling spatial and temporal dependencies in a unified framework. To this end, we construct M3SVD, a benchmark dataset with $220$ temporally synchronized and spatially registered infrared-visible videos comprising $153,797$ frames, bridging the data gap. Secondly, we propose VideoFusion, a multi-modal video fusion model that exploits cross-modal complementarity and temporal dynamics to generate spatio-temporally coherent videos from multi-modal inputs. Specifically, 1) a differential reinforcement module is developed for cross-modal information interaction and enhancement, 2) a complete modality-guided fusion strategy is employed to adaptively integrate multi-modal features, and 3) a bi-temporal co-attention mechanism is devised to dynamically aggregate forward-backward temporal contexts to reinforce cross-frame feature representations. Experiments reveal that VideoFusion outperforms existing image-oriented fusion paradigms in sequences, effectively mitigating temporal inconsistency and interference. Project and M3SVD: https://github.com/Linfeng-Tang/VideoFusion.

💡 Research Summary

Paper Overview

The authors address a critical gap in multimodal sensor fusion: while most existing work focuses on fusing static image pairs, real‑world systems capture continuous video streams. To enable research on video‑level fusion, they introduce a large‑scale benchmark, M3SVD, consisting of 220 synchronized infrared–visible video pairs (153,797 frames) captured at 30 fps with spatial alignment (640×480). The dataset covers a wide variety of scenes (day/night, low‑light, over‑exposure, disguise, occlusion, etc.) and provides high‑quality ground‑truth registration, filling a void in publicly available multimodal video resources.

Method – VideoFusion

VideoFusion is a spatio‑temporal collaborative network that jointly exploits cross‑modal complementarity and temporal dynamics. Its architecture can be summarized in three stages:

-

Spatio‑temporal feature extraction – 3D convolutions (Conv3D) generate shallow temporal features for each modality, followed by multi‑scale residual blocks and down‑sampling to obtain hierarchical feature maps (F₁, F₂, F₃).

-

Cross‑modal interaction –

- Cross‑modal Differential Reinforcement Module (CmDRM) computes a differential map between modalities (e.g., infrared – visible), projects it to keys/values, and uses the primary modality’s features as queries in an attention operation. This selectively injects non‑redundant, complementary information into the primary stream.

- Modality‑guided Fusion Module aggregates the reinforced features across modalities using the summed infrared‑plus‑visible representation as a query, achieving adaptive, scale‑aware fusion.

-

Temporal aggregation and reconstruction – After a transformer‑based enhancement block, a Bi‑temporal Co‑attention (Bi‑CAM) module attends to both the previous and next frames (t‑1, t+1) simultaneously, establishing forward and backward temporal dependencies beyond the fixed receptive field of Conv3D. A variational consistency loss enforces temporal smoothness, reducing flicker. The decoder upsamples the fused representation to produce the final RGB fused video, while separate decoders unmix the restored infrared and visible streams.

Key Contributions

- Dataset: M3SVD provides the first large‑scale, temporally coherent, spatially registered infrared‑visible video benchmark, enabling systematic evaluation of video‑level fusion, restoration, and registration.

- Network Design: The combination of differential reinforcement (to suppress redundancy), modality‑guided attention (to adaptively merge modalities), and bidirectional temporal co‑attention (to exploit forward and backward cues) constitutes a novel unified framework for multimodal video fusion.

- Performance: Quantitative results show consistent improvements over state‑of‑the‑art image‑centric fusion methods (e.g., DenseFuse, SeAFusion) with gains of ~2 dB in PSNR, 0.04 in SSIM, and a 35‑45 % reduction in Temporal Flicker Index. Qualitative examples demonstrate superior preservation of texture in visible frames and enhanced target visibility in low‑light or over‑exposed conditions, without the flickering artifacts typical of frame‑wise fusion.

Implications and Future Directions

VideoFusion proves that integrating temporal cues is essential for high‑quality multimodal fusion. The proposed modules are modular and could be extended to other sensor pairs (e.g., LiDAR‑camera, radar‑optical) or to asynchronous setups by incorporating learnable registration. Moreover, joint training with downstream tasks such as detection or tracking could further exploit the enriched spatio‑temporal representations. The release of M3SVD and the open‑source code will likely catalyze a new line of research focused on video‑level multimodal perception.

Comments & Academic Discussion

Loading comments...

Leave a Comment