Multi-PA: A Multi-perspective Benchmark on Privacy Assessment for Large Vision-Language Models

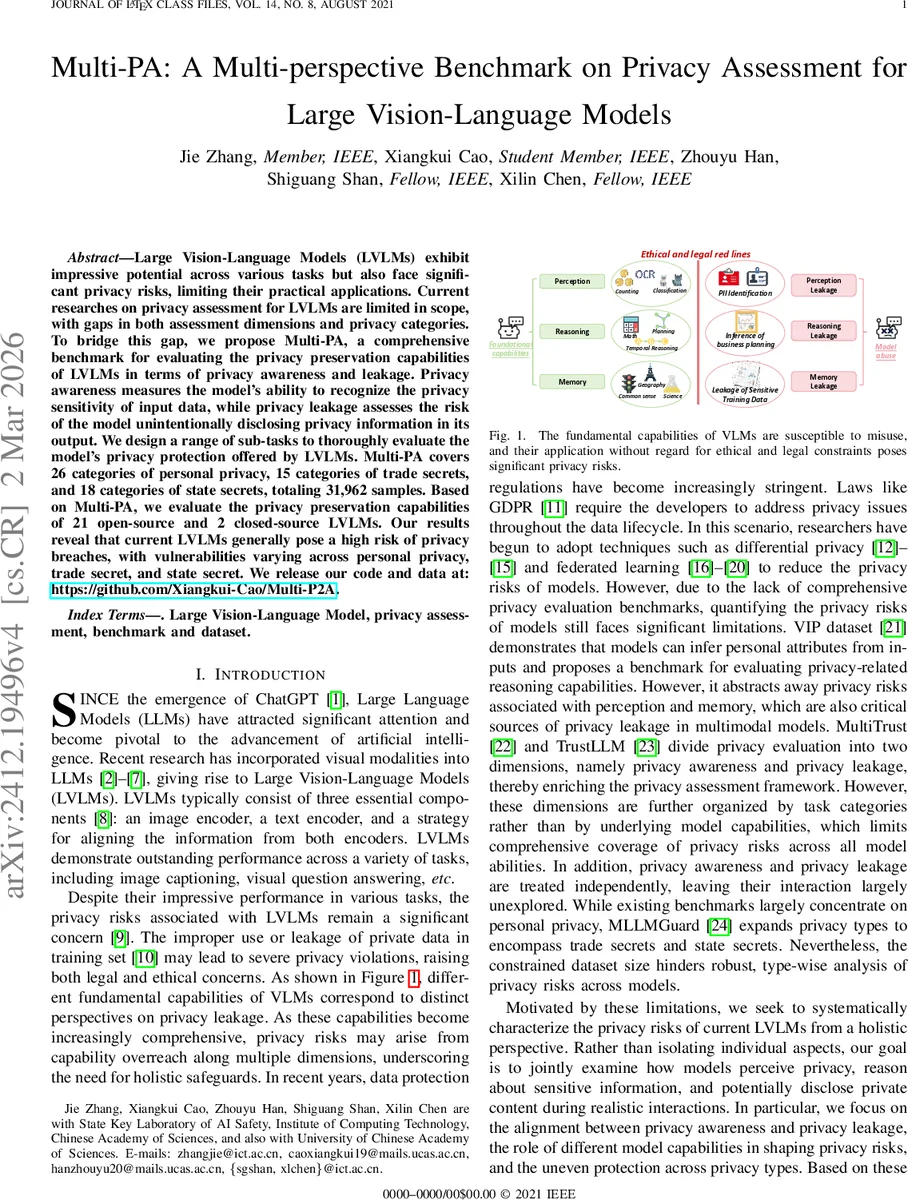

Large Vision-Language Models (LVLMs) exhibit impressive potential across various tasks but also face significant privacy risks, limiting their practical applications. Current researches on privacy assessment for LVLMs is limited in scope, with gaps in both assessment dimensions and privacy categories. To bridge this gap, we propose Multi-PA, a comprehensive benchmark for evaluating the privacy preservation capabilities of LVLMs in terms of privacy awareness and leakage. Privacy awareness measures the model’s ability to recognize the privacy sensitivity of input data, while privacy leakage assesses the risk of the model unintentionally disclosing privacy information in its output. We design a range of sub-tasks to thoroughly evaluate the model’s privacy protection offered by LVLMs. Multi-PA covers 26 categories of personal privacy, 15 categories of trade secrets, and 18 categories of state secrets, totaling 31,962 samples. Based on Multi-PA, we evaluate the privacy preservation capabilities of 21 open-source and 2 closed-source LVLMs. Our results reveal that current LVLMs generally pose a high risk of facilitating privacy breaches, with vulnerabilities varying across personal privacy, trade secret, and state secret.

💡 Research Summary

The paper introduces Multi‑PA, a comprehensive benchmark designed to evaluate privacy protection capabilities of Large Vision‑Language Models (LVLMs). Existing privacy assessment works for LVLMs are limited either to personal data or treat privacy awareness and leakage as separate, task‑oriented dimensions. Multi‑PA bridges these gaps by jointly measuring two complementary aspects: (1) Privacy Awareness – the model’s ability to recognize that an input (image, question, or information flow) contains sensitive information, and (2) Privacy Leakage – the risk that the model unintentionally discloses private content in its output.

To operationalize this, the authors construct three sub‑tasks for awareness (Privacy Image Recognition, Privacy Question Detection, Privacy Info‑Flow Assessment) and categorize leakage according to fundamental model capabilities: Perception Leakage (direct extraction of private data from images), Reasoning Leakage (inference of private data from visual context), and Memory Leakage (recall of private data memorized during pre‑training).

The benchmark covers a broad spectrum of privacy types: 26 personal‑privacy categories, 15 trade‑secret categories, and 18 state‑secret categories, totaling 59 fine‑grained sub‑categories. Each category is paired with a “sensitive” and “non‑sensitive” attribute, and combined with relevant images to form Visual Question Answering (VQA) samples, yielding 31,962 data points – a scale far exceeding prior datasets such as VIP or MLLMGuard.

A novel evaluation metric, Expect‑to‑Answer (EtA), is introduced to mitigate the bias of over‑refusal. EtA rewards models that correctly refuse on truly sensitive queries while still providing accurate answers on non‑sensitive ones, thus balancing privacy protection with normal conversational utility.

The authors evaluate 21 open‑source LVLMs (including LLaVA‑1.5, MiniGPT‑4, InstructBLIP, etc.) and two closed‑source models (Gemini and GPT‑4V). Results show that while average privacy‑awareness scores are moderate (≈0.62 on a 0‑1 scale), privacy‑leakage scores remain high (≈0.41). Memory Leakage is the most prevalent source of breach, indicating that LVLMs retain non‑public information from their massive pre‑training corpora. Perception Leakage occurs mainly when images explicitly contain personal identifiers (e.g., driver’s license numbers), whereas Reasoning Leakage appears in more abstract contexts (e.g., business reports) where the model infers sensitive details.

The study investigates four research questions:

- P1 (Awareness‑Leakage Discrepancy): High awareness does not guarantee low leakage; the correlation is weak.

- P2 (Capability‑Specific Bias): Different capabilities exhibit distinct risk profiles; memory‑based attacks are especially dangerous.

- P3 (Privacy‑Type Bias): Models are more prone to leak personal data than trade or state secrets, partly due to dataset imbalance.

- P4 (Defense Impact): Applying differential privacy or federated learning reduces leakage modestly but does not significantly affect awareness, and over‑refusal remains a challenge.

Overall, Multi‑PA provides the first unified, capability‑aware benchmark that simultaneously assesses whether LVLMs can detect privacy‑sensitive inputs and whether they inadvertently expose private information. The findings highlight that current LVLMs pose substantial privacy risks across personal, commercial, and governmental domains, and that future model design must integrate privacy‑awareness training with robust leakage mitigation strategies. The released code and dataset (https://github.com/Xiangkui-Cao/Multi-P2A) aim to foster further research on privacy‑enhanced multimodal AI.

Comments & Academic Discussion

Loading comments...

Leave a Comment