VLA-Reasoner: Empowering Vision-Language-Action Models with Reasoning via Online Monte Carlo Tree Search

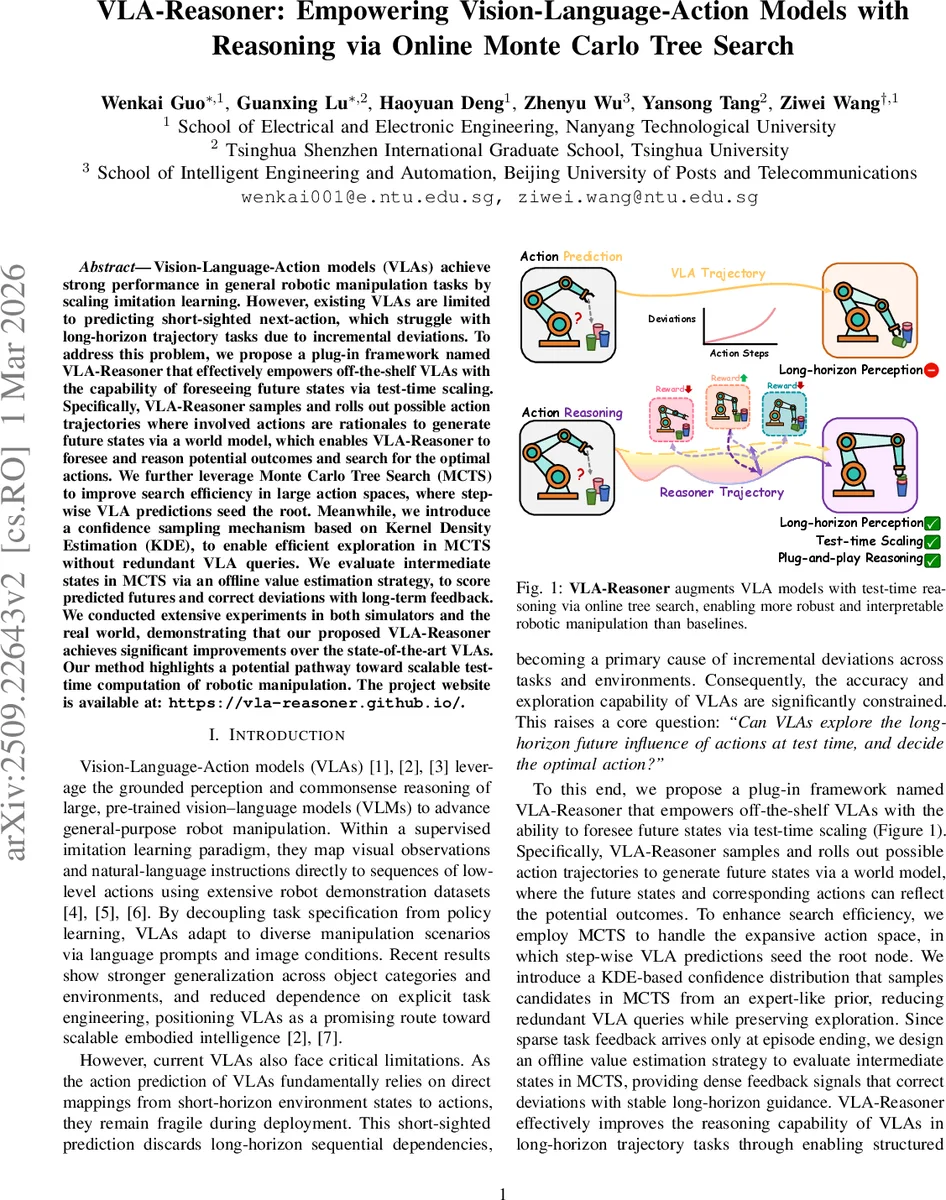

Vision-Language-Action models (VLAs) achieve strong performance in general robotic manipulation tasks by scaling imitation learning. However, existing VLAs are limited to predicting short-sighted next-action, which struggle with long-horizon trajectory tasks due to incremental deviations. To address this problem, we propose a plug-in framework named \method that effectively empowers off-the-shelf VLAs with the capability of foreseeing future states via test-time scaling. Specifically, \method samples and rolls out possible action trajectories where involved actions are rationales to generate future states via a world model, which enables \method to foresee and reason potential outcomes and search for the optimal actions. We further leverage Monte Carlo Tree Search (MCTS) to improve search efficiency in large action spaces, where step-wise VLA predictions seed the root. Meanwhile, we introduce a confidence sampling mechanism based on Kernel Density Estimation (KDE), to enable efficient exploration in MCTS without redundant VLA queries. We evaluate intermediate states in MCTS via an offline value estimation strategy, to score predicted futures and correct deviations with long-term feedback. We conducted extensive experiments in both simulators and the real world, demonstrating that our proposed VLA-Reasoner achieves significant improvements over the state-of-the-art VLAs. Our method highlights a potential pathway toward scalable test-time computation of robotic manipulation. The project website is available at: https://vla-reasoner.github.io/.

💡 Research Summary

The paper tackles a fundamental limitation of current Vision‑Language‑Action (VLA) models: their predictions are short‑sighted, relying only on the current observation and language instruction to output the next robot action. While this design enables impressive zero‑shot generalization, it leads to compounding errors in long‑horizon tasks because each step ignores the downstream consequences of its action. To remedy this, the authors introduce VLA‑Reasoner, a plug‑in framework that endows any off‑the‑shelf VLA with test‑time reasoning capabilities through an online Monte‑Carlo Tree Search (MCTS) guided by a learned world model, a kernel‑density‑estimation (KDE) prior, and a visual value network.

Core Idea

At deployment, the VLA’s one‑step prediction is taken as the root of a search tree. The tree is expanded by sampling candidate actions from a KDE that approximates the distribution of historically successful actions. Each candidate is rolled out in a learned world model that predicts the next visual observation given the current state and action. A lightweight visual value network, trained offline on down‑sampled image sequences with interpolated progress labels, evaluates the intermediate predicted states. MCTS then propagates these values upward, using a UCB (Upper Confidence Bound) selection rule that balances exploitation of high‑value nodes with exploration of less‑visited actions. After a fixed number of iterations, the best child of the root is selected as the Reasoner action. The final executed command is a convex combination of the original VLA action and the Reasoner action, controlled by a scalar α (typically 0.3‑0.5).

Technical Contributions

- Test‑time scaling – The framework adds a planning layer on top of a frozen VLA without retraining the policy, preserving the benefits of large‑scale imitation learning while mitigating its myopic nature.

- MCTS adaptation for manipulation – Traditional MCTS assumes discrete, low‑dimensional actions. The authors adapt it to high‑dimensional continuous robot commands by (a) using KDE to generate a compact, expert‑like prior over actions, and (b) estimating visit counts directly from KDE probabilities, thus avoiding costly repeated VLA queries.

- World model integration – A learned action‑aware dynamics model predicts future RGB‑D observations conditioned on robot actions. The model is fine‑tuned on a modest robot dataset and operates in latent space to keep inference fast enough for online use.

- Visual value estimation – Recognizing that sparse task rewards are only available at episode termination, the authors train a ResNet‑34‑based MLP to regress a progress score from visual frames. This dense value signal supplies the MCTS back‑propagation step with meaningful gradients, enabling the tree to favor trajectories that visibly move toward the goal.

- Action injection mechanism – By blending the Reasoner’s long‑horizon suggestion with the VLA’s short‑horizon prediction, the system retains responsiveness to immediate perception while correcting for anticipated drift.

Experimental Validation

The authors evaluate on both simulation (the LIBERO benchmark) and real‑world robot experiments using a UR5e arm. Baselines include several state‑of‑the‑art VLA models (e.g., RT‑1, SayCan) and ablations that remove KDE, the value network, or the MCTS component. Results show:

- In simulation, VLA‑Reasoner improves success rates by 12‑18 percentage points across a suite of multi‑step tasks.

- On the real robot, success rates increase from ~62 % to ~78 % on tasks requiring four or more sequential manipulations.

- Ablations reveal that KDE reduces the number of required VLA queries by ~70 %, while the visual value network cuts down the average tree depth needed for convergence. Without MCTS, performance drops to near‑baseline, confirming that the planning layer is essential.

Limitations and Future Directions

The approach hinges on the fidelity of the world model; inaccuracies in contact dynamics can mislead the tree. KDE, while effective for moderate‑dimensional action spaces, may struggle as the dimensionality grows, suggesting a need for more expressive density models (e.g., normalizing flows). The scalar α is manually set per task; an adaptive scheme could further improve robustness. The authors propose extending the value function to incorporate force/torque feedback and exploring meta‑learning for automatic hyper‑parameter tuning.

Conclusion

VLA‑Reasoner demonstrates that a lightweight, test‑time planning overlay can dramatically enhance the long‑horizon competence of existing VLA policies without retraining them. By marrying a world model, KDE‑driven action priors, and dense visual value estimation within an MCTS framework, the system achieves substantial gains in both simulated and real‑world manipulation. This work opens a promising pathway toward scalable, reasoning‑augmented embodied agents that can safely and efficiently operate in complex, open‑ended environments.

Comments & Academic Discussion

Loading comments...

Leave a Comment