One protein is all you need

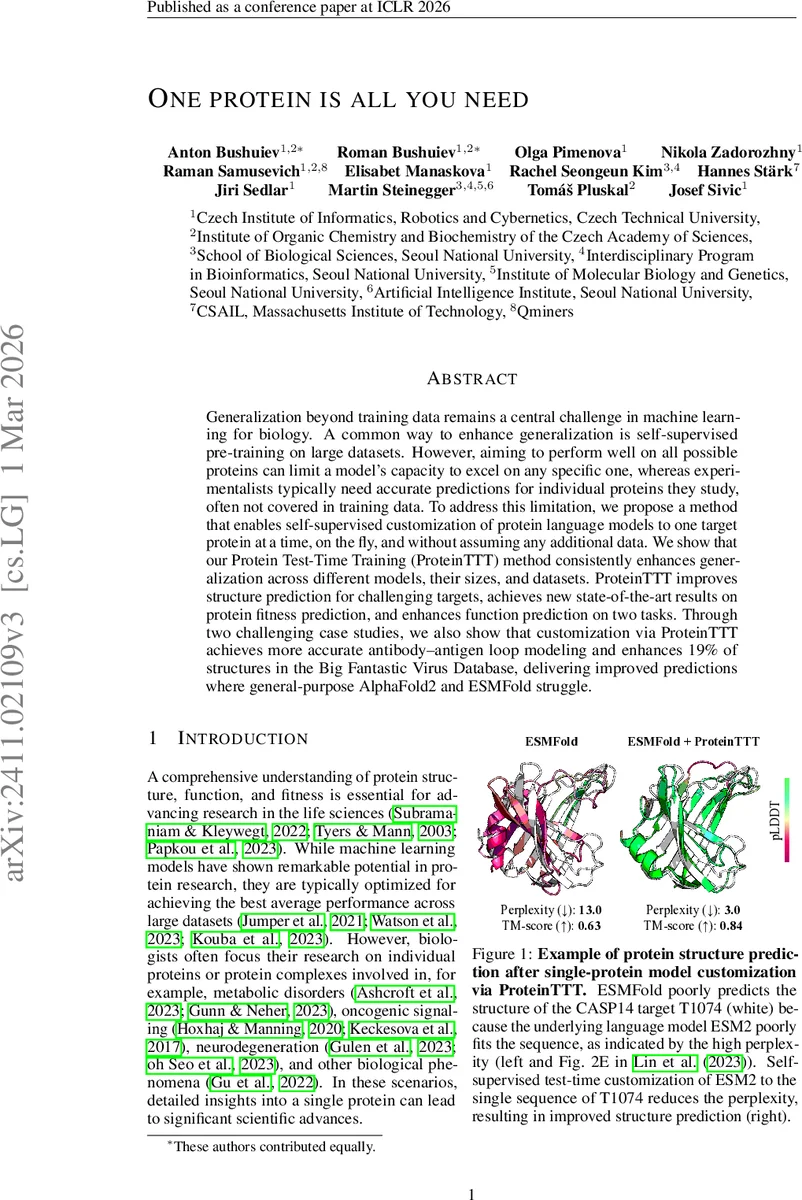

Generalization beyond training data remains a central challenge in machine learning for biology. A common way to enhance generalization is self-supervised pre-training on large datasets. However, aiming to perform well on all possible proteins can limit a model’s capacity to excel on any specific one, whereas experimentalists typically need accurate predictions for individual proteins they study, often not covered in training data. To address this limitation, we propose a method that enables self-supervised customization of protein language models to one target protein at a time, on the fly, and without assuming any additional data. We show that our Protein Test-Time Training (ProteinTTT) method consistently enhances generalization across different models, their sizes, and datasets. ProteinTTT improves structure prediction for challenging targets, achieves new state-of-the-art results on protein fitness prediction, and enhances function prediction on two tasks. Through two challenging case studies, we also show that customization via ProteinTTT achieves more accurate antibody-antigen loop modeling and enhances 19% of structures in the Big Fantastic Virus Database, delivering improved predictions where general-purpose AlphaFold2 and ESMFold struggle.

💡 Research Summary

Generalization across the vast protein space remains a major obstacle for machine‑learning models in biology. While large protein language models (PLMs) such as ESM‑2 achieve impressive average performance after massive self‑supervised pre‑training, they often falter on individual proteins that are under‑represented in the training set or that contain rare sequence patterns. The authors address this gap by introducing Protein Test‑Time Training (ProteinTTT), a test‑time adaptation technique that customizes a pre‑trained PLM to a single target protein (or its multiple‑sequence alignment) without requiring any additional labeled data.

ProteinTTT works by freezing the downstream task head (structure, fitness, or function predictor) and fine‑tuning only the PLM backbone using the masked language modeling (MLM) objective on the target sequence. Starting from the original parameters θ₀, the model undergoes a fixed number of MLM updates (typically T ≈ 30). At each step a set of parameters Θ = {θ₀,…,θ_T} is stored; the final customized parameters θₓ are chosen by maximizing a confidence function c (e.g., predicted pLDDT for structure or reduced perplexity). If no explicit confidence metric is available, the last step θ_T is used. To keep the method scalable, the authors employ low‑rank adaptation (LoRA), gradient accumulation, and stochastic gradient descent (SGD) rather than Adam. All masking ratios, token‑replacement policies, and sequence‑cropping strategies are identical to those used during the original pre‑training, ensuring distributional consistency.

The approach is evaluated on three canonical downstream tasks. In structure prediction, ProteinTTT is applied to ESMFold, HelixFold‑Single, DPLM2, and ESM3. Across the CASP14 and CAMEO test sets, perplexity reductions translate into TM‑scores that improve by 0.15–0.30 on average; a particularly difficult target (CASP14 T1074) sees TM‑score rise from 0.29 to 0.86 after adaptation. In protein fitness prediction (deep mutational scanning), the method boosts Spearman correlations for ESM‑2, SaProt, ProSST, MSA‑Transformer, and ProGen2, establishing new state‑of‑the‑art results. For functional annotation, two tasks—terpene‑synthase substrate classification and subcellular localization—show accuracy gains of 3–5 percentage points when using the adapted backbone.

Two case studies illustrate practical impact. First, antibody‑antigen CDR‑loop modeling benefits from ProteinTTT: the adapted model reproduces loop geometry more faithfully, potentially reducing experimental screening costs in therapeutic antibody design. Second, the authors process the “Big Fantastic Virus Database” (≈1,200 viral capsid proteins). While AlphaFold2 and ESMFold assign low confidence to 19 % of these structures, ProteinTTT raises average pLDDT by ~12 points and improves TM‑scores, delivering reliable models for previously intractable viral proteins.

Limitations include the extra GPU memory and compute required for on‑the‑fly fine‑tuning, especially for billion‑parameter backbones, and the sensitivity of the confidence function to the downstream task. MSA‑based customization is explored only briefly, and extending the method to multi‑protein complexes or simultaneous adaptation of several targets remains an open challenge.

In summary, ProteinTTT demonstrates that a simple test‑time MLM fine‑tuning step can substantially reduce model perplexity on a single protein and, without altering downstream heads, consistently enhance prediction quality across structure, fitness, and function tasks. The work opens a new “one‑protein‑at‑a‑time” paradigm for biological AI, suggesting future directions such as automated confidence selection, batch adaptation of protein families, and tighter integration with experimental pipelines.

Comments & Academic Discussion

Loading comments...

Leave a Comment