Understanding Usage and Engagement in AI-Powered Scientific Research Tools: The Asta Interaction Dataset

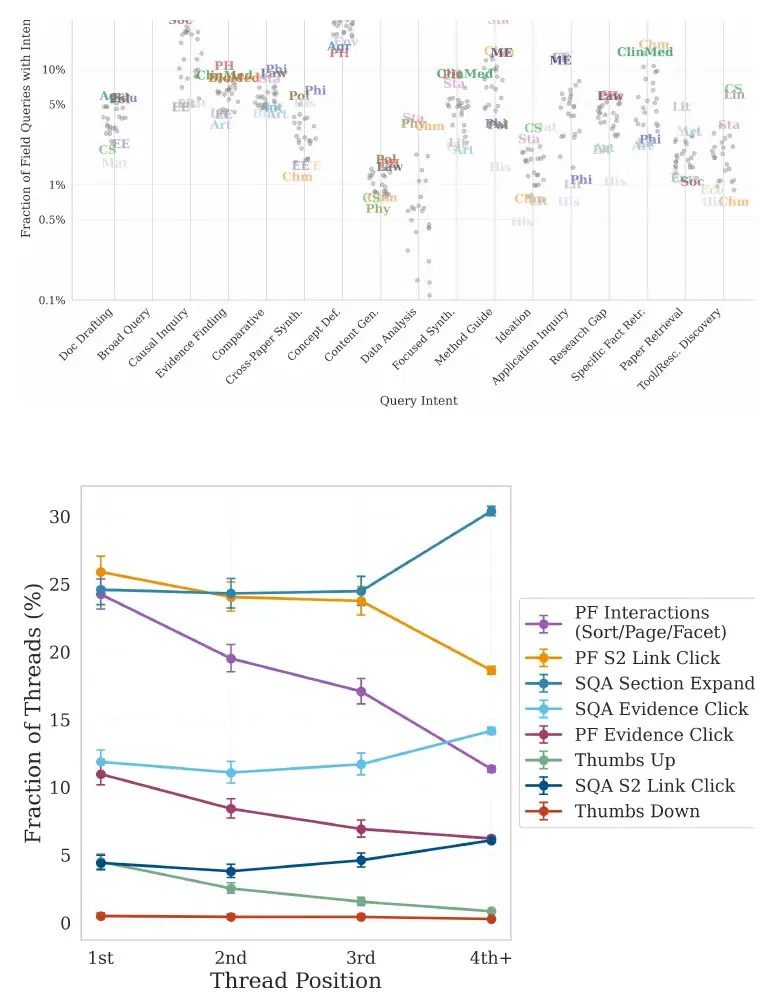

AI-powered scientific research tools are rapidly being integrated into research workflows, yet the field lacks clear insight into how researchers use these systems in real-world settings. We present and analyze the Asta Interaction Dataset, a large-sc…

Authors: Dany Haddad, Dan Bareket, Joseph Chee Chang