SPARR: Simulation-based Policies with Asymmetric Real-world Residuals for Assembly

Robotic assembly presents a long-standing challenge due to its requirement for precise, contact-rich manipulation. While simulation-based learning has enabled the development of robust assembly policies, their performance often degrades when deployed…

Authors: Yijie Guo, Iretiayo Akinola, Lars Johannsmeier

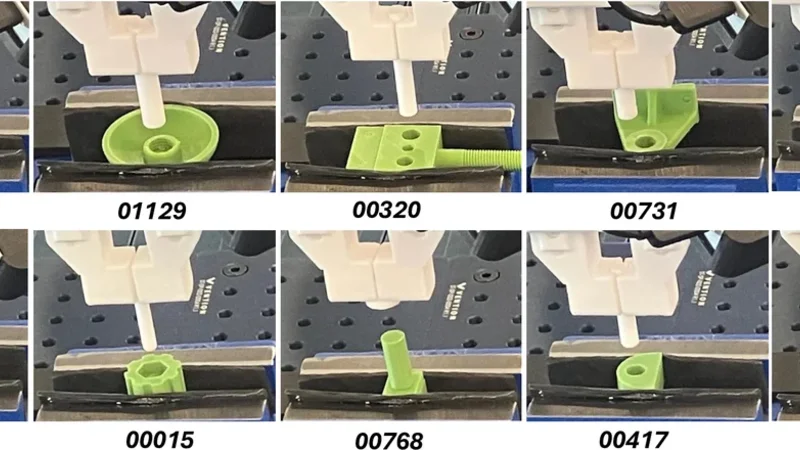

SP ARR: Simulation-based P olicies with Asymmetric Real-world Residuals f or Assembly Y ijie Guo 1 , Iretiayo Akinola 1 , Lars Johannsmeier 1 , Hugo Hadfield 1 , Abhishek Gupta 2 , and Y ashraj Narang 1 (a). Pre - tr ain b ase polic y 𝜋 𝑏 in the simu l a tion (b). Collect demos with 𝜋 𝑏 in the re al world (c). T rain r e sidual p o licy 𝜋 𝑟 in the rea l w o rld Demo s Demo s Demo B uf fe r P olicy Tr a ns i t io n s Hi gh - quali ty trajs RL Buffer 𝜋 𝑏 + 𝜋 𝑟 Ex ecu t e combine d pol i cy Collect transi ti ons Updat e resi d u al policy 𝜋 𝑟 P olicy ro ll- ou ts Success trajs Fig. 1: Illustration of our approach, SP ARR. (a) A specialist policy is pre-trained in simulation. (b) The simulation policy is deployed zero-shot in the real world, achieving a moderate success rate (e.g., up to 80%). Successful trajectories are collected as demonstrations. (c) A residual policy is trained in the real world on top of the simulation policy , lev eraging both the demonstration buf fer and the online RL buf fer . During training, high-quality trajectories that achieve success quickly are added in demonstrations for further exploitation. Abstract — Robotic assembly presents a long-standing chal- lenge due to its requir ement for pr ecise, contact-rich ma- nipulation. While simulation-based learning has enabled the development of rob ust assembly policies, their performance often degrades when deployed in real-w orld settings due to the sim-to-real gap. Conv ersely , r eal-world reinfor cement learning (RL) methods avoid the sim-to-real gap, but rely heavily on human supervision and lack generalization ability to en vironmental changes. In this work, we propose a hybrid approach that combines a simulation-trained base policy with a real-world residual policy to efficiently adapt to real-w orld variations. The base policy , trained in simulation using lo w- level state observations and dense r ewards, pr ovides strong priors for initial behavior . The residual policy , learned in the real world using visual observations and sparse rewards, compensates for discrepancies in dynamics and sensor noise. Extensive r eal-world experiments demonstrate that our method, SP ARR, achie ves near -perfect success rates across di verse two- part assembly tasks. Compared to the state-of-the-art zero- shot sim-to-r eal methods, SP ARR improves success rates by 38.4% while reducing cycle time by 29.7%. Moreov er , SP ARR requir es no human expertise, in contrast to the state-of-the- art real-w orld RL appr oaches that depend hea vily on human supervision. Please visit the project webpage at https:// research.nvidia.com/labs/srl/projects/sparr/ I . I N T R O D U C T I O N Robotic assembly remains a long-standing challenge in robot learning, demanding high-precision, contact-rich ma- nipulation. Simulation and sim-to-real transfer have emerged as po werful strategies for addressing these difficulties. Re- cent adv ances in simulation-based learning hav e led to the dev elopment of assembly policies that demonstrate strong performance in both simulated and real world en vironments [1], [2], [3], [4], [5]. Notably , these methods hav e achieved success rates of up to 80% on challenging benchmarks of assembly [6], [7]. Despite this progress, current per- formance is insufficient for industrial deployment, where 1 Nvidia, 2 Univ ersity of W ashington success rates of 95% or higher are typically required. Fur - thermore, simulation-trained policies often exhibit brittleness when deployed directly in the real world due to the sim- to-real gap. For state-based policies, zero-shot performance can degrade significantly due to mismatches in physical parameters (e.g., mass, friction), camera calibration errors, state estimation noise, and v ariations in grasp pose. V ision- based policies, on the other hand, are especially sensitive to visual domain shifts, such as changes in lighting, object appearance, or background, which can sev erely impair their real-world generalization. Meanwhile, recent progress in real-world RL has demonstrated promising results in contact- rich assembly tasks using ra w visual observations [8], [9], [10]. These methods directly optimize policies in real-world en vironments, enabling them to capture fine-grained physical interactions. Howe ver , the y typically rely heavily on human expertise for demonstration collection, as well as acti ve su- pervision and interv ention during training to guide learning. T o address these limitations, we propose SP ARR ( Fig. 1 ), a hybrid framework to pre-train a base policy with low-dimensional state observations in simulation and then learn a residual policy with visual observ ations in the real world. The base policy provides successful demonstrations, a structured prior and safe early exploration, while the residual policy corrects for discrepancies in physical properties, state estimation errors, and visual or en vironmental dif ferences. This asymmetric design enables efficient adaptation to real- world en vironments without reliance on human supervision. In the experiments, SP ARR achiev es 95%-100% success rates for tw o-part assembly tasks (Fig. 2) in the real- world. Compared to the state-of-the-art (SO T A) zero-shot deployment [6], SP ARR shows a relati ve impro vement of 38.4% in success rate and cost 29.7% less cycle time, av eraged over 10 tasks. Unlike the SOT A approach [9], which demands substantial human efforts, SP ARR requires no human in volv ement in real-world learning. In summary , our key contrib utions are: • Asymmetric Residual Learning F ramework : W e intro- duce SP ARR , a no vel frame work for sim-to-real trans- fer , combining a simulation-trained state-based base policy with an asymmetric, vision-conditioned residual policy in the real world. This asymmetric design lev er- ages efficient simulation training while enabling robust adaptation to real-world variations. • Autonomous Adaptation W ithout Human Supervision : Unlike existing real-world RL methods that require expert demonstrations or frequent human interventions, SP ARR achie ves near -perfect success rates (95–100%) on real-world assembly tasks with zero human supervi- sion, making it practical for scalable deployment. • Robustness to State Noise and Physical V ariations : W e show that the vision-based residual policy signifi- cantly improv es robustness to pose estimation errors and socket displacements, outperforming the simulation- trained policy and state-based residual polic y . I I . R E L AT E D W O R K a) Robotic Assembly: Robotic assembly is a core chal- lenge in manipulation, requiring accurate perception, precise control under contact, and robustness under uncertainty . Classical approaches based on quasi-static modeling, com- pliance strategies, and force control have been successful in structured en vironments [11], [12], but they typically lack adaptability and scalability to ne w settings. Learning-based methods aim to reduce engineering ef fort and enable gen- eralization. Recent work uses deep RL, imitation learning, and skill composition to address the contact-rich nature of assembly [5], [4], [6], [7]. Simulation-based learning has made notable progress, but performance often degrades in real-world deployment. Additionally , recent research on real- world RL directly optimizes assembly in the physical world. [13] leverages tactile sensing b ut requires human expertise to design curriculum specific for insertion tasks. [8] introduces vision-based RL for contact-rich tasks using sparse rewards. [9] and [10] extend this with human-in-the-loop feedback to achiev e sub-millimeter accuracy . While these methods push the frontier of real-world robot learning, they often rely on frequent human supervision, interv ention, and/or substantial ex ecution time on real-world robots, underscoring the need for more scalable and autonomous solutions. Our work addresses this issue with a hybrid approach that combines the efficienc y and structure of simulation policies with residual adaptation in the real world. b) Sim-to-Real T ransfer: Sim-to-real transfer aims to bridge the gap between policies trained in simulation and their deployment in the real world. A common strategy is do- main randomization, which exposes the polic y to randomized physical and visual parameters during training to improve ro- bustness [14], [15], [16]. Especially for image-based policies, this often requires high-fidelity rendering and large-scale training to identify the effecti ve visual augmentations and randomizations [17]. Y et, such policies can become ov erly conservati ve and still fail when the real environment deviates in unmodeled ways. Alternatively , [18], [19], [20] explores domain adaptation, where policies or features are adapted post-training to align with real-world observ ations. [21] fine-tunes simulation policies for tight-insertion tasks with real-world human demonstrations. Distillation as a widely used adaptation method is time- and compute-intensive [22], [23]. It typically requires significant iterations during real- world dev elopment, in volving policies rollouts, visual data collection, behavior cloning or DAgger , and fine-tuning. c) Residual P olicy Learning for Assembly T asks: Resid- ual policy learning has emerged as an effecti ve strategy to enhancce base policies with correctiv e behaviors, particularly in contact-rich manipulation. Early work [24], [25], [26] augmentes conv entional feedback controllers with RL-based residuals to handle unmodeled contact dynamics, demon- strating success in large-part assemblies. [27] learns com- pliance parameter adjustments via real-time residuals atop a simulation policy . This w ork supports assembly , pi voting, and scre wing tasks though limited to large-part assemblies (with edge lengths e xceeding 4 cm). Recently , [28] combines behavior cloning with a residual RL polic y , achieving sim-to- real transfer for high-precision assembly , but it requires train- ing both the base and residual policies in simulation as well as collecting human demonstrations. [29] e xplores learning a vision-based base policy and a force-based residual policy , but primarily ev aluates assembly tasks in simulation. [30] proposes compliant residual DAgger with force feedback and compliance control, but relies on human corrections. Across these w orks, the residual learning paradigm enables compact powerful adaptations but often depend on human expertise for controller design or demonstration collection. In contrast, our approach lev erages simulation as a platform to alleviate the need for human demonstrations and is applied to fine- grained assembly tasks (a verage diameter of plugs < 1 cm ). I I I . M E T H O D A. Problem Description This work studies policy adaptation from simulation to the real world for tw o-part assembly tasks (Fig. 2). Each en vironment consists of a Franka robot [31], a plug (the part to be inserted), and a socket (the corresponding receptacle). The objectiv e is to insert the plug into its designated socket. W e formulate the task as a reinforcement learning (RL) problem under the standard Markov decision process (MDP) framew ork, M = { S , A , ρ , P , r , γ } . At each time step t , s t ∈ S is the state observation (e.g., the robot’ s proprioceptiv e signals and part poses or visual observations), a t ∈ A is the action (e.g., delta end-ef fector pose), and r t is the re ward. The initial state s 0 is drawn from the distribution ρ ( s 0 ) and transitions follow unknown, possibly stochastic dynamics P . The agent seeks a policy π ( a t | s t ) that maximizes the accumulative discounted rew ards, and γ is the discount factor . Our approach is motiv ated by the practical deployment of robotic assembly policies in industrial and manufacturing settings. A simulation-trained policy typically transfers to the real world with a moderate success rate (e.g., up to 80% 011 36 010 41 007 31 003 20 000 15 007 68 000 28 011 29 004 17 010 36 Round peg Rectan gular peg Gear Fig. 2: Overview of experimental tasks . (T op) 10 AutoMate [6] assembly tasks. AutoMate provides a dataset of 100 assembly tasks with di verse parts. W e choose 10 out of 100 tasks with near-perfect specialist policy pre-trained in simulation. (Bottom) 3 tasks on NIST board. NIST (National Institute of Standards and T echnology) provides Assembly T ask Boards as performance benchmarks to e valuate robotic assembly technologies. W e consider the peg and gear insertion tasks on task board #1. in [5], [6], [7]). By training a residual polic y on a gi ven set of plug-socket pairs in the real world, we aim to boost performance to near-perfect levels. Once trained, the residual policy is combined with the simulation-based base policy and deployed to assemble ne w plug-socket pairs directly on the assembly line. An ov ervie w is sho wn in Fig. 1. In the following section, we describe base policy pre-training in simulation (Sec. III-B), demonstration collection (Sec. III-D), and residual policy learning in the real world (Sec. III-E). B. Pre-tr aining in Simulation As depicted in Fig. 1(a) and Fig. 3, we train a base policy π b using low-dimensional state-based observations. State-based training in simulation is computationally ef ficient and robust to v ariations in object poses and visual patterns when transferred to the real world. In contrast, image-based policies require extensiv e domain randomization and large- scale training [17] to achie ve robustness against changes in dynamics, object poses, and visual domain gaps. Follo wing AutoMate [6], the leading baseline for zero- shot sim-to-real transfer in assembly , our state observations s b t includes robot joint angles, current end-effector pose, goal end-effector pose, and their difference. The action a t is defined as an incremental pose target, which is tracked by a Cartesian impedance controller . W e train the policy π b with Proximal Policy Optimization (PPO) [32] using imitation rew ards deriv ed from disassembly trajectories. π b outputs Gaussian-distributed actions a t ∼ N ( µ t , σ t ) for continuous control. T o enhance rob ustness, we randomize robot joint configurations, socket poses, and plug poses at the start of each episode. Thanks to reliable state information, dense rew ard signals, and parallelized simulation environments, the specialist policy can be trained ef fectively and ef ficiently to achiev e a high success rate in simulation with randomized initial states, even e xceeding 99% in some tasks [6]. C. P olicy F ormulation in the Real W orld a) Deployment of Base P olicy: When deployed in the real world, π b takes the end-effector goal pose as part of s b t . W e obtain the socket pose via a pose-estimation pipeline combining Grounding DINO [33], SAM2 [34], and FoundationPose [35]. Then, we set the end-effector goal pose as the estimated socket pose with a z-axis offset, assuming the plug is consistently held in the gripper . F or comparison, we also obtain ground-truth goal poses by man- ually guiding the Franka arm to complete the insertion. On AutoMate assemblies, the dif ference between our estimated and ground-truth poses is within ± 1 mm along the x and y axes. Based on this observ ation, we model state-estimation noise by uniformly sampling up to 1 mm in the x and y axes and adding it to the ground-truth goal pose at the start of each episode. W e deliberately avoid directly using estimated poses as inputs, which could cause overfitting to the error distrib ution from our specific pose estimator . For initialization in each episode, the end-ef fector holding the plug is positioned 2 cm above the noisy goal pose. In the real world, π b achiev es only a moderate zero-shot success rate due to dynamics mismatch and state-input inaccuracies. W e therefore introduce a residual policy π r to adapt π b to real-world en vironments that may differ from pre-training. Ba se pol icy 𝜋 𝑏 Re al -wo rld observation 𝑠 𝑡 𝑟 Re sid ual pol icy 𝜋 𝑟 Ba se actio n 𝑎 𝑡 𝑏 Re sid ual actio n 𝑎 𝑡 𝑟 Si mul ati on ob serva tio n 𝑠 𝑡 𝑏 Pro prioceptive fea tu r e s Wrist-ca me r a RGB images Pro prioceptive fea tu r e s Goal pose of end- ef fe ctor Fig. 3: Illustration of asymmetric policy combination . Com- bining state-based base policies from simulation and image-based residual policies learned in the real-world. b) Design of Residual P olicy: As shown in Fig. 3, π r takes as input s r t , which includes both lo w-dimensional state (end-effector pose, linear and angular velocities, and force and torques [9]) and visual observ ations (two RGB images). These inputs exclude any object pose and are not affected by state-estimation errors. In addition, visual inputs provide complementary information not captured in the simulated CAD model, such as object asymmetries, textures, manu- facturing tolerances and defects, and fine-grained contact features. A CAD-based pose estimator cannot capture geo- metric constraints such as a USB socket’ s one-way insertion, whereas visual observations can. The residual polic y π r outputs actions a r t as incremental pose tar gets, added to a b t from π b . Unlike in simulation, dense reward signals (e.g., disassembly path imitation [6] or signed distance field metrics [5]) are not av ailable in the real world. Also, typical dense-reward terms, such as the ne gati ve distance to the goal, can be misleading, since surrounding geometry (e.g., socket walls) may obstruct the plug. W e therefore use a sparse success re ward: the task succeeds if the current end-effector pose is within 3 mm in translation and 5 ◦ in rotation of the goal, yielding r t = 1. D. Demonstration Collection in the Real W orld Instead of training the residual policy π r from scratch in the real world, we propose using rollouts of the simulation policy π b to collect demonstrations for the residual. Since ground-truth residual actions are not av ailable, we generate pseudo-labels by sampling residual actions as Gaussian noise based on the action distrib ution from π b . At each timestep t , we gather propriocepti ve features with the noisy goal pose as s b t and capture camera images for observation s r t . The base policy π b is queried with s b t to produce a Gaussian distribution of actions N ( µ t , σ t ) . W e use the mean µ t as the deterministic, base action, i.e. a b t = µ t . The residual action a r t is then sampled from N ( 0 , σ t ) . The combined action is defined as a t = a b t + a r t , which ensures that a t follows the same distribution N ( µ t , σ t ) as the base policy output. W e record the resulting trajectory as a sequence of transi- tions τ = { ( s r 0 , a b 0 , a r 0 , r 0 ) , · · · , ( s r t , a b t , a r t , r t ) , · · · } . As in Fig. 1(b), zero-shot deployment of π b with injected residual actions yields a subset of successful trajectories, which we store as demonstrations to bootstrap training of π r . E. Residual P olicy Learning in the Real W orld W e propose learning a polic y π r to predict residual actions a r t ∼ π r ( ·| s r t , a b t ) , conditioned on both real-world observations and the base action (Fig. 3). As explained in Sec. III-C, real-world observations s r t provide information that is robust to state noise. Conditioning on the base action a b t further supplies context for determining the appropriate correction. W e empirically ev aluate this design choice in Sec. IV -E. Learning π r in the real world is challenging due to sparse rew ards and limited interaction data. T o address these chal- lenges, we adopt the RLPD algorithm [36], which is sample efficient and capable of lev eraging prior demonstration data. As sho wn in Fig. 1(c), at each policy update, transitions are sampled equally from a demonstration buf fer and an RL b uffer . The demonstration b uffer is initialized with the offline success trajectories collected in Sec. III-D. W e then continue to update the demonstration b uffer with high-quality trajectories encountered during residual polic y learning [37]. Specifically , trajectories that achiev e task success in fe wer time steps than the median of prior demonstrations are added to the b uffer , allo wing the policy to take advantage of strong experiences g athered during training and decrease cycle time. W e further analyze this design choice in Sec. IV -E. I V . E X P E R I M E N T S In this section, we design e xperiments to answer the fol- lowing questions: (1) Can SP ARR ef fecti vely and efficiently adapt simulation policies to real-world assembly tasks with near-perfect success rates, without human demonstrations or interventions? (2) Can SP ARR achiev e robustness to pose variations and pose-estimation errors? (3) Can SP ARR adapt simulation policies to unseen tasks in the real w orld? A. Setup In our experiments we use the follo wing components: • A Franka Emika robot [31], • an NVIDIA Jetson Orin GPU to run robot controllers, • two Intel RealSense D405 cameras rigidly connected to the flange of the robot, • a PC with an NVIDIA R TX 4090 GPU to run policies, • a SpaceMouse input de vice only used for [9]. B. Effective Real-world Adaptation W e in vestigate 10 real-world robotic assembly tasks from the AutoMate dataset [6]. W e pre-train base policies in Isaac Lab [38] using 128 parallel en vironments, completing 25 million en vironment steps within one day of training. W e select 10 out of 100 tasks (Fig. 2) that achieve ov er 99% success in simulation. W e choose them for their div erse geometries and strong simulation performance, which are expected to perform well in the real world. W e compare following approaches that deploy simulation policies in the real world without human demonstrations: • SERL: a real-w orld RL approach f or precise, contact-rich tasks [8]. Rather than using human demonstrations, we roll out the simulation polic y , collect 20 successful trajectories and then run SERL for 0.5 hours to learn real-w orld assembly policies. 01 136 01 129 00320 00731 01041 01036 00015 00768 00417 00028 0.0 0.2 0.4 0.6 0.8 1.0 Success Rate SERL AutoMate SP ARR (Ours) HIL-SERL (Oracle) 01 136 01 129 00320 00731 01041 01036 00015 00768 00417 00028 0.0 2.5 5.0 7.5 10.0 12.5 15.0 17.5 20.0 Average Episode T ime (in seconds) Fig. 4: Perf ormance on 10 AutoMate tasks . W e evaluate the success rate ( ↑ higher is better) and cycle time ( ↓ lo wer is better) av eraged ov er 20 episodes. SERL, AutoMate, and SP ARR (Ours) transfer simulation-trained policies to the real world without human ef fort, where SP ARR achie ves substantially higher success rates and shorter cycle times. HIL-SERL (Oracle) serves as an upper bound, assuming access to near -optimal human demonstrations and continuous human supervision. • A utoMate: the SO T A approach of zero-shot sim-to- real transfer f or two-part assembly [6]. W e deploy the simulation policy directly in a zero-shot manner . • SP ARR (Ours) . W e collect 20 successful trajectories with the base policy from simulation b ut then trains a residual policy on top of the base polic y for 0.5 hours. Additionally , to establish an upper bound on performance, we run HIL-SERL, the SOT A real-world RL approach [9] where human experts provide near-optimal demonstrations, frequent interventions, and consistent supervision during training. W e manually collect 20 demonstrations with a space mouse and train aseembly policies for 0.5 hours with frequent human interventions to ensure nearly all episodes succeed during real-world training. W e fix the training budget to 0.5 hours across all meth- ods, as real-robot training is expensi ve and shorter training emphasizes data efficienc y . This choice also aligns with recent real-world adaptation and fine-tuning w orks, which demonstrate effecti ve learning within 30 minutes or less [39], [26]. All actions are executed at 15 Hz. W e ev aluate policies ov er 20 episodes with noisy goal poses (Sec. III-C). In Fig. 4, SERL exhibits poor performance due to hard e x- ploration in the sparse-reward setting. W ith only 20 demon- strations and 0.5 hours of real-world training, it struggles to discov er reasonable behaviors and collect positive rew ards, as no human intervention is provided during online training. While a fe w successful trials are observed during real-world learning, SERL cannot ef ficiently learn to reproduce these successes. A utoMate shows a moderate success rate despite strong simulation performance, indicating the sim-to-real gap. In comparison to AutoMate, SP ARR achie ves a relative improv ement of 38.4% in success rate and 29.7% in cycle time, highlighting the effecti veness of the residual policy in correcting actions of the simulation policy . Notably , SP ARR attains a 95–100% success rate, comparable to HIL-SERL, without an y human supervision or interventions. HIL-SERL , as an oracle approach, shows reduced cycle times compared to other methods that lack human demonstrations or inter- ventions. W e attrib ute this to two main factors: First, sim-to- real transfer approaches use action smoothing and a policy- lev el action integrator (PLAI) [5] for reliable deployment in the real world, which can negati vely affect policy execution speed. Second, the quality of demonstration data differs: faster human demonstrations lead to faster learned policies. Since we constrain real-world training to 0.5 hours per task, RL cannot suf ficiently overcome the prior from demonstra- tions. W e leave it to future work to reduce cycle time without increasing training time or human efforts. (0. 4 8 , 0) 0. 8 (0. 4 8 , 0.02 ) 0. 65 (0. 4 6 , 0) 0. 75 (0. 4 8 , - 0.02) 0. 5 (0. 5 , 0) 0. 8 (0. 4 8 , 0) 0. 75 (0. 4 8 , 0.02 ) 0. 8 (0. 4 6 , 0) 0. 8 (0. 4 8 , - 0.02) 0. 75 (0. 5 , 0) 0. 8 (0. 4 8 , 0) 1. 0 (0. 4 8 , 0.02 ) 0. 95 (0. 4 6 , 0) 0. 8 (0. 4 8 , - 0.02) 0. 95 (0. 5 , 0) 1. 0 0. 0 1. 0 (a) (b) (c) Fig. 5: P olicy deployment on different socket poses for AutoMate task 00731. Each box indicates the (x, y) coordinates of the socket pose and the corresponding success rate (0–1) during e valuation. The training socket pose is at (0.48, 0), and for ev aluation, the sock et is displaced by 2 cm : up (0.50, 0), down (0.46, 0), left (0.48, –0.02), and right (0.48, 0.02). (a) Base polic y from simulation. (b) Base policy with a state-based residual policy . (c) SP ARR (Ours): base policy with image-based residual policy . The color bar represents success rate from 0 (yello w) to 1 (green). SP ARR achiev es higher success rates (darker green) and demonstrates robustness to sock et pose v ariations. C. Robustness to State Noise In high-mix, lo w-volume manuf acturing, rob ustness to part pose v ariations is a compelling capability . In particular , the socket pose on an assembly line may not exactly match the pose set during residual policy learning. T o assess performance under such conditions, we physically displace the socket by 2 cm from its training position. W e also add a uniform noise of 1 mm to the ground-truth goal pose at each episode, to emulate pose-estimation error, as explained in Sec. III-C. Here tw o sources of noise are considered: (1) part pose variation , due to absence of precise fixtures in flexible automation, and (2) pose estimation err or since the exact goal pose is unavailable at deployment. W e compare SP ARR (Ours) with a variant using a state- based residual policy . Our image-based residual policy takes as input two RGB images from wrist cameras, along with the end-ef fector pose, v elocity , force, and torques. The state- based alternati ve uses the same propriocepti ve inputs plus the noisy goal pose instead of images. In Fig. 5, the state-based r esidual policy is sensiti ve to errors in estimated goal pose and to out-of-distribution socket positions, showing degraded performance. In contrast, our image-based r esidual policy is less affected by socket pose changes, as long as the visual observation remains similar to the training distribution. Its performance only drops slightly under extreme pose shifts, primarily due to the resulting changes in the base action distribution. At novel socket poses, the base policy may produce out-of-distribution base actions that the residual policy cannot fully correct. Overall, SP ARR with the image- based residual polic y achie ves a 38.6% improv ement over the base policy and outperforms the state-based residual policy by 20.8%, a veraged across varying physical sock et positions. D. Generalization to Unseen T asks W e ev aluate the generalizability of SP ARR on NIST assemblies (Fig. 2) that were not seen during pre-training on AutoMate tasks. W e aim to achie ve strong real-world performance by adapting the base policy from relev ant prior tasks. In Fig. 6, we select the simulation policy based on the object size and the behavior pattern. For 4 mm r ound pe g insertion , we le verage AutoMate task 01041, which has the smallest plug (6 mm diameter) among the 10 AutoMate tasks. For 12 mm r ectangular pe g insertion , we choose AutoMate task 00731, which has a 10 mm diameter round peg. For Adapt Adapt Adapt (a) T ra nsfer fro m AutoMa te 0 1041 to 4mm ro und peg i nsertion (b) T ransfe r fro m AutoMa te 0 0731 to 12 mm rect a ngu lar peg in sertion (c) T rans fer fro m AutoMa te 0 0768 t o sma ll ge ar insertion Fig. 6: Adaptation of simulation policies from A utoMate tasks to NIST tasks. W e show images from wrist-mounted cameras here. Fig. 2 (bottom) shows the NIST tasks from the front camera vie w . small gear insertion , we select AutoMate task 00768, whose behavior in volv es placing a cap onto a cylinder , distinct from other typical peg-in-hole tasks. These choices result in reasonable zero-shot performance on the NIST tasks (success rates of 0.4–0.7). More systematic methods [40] can be applied to reliably identify prior tasks transferring to ne w tasks according to task similarity and relev ance. When deploying the base policy , dif ferences in dynamics between simulation and reality degrade the performance. Howe ver , the policy is largely unaffected by differences in visual observations because it only conditions on low- dimensional state information. As a result, the base policy still generates some successful demonstrations and serves as a functional prior for real-world training. W e then train a residual polic y using SP ARR to impro ve real-world success. Fig. 7 shows the generalizability of SP ARR under unseen dynamics and visual observations. For r ound peg insertion , SP ARR achie ves only an 80% success rate because the tiny hole on the reflectiv e white board is extremely dif ficult to detect (Fig. 2&6). Although the goal is unclear in the image observ ation, the residual policy still helps to improve performance using input from the proprioceptiv e state. For r ectangular peg insertion , the base polic y was trained to Round Rectangle Gear 0.0 0.2 0.4 0.6 0.8 1.0 Success Rate AutoMate SP ARR (Ours) Round Rectangle Gear 0 2 4 6 8 10 12 14 16 Average Episode T ime (in seconds ) Fig. 7: Perf ormance on NIST assembly tasks. SP ARR outper - forms the baseline in success rate ( ↑ higher is better) and cycle time ( ↓ lower is better). 0 10 20 30 40 Environment timestep −1.0 −0.5 0.0 0.5 1.0 Action Base action in yaw axis Combined action in yaw axis Residual action in yaw axis Fig. 8: Visualization of base, residual and combined actions. On a trajectory completing rectangular peg insertion task, the residual action in ya w axis disagrees with the base action and changes the plug rotation when the combined action is ex ecuted in the en vironment. All these actions are clamped to the range [-1,1] as incremental target pose. insert a round pe g and cannot handle the slight rotational mismatch with the rectangular hole. T o emphasize this chal- lenge, we add a uniform yaw noise of up to 3 de grees to the end-effector pose at the start of each episode. Our residual policy learns the necessary rotation to align the peg with the target hole (Fig. 8), achieving a perfect success in all 20 e valuation episodes. For small gear insertion , the gear clearance is much tighter (0 . 005 − 0 . 014 mm ) than the plug in AutoMate task 00768 (0 . 5 − 1 mm ). The residual polic y learns to correct both the insertion angle and the applied actions, resulting in successful insertions. Overall, SP ARR achiev es a relative impro vement of 74.5% in success rate and 36.5% in cycle time across these NIST tasks. E. Ablation Study a) Effect of demo buf fer update: As described in Sec. III-E, we update the demonstration b uffer during RLPD policy learning. Specifically , we add high-quality trajectories that reach success faster than at least half of the offline demonstrations. This allo ws the policy to retain and exploit valuable experiences encountered during training, enabling it to complete the task faster . T able I compares methods with and without this demo b uf fer update, showing that SP ARR outperforms the variant that does not update the buf fer . T ABLE I: Comparison of method variants. SP ARR shows higher success rate than the v ariant without demo buf fer update. Success rate ( ↑ ) Cycle time (s) ( ↓ ) T ask 01041 01036 01041 01036 AutoMate 0.8 0.8 10.76 4.82 SP ARR w/o demo update 0.95 0.8 8.1 4.31 SP ARR (Ours) 1.0 1.0 6.81 5.45 b) Effect of base action as input to r esidual policy: Including the base action as an input provides important context for the residual correction. In T able II, without the base action as input, the residual polic y still slightly improv es zero-shot deployment performance of AutoMate. compared to this v ariant without base action as input, SP ARR further enhances both the success rate and the cycle time. T ABLE II: Comparison of method variants. SP ARR outper- forms the v ariant without base action as input to residual polic y . Success rate ( ↑ ) Cycle time (s) ( ↓ ) T ask 00015 00768 00015 00768 AutoMate 0.75 0.65 7.66 10.19 SP ARR w/o base action input 0.8 0.8 6.12 8.76 SP ARR (Ours) 1.0 0.95 3.51 6.11 V . C O N C L U S I O N A N D F U T U R E W O R K In this work, we propose a residual polic y learning ap- proach SP ARR that lev erages a simulation-trained state- based policy as a base and augments it with an asym- metric, vision-conditioned residual in the real world. The base policy provides structured priors and guides e xploration, while the residual corrects for real-world discrepancies. On real-world two-part assembly tasks, SP ARR achieves near - perfect success without requiring human demonstrations or interventions, while also demonstrating robustness to state noise and generalizability to unseen tasks. While SP ARR is highly effecti ve, it relies on several assumptions. First, it requires the plug to be rigidly pre- grasped in the gripper . In vestigating the full grasp-to- insertion pipeline and improving robustness to grasp per- turbations remain topics for future work. Second, SP ARR depends on reasonably good zero-shot sim-to-real transfer of the base policy . If the base policy achie ves near-zero success in the real world, the residual component alone is insufficient to reco ver performance. Finally , SP ARR assumes access to automated re ward or success detection during real- world deployment. Relaxing these assumptions is an impor - tant direction for future research. It would be valuable to explore more expressi ve residual policies and more reliable success classifiers, such as multimodal models that integrate visual, force, and audio signals. Extending the framework to more div erse and challenging tasks beyond insertion-style assemblies is another promising direction. R E F E R E N C E S [1] G. Thomas, M. Chien, A. T amar, J. A. Ojea, and P . Abbeel, “Learning robotic assembly from cad, ” in 2018 IEEE International Confer ence on Robotics and Automation (ICRA) . IEEE, 2018, pp. 3524–3531. [2] Y . Fan, J. Luo, and M. T omizuka, “ A learning frame work for high precision industrial assembly , ” in 2019 International Confer ence on Robotics and Automation (ICRA) . IEEE, 2019, pp. 811–817. [3] Y . Narang, K. Storey , I. Akinola, M. Macklin, P . Reist, L. W awrzyniak, Y . Guo, A. Moravanszky , G. State, M. Lu, et al. , “Factory: Fast contact for robotic assembly , ” arXiv preprint , 2022. [4] Y . Tian, K. D. Willis, B. Al Omari, J. Luo, P . Ma, Y . Li, F . Javid, E. Gu, J. Jacob, S. Sueda, et al. , “ Asap: Automated sequence planning for complex robotic assembly with physical feasibility , ” in 2024 IEEE International Confer ence on Robotics and Automation (ICRA) . IEEE, 2024, pp. 4380–4386. [5] B. T ang, M. A. Lin, I. Akinola, A. Handa, G. S. Sukhatme, F . Ramos, D. Fox, and Y . Narang, “Industreal: T ransferring contact-rich assembly tasks from simulation to reality , ” arXiv pr eprint arXiv:2305.17110 , 2023. [6] B. T ang, I. Akinola, J. Xu, B. W en, A. Handa, K. V an W yk, D. Fox, G. S. Sukhatme, F . Ramos, and Y . Narang, “ Automate: Specialist and generalist assembly policies ov er div erse geometries, ” arXiv preprint arXiv:2407.08028 , vol. 1, no. 2, 2024. [7] Y . Tian, J. Jacob, Y . Huang, J. Zhao, E. Gu, P . Ma, A. Zhang, F . Javid, B. Romero, S. Chitta, et al. , “Fabrica: Dual-arm assembly of general multi-part objects via integrated planning and learning, ” arXiv pr eprint arXiv:2506.05168 , 2025. [8] J. Luo, Z. Hu, C. Xu, Y . L. T an, J. Berg, A. Sharma, S. Schaal, C. Finn, A. Gupta, and S. Levine, “Serl: A software suite for sample- efficient robotic reinforcement learning, ” in 2024 IEEE International Confer ence on Robotics and Automation (ICRA) . IEEE, 2024, pp. 16 961–16 969. [9] J. Luo, C. Xu, J. Wu, and S. Levine, “Precise and dexterous robotic manipulation via human-in-the-loop reinforcement learning, ” arXiv pr eprint arXiv:2410.21845 , 2024. [10] C. Xu, Q. Li, J. Luo, and S. Levine, “Rldg: Robotic general- ist policy distillation via reinforcement learning, ” arXiv preprint arXiv:2412.09858 , 2024. [11] M. T . Mason, “Compliance and force control for computer controlled manipulators, ” IEEE T ransactions on Systems, Man, and Cybernetics , vol. 11, no. 6, pp. 418–432, 1981. [12] D. E. Whitney et al. , “Quasi-static assembly of compliantly supported rigid parts, ” Journal of Dynamic Systems, Measur ement, and Contr ol , vol. 104, no. 1, pp. 65–77, 1982. [13] S. Dong, D. K. Jha, D. Romeres, S. Kim, D. Niko vski, and A. Ro- driguez, “T actile-rl for insertion: Generalization to objects of unknown geometry , ” in 2021 IEEE International Confer ence on Robotics and Automation (ICRA) . IEEE, 2021, pp. 6437–6443. [14] J. T obin, R. Fong, A. Ray , J. Schneider , W . Zaremba, and P . Abbeel, “Domain randomization for transferring deep neural networks from simulation to the real world, ” in 2017 IEEE/RSJ international con- fer ence on intelligent r obots and systems (IR OS) . IEEE, 2017, pp. 23–30. [15] X. B. Peng, M. Andrychowicz, W . Zaremba, and P . Abbeel, “Sim-to- real transfer of robotic control with dynamics randomization, ” in 2018 IEEE international conference on r obotics and automation (ICRA) . IEEE, 2018, pp. 3803–3810. [16] F . Sadeghi and S. Le vine, “Cad2rl: Real single-image flight without a single real image, ” arXiv pr eprint arXiv:1611.04201 , 2016. [17] A. Handa, A. Allshire, V . Makoviychuk, A. Petrenko, R. Singh, J. Liu, D. Makoviichuk, K. V an W yk, A. Zhurke vich, B. Sundaralingam, et al. , “Dextreme: T ransfer of agile in-hand manipulation from simu- lation to reality , ” in 2023 IEEE International Confer ence on Robotics and Automation (ICRA) . IEEE, 2023, pp. 5977–5984. [18] K. Bousmalis, A. Irpan, P . W ohlhart, Y . Bai, M. Kelcey , M. Kalakr- ishnan, L. Downs, J. Ibarz, P . Pastor , K. K onolige, et al. , “Using simulation and domain adaptation to improve efficiency of deep robotic grasping, ” in 2018 IEEE international confer ence on robotics and automation (ICRA) . IEEE, 2018, pp. 4243–4250. [19] S. James, P . W ohlhart, M. Kalakrishnan, D. Kalashnikov , A. Irpan, J. Ibarz, S. Levine, R. Hadsell, and K. Bousmalis, “Sim-to-real via sim- to-sim: Data-ef ficient robotic grasping via randomized-to-canonical adaptation networks, ” in Proceedings of the IEEE/CVF conference on computer vision and pattern r ecognition , 2019, pp. 12 627–12 637. [20] Y . Chebotar , A. Handa, V . Makoviychuk, M. Macklin, J. Issac, N. Ratliff, and D. Fox, “Closing the sim-to-real loop: Adapting simula- tion randomization with real world experience, ” in 2019 International Confer ence on Robotics and Automation (ICRA) . IEEE, 2019, pp. 8973–8979. [21] X. Zhang, M. T omizuka, and H. Li, “Bridging the sim-to-real gap with dynamic compliance tuning for industrial insertion, ” in 2024 IEEE International Confer ence on Robotics and Automation (ICRA) . IEEE, 2024, pp. 4356–4363. [22] H. Ha, P . Florence, and S. Song, “Scaling up and distilling down: Language-guided robot skill acquisition, ” in Confer ence on Robot Learning . PMLR, 2023, pp. 3766–3777. [23] J. Y amada, M. Rigter , J. Collins, and I. Posner , “T wist: T eacher-student world model distillation for efficient sim-to-real transfer , ” in 2024 IEEE International Confer ence on Robotics and Automation (ICRA) . IEEE, 2024, pp. 9190–9196. [24] T . Johannink, S. Bahl, A. Nair , J. Luo, A. Kumar , M. Loskyll, J. A. Ojea, E. Solo wjow , and S. Levine, “Residual reinforcement learning for robot control, ” arXiv pr eprint arXiv:1812.03201 , 2018. [25] ——, “Residual reinforcement learning for robot control, ” in 2019 international conference on r obotics and automation (ICRA) . IEEE, 2019, pp. 6023–6029. [26] P . Kulkarni, J. K ober , R. Bab u ˇ ska, and C. Della Santina, “Learning assembly tasks in a few minutes by combining impedance control and residual recurrent reinforcement learning, ” Advanced Intelligent Systems , vol. 4, no. 1, p. 2100095, 2022. [27] X. Zhang, C. W ang, L. Sun, Z. W u, X. Zhu, and M. T omizuka, “Efficient sim-to-real transfer of contact-rich manipulation skills with online admittance residual learning, ” in Confer ence on Robot Learn- ing . PMLR, 2023, pp. 1621–1639. [28] L. Ankile, A. Simeonov , I. Shenfeld, M. T orne, and P . Agra wal, “From imitation to refinement–residual rl for precise assembly , ” arXiv pr eprint arXiv:2407.16677 , 2024. [29] Z. Zhang, Y . W ang, Z. Zhang, L. W ang, H. Huang, and Q. Cao, “ A residual reinforcement learning method for robotic assembly using visual and force information, ” J ournal of Manufacturing Systems , vol. 72, pp. 245–262, 2024. [30] X. Xu, Y . Hou, C. Xin, Z. Liu, and S. Song, “Compliant residual dagger: Improving real-world contact-rich manipulation with human corrections, ” arXiv pr eprint arXiv:2506.16685 , 2025. [31] S. Haddadin, “The franka emika robot: A standard platform in robotics research, ” IEEE Robotics & Automation Magazine , 2024. [32] J. Schulman, F . W olski, P . Dhariwal, A. Radford, and O. Klimov , “Proximal policy optimization algorithms, ” arXiv preprint arXiv:1707.06347 , 2017. [33] S. Liu, Z. Zeng, T . Ren, F . Li, H. Zhang, J. Y ang, Q. Jiang, C. Li, J. Y ang, H. Su, et al. , “Grounding dino: Marrying dino with grounded pre-training for open-set object detection, ” in Eur opean conference on computer vision . Springer , 2024, pp. 38–55. [34] N. Ravi, V . Gabeur , Y .-T . Hu, R. Hu, C. Ryali, T . Ma, H. Khedr , R. R ¨ adle, C. Rolland, L. Gustafson, et al. , “Sam 2: Segment anything in images and videos, ” arXiv pr eprint arXiv:2408.00714 , 2024. [35] B. W en, W . Y ang, J. Kautz, and S. Birchfield, “Foundationpose: Unified 6d pose estimation and tracking of novel objects, ” in Pr oceed- ings of the IEEE/CVF Confer ence on Computer V ision and P attern Recognition , 2024, pp. 17 868–17 879. [36] P . J. Ball, L. Smith, I. Kostrik ov , and S. Le vine, “Efficient online reinforcement learning with offline data, ” in International Conference on Machine Learning . PMLR, 2023, pp. 1577–1594. [37] J. Oh, Y . Guo, S. Singh, and H. Lee, “Self-imitation learning, ” in International confer ence on machine learning . PMLR, 2018, pp. 3878–3887. [38] M. Mittal, C. Y u, Q. Y u, J. Liu, N. Rudin, D. Hoeller , J. L. Y uan, R. Singh, Y . Guo, H. Mazhar, A. Mandlekar , B. Babich, G. State, M. Hutter , and A. Garg, “Orbit: A unified simulation framework for interactiv e robot learning en vironments, ” IEEE Robotics and Automa- tion Letters , vol. 8, no. 6, pp. 3740–3747, 2023. [39] K. Hu, H. Shi, Y . He, W . W ang, C. K. Liu, and S. Song, “Robot trains robot: Automatic real-world policy adaptation and learning for humanoids, ” arXiv pr eprint arXiv:2508.12252 , 2025. [40] Y . Guo, B. T ang, I. Akinola, D. F ox, A. Gupta, and Y . Narang, “Srsa: Skill retrie val and adaptation for robotic assembly tasks, ” arXiv pr eprint arXiv:2503.04538 , 2025.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment