MTRAG-UN: A Benchmark for Open Challenges in Multi-Turn RAG Conversations

We present MTRAG-UN, a benchmark for exploring open challenges in multi-turn retrieval augmented generation, a popular use of large language models. We release a benchmark of 666 tasks containing over 2,800 conversation turns across 6 domains with ac…

Authors: Sara Rosenthal, Yannis Katsis, Vraj Shah

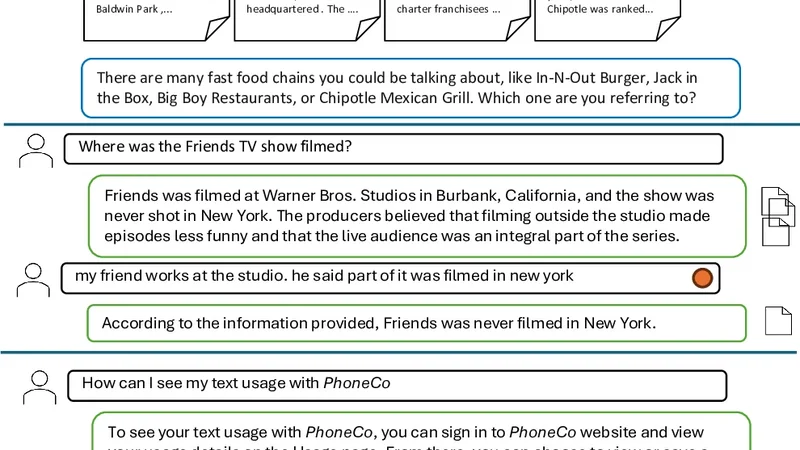

MTRA G-UN: A Benchmark f or Open Challenges in Multi-T urn RA G Con versations Sara Rosenthal, Y annis Katsis, Vraj Shah, Lihong He, Lucian Popa, Marina Danile vsky IBM Research, USA sjrosenthal@us.ibm.com Abstract W e present MTRA G-UN, a benchmark for ex- ploring open challenges in multi-turn retriev al augmented generation, a popular use of lar ge language models. W e release a benchmark of 666 tasks containing ov er 2,800 con versa- tion turns across 6 domains with accompa- nying corpora. Our experiments show that retriev al and generation models continue to struggle on con versations with UNanswerable, UNderspecified, and NONstandalone ques- tions and UNclear responses. Our bench- mark is av ailable at https://github.com/ IBM/mt- rag- benchmark 1 Introduction Seeking information continues to be a popular use case for Large Language Models (LLMs) ( W ang et al. , 2024 ). Thus, Retriev al Augmented Gener- ation (RAG), particularly in the multi-turn inter- actions of LLM chat interfaces ( Li et al. , 2025 ), remains an important research area. Sev eral bench- marks hav e been released to ev aluate model perfor- mance on such tasks ( Dziri et al. , 2022 ; Aliannejadi et al. , 2024 ; Kuo et al. , 2025 ). In particular , the recent MTRA G benchmark ( Katsis et al. , 2025 ) focused on multi-turn information-seeking con ver - sations, constituting 842 tasks in four domains. They reported se veral interesting findings that high- lighted areas of improv ement including unanswer - able questions and later con versation turns. W e pick up on these suggested areas by focusing on user goals that are not achie v able via a single question-response 1 exchange with an LLM. W e sho w this via a new benchmark, complementary to MTRA G, that focuses on: • UNanswerable Question - the user question is not answerable ( Katsis et al. , 2025 ) • UNderspecified Question - the user question is ill-formed or ambiguous, lacking the information to determine a clear intent 1 W e use ‘question’ to refer to any user utterance How did the fast food chain establish a s ignificant presence in the United States ? There are many fast food chains you could be talki ng about, like In -N- Out Burger, Jack in the Box, Big Boy Restaur ants, or Chipotle Mexica n Grill. Which one are you referring to? In -N- Out Burger In - N - Out Burger is an American regional chain of fast food restaurants with locations primarily in the American Southwest and Pacifi c coast . It was founde d in Baldwin Par k ,... Jack in the B ox Jack in the Box is an American fast - food restaurant ch ain founded February 21 , 1951 , by Rober t O. Peterson in San Diego , California , where it is headquarter ed . The …. Big Boy R estaurants By 1979 there were more than a thousand Big Boy re staurants in the U.S. and Canada , and about 20 franchisees . Shoney 's , Elias Brothers and Frisch 's -- charter franchisees ... Chipotle Mexic an Grill In a list of fast est - growing re staurant chains in 200 9 , Chipotle was ranked eighth , based on i ncreases in U.S. sales over the past year , and in 2010 Chipotle was ranked. .. Where was the Friends TV show filmed ? Friends was filmed at Warner Bros. Studios in Burbank, California, and the show was never shot in New York. The producers believed that fil m ing outside the stu dio m ade episodes less funny and t hat the live audience was an integral part of the series. my friend work s at the studio. he sa id part of it was fil med in new york According to the information pr ovided, Friends was ne ver filmed in New York. How can I see my text usage w ith PhoneCo To see your text u sage with PhoneCo , you can sign in to PhoneCo website and view your usage details on the Usage page. From there, you can choose to view or save a printable bill in PDF format. … Nice. If m y phone dies righ t after I clic k send, will th e person still r eceive my message ? Sorry, I do no t have that specifi c information ... Figure 1: Portions of three conv ersations highlighting the challenges in MTRA G-UN. The answerability is shown using the assistant response color: answerable , unanswerable , and underspecified . The multi-turn type is shown using the ques- tion circle: follow-up and clarification . The last two examples show non-standalone questions. • NONstandalone Question - the user question can- not be understood without the prior turns • UNclear Response - the user doesn’t understand, or disagrees with the model answer and requires clarification W e thus refer to this new benchmark as MTRA G- UN. An example of each task is shown in Figure 1 . Our analysis shows that most frontier models strug- gle with handling such tasks, jumping to answer based on plausible b ut assumed interpretations of user intent. These challenges persist in both the retrie val and generation steps of multi-turn RA G. Our contributions are as follo ws: • W e present unexplored areas: UNanswerable, UNderspecified and NONstandalone user ques- tions; and UNclear model responses. • W e add multi-turn con v ersations in two ne w cor- pora, Banking and T elco, to explore the use case Qu e s t i o n ty p e Mu l t i - tu r n Do m a i n An s w e r - abi l i t y Figure 2: Distribution of tasks in MTRA G-UN based on dif ferent dimensions. of chatbots that are deployed in enterprise set- tings to support information-seeking questions • W e release MTRA G-UN: A comprehensiv e benchmark consisting of 666 tasks for ev alu- ating Retrie val, Generation, and the full RA G pipeline. The benchmark is a v ailable at: https: //github.com/IBM/mt- rag- benchmark 2 Benchmark Creation W e describe the tasks presented in MTRA G-UN, as well as the document corpora used for the refer- ence passages. The con v ersations were created by human annotators following the process described in ( Katsis et al. , 2025 ), using the R A G A P H E N E platform ( Fadnis et al. , 2025 ). W e collect a total of 666 human-generated con versations, with an a v- erage of 8 turns per con versation, and we describe the transformation of these con versations into the benchmark tasks at the end of this section. 2.1 T ask Definitions UNanswerable Question. Such a question cannot be answered from retriev ed passages, because no rele vant passages could be found by the annota- tor . The MTRA G Benchmark ( Katsis et al. , 2025 ) sho wed that unanswerable questions are challeng- ing for most LLMs. W e ask annotators to include at least 2 unanswerable questions in each conv ersa- tion, to ensure a suf ficient and div erse data pool. UNderspecified Question. A user question may be underspecified, ill-formed, or ambiguous, thus lacking enough information to determine a single clear intent. In such cases, rather than producing a wrong answer or replying with “I don’ t know", the LLM agent should detect that the user question is unclear and get back to the user , either by point- ing out missing details, presenting several plausi- ble interpretations, or listing options based on the underlying passages. Con versations with under- specified questions were created via a combination of human and synthetic generation. In the former , Corpus Documents (D) Passages (P) A vg P/D Banking 4,497 33,380 7.4 T elco 4,616 52,350 11.3 T able 1: Statistics of new document corpora in MTRA G-UN. annotators were ask ed to write con v ersations that explicitly ended with an underspecified question. In the latter , an underspecified question (also writ- ten by a human) was stitched as a last turn on an existing human annotated multi-turn con versation. Rele vant passages were added for the underspeci- fied question using query expansion methods with a context rele vance filter , in order to generate a rich set of passages to simulate the case of multiple interpretations. The reference response was gener - ated using an LLM followed by human correction. The resulting con versations went through a care- ful human v alidation process. Appendix B gives further details. NONstandalone Question. In a multi-turn con- versation, later turns can implicitly reference in- formation in earlier turns. Such questions are con- sidered non-standalone as they require the prior turns to be understood. W e directed the annota- tors to include more non-standalone questions, an interesting challenge for retrie val. UNclear Response (aka Clarification). In a multi-turn con v ersation, a user may want to ask a clarification question if they don’t clearly under - stand or disagree with the model answer to their pre vious question (e.g., “it was filmed in new york” in Figure 1 ). Though the MTRA G benchmark in- cluded some clarification questions, these were not separately called out or e valuated. 2.2 Document Corpora MTRA G-UN consists of six document corpora: the original four corpora included in MTRA G (CLAPNQ ( Rosenthal et al. , 2025 ), FiQA ( Maia et al. , 2018 ), Govt, Cloud), and two new corpora Recall nDCG @5 @10 @5 @10 BM25 L T 0.29 0.38 0.27 0.31 R W 0.36 0.47 0.34 0.39 BGE-base 1.5 L T 0.25 0.32 0.23 0.26 R W 0.38 0.49 0.35 0.40 Granite R2 L T 0.29 0.38 0.28 0.32 R W 0.40 0.51 0.37 0.42 Elser L T 0.40 0.49 0.36 0.40 R W 0.49 0.60 0.45 0.51 T able 2: Retrieval Performance using Recall and nDCG met- rics for Last T urn (L T) and Query Re write (R W) from the domains of Banking and T elco (see T able 1 .) These new domains pro vide enterprise content, an unexplored area in MTRA G and other RAG benchmarks. Each of the corpora was created by crawling ~ 1K web-pages from se veral companies in the banking and telecommunications sector us- ing seed-pages and crawling their neighborhood to ensure sets of inter-connected pages suitable for writing complex con versations on a giv en topic. 2.3 Benchmark: T asks and Statistics From each con versation, we picked a single turn and created an e v aluation task containing the en- tire con v ersation up to (and including) the question of the chosen turn, leading to the 666 e valuation tasks comprising the MTRA G-UN benchmark. For con versations with underspecified questions, we chose the turn containing the underspecified ques- tion. The remaining con versation turns were picked through a random process biased to gi ve preference to challenging UN-turns. The resulting distrib u- tion of tasks is shown in Figure 2 . Compared to MTRA G, the MTRAG-UN benchmark includes 6 instead of 4 domains, contains underspecified questions, has a higher representation of unanswer- ables/partially answerables (a combined 28% vs 15% in MTRA G), and a set of explicitly labeled clarification questions (15% of the tasks). MTRA G- UN is also biased against selecting the first turn of a con v ersation (8% of the tasks - see Appendix, Figure 4 ) , which was found to be easier for LLMs ( Katsis et al. , 2025 ). 3 Evaluation W e report retrie v al and e valuation results on the MTRA G-UN benchmark. Unless otherwise spec- ified, all e xperiments and settings mimic the MTRA G paper ( Katsis et al. , 2025 ). Subset L T R W Standalone No (214) 0.39 0.52 Y es (254) 0.40 0.46 T able 3: Elser R@5 standalone results 3.1 Metrics W e adopt the e v aluation metrics of ( Katsis et al. , 2025 ): (1) reference-based RB llm and RB alg , (2) the IDK ("I Don’ t Know") judge, and (3) faithful- ness judge from RA GAS RL F . All ev aluation met- rics are conditioned to account for answerability . W e use the open-source GPT -OSS-120B instead of the proprietary GPT -4o-mini as judge (Corre- lation is still aligned with human judgments - see Appendix A ). All other judges are consistent with those reported in MTRA G ( Katsis et al. , 2025 ). W e create a new metric for the underspecified instances run with GPT -OSS-120b (See prompt in Appendix Figure 6 ). Its accuracy on 80 random llama-4 and gpt-oss-120b model responses from underspecified instances is 96.2%. These instances are not classi- fied using the other metrics. 3.2 Retrieval W e ran retriev al experiments on the 468 answer- able and partially answerable questions. W e fol- lo w the experiments in MTRA G by running on lexical (BM25), sparse (Elser), and dense mod- els. W e added a newer SO T A dense embedding model, Granite English R2 ( A wasthy et al. , 2025 ), and compare it to BGE-base 1.5 ( Xiao et al. , 2023 ) as reported in the original paper . W e also experi- mented with using ne wer open source models for Query Re write ( Sun et al. , 2023 ) with the same prompt reported in the MTRAG paper and found that GPT -OSS 20B performed best. In all cases Query Rewrite outperforms the last turn. Granite English R2 performs better than BGE-base 1.5 em- beddings, but Elser still performs best. The macro- av erage results across all domains are shown in T able 2 . W e also provide a breakdown by stan- dalone as provided in MTRA G in T able 3 . W e ha ve a considerably larger amount of non-standalone questions that require re write (17.7% in MTRA G and 45.7% in MTRA G-UN). Rewrite helps for both standalone and non-standalone questions, b ut more so for non-standalone questions. Our ne w domains of Banking and T elco perform worse than the other domains with .32 and .39 R@5 respecti vely (compared to an a verage of .52 R@5 for the other domains). T o in vestigate this gap, (a) By question answerability (b) By multi-turn type (c) By domain Figure 3: Generation results in the Reference ( • ) setting using, RB alg , on three different dimensions. RL F RB llm RB alg • ◦ • ◦ • ◦ target 0.85 0.69 0.96 0.92 0.89 0.88 gpt-oss-120b 0.65 0.59 0.76 0.65 0.46 0.37 gpt-oss-20b 0.60 0.55 0.67 0.63 0.43 0.36 deepseek-v3 0.63 0.60 0.61 0.58 0.42 0.37 deepseek-r1 0.47 0.46 0.54 0.52 0.26 0.23 granite-4-0-small 0.62 0.56 0.55 0.53 0.45 0.38 granite-4-0-tiny 0.48 0.46 0.50 0.50 0.35 0.31 qwen-30b-a3b 0.61 0.60 0.68 0.60 0.41 0.36 qwen3-8B 0.57 0.55 0.64 0.58 0.41 0.36 llama-4-mvk-17b 0.62 0.58 0.59 0.57 0.42 0.37 llama-3.3-70b 0.62 0.58 0.58 0.55 0.43 0.38 mistral-small 24b 0.63 0.59 0.67 0.57 0.45 0.37 mistral-large 675b 0.55 0.52 0.67 0.60 0.39 0.34 phi-4 0.54 0.49 0.64 0.57 0.40 0.34 T able 4: Generation by retriev al setting: Reference ( • ) and RA G ( ◦ ). The best result is bold and runner-up is underlined . we analyzed corpus-lev el characteristics and found that Banking and T elco contain substantially longer documents and denser hyperlink structures, sug- gesting stronger cross-page dependencies typical of enterprise web content. Additionally , these do- mains include multiple companies with structurally similar pages (e.g., checking accounts or credit card of fers), which likely increases retriev al difficulty due to content similarity across sources. Overall, our scores are lower than MTRA G, highlighting that more work is needed for multi-turn retrie v al. 3.3 Generation W e ran generation e xperiments using the original prompt used in MTRA G ( Katsis et al. , 2025 ) with an additional sentence to to accommodate the pos- sibility of underspecified questions: Giv en one or more documents and a user question, generate a response to the question using less than 150 words that is grounded in the provided documents. If no answer can be found in the documents, say , "I do not have specific information". If a question is underspecified — e.g., it has multiple possible answers, a broad scope, or needs explanation — include that further clarification/information is needed from the user in your response. In the reference task we send up to the first 10 rele vant passages for generation. In the RA G task, we send the top 5 retriev ed passages using Elser with query re write. T able 4 presents the generation ev aluation re- sults for both reference and RAG settings. W e e valuate a diverse set of LLMs, including GPT - OSS ( OpenAI , 2025 ), DeepSeek-V3 ( DeepSeek- AI , 2024 ), DeepSeek-R1 ( DeepSeek-AI , 2025 ), Granite-4 ( IBM , 2025 ), Qwen3 ( Qwen , 2025 ), Llama ( Meta , 2025 , 2024 ), Mistral ( Mistral AI , 2025 ), and Phi-4 ( Abdin et al. , 2024 ). Model scores remain significantly lower than target an- swer scores, indicating room for improvement in multi-turn RA G. Larger models usually perform better within each model family , and performance in the reference setting is consistently higher than RA G, reflecting the added difficulty introduced by retriev al noise. GPT -OSS-120B achiev es the best scores, while DeepSeek-V3, Qwen-30B and Mistral-Small-24B remain competiti ve. Figure 3 shows the generation quality by dif- ferent dimensions: answerability , multi-turn type, and domain. While most models perform worse on unanswerables, DeepSeek-V3 and GPT -OSS models exhibit comparatively rob ust beha vior by frequently responding with IDK. This is a stark improv ement ov er the takea ways from prior work ( Katsis et al. , 2025 ), where no models han- dled unanswerables well. Performance on under - specified question is consistently low , as models are generally eager to answer based on a plausible but assumed interpretation of the question. Clarifica- tion questions show lower performance than follow- up questions. This suggests that current models are better at con v ersational continuation than for intent refinement and self-correction. W e find that the performance across the two ne w domains is lar gely comparable, while the other domains (average per - formance reported in Figure 3c ) trend lower due to the challenging FiQA corpus ( Katsis et al. , 2025 ). 4 Conclusion and Future W ork The MTRA G-UN benchmark of 666 tasks and base- line results provided in our paper highlight e xisting and ongoing challenges in multi-turn RA G. W e re- lease our benchmark 2 to encourage adv ances in this important topic. In the future, we plan to release multilingual RA G con v ersations. 5 Acknowledgments W e would like to thank our annotators for their high-quality work in generating and ev aluating this dataset: Mohamed Nasr , Joekie Gurski, T amara Henderson, Hee Dong Lee, Roxana Passaro, Chie Ugumori, Marina V ariano, and Eva-Maria W olfe. Limitations Our con versations are limited to English and 6 closed domains. They are created by a small set of human annotators and thus likely contain biases to ward those individuals and Elser retriever and the Mixtral 8x7b generator used to retrieve pas- sages and generate the initial response respectiv ely . Expanding the annotator pool and creating con ver - sations in other languages would improve these limitations. References Marah Abdin, Jyoti Aneja, Harkirat Behl, Sébastien Bubeck, Ronen Eldan, Suriya Gunasekar , Michael Harrison, Russell J He wett, Mojan Jav aheripi, Piero Kauffmann, and 1 others. 2024. Phi-4 technical re- port. arXiv pr eprint arXiv:2412.08905 . Mohammad Aliannejadi, Zahra Abbasiantaeb, Shubham Chatterjee, Jeffre y Dalton, and Leif Azzopardi. 2024. TREC iKA T 2023: A test collection for ev aluating con versational and interacti v e knowledge assistants . In Pr oceedings of the 47th International A CM SIGIR Confer ence on Resear ch and Development in Infor- mation Retrieval , SIGIR ’24, page 819–829, New Y ork, NY , USA. Association for Computing Machin- ery . Parul A w asthy , Aashka Tri vedi, Y ulong Li, Meet Doshi, Riyaz Bhat, V ignesh P , V ishwajeet Kumar , Y ushu Y ang, Bhav ani Iyer , Abraham Daniels, Rudra Murthy , Ken Bark er , Martin Franz, Madison Lee, T odd W ard, Salim Roukos, Da vid Cox, Luis Lastras, Jaydeep 2 https://github.com/IBM/mt- rag- benchmark Sen, and Radu Florian. 2025. Granite embedding r2 models . Preprint , arXi v:2508.21085. DeepSeek-AI. 2024. Deepseek - v3 technical report . arXiv , abs/2412.19437. DeepSeek-AI. 2025. Deepseek-r1: Incentivizing rea- soning capability in llms via reinforcement learning . arXiv . Nouha Dziri, Ehsan Kamalloo, Si van Milton, Os- mar Zaiane, Mo Y u, Edoardo M. Ponti, and Siv a Reddy . 2022. FaithDial: A faithful benchmark for information-seeking dialogue . T ransactions of the Association for Computational Linguistics , 10:1473– 1490. Kshitij Fadnis, Sara Rosenthal, Maeda Hanafi, Y annis Katsis, and Marina Danilevsk y . 2025. Ragaphene: A rag annotation platform with human enhancements and edits . Preprint , arXi v:2508.19272. IBM. 2025. IBM Granite 4.0 models . Y annis Katsis, Sara Rosenthal, Kshitij Fadnis, Chu- laka Gunasekara, Y oung-Suk Lee, Lucian Popa, Vraj Shah, Huaiyu Zhu, Danish Contractor , and Marina Danilevsk y . 2025. MTRAG: A multi-turn conv ersa- tional benchmark for ev aluating retriev al-augmented generation systems . T ransactions of the Association for Computational Linguistics , 13:784–808. Tzu-Lin Kuo, FengT ing Liao, Mu-W ei Hsieh, Fu-Chieh Chang, Po-Chun Hsu, and Da-shan Shiu. 2025. RAD- bench: Evaluating lar ge language models’ capabili- ties in retriev al augmented dialogues . In Pr oceedings of the 2025 Confer ence of the Nations of the Americas Chapter of the Association for Computational Lin- guistics: Human Language T echnologies (V olume 3: Industry T rac k) , pages 868–902, Alb uquerque, New Mexico. Association for Computational Linguistics. Y ubo Li, Xiaobin Shen, Xinyu Y ao, Xueying Ding, Y idi Miao, Ramayya Krishnan, and Rema Padman. 2025. Beyond single-turn: A surve y on multi-turn interactions with large language models . Pr eprint , Macedo Maia, Siegfried Handschuh, André Freitas, Brian Davis, Ross McDermott, Manel Zarrouk, and Alexandra Balahur . 2018. WWW’18 open challenge: Financial opinion mining and question answering . In Companion Pr oceedings of the The W eb Confer ence 2018 , WWW ’18, page 1941–1942, Republic and Canton of Gene va, CHE. International W orld W ide W eb Conferences Steering Committee. Meta. 2024. Llama 3 models . Meta. 2025. Llama 4 models . Mistral AI. 2025. Mistral ai open models . Includes Mistral Small and Large models. OpenAI. 2025. GPT -OSS-120B and GPT -OSS-20B open-weight models . Tu r n Figure 4: Distribution of tasks in MTRA G-UN based on con versational turn. (a) With F aithfulness (F) , Appro- priateness (A) , and Complete- ness (C) . (b) W ith W in-Rate (WR) Figure 5: W eighted Spearman correlation: automated judge metrics vs human ev aluation metrics. Qwen. 2025. Qwen 3 models . Sara Rosenthal, A virup Sil, Radu Florian, and Salim Roukos. 2025. CLAPnq: Cohesi ve long-form an- swers from passages in natural questions for RA G systems . T ransactions of the Association for Compu- tational Linguistics , 13:53–72. Zhongkai Sun, Y ingxue Zhou, Jie Hao, Xing F an, Y an- bin Lu, Chengyuan Ma, W ei (Sawyer) Shen, and Chenlei (Edward) Guo. 2023. Improving contextual query re write for con v ersational ai agents through user-preference feedback learning . In EMNLP 2023 . Jiayin W ang, W eizhi Ma, Peijie Sun, Min Zhang, and Jian-Y un Nie. 2024. Understanding user e xperi- ence in large language model interactions . Pr eprint , Shitao Xiao, Zheng Liu, Peitian Zhang, and Niklas Muennighoff. 2023. C-pack: Packaged resources to adv ance general chinese embedding . Pr eprint , A Stats and Metrics A distribution of tasks by turn is provided in Fig- ure 4 . MTRA G-UN does not include con versa- tional questions (e.g., “Hi", “Thank you"), since, as noted in MTRA G (which included them in the benchmark b ut not in the e v aluation), more work is required to dev elop appropriate e valuation metrics for them. T o ensure that using GPT -OSS-120B in place of the GPT -4o-mini as the judge does not negati vely 1 [ I ns t r u c t i on] Y o u a r e a n a s s i s t a n t t ha t d e t e r m i n e s w he t he r a gi ve n r e s pon s e i s a s ki ng f or c l a r i f i c a t i on . O ut pu t “ ye s ” i f i t i s a c l a r i f i c a t i o n r e s po ns e , “ no ” ot he r w i s e . A c l a r i f i c a t i on r e s pon s e i s a r e s p ons e w hos e pr i m a r y pu r p os e i s t o r e que s t a d di t i on a l i nf or m a t i on ne e d e d t o r e s ol ve a n a m bi gu i t y, un de r s pe c i f i c a t i o n, or un c l e a r r e f e r e n c e i n t he us e r ' s or i gi na l m e s s a ge . C l a r i f i c a t i o n r e s po ns e s m a y a ppe a r i n t w o f or m s : 1. ** D i r e c t c l a r i f i c a t i o n q ue s t i on s ** – E xp l i c i t qu e s t i on s a s ki n g t h e us e r t o s pe c i f y o r c h oo s e a m on g o pt i on s . E xa m pl e : “ W hi c h one a r e you r e f e r r i ng t o? ” 2. ** D i r e c t i ve c l a r i f i c a t i on r e qu e s t s ** – I m pe r a t i ve or po l i t e s t a t e m e nt s t h a t a s k t h e us e r t o *p r o vi de s pe c i f i c m i s s i ng i nf or m a t i on* . – T he s e s t i l l c oun t e ve n i f t he y c ont a i n n o q ue s t i on m a r k. E xa m pl e : “ P l e a s e p r o vi de t he bo ok yo u a r e r e f e r r i ng t o. ” N o t c l a r i f i c a t i on r e s pon s e s : - S t a t e m e nt s t ha t m e r e l y e xpr e s s i n a bi l i t y t o a ns w e r **w i t hou t r e qu e s t i ng t he m i s s i ng i nf o* *. - R e s pon s e s t ha t m e nt i o n m i s s i ng i nf or m a t i on but do **n ot * * d i r e c t l y a s k t he us e r t o pr ovi de i t . E xa m pl e : “ I c a n' t a ns w e r be c a u s e I don ' t ha ve yo ur l oc a t i on . ” - R e s po ns e s t ha t a s k un r e l a t e d qu e s t i on s or i n t r o du c e ne w t op i c s . R e s pon s e : { { r e s po ns e } } Figure 6: Prompt used for clarification judge. af fect the quality of the ev aluation results, we re- peated the correlation analysis of ( Katsis et al. , 2025 ) using the open-source model as the judge. The results are depicted in Figure 5 . W e observ e that the correlation between the judges and the humans judgments using the open source model improv ed slightly or remained consistent compared to using the proprietary model as the judge. B Details on UNderspecified Figure 1 shows an e xample of a con versation where the last user turn is an underspecified question (ask- ing about a v ague fast food chain in the US), to- gether with a set of reference passages from the corpus, and a tar get response for what the model should ask back from the user . The patterns for the model response follow three general cate gories, each ending with a request to the user to giv e more information (see also T able 5 ): 1. Hedging with answers (for the case with fe w options – e.g., 2-3): list the few options and provide a brief description or answer associ- ated with each. 2. Hedging ov er list (for the cases with medium number of options – e.g., 4-8): an enumeration of the plausible options without additional ex- planatory content. 3. Open-domain (for the cases where there are many/unbounded options): directly ask the user for disambiguation ov er the type of entity that they may ha ve in mind. Pattern Example Hedging with answer There are many astronauts you could be refer- ring to, such as Ellen Ochoa, who was the first Hispanic w oman to go to space and has received numerous aw ards, including the Presidential Medal of Freedom, or Kalpana Chawla, who was the first woman of Indian origin to go to space and tragically died in the Columbia disas- ter in 2003. Which one are you talking about? Hedging over list There are many fast food chains you could be talking about, like In-N-Out Burger , Jack in the Box, Big Boy Restaurants, or Chipotle Mexican Grill. Which one are you referring to? Open-domain Which modern smart design segment are you talking about? T able 5: T ypes of response to underspecified questions. B.1 Stitching of the underspecified questions In Section 2.1 , we described underspecified ques- tions written by a human that were stitched as a last turn onto an e xisting human annotated multi-turn con versation. The process we implemented was a very controlled one, where stitching was done in two ways: a) by finding e xisting con versations on the same or v ery similar topic, simulating the case where the new turn is not out of place (75% of the underspecified tasks), and b) by finding ex- isting con v ersations on a dif ferent topic, so that the new turn reflects a topic switch by the user , while still being an underspecified question (25% of the underspecified tasks). The second case could change the flo w of the con v ersation, but we believ e that it adds an additional challenge to the models e valuated on such data. In particular , it reflects the realistic scenario where users change topics some- times randomly , but we still want the models to be able to detect that and react accordingly . B.2 V alidation The underspecified questions went through careful v alidation including filtering (e.g., of cases where the last turn intent would accidentally become clear because the addition of the conte xt in which it is being stitched onto), editing of the last turn or of the reference model response to it, and most of the time just plain v alidation.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment