IU: Imperceptible Universal Backdoor Attack

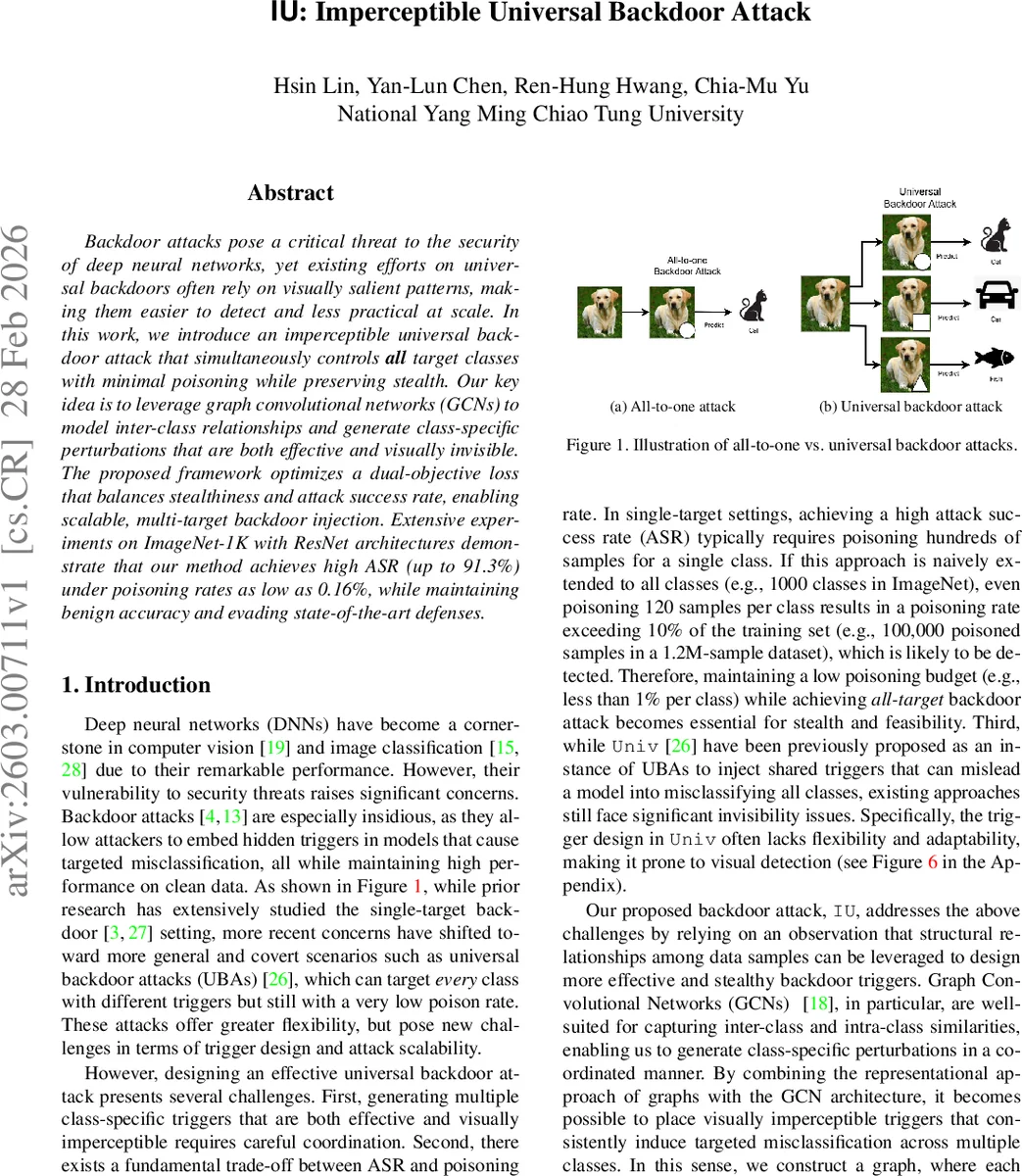

Backdoor attacks pose a critical threat to the security of deep neural networks, yet existing efforts on universal backdoors often rely on visually salient patterns, making them easier to detect and less practical at scale. In this work, we introduce a novel imperceptible universal backdoor attack that simultaneously controls all target classes with minimal poisoning while preserving stealth. Our key idea is to leverage graph convolutional networks (GCNs) to model inter-class relationships and generate class-specific perturbations that are both effective and visually invisible. The proposed framework optimizes a dual-objective loss that balances stealthiness (measured by perceptual similarity metrics such as PSNR) and attack success rate (ASR), enabling scalable, multi-target backdoor injection. Extensive experiments on ImageNet-1K with ResNet architectures demonstrate that our method achieves high ASR (up to 91.3%) under poisoning rates as low as 0.16%, while maintaining benign accuracy and evading state-of-the-art defenses. These results highlight the emerging risks of invisible universal backdoors and call for more robust detection and mitigation strategies.

💡 Research Summary

The paper introduces IU (Imperceptible Universal), a novel backdoor attack that targets all classes simultaneously in a large‑scale image classification setting while keeping its triggers visually imperceptible. The authors identify two major shortcomings of existing universal backdoor approaches: (1) reliance on conspicuous trigger patterns that are easy to detect, and (2) the need for a high poisoning rate when scaling to thousands of classes, which raises suspicion. To overcome these issues, IU uses Graph Convolutional Networks (GCNs) to capture inter‑class relationships and generate class‑specific perturbations that remain effective without becoming visible.

Core Methodology

- Graph Construction – Each target class is encoded into a binary latent code (length n) using a pretrained classifier, following the procedure of the prior “Univ” method. Pairwise ℓ₁ distances between codes are computed; if the distance dij is below a threshold t, an edge is added with weight wij = (Weight_min)·exp(−dij/t). This yields a weighted graph G = (V, E, W) where nodes represent classes and edge weights reflect semantic similarity.

- GCN Trigger Generator – The graph and node features are fed into a GCN. After several convolutional layers, the GCN outputs a set of perturbation tensors T = {Ty} for all classes. Because the GCN propagates information across similar nodes, the resulting triggers for related classes share common “high‑sensitivity” directions in the victim model’s feature space.

- Dual‑Objective Loss – Training optimizes a weighted sum of (i) Stealth Loss L_stealth = max(0, p – PSNR(X + Ty, X)), which penalizes triggers that cause the Peak Signal‑to‑Noise Ratio to fall below a preset threshold p, and (ii) Attack Loss L_attack = CE(f_pretrain(X + Ty), y), the cross‑entropy between a clean pretrained model’s prediction on the poisoned image and the intended target label y. The total loss is L_total = (1 − β)L_stealth + βL_attack, where β balances invisibility and attack success.

- Poisoning and Inference – After training, a small fraction of the training images (as low as 0.16% of the full ImageNet‑1K set) is modified by adding the class‑specific trigger for a chosen target class, and each modified image is relabeled to that class. A victim model trained on the poisoned dataset learns the backdoor. At inference time, the attacker adds Ty to any clean input to force misclassification into class y.
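The graph-construction rule and the dual-objective loss described above can be illustrated with a minimal sketch. It is not the authors' implementation: the function names are hypothetical, images are treated as flat lists of pixel values in [0, 1] (so the PSNR peak value is assumed to be 1.0), the classifier output is passed in as raw logits, and the GCN generator itself is omitted.

```python
import math


def l1_distance(code_a, code_b):
    """l1 distance between two equal-length binary class codes."""
    return sum(abs(a - b) for a, b in zip(code_a, code_b))


def build_class_graph(codes, t, weight_min=1.0):
    """Add an edge (i, j) when d_ij < t, weighted by weight_min * exp(-d_ij / t).

    `weight_min` mirrors the summary's "Weight_min"; its default is an assumption.
    """
    edges = {}
    for i in range(len(codes)):
        for j in range(i + 1, len(codes)):
            d_ij = l1_distance(codes[i], codes[j])
            if d_ij < t:
                edges[(i, j)] = weight_min * math.exp(-d_ij / t)
    return edges


def psnr(poisoned, clean, max_val=1.0):
    """Peak signal-to-noise ratio in dB (max_val=1.0 is an assumed pixel range)."""
    mse = sum((p - c) ** 2 for p, c in zip(poisoned, clean)) / len(clean)
    if mse == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_val ** 2 / mse)


def stealth_loss(poisoned, clean, p):
    """L_stealth = max(0, p - PSNR(X + Ty, X)); zero once PSNR reaches p."""
    return max(0.0, p - psnr(poisoned, clean))


def attack_loss(logits, target):
    """L_attack: cross-entropy of the target class, via a stable log-sum-exp."""
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[target]


def total_loss(poisoned, clean, logits, target, p, beta):
    """L_total = (1 - beta) * L_stealth + beta * L_attack."""
    return ((1.0 - beta) * stealth_loss(poisoned, clean, p)
            + beta * attack_loss(logits, target))


if __name__ == "__main__":
    # Toy 4-bit class codes: only classes 0 and 1 are closer than t = 2.
    codes = [[0, 0, 1, 1], [0, 1, 1, 1], [1, 1, 0, 0]]
    print(build_class_graph(codes, t=2))

    clean = [0.5, 0.5, 0.5, 0.5]
    trigger = [0.1, 0.1, 0.1, 0.1]
    poisoned = [x + t_y for x, t_y in zip(clean, trigger)]
    print(total_loss(poisoned, clean, logits=[0.0, 0.0], target=0, p=30.0, beta=0.5))
```

Because the stealth term is a hinge, it contributes no gradient once the PSNR exceeds the threshold p, so the optimization is then driven by the attack term alone; a larger β shifts the balance toward attack success at the cost of visibility.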

Theoretical Insight

The authors model the victim classifier as softmax(W·ϕ(x)), where ϕ(x) is the penultimate feature vector. For a trigger Ty, the average feature shift vy = E
