U-Mind: A Unified Framework for Real-Time Multimodal Interaction with Audiovisual Generation

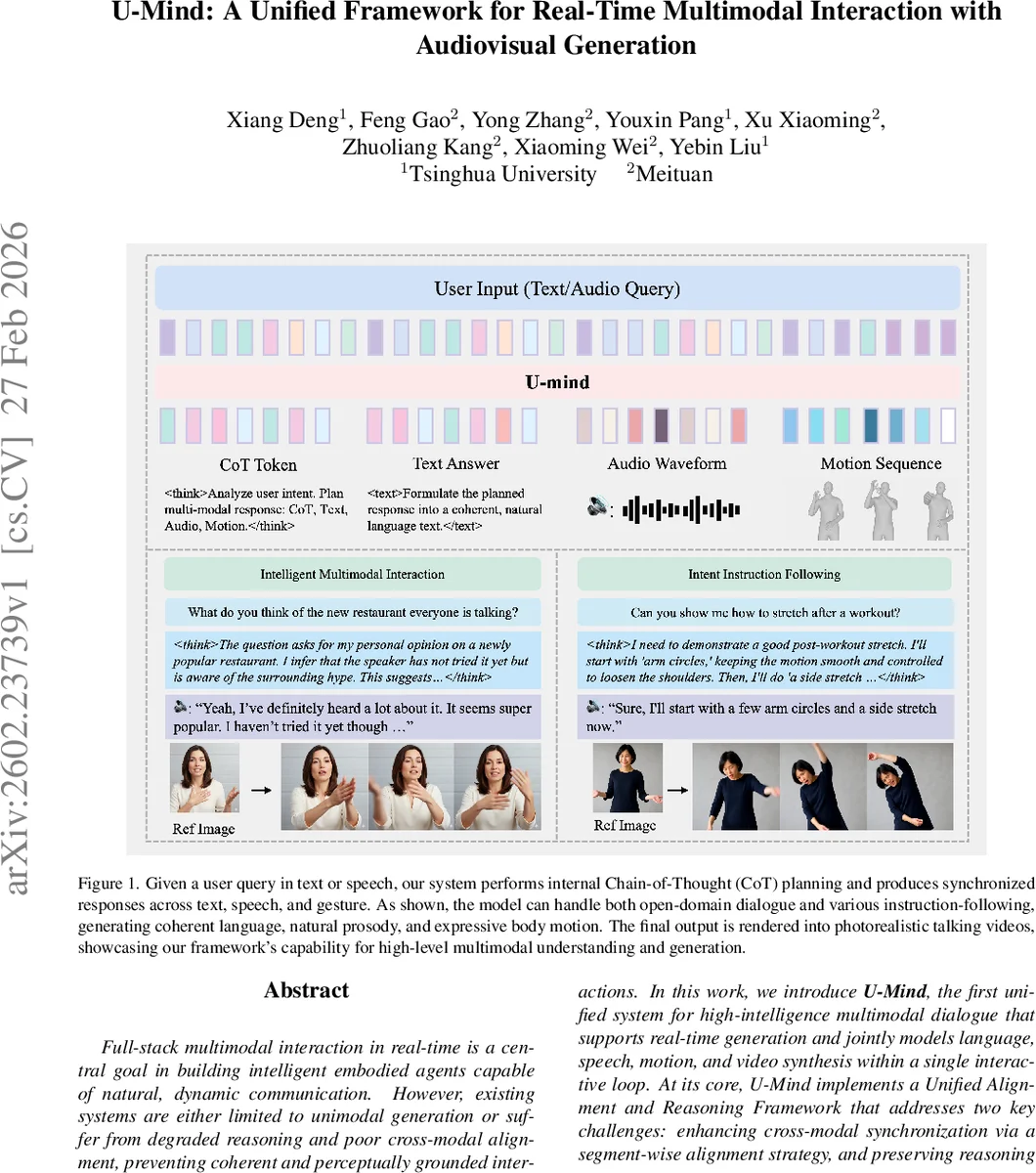

Full-stack multimodal interaction in real-time is a central goal in building intelligent embodied agents capable of natural, dynamic communication. However, existing systems are either limited to unimodal generation or suffer from degraded reasoning and poor cross-modal alignment, preventing coherent and perceptually grounded interactions. In this work, we introduce U-Mind, the first unified system for high-intelligence multimodal dialogue that supports real-time generation and jointly models language, speech, motion, and video synthesis within a single interactive loop. At its core, U-Mind implements a Unified Alignment and Reasoning Framework that addresses two key challenges: enhancing cross-modal synchronization via a segment-wise alignment strategy, and preserving reasoning abilities through Rehearsal-Driven Learning. During inference, U-Mind adopts a text-first decoding pipeline that performs internal chain-of-thought planning followed by temporally synchronized generation across modalities. To close the loop, we implement a real-time video rendering framework conditioned on pose and speech, enabling expressive and synchronized visual feedback. Extensive experiments demonstrate that U-Mind achieves state-of-the-art performance on a range of multimodal interaction tasks, including question answering, instruction following, and motion generation, paving the way toward intelligent, immersive conversational agents.

💡 Research Summary

U‑Mind introduces a unified, real‑time multimodal interaction system that simultaneously generates text, speech, motion, and video within a single autoregressive loop. Built on LLaMA‑2‑7B, the framework extends the language‑only backbone by discretizing speech and body motion into token sequences using residual vector‑quantized variational autoencoders (RVQ‑VAE). Speech is encoded into acoustic tokens that preserve semantic content and prosody, while motion is represented by SMPL‑X pose parameters converted to 6‑D joint rotations and quantized into motion tokens. All token types share a common embedding space, allowing the model to predict interleaved multimodal streams token‑by‑token.

The core methodological contribution is a two‑stage training regime designed to preserve high‑level reasoning while learning cross‑modal alignment. In the Rehearsal‑Driven Foundational Pre‑training stage, the model is exposed to a balanced mixture of modality‑grounded tasks (text‑to‑speech, text‑to‑motion, speech‑to‑motion) and pure‑text chain‑of‑thought (CoT) QA data. A novel segment‑wise alignment strategy splits inputs at prosodic boundaries and randomizes segment combinations, encouraging fine‑grained temporal synchronization across modalities. The rehearsal component continuously rehearses symbolic reasoning, preventing catastrophic forgetting of the LLM’s planning abilities.

During Instruction Tuning, a text‑first decoding pipeline is employed. Every response begins with an internal CoT plan delimited by special <think> and </think> tokens. This plan is pure text and guides the subsequent generation of the visible modalities: textual reply, acoustic token stream, and motion token stream. By forcing the model to reason before emitting continuous outputs, the approach maintains logical coherence even in complex instruction‑following scenarios.

For rendering, U‑Mind provides two real‑time video synthesis back‑ends. The first uses a diffusion‑based 2‑D video generator conditioned on 2‑D keypoints projected from SMPL‑X poses via DWPose. The second employs Gaussian splatting to directly render 3‑D human videos from the pose sequence. Both pipelines are synchronized with the generated speech, producing photorealistic talking‑head videos that reflect the planned gestures and prosody.

Experiments are conducted on BEAT‑v2 (speech‑to‑motion) and HumanML3D (text‑to‑motion) datasets, each augmented with three QA‑style triples generated by a large language model to inject reasoning signals. Additional pure‑text CoT data from OpenOrca and a multilingual TTS corpus (Common Voice) are used. Baselines include SOLAMI (an end‑to‑end multimodal dialogue system), LOM combined with a TTS module, and EMA‑GE (speech‑to‑motion). U‑Mind outperforms all baselines on question‑answering accuracy, motion naturalness (FID, diversity), speech‑motion synchronization metrics, and latency (average <150 ms). User studies report higher perceived naturalness and coherence.

Key contributions are: (1) the first unified full‑stack multimodal agent that retains high‑level reasoning while generating synchronized speech, gesture, and video in real time; (2) a unified alignment and reasoning framework that combines segment‑wise alignment, rehearsal‑driven pre‑training, and text‑first CoT decoding; (3) state‑of‑the‑art performance across a spectrum of multimodal tasks, demonstrating that integrated token‑level modeling can bridge the gap between symbolic reasoning and continuous perception. U‑Mind thus paves the way for intelligent embodied agents such as digital humans, virtual assistants, and interactive educational robots that can converse, gesture, and appear visually coherent in real time.

Comments & Academic Discussion

Loading comments...

Leave a Comment