ACPBench: Reasoning about Action, Change, and Planning

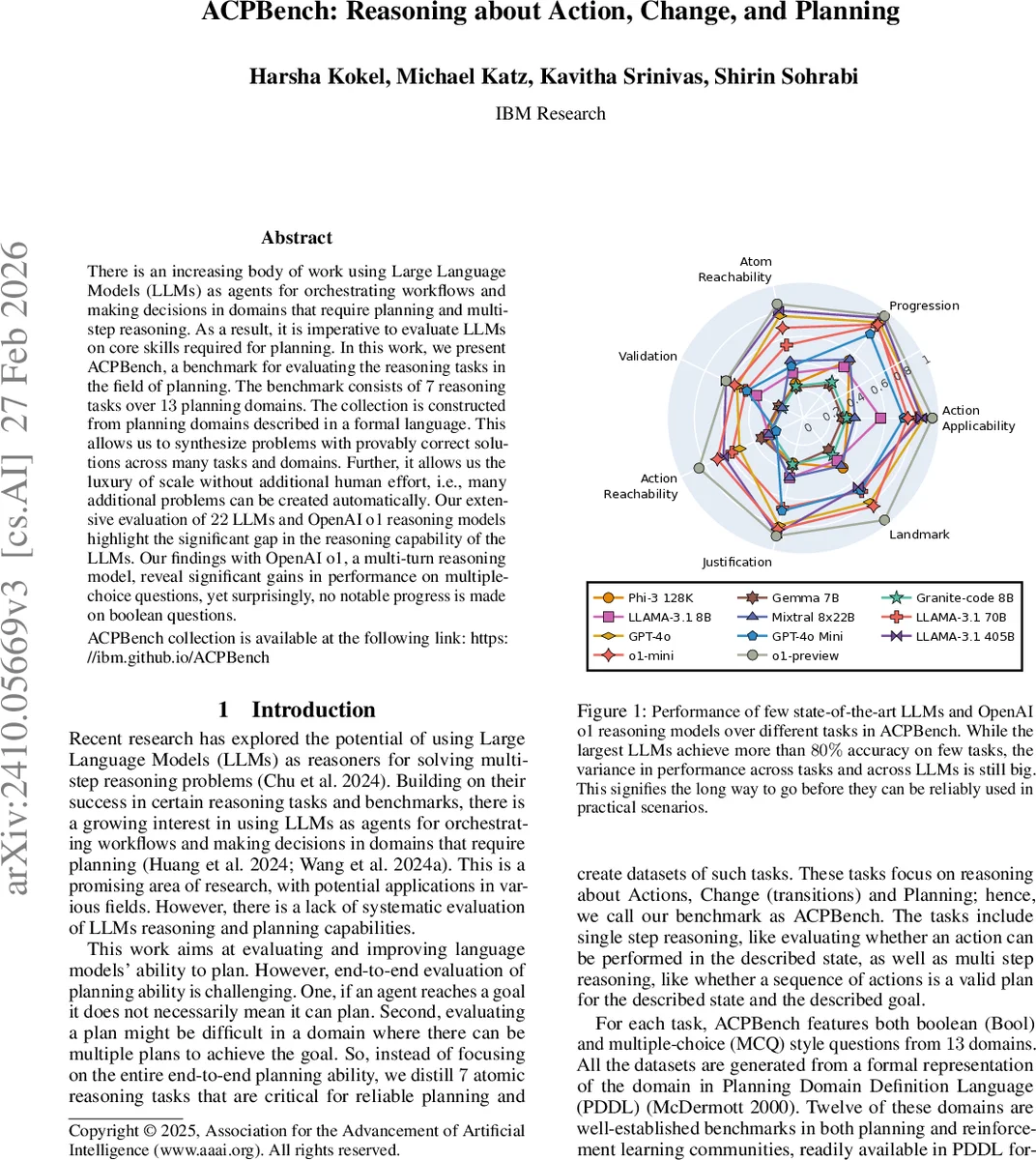

There is an increasing body of work using Large Language Models (LLMs) as agents for orchestrating workflows and making decisions in domains that require planning and multi-step reasoning. As a result, it is imperative to evaluate LLMs on core skills required for planning. In this work, we present ACPBench, a benchmark for evaluating the reasoning tasks in the field of planning. The benchmark consists of 7 reasoning tasks over 13 planning domains. The collection is constructed from planning domains described in a formal language. This allows us to synthesize problems with provably correct solutions across many tasks and domains. Further, it allows us the luxury of scale without additional human effort, i.e., many additional problems can be created automatically. Our extensive evaluation of 22 LLMs and OpenAI o1 reasoning models highlights the significant gap in the reasoning capability of the LLMs. Our findings with OpenAI o1, a multi-turn reasoning model, reveal significant gains in performance on multiple-choice questions, yet surprisingly, no notable progress is made on boolean questions. The ACPBench collection is available at https://ibm.github.io/ACPBench.

💡 Research Summary

The paper introduces ACPBench, a comprehensive benchmark designed to evaluate large language models (LLMs) on core reasoning abilities required for planning. Unlike existing evaluation suites that focus on end‑to‑end plan generation, ACPBench isolates seven atomic reasoning tasks that together capture the essential components of planning: Applicability (determining which actions are executable in a given state), Progression (understanding state changes after an action), Reachability (whether a sub‑goal can eventually be achieved), Action Reachability (whether a specific action can be executed given its preconditions), Validation (checking if a state‑goal pair satisfies the goal), Justification (explaining why an action leads toward a goal), and Landmark (identifying necessary intermediate facts). For each task the benchmark provides both Boolean (yes/no) and multiple‑choice (MCQ) questions.

All data are generated automatically from domains expressed in the Planning Domain Definition Language (PDDL). The authors selected 13 domains—11 classic benchmarks such as BlocksWorld, Logistics, Grid, Ferry, and a novel “Swap” domain—plus the text‑based Alfworld environment. By parsing the PDDL files, extracting predicates, actions, and transition rules, and applying carefully crafted natural‑language templates, they produce human‑readable problem descriptions and queries. Because the underlying logical structure is known, correct answers are provably correct, allowing massive, noise‑free dataset creation without manual labeling. The benchmark contains thousands of questions per task, balanced across positive and negative examples.

The authors evaluate 22 state‑of‑the‑art LLMs (including open‑source models like Phi‑3, Mixtral, LLaMA‑3, and closed‑source GPT‑4o) and two OpenAI o1 reasoning models (preview and mini). They use chain‑of‑thought prompting and two in‑context examples. Results show a substantial performance gap: GPT‑4o reaches an average MCQ accuracy of 78.4% but drops to 52.5% on the hardest Validation task; o1‑preview attains the highest MCQ average of 87.3% yet its Boolean accuracy hovers around 63–68%, indicating little improvement over other models on pure logical yes/no questions. This suggests current LLMs excel at selecting among alternatives but still struggle with precise logical verification.

To test whether model size alone explains performance, the authors fine‑tune an 8‑billion‑parameter model on the ACPBench data. The fine‑tuned model matches the large models (≈80% MCQ, ≈70% Boolean) and demonstrates some generalization to unseen domains, highlighting the importance of task‑specific training data. Three ablation studies are presented: (1) the impact of chain‑of‑thought and in‑context examples (yielding 5–10 percentage‑point gains across tasks), (2) whether ACPBench tasks correlate with full plan generation ability (they find that while the tasks probe essential reasoning steps, LLMs still cannot reliably produce complete, correct plans), and (3) a temporal analysis showing steady MCQ improvements in newer models but stagnant Boolean performance.

In summary, ACPBench offers a scalable, formally grounded benchmark that isolates key planning reasoning skills, provides extensive coverage across domains, and reveals that despite impressive advances, LLMs remain far from robust planning agents. The work underscores the need for better logical reasoning, especially for Boolean verification and multi‑step state transition understanding, and positions ACPBench as a valuable tool for tracking future progress.

Comments & Academic Discussion

Loading comments...

Leave a Comment