Skarimva: Skeleton-based Action Recognition is a Multi-view Application

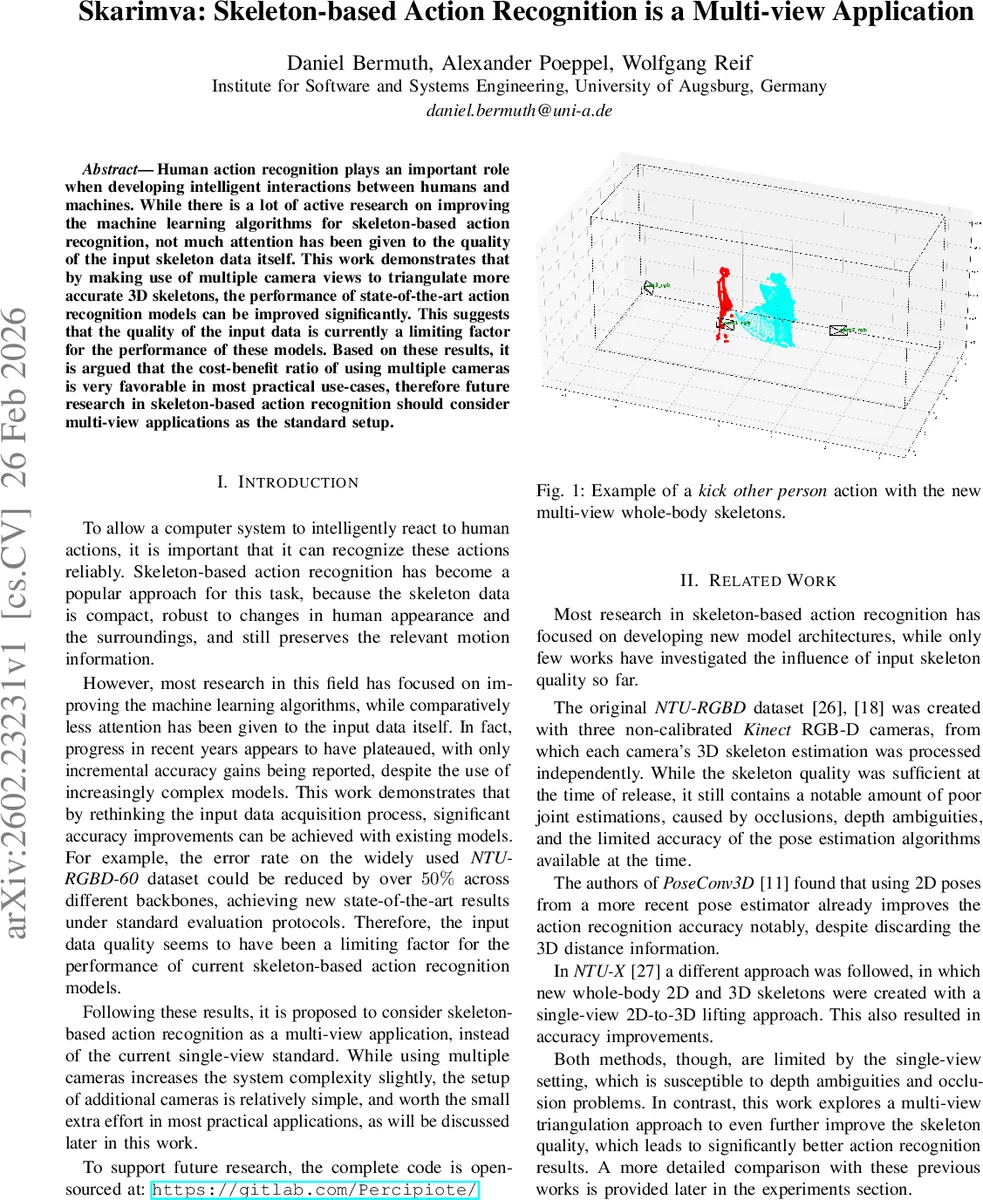

Human action recognition plays an important role when developing intelligent interactions between humans and machines. While there is a lot of active research on improving the machine learning algorithms for skeleton-based action recognition, not much attention has been given to the quality of the input skeleton data itself. This work demonstrates that by making use of multiple camera views to triangulate more accurate 3D skeletons, the performance of state-of-the-art action recognition models can be improved significantly. This suggests that the quality of the input data is currently a limiting factor for the performance of these models. Based on these results, it is argued that the cost-benefit ratio of using multiple cameras is very favorable in most practical use cases; therefore, future research in skeleton-based action recognition should consider multi-view applications as the standard setup.

💡 Research Summary

Paper Overview

The manuscript “Skarimva: Skeleton‑based Action Recognition is a Multi‑view Application” investigates a largely overlooked factor in skeleton‑based human action recognition: the quality of the input 3D skeletons. While most recent work focuses on designing ever more sophisticated graph‑convolutional networks (GCNs), the authors argue that the performance ceiling is primarily due to noisy, occlusion‑prone skeletons in the widely used NTU‑RGBD‑60 and NTU‑RGBD‑120 datasets. To test this hypothesis, they reconstruct the datasets using a multi‑camera triangulation pipeline, generate high‑fidelity whole‑body skeletons (including face and finger keypoints), and evaluate several state‑of‑the‑art GCN models on the new data.

Methodology

- Camera Calibration & Synchronization – The original NTU‑RGBD recordings were captured with three non‑calibrated Kinect sensors. The authors recover intrinsic and extrinsic parameters by minimizing a multi‑view reprojection loss together with pose‑alignment errors, employing iterative outlier removal. Temporal alignment is also estimated, though the final experiments show that precise synchronization is not required for substantial gains.

- Multi‑view Pose Estimation – They adopt RapidPoseTriangulation, a recent 3D multi‑view, multi‑person pose estimator capable of whole‑body reconstruction (body, hands, face, fingers). The model runs at ~130 FPS on an RTX 4080, comfortably faster than the 30 FPS video streams.

- Tracking & Skeleton Sequencing – A simple distance‑based tracker links skeletons across frames. Tracks shorter than a threshold are discarded as false detections; the two longest tracks (the dataset contains at most two people) are kept for each sequence.

- Skeleton Sets – Several joint configurations are defined:

  - wb25 – original 25 joints (body only).

  - wb137 – full whole‑body set (25 body + 2 × 21 hand + 70 face keypoints).

  - wb69 – whole‑body without additional face points.

  - wb31 – adds only three fingertip joints per hand.

These allow analysis of how additional keypoints affect recognition performance.
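The tracking step described above can be sketched as a greedy frame-to-frame linker. This is only an illustration of the idea, not the authors' implementation: the function names, the mean joint distance metric, and the `max_dist` and `min_len` thresholds are all assumptions chosen for the example.

```python
import math


def joint_distance(a, b):
    """Mean Euclidean distance between two skeletons (lists of (x, y, z) joints)."""
    return sum(math.dist(p, q) for p, q in zip(a, b)) / len(a)


def track_skeletons(frames, max_dist=0.5, min_len=3, keep=2):
    """Greedily link skeletons across frames by distance.

    frames: list over time; each entry is the list of skeletons detected in that frame.
    max_dist, min_len: hypothetical thresholds, not values from the paper.
    Returns up to `keep` longest tracks, each a list of (frame_index, skeleton).
    """
    finished, active = [], []
    for t, detections in enumerate(frames):
        unmatched = list(range(len(detections)))
        still_active = []
        for track in active:
            last = track[-1][1]
            # Closest detection that has not been claimed by another track yet.
            best = min(
                unmatched,
                key=lambda i: joint_distance(last, detections[i]),
                default=None,
            )
            if best is not None and joint_distance(last, detections[best]) <= max_dist:
                track.append((t, detections[best]))
                unmatched.remove(best)
                still_active.append(track)
            else:
                finished.append(track)  # track ends here
        # Every detection left over starts a new track.
        still_active.extend([[(t, detections[i])] for i in unmatched])
        active = still_active
    finished.extend(active)

    # Short tracks are treated as false detections and dropped.
    long_enough = [tr for tr in finished if len(tr) >= min_len]
    long_enough.sort(key=len, reverse=True)
    return long_enough[:keep]
```

A greedy matcher is the simplest choice that fits a scene with at most two people; with more people or frequent crossings, an optimal assignment per frame would be a more robust option.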

Experimental Setup

Three leading GCN models are re‑trained on the new skeletons with minimal changes: MSG3D, DG‑STGCN, and ProtoGCN. Hyper‑parameters follow the original implementations to ensure fair comparison. Instead of the common multi‑stream (joint + bone) ensembling, the authors concatenate all four modalities (joint, bone, joint‑motion, bone‑motion) into a single graph, thereby halving training effort. Data augmentation consists of random rotations around the Z‑axis (facilitated by world‑aligned skeletons) and uniform scaling.
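As a rough sketch of the input preparation, the four modalities can be derived from the joint coordinates and stacked per joint, and the augmentation applied beforehand. The summary does not say exactly how the modalities are combined, so the channel-wise concatenation here is one plausible reading; the `parents` array, the function names, and the angle and scale ranges are assumptions made for the example.

```python
import math
import random


def bones(frame, parents):
    """Bone vector per joint: joint minus its parent (the root is its own parent, giving zeros)."""
    return [
        tuple(c - p for c, p in zip(frame[j], frame[parents[j]]))
        for j in range(len(frame))
    ]


def motion(seq):
    """Temporal difference seq[t + 1] - seq[t]; the last frame is padded with zeros."""
    out = []
    for t in range(len(seq)):
        if t + 1 < len(seq):
            out.append([
                tuple(b - a for a, b in zip(p, q))
                for p, q in zip(seq[t], seq[t + 1])
            ])
        else:
            out.append([(0.0, 0.0, 0.0)] * len(seq[t]))
    return out


def four_modalities(seq, parents):
    """Return a 12-channel feature per joint and frame:
    joint (3) + bone (3) + joint-motion (3) + bone-motion (3)."""
    joint = [[tuple(p) for p in frame] for frame in seq]
    bone = [bones(frame, parents) for frame in joint]
    joint_motion = motion(joint)
    bone_motion = motion(bone)
    return [
        [j + b + dj + db for j, b, dj, db in zip(*per_frame)]
        for per_frame in zip(joint, bone, joint_motion, bone_motion)
    ]


def augment(seq, max_angle=math.pi, scale_range=(0.9, 1.1), rng=random):
    """Rotate the whole sequence around the Z-axis and scale it uniformly.

    max_angle and scale_range are illustrative defaults, not the paper's settings.
    """
    angle = rng.uniform(-max_angle, max_angle)
    scale = rng.uniform(*scale_range)
    c, s = math.cos(angle), math.sin(angle)
    return [
        [(scale * (c * x - s * y), scale * (s * x + c * y), scale * z) for x, y, z in frame]
        for frame in seq
    ]
```

Rotating only around Z keeps the vertical direction intact, which is meaningful here because the triangulated skeletons are aligned to world coordinates.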

Results – Full‑body Skeletons

Table I shows that across both NTU‑RGBD‑60 and NTU‑RGBD‑120, every model gains 1–3 percentage points when using the full wb137 set compared with the original wb25. For example, MSG3D improves from 90.8 % to 96.5 % on NTU‑60, and ProtoGCN from 91.8 % to 97.1 %. Adding all four modalities into a single model yields comparable accuracy to the traditional two‑stream ensemble while requiring far less computation.

Ablation on Joint Types

Adding more face and finger joints does not improve performance steadily; in some cases accuracy drops slightly, which suggests over-fitting to irrelevant keypoints. The larger skeletons also increase the number of graph edges dramatically, which slows inference. This observation aligns with prior work (NTU‑X) and points to a need for more efficient GCN architectures that can selectively attend to discriminative joints.

Comparison with Prior Skeleton Improvements

The authors compare their multi‑view triangulation against two earlier attempts to improve NTU skeletons: PoseConv3D (higher‑quality 2D body poses) and NTU‑X (single‑view 2D‑to‑3D lifting). As shown in Table II, the multi‑view approach outperforms both by a clear margin (e.g., MSG3D on NTU‑60: 94.0 % vs. 92.5 % for PoseConv3D). The authors argue that multi‑view triangulation preserves 3D geometric consistency and mitigates occlusions, whereas lifting methods lose depth cues and are limited by the quality of the single view.
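To make the geometric argument concrete, the sketch below triangulates one joint from two calibrated views with the classic midpoint method: each camera contributes a ray through the observed keypoint, and the 3D estimate is the midpoint of the shortest segment between the two rays. This is a textbook technique used here for illustration only; it is not the algorithm inside RapidPoseTriangulation, and the function names are made up for the example.

```python
def dot(u, v):
    """Dot product of two 3-vectors."""
    return sum(a * b for a, b in zip(u, v))


def triangulate_midpoint(o1, d1, o2, d2, eps=1e-12):
    """Estimate a 3D point from two viewing rays.

    o1, o2: camera centers in world coordinates.
    d1, d2: ray directions from each camera through the observed keypoint.
    Returns the midpoint of the closest points on the two rays.
    """
    w = tuple(p - q for p, q in zip(o1, o2))
    a, b, c = dot(d1, d1), dot(d1, d2), dot(d2, d2)
    d, e = dot(d1, w), dot(d2, w)
    denom = a * c - b * b
    if abs(denom) < eps:
        # Parallel rays carry no depth information, as with a single view.
        raise ValueError("rays are (nearly) parallel; depth is not observable")
    s = (b * e - c * d) / denom  # parameter along ray 1
    t = (a * e - b * d) / denom  # parameter along ray 2
    p1 = tuple(o + s * k for o, k in zip(o1, d1))
    p2 = tuple(o + t * k for o, k in zip(o2, d2))
    return tuple((u + v) / 2 for u, v in zip(p1, p2))
```

With noise-free rays the two closest points coincide and the joint is recovered exactly. With noisy detections, the distance between the two closest points gives a simple consistency check that a single-view lifting method has no equivalent for.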

Multi‑stream Ensembling

When the authors apply the heavy multi‑stream + multi‑sample ensembling (common in the literature) they achieve new state‑of‑the‑art numbers: ProtoGCN+Skarimva reaches 97.5 % on NTU‑60 and 95.4 % on NTU‑120 (Table III). This demonstrates that the skeleton quality improvement is orthogonal to model architecture and can be combined with any existing enhancement technique.
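Multi-stream ensembling is commonly done by late fusion: each stream produces class scores for the same sample, and the scores are combined before taking the arg-max. The sketch below shows that general pattern with a weighted sum. The weights and the function name are illustrative assumptions, and the summary does not state which fusion rule the authors used.

```python
def ensemble_predict(stream_scores, weights=None):
    """Fuse per-stream class scores by weighted sum.

    stream_scores: list of score vectors, one per stream (e.g. joint, bone).
    weights: optional per-stream weights; equal weights if omitted.
    Returns (predicted_class_index, fused_scores).
    """
    if weights is None:
        weights = [1.0] * len(stream_scores)
    num_classes = len(stream_scores[0])
    fused = [
        sum(w * scores[k] for w, scores in zip(weights, stream_scores))
        for k in range(num_classes)
    ]
    predicted = max(range(num_classes), key=fused.__getitem__)
    return predicted, fused


# Two hypothetical streams that disagree on the top class.
joint_scores = [0.5, 0.4, 0.1]
bone_scores = [0.2, 0.6, 0.2]
label, _ = ensemble_predict([joint_scores, bone_scores])
```

The cost of this scheme is that every stream is a separately trained and separately evaluated model, which is why the single-model concatenation described earlier is cheaper.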

Few‑shot Learning

Recognizing that large labeled datasets are often unavailable, the authors adapt the classification head to a contrastive embedding plus prototype‑based classifier. In a one‑shot setting, ProtoGCN+Skarimva attains 76.0 % accuracy (Table IV), substantially higher than previous bests (~68 %). With five examples per class, accuracy climbs to 84.9 % (Table V). These results indicate that higher‑quality skeletons provide more discriminative features even when training data is scarce.
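A prototype-based classifier of the kind mentioned above can be sketched in a few lines: each class is represented by the mean of its support embeddings, and a query is assigned to the class whose prototype is most similar. The cosine similarity, the mean-of-normalized-embeddings prototype, and all names below are assumptions for illustration; the embeddings would come from the trained GCN backbone, which is not modeled here.

```python
import math


def normalize(v):
    """Scale a vector to unit length (zero vectors are returned unchanged)."""
    n = math.sqrt(sum(x * x for x in v)) or 1.0
    return [x / n for x in v]


def build_prototypes(support):
    """support: dict mapping label -> list of embeddings (one for one-shot, five for five-shot).

    Returns a dict mapping label -> unit-length mean embedding.
    """
    prototypes = {}
    for label, embeddings in support.items():
        unit = [normalize(e) for e in embeddings]
        mean = [sum(col) / len(unit) for col in zip(*unit)]
        prototypes[label] = normalize(mean)
    return prototypes


def classify(embedding, prototypes):
    """Return the label whose prototype has the highest cosine similarity to the query."""
    q = normalize(embedding)
    return max(
        prototypes,
        key=lambda label: sum(a * b for a, b in zip(q, prototypes[label])),
    )


# One-shot example with made-up 2D embeddings and labels.
prototypes = build_prototypes({"wave": [[1.0, 0.1]], "kick": [[0.0, 1.0]]})
prediction = classify([0.9, 0.2], prototypes)
```

Because new classes only require computing a new prototype, no retraining of the backbone is needed when an action is added from a handful of examples.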

Real‑time Considerations

Processing multiple video streams adds computational load, but the authors note that RapidPoseTriangulation already runs faster than the camera frame rate on consumer‑grade GPUs. They propose inexpensive home‑setup scenarios: two or three USB webcams, a printed chessboard for calibration, and a simple GUI to guide users. Even without precise synchronization, the reported gains persist, making multi‑view acquisition feasible for both professional (sports analytics, surveillance, robotics) and consumer (gaming, AR/VR) applications.

Discussion & Conclusions

The paper reframes skeleton‑based action recognition as inherently a multi‑view problem, akin to binocular vision in biology. By demonstrating that a relatively modest hardware investment yields dramatic performance jumps, the authors argue that future research should adopt multi‑view data acquisition as the default. They also highlight open challenges: designing GCNs that can efficiently handle the larger joint graphs, automating calibration and synchronization, and extending the pipeline to mobile devices with built‑in multi‑camera arrays.

Impact

This work shifts the community’s focus from “more complex networks” to “better data”. It provides a reproducible pipeline (open‑sourced code, integration into the skelda library) and compelling empirical evidence that multi‑view triangulation is a low‑cost, high‑payoff strategy. Researchers aiming for state‑of‑the‑art performance, as well as practitioners seeking robust real‑time systems, will find the methodology directly applicable. Future directions include lightweight whole‑body GCNs, self‑calibrating multi‑camera rigs, and end‑to‑end training that jointly optimizes pose reconstruction and action classification.
