Joint Optimization for 4D Human-Scene Reconstruction in the Wild

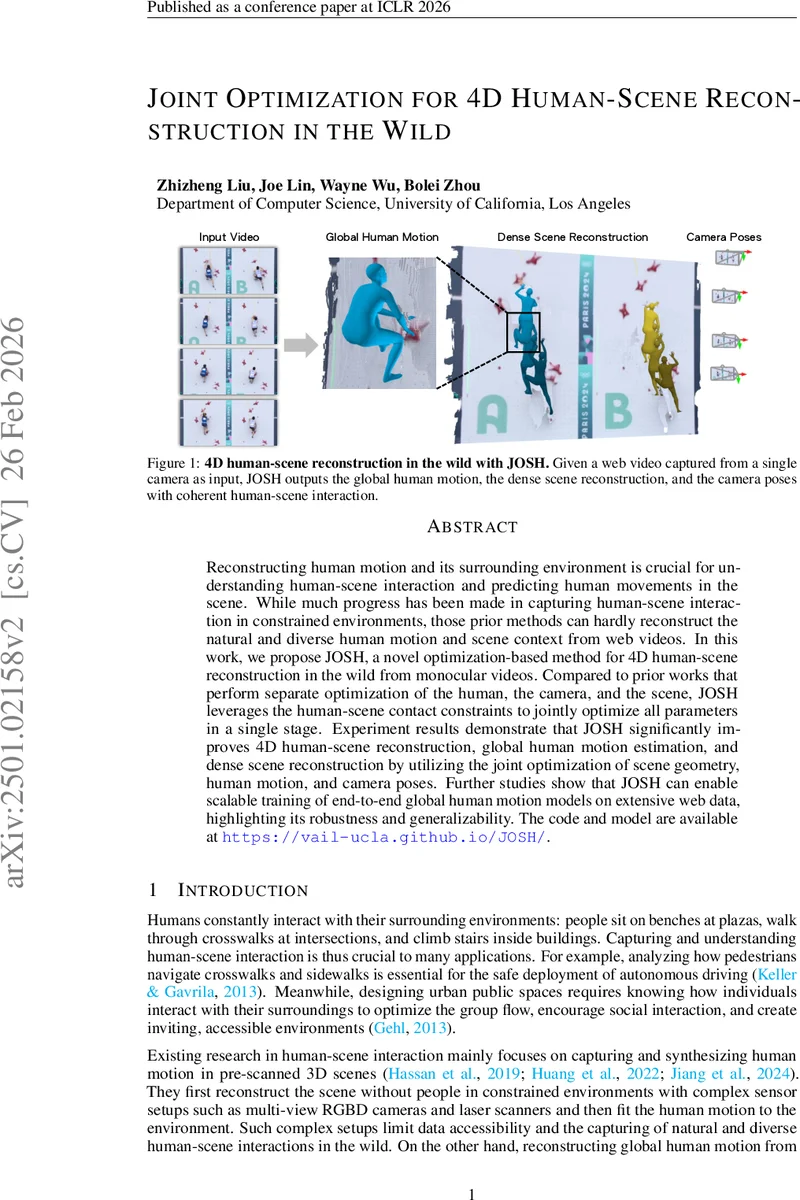

Reconstructing human motion and its surrounding environment is crucial for understanding human-scene interaction and predicting human movements in the scene. While much progress has been made in capturing human-scene interaction in constrained environments, prior methods can hardly reconstruct the natural and diverse human motion and scene context found in web videos. In this work, we propose JOSH, a novel optimization-based method for 4D human-scene reconstruction in the wild from monocular videos. JOSH uses techniques from both dense scene reconstruction and human mesh recovery as initialization, and then leverages human-scene contact constraints to jointly optimize the scene, the camera poses, and the human motion. Experimental results show that, by jointly optimizing scene geometry and human motion, JOSH achieves better results on both global human motion estimation and dense scene reconstruction. We further design a more efficient model, JOSH3R, and train it directly with pseudo-labels from web videos. JOSH3R outperforms other optimization-free methods even though it is trained only on labels predicted by JOSH, further demonstrating the accuracy and generalization ability of JOSH.

💡 Research Summary

The paper tackles the challenging problem of 4‑D human‑scene reconstruction from a single monocular video captured “in the wild”. The goal is to jointly recover (i) the camera intrinsics and extrinsics for every frame, (ii) the global SMPL‑based human motion of all people in the scene, and (iii) a dense 3‑D point‑cloud representation of the surrounding environment. Existing works either rely on multi‑camera rigs and depth sensors to pre‑scan static scenes, or they estimate human motion without any scene context, leading to physically implausible results when applied to uncontrolled web videos.

JOSH (Joint Optimization of Scene Geometry and Human Motion) is a unified optimization framework that simultaneously refines camera poses, human trajectories, and scene geometry. The pipeline proceeds as follows. (1) Initialization: dense depth and point maps are obtained from an off‑the‑shelf dense scene reconstruction method such as MASt3R; moving humans are masked out using a video segmentation network (DEVA) so that only background points are kept. Human meshes are initialized with an off‑the‑shelf SMPL recovery network such as VIMO, which provides per‑frame local SMPL parameters. Contact labels for each mesh vertex are predicted by a contact‑estimation network (BSTRO). (2) Joint Optimization: all variables {K_t, P_t, σ_t, Z_t, Θ_t^c} are optimized together. The loss comprises four components:

- Scene reconstruction loss (L_scene) – a combination of 3‑D correspondence (robust L1) and 2‑D reprojection terms over background point correspondences, as in classic bundle adjustment.

- Human prior loss (L_human) – SMPL shape/pose priors and a temporal smoothness term to keep the global motion physically plausible.

- Contact‑Scene loss (L_c1) – for each predicted contact vertex, the nearest background point (visible and depth‑consistent) is identified; the Euclidean distance between the transformed human vertex and the scene point (scaled by per‑frame scale σ_t) is minimized. This enforces that feet, hands, etc., actually touch the reconstructed geometry.

- Contact‑Static loss (L_c2) – when a vertex remains in contact across consecutive frames, the loss penalizes any relative motion between the human vertex and its matched scene point, reducing sliding artifacts.
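The two contact terms can be sketched in a few lines of NumPy. This is only an illustration under stated assumptions, not the authors' implementation: the function names, array layouts, and mean reduction are hypothetical, the visibility and depth‑consistency filtering of candidate scene points is omitted, and the static term assumes a static scene expressed in world coordinates, so that "no relative motion" reduces to "the contact vertex does not move".

```python
import numpy as np

def contact_scene_loss(verts, contact, scene_pts, scale):
    """L_c1 sketch: mean distance from each contact vertex to its nearest scene point.

    verts:     (V, 3) human vertices in world coordinates
    contact:   (V,)   boolean contact labels (e.g. from a contact estimator)
    scene_pts: (M, 3) background points before applying the per-frame scale
    scale:     per-frame scale factor (sigma_t in the text)
    """
    contact = np.asarray(contact, dtype=bool)
    cv = verts[contact]                                   # (C, 3) contact vertices
    if len(cv) == 0:
        return 0.0
    pts = scale * scene_pts                               # scaled scene points
    d = np.linalg.norm(cv[:, None, :] - pts[None, :, :], axis=-1)  # (C, M)
    return float(d.min(axis=1).mean())                    # nearest-point distance

def contact_static_loss(verts_prev, verts_curr, contact_prev, contact_curr):
    """L_c2 sketch: penalize displacement of vertices in contact in both frames.

    Assumes a static scene, so motion relative to the matched scene point
    equals the world-space displacement of the vertex itself.
    """
    both = np.asarray(contact_prev, dtype=bool) & np.asarray(contact_curr, dtype=bool)
    if not both.any():
        return 0.0
    return float(np.linalg.norm(verts_curr[both] - verts_prev[both], axis=-1).mean())
```

A brute‑force distance matrix is used here for clarity; with dense point maps a spatial index such as a k‑d tree would be the more practical choice.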

The total loss is a weighted sum: L = w_scene·L_scene + w_human·L_human + w_c1·L_c1 + w_c2·L_c2. Optimization proceeds with a differentiable non‑linear least‑squares solver (e.g., Levenberg‑Marquardt) and updates the contact correspondences at each iteration, allowing the system to refine both geometry and motion iteratively.
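To make the weighted sum and the per‑iteration correspondence update concrete, here is a toy sketch. It is not the solver described above: in place of a non‑linear least‑squares solver it uses an ICP‑style alternation that re‑matches contact vertices to their nearest scene points and then solves for a single translation in closed form. The names `total_loss` and `align_contacts` and the translation‑only parameterization are assumptions made for illustration.

```python
import numpy as np

def total_loss(terms, weights):
    """Weighted sum L = sum_k w_k * L_k over the named loss terms."""
    return sum(weights[k] * terms[k] for k in terms)

def align_contacts(contact_verts, scene_pts, iters=10):
    """Toy alternation: re-match correspondences, then update a translation.

    contact_verts: (C, 3) contact vertices of the human mesh
    scene_pts:     (M, 3) background scene points
    Returns the translation t that moves the contact vertices onto the scene.
    """
    t = np.zeros(3)
    for _ in range(iters):
        moved = contact_verts + t
        # Step 1: update correspondences with the current estimate.
        d = np.linalg.norm(moved[:, None, :] - scene_pts[None, :, :], axis=-1)
        matched = scene_pts[d.argmin(axis=1)]
        # Step 2: closed-form least-squares translation for fixed matches.
        t = (matched - contact_verts).mean(axis=0)
    return t
```

In the full problem the update step would act on all camera, scene, and human variables at once, with the matches refreshed in the same way between steps.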

Extensive experiments evaluate JOSH with different initializers. When initialized with VIMO or MASt3R, JOSH reduces the mean per‑joint position error (MPJPE) by more than 15 % relative to the previous state of the art, and it also improves the Chamfer distance for scene reconstruction. Ablation studies indicate that both contact losses matter: removing L_c1 degrades foot‑ground alignment, while removing L_c2 leads to noticeable foot sliding.
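For reference, the two metrics named above can be computed as follows. This is a minimal sketch with hypothetical function names; the summary does not say which Chamfer variant (squared or unsquared, summed or averaged) or which alignment protocol the paper uses, so the symmetric unsquared form below is an assumption.

```python
import numpy as np

def mpjpe(pred, gt):
    """Mean per-joint position error.

    pred, gt: (T, J, 3) joint positions over T frames and J joints,
    in the same units and coordinate frame.
    """
    return float(np.linalg.norm(pred - gt, axis=-1).mean())

def chamfer_distance(a, b):
    """Symmetric Chamfer distance between point sets a (N, 3) and b (M, 3).

    Sums the mean nearest-neighbor distance from a to b and from b to a.
    """
    d = np.linalg.norm(a[:, None, :] - b[None, :, :], axis=-1)  # (N, M)
    return float(d.min(axis=1).mean() + d.min(axis=0).mean())
```

Both functions assume the prediction and ground truth are already expressed in a common frame; any alignment step applied before evaluation is outside this sketch.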

Beyond the optimizer, the authors introduce JOSH3R, a lightweight end‑to‑end network that learns to predict the same outputs directly from video frames. JOSH3R is trained solely on pseudo‑labels generated by JOSH (camera poses, global SMPL trajectories, dense point clouds). It incorporates a “human trajectory head” that predicts relative SE(3) transforms between consecutive frames, enabling real‑time inference. Although it is trained only on these pseudo‑labels rather than ground‑truth annotations, JOSH3R outperforms other optimization‑free baselines and even rivals some methods that use ground‑truth motion capture data. This suggests that JOSH can serve as a scalable labeling engine for massive web video collections.
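Relative SE(3) predictions between consecutive frames become a global trajectory by chaining them. The sketch below shows that composition with 4×4 homogeneous matrices; the helper names and the convention that each relative transform is expressed in the previous frame (so the update is a right‑multiplication) are assumptions, since the summary does not state the convention JOSH3R uses.

```python
import numpy as np

def se3(R, t):
    """Build a 4x4 homogeneous transform from rotation R (3, 3) and translation t (3,)."""
    T = np.eye(4)
    T[:3, :3] = R
    T[:3, 3] = t
    return T

def accumulate_trajectory(rel_transforms, T0=None):
    """Chain relative transforms into global poses.

    rel_transforms: iterable of (4, 4) transforms from frame t to frame t+1,
                    each expressed in the coordinate frame of frame t.
    T0:             optional (4, 4) pose of the first frame (identity if None).
    Returns an array of shape (len(rel_transforms) + 1, 4, 4).
    """
    T = np.eye(4) if T0 is None else np.array(T0, dtype=float)
    poses = [T]
    for T_rel in rel_transforms:
        T = T @ T_rel          # compose in the local frame of the previous pose
        poses.append(T)
    return np.stack(poses)
```

Because errors in the relative predictions accumulate along the chain, drift over long sequences is the usual trade‑off of this kind of parameterization.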

In summary, the paper makes three key contributions: (1) a novel formulation that treats human‑scene contact as a strong geometric constraint, (2) a joint optimization that couples camera, human, and scene variables in a single stage, and (3) a pipeline that converts the high‑quality reconstructions into training data for fast, end‑to‑end models. The approach opens the door to large‑scale, in‑the‑wild 4‑D reconstruction, with potential extensions to multi‑person interactions, dynamic environments, and real‑time AR/VR or robotics applications.
