IV-tuning: Parameter-Efficient Transfer Learning for Infrared-Visible Tasks

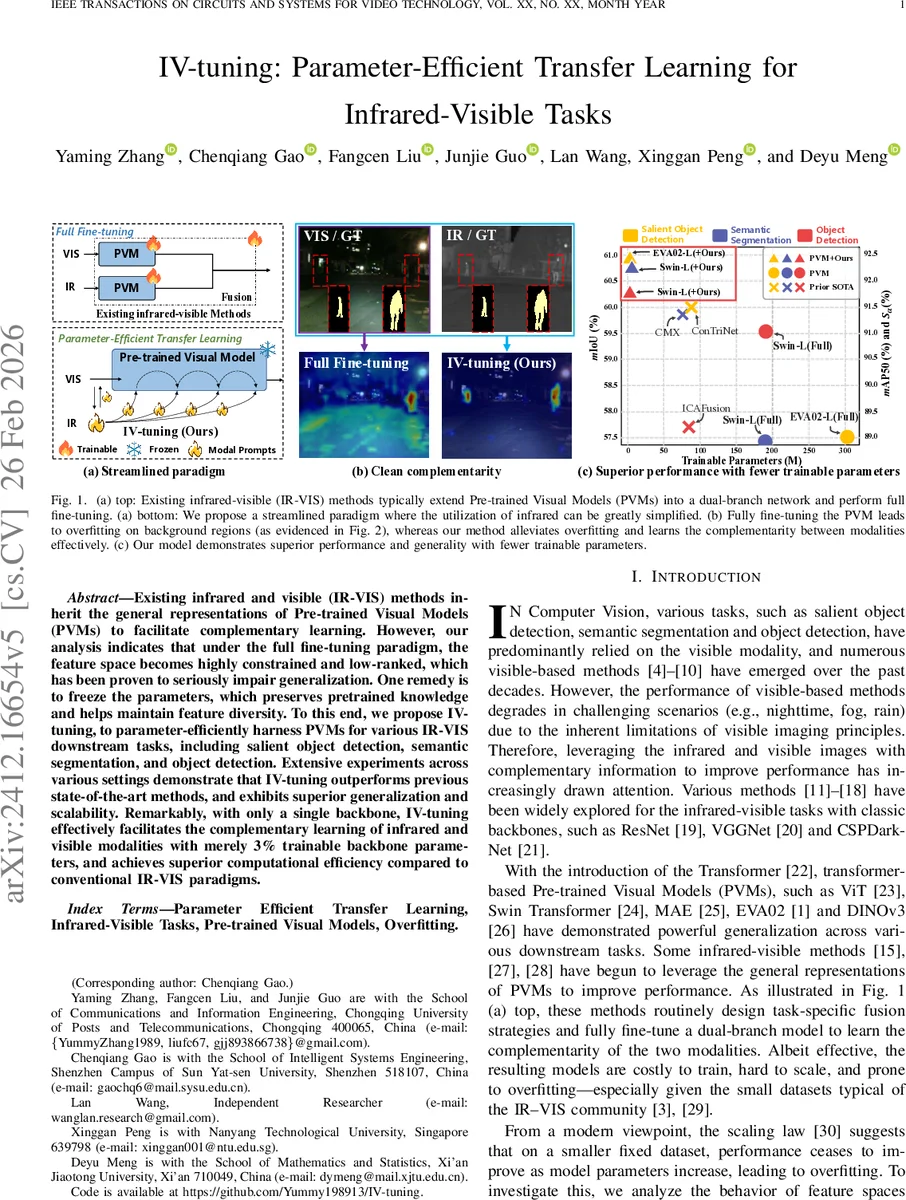

Existing infrared and visible (IR-VIS) methods inherit the general representations of Pre-trained Visual Models (PVMs) to facilitate complementary learning. However, our analysis indicates that under the full fine-tuning paradigm, the feature space becomes highly constrained and low-ranked, which has been proven to seriously impair generalization. One remedy is to freeze the parameters, which preserves pretrained knowledge and helps maintain feature diversity. To this end, we propose IV-tuning, to parameter-efficiently harness PVMs for various IR-VIS downstream tasks, including salient object detection, semantic segmentation, and object detection. Extensive experiments across various settings demonstrate that IV-tuning outperforms previous state-of-the-art methods, and exhibits superior generalization and scalability. Remarkably, with only a single backbone, IV-tuning effectively facilitates the complementary learning of infrared and visible modalities with merely 3% trainable backbone parameters, and achieves superior computational efficiency compared to conventional IR-VIS paradigms.

💡 Research Summary

The paper addresses the problem of integrating infrared (IR) and visible (VIS) imagery for tasks such as salient object detection, semantic segmentation, and object detection. Existing IR‑VIS approaches typically adopt a dual‑branch architecture that either fuses the two modalities at the image level before feeding a single backbone, or processes each modality with separate backbones and merges features at intermediate layers. While effective, these methods rely heavily on full fine‑tuning of large pre‑trained visual models (PVMs) such as ViT, Swin‑Transformer, or EVA‑02. The authors demonstrate that full fine‑tuning drives the feature space of deep layers into a highly constrained, low‑rank subspace, as shown by Principal Component Analysis (PCA). This compression leads to over‑fitting, especially on the small datasets typical of IR‑VIS research, and degrades generalization.

Freezing the backbone preserves the rich, diverse representations learned from massive VIS datasets, but alone it fails to extract task‑specific discriminative cues. Parameter‑Efficient Transfer Learning (PETL) offers a middle ground by inserting lightweight adapters or prompt modules while keeping the backbone frozen. However, prior PETL applications to IR‑VIS have not fully considered the intrinsic differences between the modalities.

The authors conduct a frequency‑domain analysis and find that IR images contain dominant low‑frequency components (thermal structures), whereas VIS images carry more high‑frequency texture. Conventional 3×3 convolutions tend to suppress low‑frequency signals, whereas a global linear projection naturally preserves them. This insight motivates the design of a modality‑aware prompt (MP) that applies a linear projection separately to IR and VIS inputs, generating modality‑specific prompts that retain the essential low‑frequency IR information.

IV‑tuning consists of two key components: (1) Modality‑aware Prompter (MP) blocks and (2) a two‑stage fusion strategy. The MP‑α block creates an initial prompt P₀ by linearly projecting each modality. This prompt is fed into a cascade of MP‑β blocks placed after each transformer layer. MP‑β updates the prompt based on the layer’s output, allowing the system to adapt the prompt as the feature space evolves from low‑rank (early layers) to high‑dimensional (deep layers). Two fusion mechanisms are defined: α‑fusion, which shares the same prompt across low‑rank layers, and β‑fusion, which performs layer‑wise prompt updates for high‑dimensional layers. This design respects the observed “phase transition” in feature rank across depth.

Implementation-wise, the backbone (e.g., EVA‑02‑L or Swin‑Transformer) is completely frozen; only the MP modules (constituting less than 3 % of total parameters) are trainable. The authors evaluate IV‑tuning on five public datasets covering the three tasks, comparing against full fine‑tuning, conventional dual‑branch methods, and recent PETL baselines. Results show consistent improvements of 2–4 % in mIoU, F‑measure, or AP, while reducing FLOPs and memory usage by over 30 %. Notably, the linear‑projection‑based prompts preserve IR low‑frequency cues, leading to robust performance under challenging lighting conditions (night, fog).

In summary, IV‑tuning offers a principled solution to the over‑fitting problem of full fine‑tuning large PVMs on small IR‑VIS datasets, while efficiently leveraging the pretrained knowledge. By freezing the backbone, introducing modality‑aware linear prompts, and employing a depth‑aware fusion scheme, the method achieves state‑of‑the‑art results with a fraction of trainable parameters and computational cost. This work provides a scalable blueprint for extending large vision foundation models to multimodal sensor fusion tasks.

Comments & Academic Discussion

Loading comments...

Leave a Comment