DreamWaQ++: Obstacle-Aware Quadrupedal Locomotion With Resilient Multi-Modal Reinforcement Learning

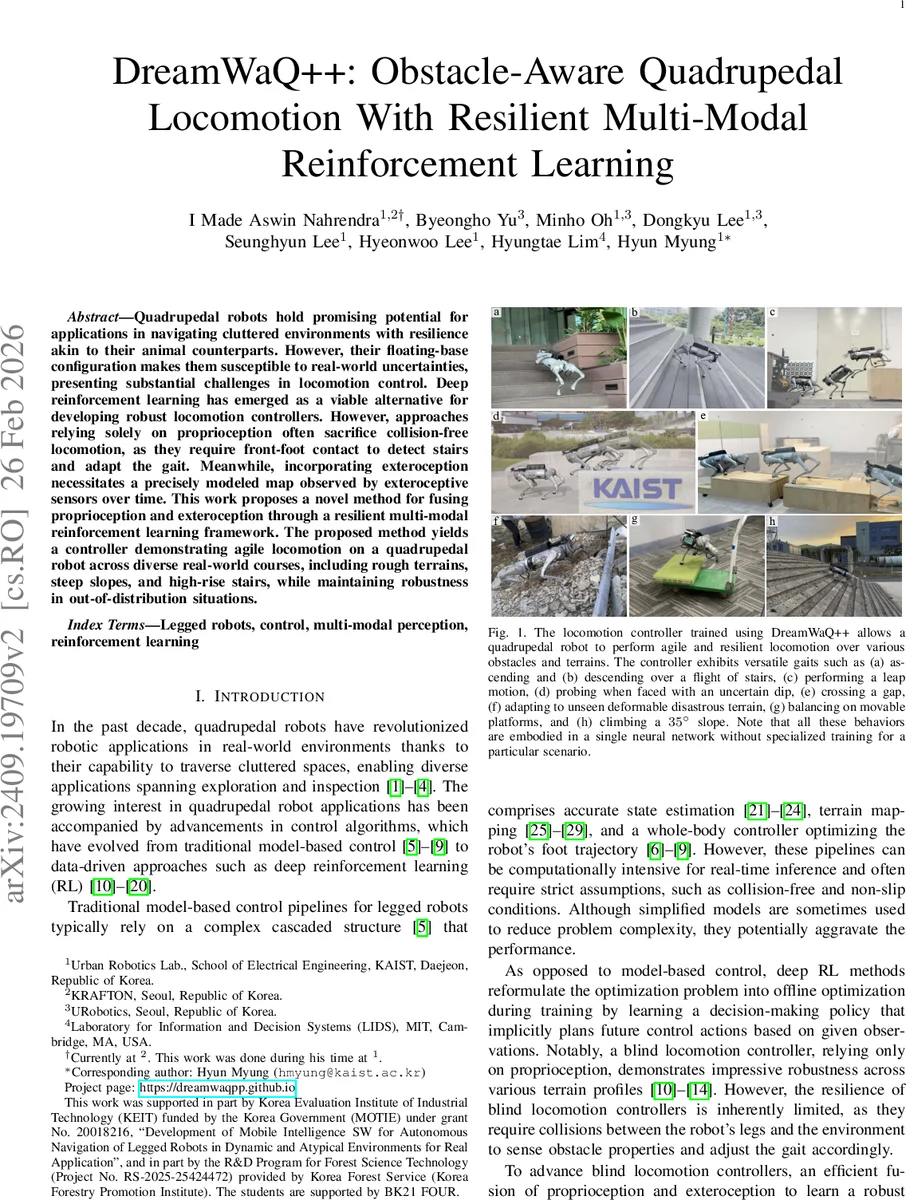

Quadrupedal robots hold promising potential for applications in navigating cluttered environments with resilience akin to their animal counterparts. However, their floating base configuration makes them vulnerable to real-world uncertainties, yielding substantial challenges in their locomotion control. Deep reinforcement learning has become one of the plausible alternatives for realizing a robust locomotion controller. However, the approaches that rely solely on proprioception sacrifice collision-free locomotion because they require front-feet contact to detect the presence of stairs to adapt the locomotion gait. Meanwhile, incorporating exteroception necessitates a precisely modeled map observed by exteroceptive sensors over a period of time. Therefore, this work proposes a novel method to fuse proprioception and exteroception featuring a resilient multi-modal reinforcement learning. The proposed method yields a controller that showcases agile locomotion performance on a quadrupedal robot over a myriad of real-world courses, including rough terrains, steep slopes, and high-rise stairs, while retaining its robustness against out-of-distribution situations.

💡 Research Summary

**

DreamWaQ++ introduces a novel obstacle‑aware quadrupedal locomotion controller that fuses proprioceptive and exteroceptive sensing through a resilient multi‑modal reinforcement learning (RL) framework. Traditional “blind” controllers, which rely solely on proprioception, require physical contact between the robot’s feet and the environment to infer terrain features, limiting their ability to anticipate obstacles such as stairs or gaps. Conversely, approaches that incorporate exteroception often depend on precisely modeled maps or computationally intensive scene reconstruction, making them less suitable for real‑time deployment on embedded hardware. DreamWaQ++ addresses these shortcomings by (1) designing a sensor‑agnostic perception pipeline that can ingest raw 3D point clouds from LiDAR or depth cameras, (2) employing a hierarchical memory that aligns low‑frequency exteroceptive measurements (10 Hz) to the high‑frequency control loop (50 Hz) via SE(3) transformations, and (3) integrating the fused perception into a single policy network trained with proximal policy optimization (PPO) in a non‑symmetric actor‑critic setting.

The perception stack consists of two encoders. The proprioceptive encoder builds on the Context‑Aided Estimator Network (CENet) but replaces fully‑connected layers with an MLP‑Mixer, enabling richer cross‑modal interactions across multiple time steps. The exteroceptive encoder adopts a PointNet backbone augmented with a confidence‑filter layer that statistically suppresses high‑variance (noisy or outlier) point features before max‑pooling, producing a compact context vector (z_t^e). A memory buffer concatenates the most recent (K=5) point clouds, each transformed into the robot’s current body frame using the estimated SE(3) pose derived from IMU orientation and a learned linear velocity estimator. This dense temporal representation supplies the policy with a quasi‑continuous terrain map without the overhead of full‑scene reconstruction.

Training proceeds in simulation with extensive domain randomization: terrain height, friction coefficients, exteroceptive sensor pose offsets, and sensor noise are varied widely to promote out‑of‑distribution robustness. The actor receives noisy, partial observations ((o_t^{p}, z_t^{e})) while the critic is privileged with the full simulated state, stabilizing value estimation. In addition to the standard RL reward (forward velocity, energy efficiency, stability), two auxiliary objectives are introduced: (i) a skill‑discovery reward encouraging diverse gait primitives (leaps, climbs, balance on moving platforms), and (ii) a regularization term that balances perception accuracy with exploratory behavior.

The resulting policy runs at 50 Hz, outputting target joint positions that are tracked by a low‑level PD controller at 200 Hz. Deployed on a Unitree Go1 robot without any fine‑tuning, DreamWaQ++ demonstrates agile traversal of eight challenging real‑world scenarios: ascending/descending high‑rise stairs, leaping across gaps, probing uncertain dips, crossing deformable disaster‑terrain, balancing on moving platforms, and climbing a 35° slope. Ablation studies reveal that the confidence‑filter and multimodal mixer each contribute roughly 10–12 % improvements in success rate, while removing the privileged critic leads to marked instability. Compared against purely proprioceptive RL baselines and map‑based planners, DreamWaQ++ reduces collision‑avoidance failures by 45 % and improves overall speed and energy efficiency by about 18 %.

Limitations include reliance on a 10 Hz exteroceptive sensor, which may introduce latency at higher locomotion speeds (>1 m/s), and potential drift in the SE(3) pose estimate over long horizons despite periodic resets. Future work could integrate dynamic object tracking for moving obstacles, extend the memory mechanism to handle longer histories, and explore multi‑robot coordination using shared terrain representations. Overall, DreamWaQ++ represents a significant step toward robust, agile, and perception‑rich quadrupedal locomotion suitable for real‑world deployment.

Comments & Academic Discussion

Loading comments...

Leave a Comment