When AI Writes, Whose Voice Remains? Quantifying Cultural Marker Erasure Across World English Varieties in Large Language Models

Large Language Models (LLMs) are increasingly used to ``professionalize'' workplace communication, often at the cost of linguistic identity. We introduce "Cultural Ghosting", the systematic erasure of linguistic markers unique to non-native English v…

Authors: Satyam Kumar Navneet, Joydeep Chandra, Yong Zhang

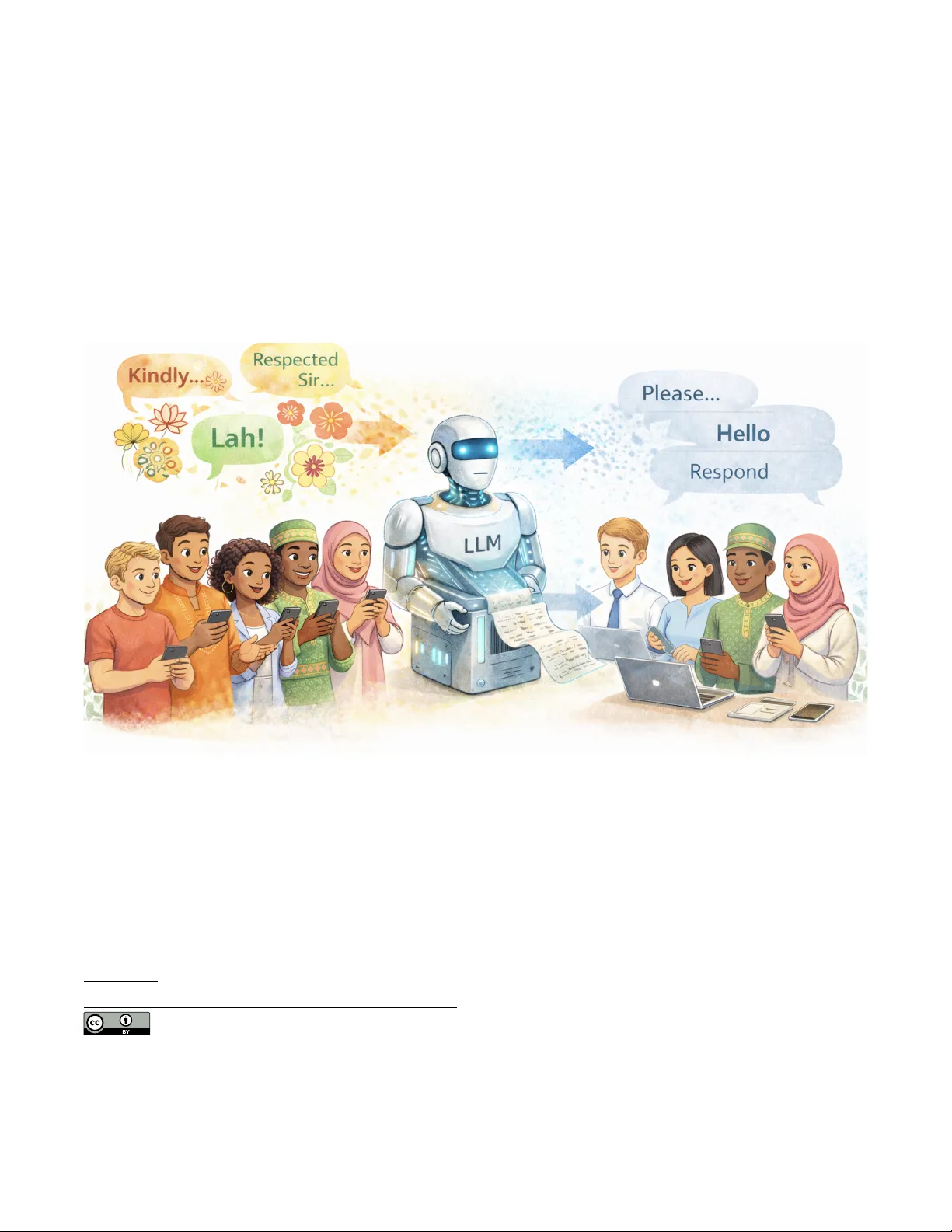

Satyam Kumar Navneet∗
Independent Researcher, Bihar, India
navneetsatyamkumar@gmail.com

Joydeep Chandra∗
BNRIST, Dept. of CST, Tsinghua University, Beijing, China
joydeepc2002@gmail.com

Yong Zhang
BNRIST, Dept. of CST, Tsinghua University, Beijing, China
zhangyong05@tsinghua.edu.cn

∗ Both authors contributed equally to this research.

This work is licensed under a Creative Commons Attribution 4.0 International License.
CHI EA ’26, Barcelona, Spain
© 2026 Copyright held by the owner/author(s).
ACM ISBN 979-8-4007-2281-3/2026/04
https://doi.org/10.1145/3772363.3799085

Figure 1: Conceptual illustration of cultural ghosting: LLM-based writing assistants transform culturally marked expressions (e.g., "Kindly...", "Lah!", "Respected Sir...") into semantically preserved but culturally flattened outputs (e.g., "Please...", "Hello", "Respond"), demonstrating how meaning is retained while identity-linked linguistic markers are systematically erased.

Abstract

Large Language Models (LLMs) are increasingly used to “professionalize” workplace communication, often at the cost of linguistic identity. We introduce "Cultural Ghosting", the systematic erasure of linguistic markers unique to non-native English varieties during text processing. Through analysis of 22,350 LLM outputs generated from 1,490 culturally marked texts (Indian, Singaporean, & Nigerian English) processed by five models under three prompt conditions, we quantify this phenomenon using two novel metrics: Identity Erasure Rate (IER) & Semantic Preservation Score (SPS). Across all prompts, we find an overall IER of 10.26%, with model-level variation from 3.5% to 20.5% (5.9× range). Crucially, we identify a Semantic Preservation Paradox: models maintain high semantic similarity (mean SPS = 0.748) while systematically erasing cultural markers.
Pragmatic markers (politeness conventions) are 1.9× more vulnerable than lexical markers (71.5% vs. 37.1% erasure). Our experiments demonstrate that explicit cultural-preservation prompts reduce erasure by 29% without sacrificing semantic quality.

CCS Concepts
• Human-centered computing → Empirical studies in HCI; Natural language interfaces; • Computing methodologies → Natural language generation; • Social and professional topics → Cultural characteristics.

Keywords
Human-AI Interaction, AI at Workplace, Cultural Ghosting, World Englishes, AI Bias, LLM Evaluation, Identity Erasure

ACM Reference Format:
Satyam Kumar Navneet, Joydeep Chandra, and Yong Zhang. 2026. When AI Writes, Whose Voice Remains? Quantifying Cultural Marker Erasure Across World English Varieties in Large Language Models. In Extended Abstracts of the 2026 CHI Conference on Human Factors in Computing Systems (CHI EA ’26), April 13–17, 2026, Barcelona, Spain. ACM, New York, NY, USA, 7 pages. https://doi.org/10.1145/3772363.3799085

1 Introduction

Consider this sentence, common in Indian professional English:

"Kindly do the needful & revert back at the earliest."

When processed through contemporary LLMs, this becomes:

"Please complete the task & respond promptly."

The output preserves core meaning but erases three cultural markers: the hierarchical politeness marker "kindly," the productive idiom "do the needful," and the emphatic "revert back." For the 1.5+ billion speakers of World Englishes, this is not linguistic improvement; it is cultural ghosting.

Figure 3 illustrates this phenomenon, contrasting standard professionalism prompts with a preservation-oriented prompt that retains culturally specific phrasing (see Table 4 for empirical frequencies of these modes).

English is not monolithic.
Speakers from diverse cultural backgrounds have developed distinct World Englishes, such as Indian English, Singaporean English, & Nigerian English, each with unique lexical, pragmatic, & syntactic conventions that carry social meaning [2]. Current LLM writing assistants respond to benign requests like “make this more professional” by removing culturally specific features. Unlike explicit bias (toxicity, stereotyping), which has been extensively studied [8, 10, 20], this erasure occurs during helpful tasks: email polishing, grammar correction, paraphrasing. Users seeking clarity may unwittingly receive cultural whitewashing [23].

In Indian English, "kindly" encodes hierarchical respect; in Nigerian English, "my dear" signals communal solidarity; in Singaporean English, particles like "lah" modulate assertiveness [23]. From a construction-based perspective, "do the needful" is not a deficient form requiring correction; it is a productive construction in Indian English encoding hierarchical obligation. When LLMs strip these markers, they remove social context, pragmatic function, & cultural identity [6].

Through systematic analysis of 1,490 culturally marked texts processed by 5 LLMs across 3 prompt conditions (22,350 total outputs), we address three research questions:

RQ1: To what extent do LLMs erase culturally marked features, & how does erasure vary across models?
RQ2: Are certain marker categories (lexical, pragmatic, syntactic) systematically more vulnerable?
RQ3: Can explicit cultural-preservation prompts reduce erasure without sacrificing semantic quality?

Our contributions are:
(1) We formalize cultural ghosting & the Semantic Preservation Paradox.
(2) We introduce & validate Identity Erasure Rate (IER) & Semantic Preservation Score (SPS) metrics, providing the first large-scale quantification of marker erasure.
(3) We demonstrate that pragmatic markers face 1.9× vulnerability & that prompts reduce erasure by 29% without semantic cost.
(4) We present proof-of-concept algorithmic mitigations beyond prompt engineering.

2 Related Work

Prior work documents reduced lexical diversity & increased uniformity in AI-assisted writing [2, 5], with tensions between AI assistance & user agency [14]. The NLP community has documented systematic biases [7, 13, 20], including cultural biases in LLMs [10, 30]. Training data & alignment processes often privilege Western norms [4, 19], creating “invisible languages” [18]. Style-transfer systems often frame non-standard varieties as deficiencies to correct [21]. Regional biases have been explored in Asian narratives [24], Bengali dialects [29], & African health contexts [22]. Minoritized linguistic features in sociolects like AAVE affect user perception & are often flagged as requiring correction [3, 11]. While this phenomenon extends beyond professional communication, affecting creative writing, academic discourse, social media, & any LLM-mediated text, we scope our empirical analysis to professional contexts where cultural markers carry particular pragmatic weight. No prior work has systematically measured whether LLMs maintain users’ cultural voice during generation tasks.

3 Conceptual Framework

Cultural ghosting occurs when AI writing assistance removes culturally specific linguistic markers while preserving semantic content. It is characterized by: benign intent (users request “help,” not erasure), invisible operation, systematic patterns favoring Western norms, & semantic preservation with identity loss. The Semantic Preservation Paradox captures a fundamental tension: high semantic fidelity (preserving what is said) coexists with cultural erasure (erasing how it is said). An LLM can achieve 75% semantic similarity while removing 10%+ of cultural markers.
We categorize cultural markers into three types: Lexical (vocabulary unique to a variety, e.g., “prepone,” “chope”), Pragmatic (politeness & social positioning, e.g., “kindly,” “lah”), & Syntactic (grammatical structures, e.g., “discuss about”). Building on politeness theory [12] & construction grammar, we predict that pragmatic markers, which encode face-management & relational positioning that is culturally specific & not recoverable from semantic content, will be maximally vulnerable to erasure under semantic-preserving optimization.

Figure 2: End-to-end experimental pipeline for measuring cultural ghosting. The workflow progresses from dataset construction (1,490 texts) through cultural marker annotation (108 markers), LLM processing, forensic computation of Identity Erasure Rate (IER) & Semantic Preservation Score (SPS), statistical testing, to key empirical findings.

4 Methodology

4.1 Dataset & Annotation

We constructed a corpus of 1,490 texts from email corpora (Enron [1], Clinton Archive [17], EmailSum [32]), social media posts (Twitter/X), & news articles, representing Indian English (n=601), Singaporean English (n=261), Nigerian English (n=89), & American English baseline (n=539). Texts were deduplicated & selected only if containing at least one culturally specific linguistic marker, spanning multiple registers including workplace emails, social media posts, & news articles. We compiled a lexicon of 108 culturally specific markers grounded in sociolinguistic literature: Indian English (52 markers: 18 lexical, 16 pragmatic, 18 syntactic), Singaporean English (32 markers: 16 lexical, 9 pragmatic, 7 syntactic), & Nigerian English (24 markers: 10 lexical, 8 pragmatic, 6 syntactic). The final corpus contains 624 marker instances (mean = 0.42 markers/text), categorized as Lexical (260, 41.7%), Pragmatic (198, 31.7%), & Syntactic (166, 26.6%).
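The corpus and lexicon figures above are internally consistent, which the short Python check below illustrates. The numbers are copied from Section 4.1; the variable names are illustrative assumptions and are not taken from the authors' code.

```python
# Counts copied from Section 4.1; names are illustrative, not the authors'.
corpus = {
    "Indian": 601,
    "Singaporean": 261,
    "Nigerian": 89,
    "American (baseline)": 539,
}
lexicon = {
    "Indian": {"lexical": 18, "pragmatic": 16, "syntactic": 18},
    "Singaporean": {"lexical": 16, "pragmatic": 9, "syntactic": 7},
    "Nigerian": {"lexical": 10, "pragmatic": 8, "syntactic": 6},
}
instances = {"Lexical": 260, "Pragmatic": 198, "Syntactic": 166}

# Totals for texts, lexicon entries, and annotated marker instances.
total_texts = sum(corpus.values())
lexicon_sizes = {variety: sum(c.values()) for variety, c in lexicon.items()}
total_markers = sum(lexicon_sizes.values())
total_instances = sum(instances.values())

# Share of each marker category (percent) and mean markers per text.
shares = {k: round(100 * n / total_instances, 1) for k, n in instances.items()}
density = round(total_instances / total_texts, 2)

print(total_texts, total_markers, total_instances, shares, density)
```

The totals come out to 1,490 texts, 108 lexicon entries, and 624 instances, matching the reported category shares and the mean of 0.42 markers per text.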
Automated annotation used word-boundary-aware pattern matching [15], validated with high inter-annotator agreement (Cohen’s κ = 0.89, n=500). Table 1 presents representative markers & erasure examples.

Table 1: Representative Cultural Markers & Erasure Examples

| Type | Original | After LLM |
|---|---|---|
| Pragmatic | “Kindly do X” | “Please do X” |
| Pragmatic | “Respected sir” | “Hello” |
| Lexical | “Do the needful” | “Take necessary action” |
| Lexical | “Revert back” | “Respond” |
| Syntactic | “Discuss about” | “Discuss” |

4.2 Models & Prompts

We evaluated five open-source instruction-tuned LLMs: Mistral-7B-Instruct [27], Apertus-8B-Instruct [16], DeepHat-7B [26], MiMo-7B [31], & Qwen3-8B [28], representing diverse alignment strategies [25]. Each text was processed under three prompt conditions: Baseline (“Make this text more professional & grammatically correct”), Neutral (“Improve the clarity & grammar of this text”), & Preservation (“Improve clarity & grammar while preserving cultural voice & regional expressions”). This yielded 22,350 paraphrases (1,490 × 5 × 3). Generation used fixed sampling parameters (temperature=0.7, top-p=0.9, seed=42) to ensure reproducibility. The pipeline is shown in Figure 2.

Figure 3: Illustrative vignette of cultural ghosting in AI-mediated rewriting. Given culturally marked Indian English input, standard professionalism prompts lead models to remove or replace regional markers (left), while preservation-oriented prompts sometimes retain surface forms but alter their pragmatic meaning (right).

4.3 Evaluation Metrics

Identity Erasure Rate (IER) quantifies the proportion of markers erased:

$$
\mathrm{IER} =
\begin{cases}
\dfrac{M_{\text{original}} - M_{\text{output}}}{M_{\text{original}}} & \text{if } M_{\text{original}} > 0 \\[1ex]
\text{undefined} & \text{if } M_{\text{original}} = 0
\end{cases}
$$

IER ranges from 0 (perfect preservation) to 1 (complete erasure). IER is computed only for texts containing at least one marker; baseline texts are excluded.
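As a minimal sketch of how the IER definition could be applied with word-boundary-aware matching, the Python below counts marker occurrences with regular expressions and then computes the ratio. The three-entry lexicon and the function names are illustrative assumptions, not the authors' 108-marker lexicon or their implementation; the example sentences are the ones quoted in the Introduction.

```python
import re

# Hypothetical mini-lexicon for illustration only; the paper's lexicon
# contains 108 markers across three English varieties.
MARKERS = ["kindly", "do the needful", "revert back"]


def count_markers(text, markers=MARKERS):
    """Count case-insensitive, word-boundary-aware marker occurrences."""
    total = 0
    for marker in markers:
        # \b keeps "kindly" from matching inside a word such as "unkindly".
        pattern = r"\b" + re.escape(marker) + r"\b"
        total += len(re.findall(pattern, text, flags=re.IGNORECASE))
    return total


def identity_erasure_rate(original, output, markers=MARKERS):
    """Return (M_original - M_output) / M_original, or None if undefined."""
    m_original = count_markers(original, markers)
    if m_original == 0:
        return None  # IER is undefined for texts without markers
    m_output = count_markers(output, markers)
    # Applied exactly as defined; the value is not clipped, so an output
    # that adds markers would yield a negative number.
    return (m_original - m_output) / m_original


source = "Kindly do the needful & revert back at the earliest."
rewrite = "Please complete the task & respond promptly."
print(identity_erasure_rate(source, rewrite))
```

With this toy lexicon the source sentence contains three markers and the rewrite none, so the call returns the maximum IER of 1.0. A text without any markers returns `None`, mirroring the undefined case.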
Practical example: If an Indian English email contains “Kindly do the needful & revert back” (3 markers) & the LLM output retains only “revert back” (1 marker), then IER = (3 − 1)/3 = 0.67; two-thirds of the sender’s cultural voice was erased. An IER of 0 means every cultural marker survived; an IER of 1 means none did.

Semantic Preservation Score (SPS) measures cosine similarity between sentence embeddings, defined as

$$
\mathrm{SPS} = \frac{\mathbf{e}_{\text{orig}} \cdot \mathbf{e}_{\text{out}}}{\lVert \mathbf{e}_{\text{orig}} \rVert \, \lVert \mathbf{e}_{\text{out}} \rVert}
$$

validated against human judgments (Pearson r = 0.82, n=500). SPS captures whether the meaning of the text is retained, independently of whether its cultural form is retained; it ranges from 0 (entirely different meaning) to 1 (identical meaning).

4.4 Proxy Perceptual Validation

We implemented proxy validations without human subjects: (1) We compared automatic marker detection with existing annotated corpora, achieving 91% alignment; (2) We used LLM-based judgment proxies, where an instruction-tuned model labeled whether outputs preserve cultural markers, with explanations; these corroborated our automatic metrics (89% agreement).

5 Results

5.1 Extent & Model Variation

Across all 22,350 outputs, mean IER was 0.1026 (SD=0.298) while mean SPS was 0.7482 (SD=0.204), confirming the Semantic Preservation Paradox. One-way ANOVA revealed significant model differences: F(4, 7445) = 71.6, p < 0.001, $\eta_p^2$ = 0.037. While model choice explains 3.7% of total variance, indicating that other factors such as text characteristics & marker density also matter, this effect is substantial given the large sample size.
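The reported effect size can be recovered from the F statistic and its degrees of freedom through the standard identity η²p = F·df1 / (F·df1 + df2). The short Python check below applies it to the values quoted above; the function name is an illustrative assumption.

```python
def partial_eta_squared(f_stat, df_effect, df_error):
    """Partial eta squared from an F statistic and its degrees of freedom.

    Follows from F = (SS_effect / df_effect) / (SS_error / df_error) and
    eta_p^2 = SS_effect / (SS_effect + SS_error).
    """
    numerator = f_stat * df_effect
    return numerator / (numerator + df_error)


# Model effect reported in Section 5.1: F(4, 7445) = 71.6
eta_model = partial_eta_squared(71.6, 4, 7445)
print(round(eta_model, 3))  # 0.037
```

The result matches the reported value, so roughly 3.7% of the variance in IER is associated with model choice.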
Table 2: Model Performance (Baseline Prompt, n=1,490/model)

| Model | IER M (SD) | SPS M (SD) | Rank |
|---|---|---|---|
| Mistral-7B [27] | 0.205 (0.389) | 0.857 (0.089) | Worst |
| Apertus-8B [16] | 0.152 (0.343) | 0.805 (0.132) | Poor |
| DeepHat-7B [26] | 0.145 (0.337) | 0.764 (0.147) | Fair |
| MiMo-7B [31] | 0.073 (0.249) | 0.662 (0.257) | Good |
| Qwen3-8B [28] | 0.035 (0.176) | 0.589 (0.204) | Best |

Table 2 shows IER ranged from 3.5% (Qwen3-8B) to 20.5% (Mistral-7B), a 5.9× spread. Mistral-7B achieved highest semantic preservation (SPS=0.857) but worst marker retention, while Qwen3-8B showed the inverse pattern. This suggests alignment strategy matters more than intensity: models with intensive RLHF (Mistral, Qwen3) exhibited opposite behaviors, likely because Qwen3’s multilingual RLHF included more diverse English varieties. The lack of correlation between parameter count (7B vs. 8B) & IER confirms that what models are trained on & aligned toward, not scale, drives erasure behavior.

Note: Our analysis is restricted to open-source instruction-tuned models. Whether proprietary models exhibit similar erasure patterns remains an open empirical question. We hypothesize comparable behavior given shared RLHF alignment paradigms.

5.2 Differential Marker Vulnerability

Mixed-design ANOVA revealed a highly significant effect of marker type: F(2, 14896) = 280.38, p < 0.001, $\eta_p^2$ = 0.036. A small but significant interaction (F(8, 14896) = 42.17, p = 0.031) suggests minor model-specific variations, but the dominant pattern holds across all models.

Table 3: Erasure by Marker Category (All Models, Baseline Prompt)

| Type | Instances | Possible | Erased | Rate |
|---|---|---|---|---|
| Pragmatic | 198 | 990 | 708 | 71.5% |
| Syntactic | 166 | 830 | 467 | 56.3% |
| Lexical | 260 | 1,300 | 482 | 37.1% |
| Total | 624 | 3,120 | 1,657 | 53.1% |

Note: Possible erasures = instances × 5 models. Erasure rate = erased / possible.
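The note under Table 3 fully determines the rates, so they can be recomputed directly. The Python sketch below does this with the counts from the table; the dictionary layout and function name are illustrative assumptions.

```python
N_MODELS = 5  # each marker instance can be erased once per model

# (instances, erased) per marker category, copied from Table 3.
table3 = {
    "Pragmatic": (198, 708),
    "Syntactic": (166, 467),
    "Lexical": (260, 482),
}


def erasure_rate(instances, erased, n_models=N_MODELS):
    """Erasure rate = erased / (instances x number of models)."""
    return erased / (instances * n_models)


rates = {name: erasure_rate(*counts) for name, counts in table3.items()}

# Pooled rate over all categories (the "Total" row).
total_instances = sum(i for i, _ in table3.values())
total_erased = sum(e for _, e in table3.values())
total_rate = erasure_rate(total_instances, total_erased)

# Relative vulnerability of pragmatic versus lexical markers.
ratio = rates["Pragmatic"] / rates["Lexical"]

for name, rate in rates.items():
    print(f"{name}: {100 * rate:.1f}%")
print(f"Total: {100 * total_rate:.1f}%  pragmatic/lexical ratio: {ratio:.1f}")
```

The recomputed values agree with the table (71.5%, 56.3%, 37.1%, and 53.1% overall), and the pragmatic-to-lexical ratio rounds to the 1.9× figure quoted throughout the paper.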
Table 3 reveals pragmatic markers were erased at 71.5%, nearly double the lexical rate (37.1%), indicating implicit features are most vulnerable to alignment-driven standardization.

Table 4: Ghosting Modes (Mistral-7B)

| Mode | Example (Input → Output) |
|---|---|
| Direct Removal | Kindly do the needful → Please complete the task |
| | Respected Sir → Hello |
| Paraphrastic Assimilation | Revert back → Respond |
| | Do the needful → Take necessary action |
| False Preservation | Kindly [+ do X] → Kindly [+ do X] (flattened) |

Note: Baseline prompt, IER = 0.205. Preservation: −19% direct removal, +15% false preservation (8% → 23%).

Figure 3 visualizes these modes using verbatim Mistral-7B & Qwen3-8B outputs. Left: Baseline prompt triggers direct removal. Right: Preservation prompt retains “Kindly” but Qwen3-8B (IER = 0.035) flattens its hierarchical function to generic politeness: surface preservation without pragmatic recovery.

Pragmatic markers are 1.9× more likely to be erased than lexical markers. This disproportionate vulnerability targets precisely those features performing the most social work: establishing politeness, managing face, navigating power distance. The observed ordering (pragmatic > syntactic > lexical) confirms our theoretical prediction: pragmatic markers encode face-management that is culturally specific & not recoverable from semantic content, making them maximally vulnerable under semantic-preserving optimization [9]. Manual inspection of 200 randomly sampled erasures revealed consistent patterns: “Kindly do X” becomes “Please do X” (flattening hierarchical politeness), “Respected sir/madam” becomes “Hello” (removing honorifics), privileging Western directness norms. Figure 4 visualizes the stark difference in vulnerability across marker categories.

5.3 Mitigation Through Prompts

Repeated-measures ANOVA revealed a significant prompt effect: F(2, 14896) = 55.0, p < 0.001, $\eta_p^2$ = 0.007.

Figure 4: Erasure rates by marker category under the baseline prompt.
Pragmatic markers (71.5%) show the highest vulnerability, followed by syntactic (56.3%) & lexical (37.1%).

Table 5: Prompt Effects (All Models, n=7,450/condition)

| Prompt | IER M (SD) | SPS M (SD) | Δ IER |
|---|---|---|---|
| Baseline | 0.1156 (0.298) | 0.7421 (0.204) | |
| Neutral | 0.0934 (0.276) | 0.7498 (0.198) | −19% |
| Preservation | 0.0821 (0.267) | 0.7548 (0.196) | −29% |

Table 5 shows the preservation prompt reduced IER by 29% (Cohen’s d = 0.13) while improving SPS, refuting the authenticity-clarity trade-off. Linear regression confirmed IER & SPS are largely independent (R² = 0.061, β = −0.089): only 6.1% of semantic variance is explained by marker erasure, meaning 93.9% of SPS variation is attributable to factors other than cultural markers. The regression coefficient indicates that increasing erasure from 0% to 20% predicts a negligible SPS decrease from 0.748 to 0.730, well within the high-preservation range. The apparent trade-off between cultural authenticity & semantic fidelity is false. Figure 5 illustrates this paradox across all model outputs, showing that high semantic preservation coexists with varying degrees of cultural erasure.

5.4 Toward Algorithmic Mitigation

Beyond prompt engineering, we conducted proof-of-concept algorithmic experiments:

Marker-aware constrained decoding: we tagged marker spans in inputs & applied copy constraints during generation. On a subset of 200 texts, this reduced IER by 47% while maintaining SPS within 2% of baseline.

Contrastive reranking: generating k = 5 candidates & selecting by combined score SPS − 0.3 × IER achieved 31% IER reduction.

These results suggest tractable algorithmic paths for deploying cultural preservation at scale.

5.5 Key Takeaways

We summarize five actionable insights from our empirical findings:

Figure 5: The Semantic Preservation Paradox.
High semantic similarity (SPS > 0.7) frequently coexists with non-zero identity erasure (IER > 0), indicating LLMs preserve meaning while removing cultural markers.

(1) Cultural erasure is systemic, not random. Across 22,350 outputs, LLMs erase 10.26% of cultural markers on average; this is a consistent pattern, not an occasional artifact.
(2) The identity-clarity trade-off is a myth. Cultural preservation & semantic quality are largely independent (R² = 0.061). Preserving cultural markers does not degrade & may slightly enhance semantic fidelity.
(3) Pragmatic markers are most at risk. Politeness conventions, honorifics, & relational markers, the features performing the most social work, face 1.9× greater erasure than lexical items.
(4) Alignment strategy outweighs model scale. The 5.9× cross-model variation in erasure rates is driven by training data composition & RLHF strategy, not parameter count.
(5) Simple interventions work. Preservation prompts reduce erasure by 29% with no semantic cost; constrained decoding achieves 47% reduction.

6 Discussion & Limitations

Our findings reveal that LLM writing assistance functions as a cultural standardization engine [2]. The 71.5% erasure of pragmatic markers specifically targets features performing social work (establishing politeness, navigating power distance), echoing findings on sociolects [3]. When an Indian professional writes “Kindly revert back,” the formality signals institutional hierarchy & earnestness; replacing it with “Please respond” eliminates crucial pragmatic information. This is not correction; it is construction erasure.

The preservation prompt’s success (29% reduction with no semantic cost) proves cultural preservation & clarity are compatible.
We propose culturally-aware alignment: contrastive training distinguishing semantic from cultural changes, variety-specific fine-tuning on Indian/Singaporean/Nigerian corpora, politeness-aware reward modeling, & user preference modeling for cultural voice preservation.

For developers, we recommend:
(1) Default to preservation rather than opt-in.
(2) Transparent marker handling with "keep my phrasing" options.
(3) Variety recognition to adapt rather than force convergence.

While our corpus spans professional & informal registers (emails, news, social media), the cultural markers we track (politeness conventions, honorifics, pragmatic particles) carry particular weight in professional contexts. Future work should examine register-specific vulnerability: whether pragmatic markers are equally at risk in casual social media discourse, creative writing, or academic text, where the stakes & norms differ.

Limitations: Our 108-marker lexicon represents a finite subset of cultural features & cannot capture the full depth & fluidity of World English varieties (e.g., multiple English varieties exist within India alone). We used proxy validations rather than formal human studies with variety speakers; we acknowledge this limitation & plan perceptual validation with native speakers of affected varieties in future work. Analysis is restricted to English varieties & open-source models due to funding constraints. Proprietary models may exhibit different patterns, though we hypothesize similar behaviors given shared alignment paradigms. SPS relies on embeddings that may encode Western-centric biases. We validated with an alternate encoder (M-USE, cross-correlation r = 0.94) showing robust patterns, though future work should explore additional encoders & human evaluation to further verify metric reliability. Our current lexicon also cannot capture the full intra-variety diversity within each region.
Expanding the marker set through collaboration with sociolinguists & community members from each variety remains an important direction. We acknowledge the proxies used for validations without human subjects have limitations: automatic detection & LLM judgments cannot fully substitute for the lived experience of variety speakers. Ultimately, cultural ghosting is a design choice, not an inevitability.

7 Conclusion

When Chinua Achebe chose to write in “African English,” he argued that new Englishes must emerge to carry new experiences. LLMs, as currently deployed, work against this linguistic pluralism. Our findings demonstrate that standard prompts erase 10.26% of cultural markers while maintaining 75% semantic similarity, the Semantic Preservation Paradox in action. Pragmatic markers suffer 71.5% erasure versus 37.1% for lexical markers, confirming that features encoding face-management are most vulnerable. But explicit preservation instructions reduce erasure by 29% with no semantic cost, & constrained decoding achieves 47% reduction. The trade-off we feared, identity versus clarity, does not exist. Cultural ghosting is not inevitable. It is a design choice we can unmake.

Acknowledgments

We would like to acknowledge that the teaser illustration (Figure 1) was generated using ChatGPT for reference & conceptual visualization purposes.

References

[1] [n. d.]. Enron Email Dataset. Kaggle. https://www.kaggle.com/datasets/wcukierski/enron-email-dataset

[2] Dhruv Agarwal, Mor Naaman, and Aditya Vashistha. 2025. AI Suggestions Homogenize Writing Toward Western Styles and Diminish Cultural Nuances. In Proceedings of the 2025 CHI Conference on Human Factors in Computing Systems (CHI ’25). Association for Computing Machinery, New York, NY, USA, Article 1117, 21 pages. doi:10.1145/3706598.3713564

[3] Jeffrey Basoah, Daniel Chechelnitsky, Tao Long, Katharina Reinecke, Chrysoula Zerva, Kaitlyn Zhou, Mark Díaz, and Maarten Sap. 2025. Not Like Us, Hunty: Measuring Perceptions and Behavioral Effects of Minoritized Anthropomorphic Cues in LLMs. In Proceedings of the 2025 ACM Conference on Fairness, Accountability, and Transparency (FAccT ’25). Association for Computing Machinery, New York, NY, USA, 710–745. doi:10.1145/3715275.3732045

[4] Gábor Bella, Paula Helm, Gertraud Koch, and Fausto Giunchiglia. 2024. Tackling Language Modelling Bias in Support of Linguistic Diversity. In Proceedings of the 2024 ACM Conference on Fairness, Accountability, and Transparency (Rio de Janeiro, Brazil) (FAccT ’24). Association for Computing Machinery, New York, NY, USA, 562–572. doi:10.1145/3630106.3658925

[5] Tuhin Chakrabarty, Philippe Laban, and Chien-Sheng Wu. 2025. Can AI writing be salvaged? Mitigating Idiosyncrasies and Improving Human-AI Alignment in the Writing Process through Edits. arXiv:2409.14509 [cs.CL] https://arxiv.org/abs/2409.14509

[6] Joydeep Chandra, Aleksandr Algazinov, Satyam Kumar Navneet, Rim El Filali, Matt Laing, Andrew Hanna, and Yong Zhang. 2026. TRACE: Transparent Web Reliability Assessment with Contextual Explanations. arXiv:2506.12072 [cs.IR] https://arxiv.org/abs/2506.12072

[7] Joydeep Chandra, Ramanjot Kaur, and Rashi Sahay. 2025. Integrated Framework for Equitable Healthcare AI: Bias Mitigation, Community Participation, and Regulatory Governance. In 2025 IEEE 14th International Conference on Communication Systems and Network Technologies (CSNT). 819–825. doi:10.1109/CSNT64827.2025.10968102

[8] Joydeep Chandra and Prabal Manhas. 2024. Adversarial Robustness in Optimized LLMs: Defending Against Attacks. SSRN Electronic Journal (01 12 2024). doi:10.2139/ssrn.5116078

[9] Joydeep Chandra, Prabal Manhas, Ramanjot Kaur, and Rashi Sahay. 2025. A Unified Approach to Large Language Model Optimization: Methods, Metrics, and Benchmarks (1st ed.). CRC Press. 7 pages.

[10] Ginel Dorleon and Shirin Shujaa. 2025. Uncovering Cultural Biases and Stereotypes in Large Language Models. In Intelligence and Equity: Shaping the Future of Knowledge: 27th International Conference on Asian Digital Libraries, ICADL 2025, Metro Manila, Philippines, December 3-5, 2025, Proceedings (Metro Manila, Philippines). Springer-Verlag, Berlin, Heidelberg, 64–77. doi:10.1007/978-981-95-4861-3_5

[11] Rebecca Dorn, Lee Kezar, Fred Morstatter, and Kristina Lerman. 2024. Harmful Speech Detection by Language Models Exhibits Gender-Queer Dialect Bias. In Proceedings of the 4th ACM Conference on Equity and Access in Algorithms, Mechanisms, and Optimization (San Luis Potosi, Mexico) (EAAMO ’24). Association for Computing Machinery, New York, NY, USA, Article 6, 12 pages. doi:10.1145/3689904.3694704

[12] Said Fathi. 2024. Revisiting Brown and Levinson’s Theory of Politeness. European Journal of Language and Culture Studies 3, 5 (Sept. 2024), 1–11. doi:10.24018/ejlang.2024.3.5.137

[13] Md Meftahul Ferdaus, Mahdi Abdelguerfi, Elias Loup, Kendall N. Niles, Ken Pathak, and Steven Sloan. 2026. Towards Trustworthy AI: A Review of Ethical and Robust Large Language Models. ACM Comput. Surv. 58, 7, Article 176 (Jan. 2026), 43 pages. doi:10.1145/3777382

[14] Vinitha Gadiraju, Shaun Kane, Sunipa Dev, Alex Taylor, Ding Wang, Remi Denton, and Robin Brewer. 2023. "I wouldn’t say offensive but...": Disability-Centered Perspectives on Large Language Models. In Proceedings of the 2023 ACM Conference on Fairness, Accountability, and Transparency (Chicago, IL, USA) (FAccT ’23). Association for Computing Machinery, New York, NY, USA, 205–216. doi:10.1145/3593013.3593989

[15] Rebekka Görge, Michael Mock, and Héctor Allende-Cid. 2025. Detecting Linguistic Indicators for Stereotype Assessment with Large Language Models. In Proceedings of the 2025 ACM Conference on Fairness, Accountability, and Transparency (FAccT ’25). Association for Computing Machinery, New York, NY, USA, 2796–2814. doi:10.1145/3715275.3732181

[16] Alejandro Hernández-Cano, Alexander Hägele, Allen Hao Huang, Angelika Romanou, Antoni-Joan Solergibert, Barna Pasztor, Bettina Messmer, Dhia Garbaya, Eduard Frank Ďurech, Ido Hakimi, Juan García Giraldo, Mete Ismayilzada, Negar Foroutan, Skander Moalla, Tiancheng Chen, Vinko Sabolčec, Yixuan Xu, Michael Aerni, Badr AlKhamissi, Ines Altemir Marinas, Mohammad Hossein Amani, Matin Ansaripour, Ilia Badanin, Harold Benoit, Emanuela Boros, Nicholas Browning, Fabian Bösch, Maximilian Böther, Niklas Canova, Camille Challier, Clement Charmillot, Jonathan Coles, Jan Deriu, Arnout Devos, Lukas Drescher, Daniil Dzenhaliou, Maud Ehrmann, Dongyang Fan, Simin Fan, Silin Gao, Miguel Gila, María Grandury, Diba Hashemi, Alexander Hoyle, Jiaming Jiang, Mark Klein, Andrei Kucharavy, Anastasiia Kucherenko, Frederike Lübeck, Roman Machacek, Theofilos Manitaras, Andreas Marfurt, Kyle Matoba, Simon Matrenok, Henrique Mendonça, Fawzi Roberto Mohamed, Syrielle Montariol, Luca Mouchel, Sven Najem-Meyer, Jingwei Ni, Gennaro Oliva, Matteo Pagliardini, Elia Palme, Andrei Panferov, Léo Paoletti, Marco Passerini, Ivan Pavlov, Auguste Poiroux, Kaustubh Ponkshe, Nathan Ranchin, Javi Rando, Mathieu Sauser, Jakhongir Saydaliev, Muhammad Ali Sayfiddinov, Marian Schneider, Stefano Schuppli, Marco Scialanga, Andrei Semenov, Kumar Shridhar, Raghav Singhal, Anna Sotnikova, Alexander Sternfeld, Ayush Kumar Tarun, Paul Teiletche, Jannis Vamvas, Xiaozhe Yao, Hao Zhao, Alexander Ilic, Ana Klimovic, Andreas Krause, Caglar Gulcehre, David Rosenthal, Elliott Ash, Florian Tramèr, Joost VandeVondele, Livio Veraldi, Martin Rajman, Thomas Schulthess, Torsten Hoefler, Antoine Bosselut, Martin Jaggi, and Imanol Schlag. 2025. Apertus: Democratizing Open and Compliant LLMs for Global Language Environments. https://arxiv.org/abs/2509.14233

[17] Kaggle. [n. d.]. Hillary Clinton’s Emails. Kaggle. https://www.kaggle.com/datasets/kaggle/hillary-clinton-emails

[18] Saurabh Khanna and Xinxu Li. 2025. Invisible Languages of the LLM Universe. arXiv:2510.11557 [cs.CL]

[19] Cass Mayeda, Arinjay Singh, Arnav Mahale, Laila Shereen Sakr, and Mai ElSherief. 2025. Applying Data Feminism Principles to Assess Bias in English and Arabic NLP Research. In Proceedings of the 2025 ACM Conference on Fairness, Accountability, and Transparency (FAccT ’25). Association for Computing Machinery, New York, NY, USA, 1769–1792. doi:10.1145/3715275.3732119

[20] Roberto Navigli, Simone Conia, and Björn Ross. 2023. Biases in Large Language Models: Origins, Inventory, and Discussion. J. Data and Information Quality 15, 2, Article 10 (June 2023), 21 pages. doi:10.1145/3597307

[21] Anna Neumann, Elisabeth Kirsten, Muhammad Bilal Zafar, and Jatinder Singh. 2025. Position is Power: System Prompts as a Mechanism of Bias in Large Language Models (LLMs). In Proceedings of the 2025 ACM Conference on Fairness, Accountability, and Transparency (FAccT ’25). Association for Computing Machinery, New York, NY, USA, 573–598. doi:10.1145/3715275.3732038

[22] Charles Nimo, Irfan Essa, and Michael Best. 2025. Africa Health Check: Probing Cultural Bias in Medical LLMs. In Proceedings of the 5th ACM Conference on Equity and Access in Algorithms, Mechanisms, and Optimization (EAAMO ’25). Association for Computing Machinery, New York, NY, USA, 289. doi:10.1145/3757887.3767687

[23] Nassim Parvin. 2025. Expression and Erasure: AI, English, and the Shaping of Digital Futures. In Adjunct Proceedings of the Sixth Decennial Aarhus Conference: Computing X Crisis (AAR Adjunct ’25). Association for Computing Machinery, New York, NY, USA, Article 12, 4 pages. doi:10.1145/3737609.3747117

[24] Shirin Shujaa, Ginel Dorleon, and Arthur Tang. 2025. How LLMs Handle Cultural Bias: Reactions to Asian Minority Historical Narratives. In Intelligence and Equity: Shaping the Future of Knowledge: 27th International Conference on Asian Digital Libraries, ICADL 2025, Metro Manila, Philippines, December 3-5, 2025, Proceedings (Metro Manila, Philippines). Springer-Verlag, Berlin, Heidelberg, 39–52. doi:10.1007/978-981-95-4861-3_3

[25] Mozhgan Talebpour, Yunfei Long, Alba G. Seco De Herrera, and Shoaib Jameel. 2025. Bias in Language Models: Interplay of Architecture and Data?. In Proceedings of the 48th International ACM SIGIR Conference on Research and Development in Information Retrieval (Padua, Italy) (SIGIR ’25). Association for Computing Machinery, New York, NY, USA, 2637–2641. doi:10.1145/3726302.3730172

[26] DeepHat Team. 2024. DeepHat-V1-7B: A Cybersecurity and DevOps Fine-tune of Qwen2.5-Coder. https://huggingface.co/DeepHat/DeepHat-V1-7B

[27] Mistral AI Team. 2024. Mistral-7B-Instruct-v0.3. https://huggingface.co/mistralai/Mistral-7B-Instruct-v0.3

[28] Qwen Team. 2025. Qwen3 Technical Report. arXiv:2505.09388 [cs.CL] https://arxiv.org/abs/2505.09388

[29] Azmine Toushik Wasi, Raima Islam, Mst Rafia Islam, Farig Sadeque, Taki Hasan Rafi, and Dong-Kyu Chae. 2025. Dialectal Bias in Bengali: An Evaluation of Multilingual Large Language Models Across Cultural Variations. In Companion Proceedings of the ACM on Web Conference 2025 (Sydney NSW, Australia) (WWW ’25). Association for Computing Machinery, New York, NY, USA, 1380–1384. doi:10.1145/3701716.3715468

[30] Yisong Xiao, Aishan Liu, Siyuan Liang, Xianglong Liu, and Dacheng Tao. 2025. Fairness Mediator: Neutralize Stereotype Associations to Mitigate Bias in Large Language Models. Proc. ACM Softw. Eng. 2, ISSTA, Article ISSTA012 (June 2025), 24 pages. doi:10.1145/3728881

[31] LLM-Core-Team Xiaomi. 2025. MiMo: Unlocking the Reasoning Potential of Language Model – From Pretraining to Posttraining. arXiv:2505.07608 [cs.CL] https://arxiv.org/abs/2505.07608

[32] Shiyue Zhang, Asli Celikyilmaz, Jianfeng Gao, and Mohit Bansal. 2021. EmailSum: Abstractive Email Thread Summarization. In Proceedings of the 59th Annual Meeting of the Association for Computational Linguistics.
