Slice and Explain: Logic-Based Explanations for Neural Networks through Domain Slicing

Neural networks (NNs) are pervasive across various domains but often lack interpretability. To address the growing need for explanations, logic-based approaches have been proposed to explain predictions made by NNs, offering correctness guarantees. H…

Authors: Luiz Fern, o Paulino Queiroz, Carlos Henrique Leitão Cavalcante

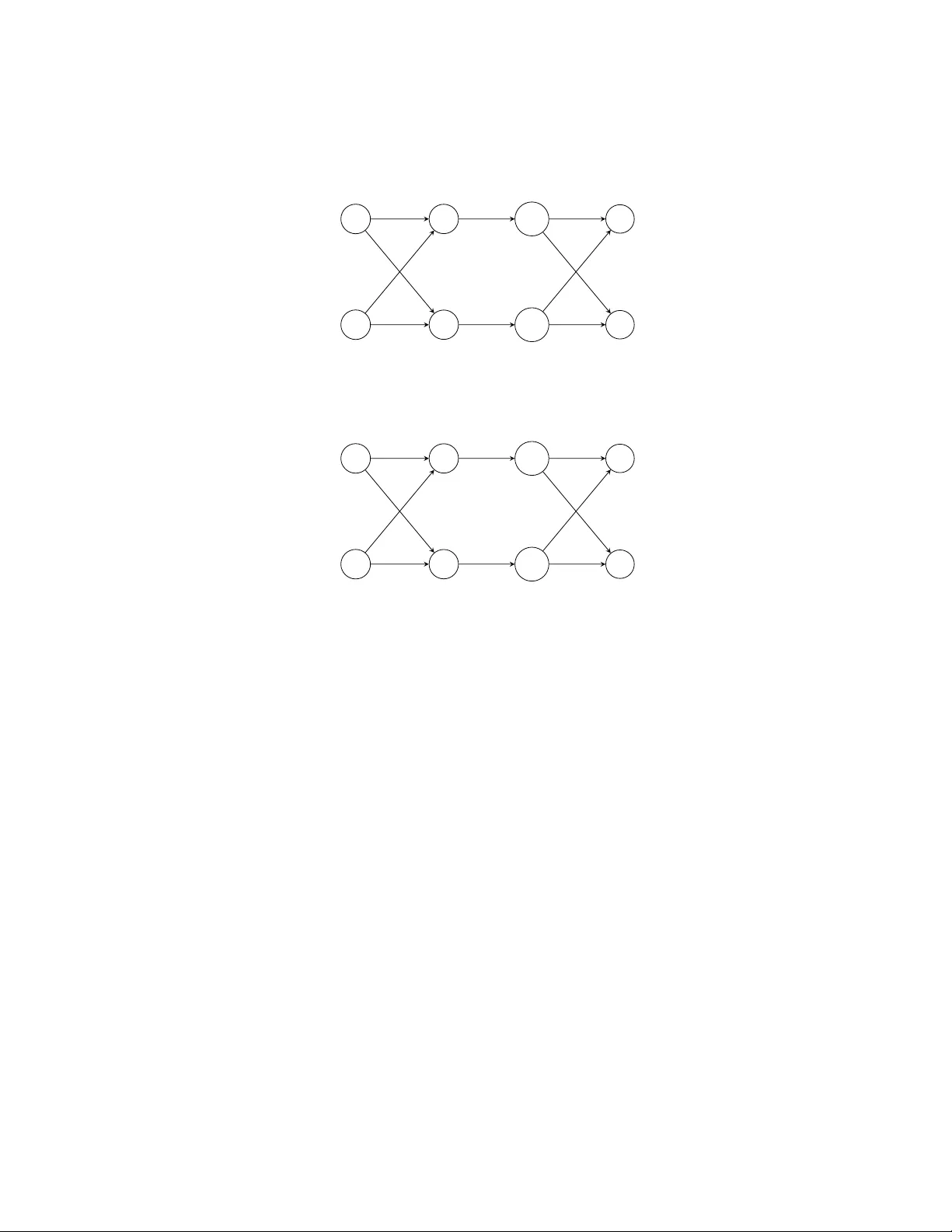

Slice and Explain: Logic-Based Explanations for Neural Net w orks through Domain Slicing Luiz F ernando P aulino Queiroz [0009 − 0003 − 2407 − 3204] , Carlos Henrique Leitão Ca v alcante [0000 − 0001 − 9395 − 8338] , and Thiago Alv es Ro c ha [0000 − 0001 − 7037 − 9683] Instituto F ederal do Ceará (IF CE), Maracanaú, Ceará, Brazil {luiz.fernando, henriqueleitao, thiago.alves}@ifce.edu.br Abstract. Neural net works (NNs) are perv asive across v arious domains but often lack interpretabilit y . T o address the growing need for explana- tions, logic-based approac hes ha ve b een prop osed to explain predictions made b y NNs, offering correctness guarantees. Ho wev er, scalability re- mains a concern in these methods. This pap er prop oses an approac h lev eraging domain slicing to facilitate explanation generation for NNs. By reducing the complexity of logical constraints through slicing, we de- crease explanation time b y up to 40% less time, as indicated through comparativ e exp eriments. Our findings highligh t the efficacy of domain slicing in enhancing explanation efficiency for NNs. Keyw ords: Neural Net w orks · Machine Learning · Explainable Artifi- cial Intelligence · Logic-based Explainability 1 In tro duction Neural Netw orks (NNs) are widely used across v arious domains [8, 1, 13], but their lac k of interpretabilit y makes them opaque models [10]. This means that the pro cesses leading to their outputs from a given set of inputs are not inherently in terpretable or explainable. This opacit y raises concerns in critical systems with limited tolerance for failure [14]. As a result, efforts hav e b een made to dev elop to ols that can explain NN b eha vior [9, 7]. In this work, an explanation for a given input and its corresp onding output is a subset of attributes that are sufficient to determine the output. While the remaining attributes are not necessary . F or instance, consider an input { income = 5000 , employ ment _ status = empl oy ed , loan _ histor y = g ood , ag e = 30 } and let N b e a neural net work that out- puts cr e dit_appr ove d for this input. A p ossible explanation for this prediction is { l oan _ history = g ood, employ ment _ status = empl oyed } . This means that if an applican t has a goo d loan history and is employ ed, the neural netw ork N predicts cr e dit_appr ove d , regardless of the v alues of income and ag e . Heuristic explainable AI (XAI) methods, suc h as LIME [14] and Anchors [15], hav e b een used to pro vide explanations for NNs and other machine learning (ML) mo dels. Ho wev er, these metho ds lack guarantees of correctness [2], making it difficult to assess the trustw orthiness of machine learning (ML) mo dels. Recent 2 Queiroz et al. researc h in logic-based XAI for ML classifiers [17, 9, 18, 3, 16, 4, 6] offers promis- ing solutions b y providing guaran tees of correctness. Moreo ver, these metho ds also ensure explanations without redundancy [9]. Such explanations provide only essen tial information and seem easier to understand. Among these logic-based XAI approaches, the metho d prop osed by Ignatiev et al. [9] specifically targets NNs. Their approach enco des the NN as a set of logical constrain ts—comprising Bo olean com binations of linear (in)equalities ov er binary and contin uous v ari- ables—and lev erages a Mixed In teger Linear Programming (MILP) solver to determine whether a subset of input features is sufficient to determine the same prediction. Nonetheless, scalability remains a key challenge when applying these tec hniques to larger NNs. In this work, we prop ose an enhancement of the metho d b y Ignatiev et al. [9], in tro ducing a no vel tec hnique based on slicing to improv e scalabilit y . Inspired b y NN simplification tec hniques [12], this technique partitions the input domain in to smaller sub domains, allowing us to simplify the original NN and p oten tially reduce explanation computation time. T o the b est of our knowledge, this is the first application of slicing in the context of XAI. By adapting this technique to explainability , we show that domain slicing can enhance the scalabilit y of logic-based XAI while preserving correctness and irredundancy guaran tees. Our exp erimen ts ev aluate the impact of slicing on explanation times, considering configurations with up to 3 slices. Our results show that, for deep er NNs, slicing can significantly reduce ex- planation times by o ver 40% for some datasets. This suggests that slicing is a promising strategy for impro ving the scalability of XAI metho ds, particularly for larger NNs. How ever, the impact of slicing v aries across datasets, and its ef- fectiv eness seems to dep end on the characteristics of the data. F or smaller NNs, the ov erhead of handling multiple slices often outw eighed the potential gains in efficiency . These findings suggest that slicing has the p oten tial to improv e scalabilit y for larger NNs in certain scenarios. 2 Preliminaries This section aims to present basic concepts necessary for a general understanding of the w ork. 2.1 First-order Logic o ver LRA In this work, we use first-order logic (F OL) to generate explanations with guar- an tees of correctness. W e use quan tifier-free first-order formulas ov er the theory of linear real arithmetic (LRA) [11]. V ariables are allow ed to take v alues from the real num b ers R . W e consider form ulas as P n i =1 w i x i ≤ b , suc h that n ≥ 1 is a natural num ber, eac h w i and b are fixed real n umbers, and e ac h x i is a first-order v ariable. As usual, if F and G are form ulas, then ( F ∧ G ) , ( F ∨ G ) , ( ¬ F ) , ( F → G ) are formulas. W e allo w the use of other letters for v ariables instead of x i , such as s i , z i . F or example, (2 . 5 x 1 + 3 . 1 s 2 ≥ 6) ∧ ( x 1 = 1 ∨ x 1 = 2) ∧ ( x 1 = 2 → s 2 ≤ 1 . 1) Slice and Explain 3 is a formula by this definition. Observ e that we allow standard abbreviations as ¬ (2 . 5 x 1 + 3 . 1 s 2 < 6) for 2 . 5 x 1 + 3 . 1 s 2 ≥ 6 . Since we are assuming the seman tics of formulas ov er the domain of real n umbers, an assignment A for a formula F is a mapping from the first-order v ariables of F to elemen ts in the domain of real n um b ers. F or instance, { x 1 7→ 2 . 3 , x 2 7→ 1 } is an assignmen t for (2 . 5 x 1 + 3 . 1 x 2 ≥ 6) ∧ ( x 1 = 1 ∨ x 1 = 2) ∧ ( x 1 = 2 → x 2 ≤ 1 . 1) . An assignment A satisfies a formula F if F is true under this assignmen t. F or example, { x 1 7→ 2 , x 2 7→ 1 . 05 } satisfies the form ula in the ab o v e example, whereas { x 1 7→ 2 . 3 , x 2 7→ 1 } do es not satisfy it. Moreov er, an assignmen t A satisfies a set Γ of formulas if all formulas in Γ are true under A . A set of formulas Γ is satisfiable if there exists a satisfying assignment for Γ . T o giv e an example, the set { (2 . 5 x 1 + 3 . 1 x 2 ≥ 6) , ( x 1 = 1 ∨ x 1 = 2) , ( x 1 = 2 → x 2 ≤ 1 . 1) } is satisfiable since { x 1 7→ 2 , x 2 7→ 1 . 05 } satisfies it. As another example, the set { ( x 1 ≥ 2) , ( x 1 < 1) } is unsatisfiable si nce no assignmen t satisfies it. Giv en a set of form ulas Γ and a form ula G , the notation Γ | = G is used to denote lo gic al c onse quenc e or entailment , i.e., each assignmen t that satisfies Γ also satisfies G . As an illustrativ e example, let Γ = { x 1 = 2 , x 2 ≥ 1 } and G = (2 . 5 x 1 + x 2 ≥ 5) ∧ ( x 1 = 1 ∨ x 1 = 2) . Then, Γ | = G . The essence of en tailment lies in ensuring the correctness of the conclusion G based on the set of premises Γ . In the context of computing explanations, as presented in [9], logical consequence serves as a fundamental to ol for guaran teeing the correctness of predictions made b y NNs. The relationship b et ween satisfiabilit y and entailmen t is a fundamental as- p ect of logic. It is widely known that, for all sets of form ulas Γ and all formulas G , it holds that Γ | = G if and only if Γ ∪ {¬ G } is unsatisfiable. (1) F or instance, { x 1 = 2 , x 2 ≥ 1) , ¬ ((2 . 5 x 1 + x 2 ≥ 5) ∧ ( x 1 = 1 ∨ x 1 = 2)) } has no satisfying assignmen t since an assignment that satisfies { x 1 = 2 , x 2 ≥ 1 } also satisfies (2 . 5 x 1 + x 2 ≥ 5) ∧ ( x 1 = 1 ∨ x 1 = 2) and, therefore, does not satisfy ¬ ((2 . 5 x 1 + x 2 ≥ 5) ∧ ( x 1 = 1 ∨ x 1 = 2)) . Since our approach builds up on the concept of logical consequence, we can leverage this connection in the context of computing explanations for NNs. 2.2 Classification Problems and Neural Net works In mac hine learning, classification problems are defined ov er a set of n input features F = { x 1 , ..., x n } and a set of k classes K = { c 1 , c 2 , ..., c k } . In this w ork, we consider that each input feature x i ∈ F tak es its v alues v i from the domain of real num b ers. Moreov er, each input feature x i has an upp er b ound ub i and a lo wer bound l b i suc h that l b i ≤ x i ≤ ub i , i.e., its domain is the closed in terv al [ l i , u i ] . This is represen ted as an input domain D = { l 1 ≤ x 1 ≤ u 1 , l 2 ≤ x 2 ≤ u 2 , ..., l n ≤ x n ≤ u n } . F or example, a feature for the height of a p erson b elongs to the real num b ers and may hav e lo wer and upp er b ounds of 0 . 5 and 2 . 1 meters, respectively . As another example, a pixel intensit y feature in an image classification problem belongs to the real num b ers and typically has low er and 4 Queiroz et al. upp er b ounds of 0 and 255 , resp ectively , representing the range of gra yscale v alues. Finally , a set I = { x 1 = v 1 , x 2 = v 2 , ..., x n = v n } , suc h that each v i is in the domain of x i , represen ts an instance of the input domain. In this work, a NN is a function that maps elements in the input domain in to the set of classes K . Then, a NN is a function N such that, for all instances I , N ( I ) = c ∈ K . A NN is represented as L + 1 la yers of neurons with L ≥ 1 . Eac h lay er l ∈ { 0 , 1 , ..., L } is comp osed of n l neurons, num bered from 1 to n l . These la yers and neurons define the arc hitecture of the NN. Lay er 0 is fictitious and corresp onds to the input of the NN, while the last lay er L corresp onds to its outputs. Lay ers 1 to L − 1 are typically referred to as hidden la yers. Let x l i b e the output of the i th neuron of the l th lay er, with i ∈ { 1 , ..., n l } . The inputs to the NN can b e represen ted as x 0 i or simply x i . Moreo ver, we represent the outputs as x L i or simply o i . The v alues x l i of the neurons in a given la yer l are computed through the output v alues x l − 1 j of the previous lay er, with j ∈ { 1 , ..., n l − 1 } . Each neuron applies a linear com bination of the output of the neurons in the previous la y er. Then, the neuron applies a nonlinear function, also known as an activ ation func- tion. The output of the linear part is represented as P n l − 1 j =1 w l i,j x l − 1 j + b l i where w l i,j and b l i denote the w eigh ts and bias, resp ectiv ely , serving as parameters of the i th neuron of lay er l . In this w ork, we consider only the Rectified Lin- ear Unit ( ReLU ) as activ ation function b ecause it can b e represented b y linear constrain ts due to its piecewise-linear nature. This function is a widely used ac- tiv ation whose output is the maxim um b et ween its input v alue and zero. Then, x l i = ReLU( P n l − 1 j =1 w l i,j x l − 1 j + b l i ) is the output of the ReLU . The last lay er L is comp osed of n L = k neurons, one for eac h class. It is common to normalize the output la yer using an additional Softmax la yer. Consequently , these v al- ues represent the probabilities associated with each class. The class with the highest probability is c hosen as the predicted class. How ev er, we do not need to consider this normalization transformation as it do es not c hange the max- im um v alue of the last la yer. Th us, the predicted class is c i ∈ K such that i = arg max j ∈{ 1 ,...,k } x L j . 2.3 MILP – Mixed In teger Linear Programming In Mixed In teger Linear Programming (MILP), the ob jectiv e is to optimize a linear function sub ject to linear constraints, where some or all of the v ariables are required to b e integers [5]. T o illustrate the structure of a MILP problem, w e provide an example below: min y 1 s.t. 1 ≤ x 1 ≤ 3 3 x 1 + s 1 − 2 = y 1 0 ≤ y 1 ≤ 3 x 1 − 2 0 ≤ s 1 ≤ 3 x 1 − 2 (2) z 1 = 1 → y 1 ≤ 0 Slice and Explain 5 z 1 = 0 → s 1 ≤ 0 z 1 ∈ { 0 , 1 } In the MILP problem in (2), we wan t to find v alues for v ariables x 1 , y 1 , s 1 , z 1 minimizing the v alue of the ob jective function y 1 , among all v alues that satisfy the constraints. V ariable z 1 is binary since z 1 ∈ { 0 , 1 } is a constrain t in the MILP , while v ariables x 1 , y 1 , s 1 ha ve the real num b ers R as their domain. The constrain ts in a MILP may appear as linear equations, linear inequalities, and indicator constrain ts. Indicator constrain ts can b e seen as logical implications of the form z = v → P n i =1 w i x i ≤ b such that z is a binary v ariable, and v is a constan t 0 or 1 . An imp ortant observ ation is that a MILP problem without an ob jective func- tion corresp onds to a satisfiability problem, as discussed in Subsection 2.1. Given that the approach for computing explanations relies on logical consequence, and considering the connection b et w een satisfiabilit y and logical consequence, we emplo y a MILP solv er to address explanation tasks. A dditionally , throughout the construction of the MILP mo del, w e may utilize optimization, sp ecifically emplo ying a MILP solver, to determine tight low er and upp er bounds for the neurons of NNs. 3 Logic-based XAI for Neural Net works As previously mentioned, most metho ds for explaining ML mo dels are heuristic, resulting in explanations that can not b e fully trusted. Logic-based explainabil- it y provides results with guaran tees of correctness and irredundancy . F or NNs, Ignatiev et al. [9] emplo y ed a logic-based approach based on linear constraints with binary and contin uous v ariables. Given an instance, this approach identifies a subset of input features sufficient to justify the corresp onden t output giv en by the NN. This metho d ensures correctness and minimality of explanations, which are referred to as ab ductive explanations . An abductive explanation is a subset of features that form a rule. When applied, this rule guarantees the same mo del prediction. The formal definition b elo w encapsulates this notion. Definition 1 (Ab ductiv e Explanation [9]). L et I = { x 1 = v 1 , ..., x n = v n } b e an instanc e and N b e a NN such that N ( I ) = c ∈ K . An ab ductiv e explanation X is a minimal subset of I such that for al l v ′ 1 ∈ [ l 1 , u 1 ] , ..., v ′ n ∈ [ l n , u n ] , if v ′ j = v j for e ach x j = v j ∈ X , then N ( { x 1 = v ′ 1 , ..., x n = v ′ n } ) = c . An abductive explanation is a minimal set of features from an instance that are sufficien t for the prediction. This subset ensures that, when the v alues of these features are fixed and the other features are v aried within their p ossible ranges, the prediction remains the same. In other words, it iden tifies key features that are sufficient for the output. Minimality ensures that the explanation X do es not include any redundan t features. In other words, remo ving any feature x j = v j from X would result in a subset that no longer guaran tees the same prediction c o ver the defined bounds. 6 Queiroz et al. It is imp ortant to note that a giv en instance may ha ve m ultiple distinct ab ductiv e explanations. Differen t subsets of features ma y independently satisfy the conditions for an explanation while maintaining minimality . This means that there can b e multiple wa ys to justify a prediction, each highlighting different asp ects of the instance that are sufficien t to ensure the same output. The approac h by Ignatiev et al. [9] to obtain abductive explanations for NNs w orks as follo ws. First, the NN and the input domain D are encoded as a set of form ulas F . W e encode a NN with L + 1 la yers and D as F in (3)-(10), where l = 1 , . . . , L − 1 and, for each l , j = 1 , . . . , n l . lb i ≤ x i ≤ ub i , i = 1 , ..., n (3) n l − 1 X i =1 w l i,j x l − 1 i + b l j = x l j − s l j (4) z l j = 1 → x l j ≤ 0 (5) z l j = 0 → s l j ≤ 0 (6) z l j = 0 ∨ z l j = 1 (7) 0 ≤ x l j ≤ ub l x,j (8) 0 ≤ s l j ≤ ub l s,j (9) o i = n L − 1 X j =1 w L i,j x L − 1 i + b L i , i = 1 , ..., k (10) In the following, we explain the notation. The enco ding uses v ariables x l j and o i with the same meaning as in the notation for NNs. Auxiliary v ariables s l j and z l j con trol the b eha viour of ReLU activ ations. Moreov er, this enco ding uses implications to represent the behavior of ReLU . V ariable z l j is binary and if z l j is equal to 1 , the ReLU output x l j is 0 and − s l j is equal to the linear part. Otherwise, the output x l j is equal to the linear part and s l j is equal to 0 . The b ounds ub l x,j are defined b y isolating v ariable x l j from other constrain ts in subsequent lay ers. Then, x l j is maximized to find its upper bound. A similar pro cess is applied to find the b ounds ub l s,j for v ariables s l j . In this case, ub l s,j corresp onds to the absolute v alue of the smallest non-p ositiv e input to the ReLU function. These b ounds ub l x,j and ub l s,j can be determined due to the b ounds of the features. F urthermore, these bounds can assist the solv er in accelerating the computation of explanations. Finally , in (3), v ariables x i ha ve low er and upper b ounds lb i , ub i , resp ectiv ely , defined b y the input domain. Therefore, D ⊆ F . The second step in this approach consists of defining a form ula E j that cap- tures the prediction of the NN. Given an instance I = { x 1 = v 1 , x 2 = v 2 , ..., x n = v n } from the input domain, the neural netw ork N , and its corresp onding pre- diction N ( I ) = c j , w e enco de this information b y asserting that o j is the largest among all output neurons: E j = V k i =1 ,i = j o j > o i . Then, I ∪ F is satisfiable and I ∪ F | = E j . An ab ductiv e explanation X is calculated removing feature b y feature from I . F or example, given x i = v i ∈ I , if ( I \ { x i = v i } ) ∪ F | = E j , then feature x i = v i is not required for the explanation and can b e remov ed. In other Slice and Explain 7 w ords, x i can take any v alue within its domain, while guaranteeing that the pre- diction remains c j . Otherwise, if ( I \ { x i = v i } ) ∪ F | = E j , then x i = v i is k ept, since class c j cannot be guaranteed. In this case, there exists some v alue within the domain of x i that w ould change the prediction. This pro cess is describ ed in Algorithm 1 and is performed for all features. Then, X is the result at the end of this pro cedure. This means that for the v alues of the features in X , the NN mak es the same classification c j , whatev er the v alues of the remaining features. Algorithm 1 Computing an Explanation X Input: F , I , E j 1: X ← I 2: for x i = v i in I do 3: if ( X \ { x i = v i } ) ∪ F | = E j then 4: X ← X \{ x i = v i } 5: return X As stated in (1), to chec k en tailments ( X \ { x i = v i } ) ∪ F | = E j , it is equiv alen t to test whether ( X \ { x i = v i } ) ∪ F ∪ {¬ E j } is unsatisfiable. Since F , X and ¬ E are enco ded as linear constraints with contin uous and binary v ariables, along with indicator constraints, suc h en tailments can b e addressed using a MILP solv er. 4 Logic-based XAI for NNs via Slicing Previous w ork [9] introduced a logic-based approac h for explaining NNs, iden ti- fying a subset of input features sufficient to justify a given output. This metho d ensures correctness and minimalit y of explanations, which are referred to as ab ductiv e explanations. Unfortunately , this approac h b ecomes computationally prohibitiv e with increasing netw ork size. In this work, w e address this limita- tion b y exploring the concept of slicing . W e partition the feature domains into smaller sub domains. Then, within eac h subdomain, w e can simplify the original NN, p oten tially reducing the time to compute explanations. Therefore, domain slicing ma y enable the computation of ab ductiv e explanations for larger NNs. There are several approac hes for partitioning the input domain. One direct metho d ev aluated in our work inv olves dividing the domain of a feature into tw o equal sub-domains. More formally , let x i = v i in I such that l b i ≤ x i ≤ ub i in F and define m i := ( l i + u i ) / 2 . Then, we consider the sub-domains lb i ≤ x i ≤ m i and m i ≤ x i ≤ ub i for feature x i . T o illustrate the method, w e consider Example 1 b elo w. Example 1. F or instance, consider an input domain with tw o features: D = { 0 . 2 ≤ x 1 ≤ 0 . 7 , 0 . 2 ≤ x 2 ≤ 0 . 5 } . Also consider the simple NN in Fig. 1. This NN consists of an input lay er, one hidden lay er, and one output la yer. Each lay er consists of tw o neurons. Then, there are tw o inputs to this NN, x 1 with domain 8 Queiroz et al. x 1 [0 . 2 , 0 . 7] x 2 [0 . 2 , 0 . 5] y 1 [ − 0 . 3 , 0 . 8] y 2 [ − 0 . 3 , 0 . 5] x 1 1 x 1 2 o 1 o 2 − 1 1 2 − 1 ReLU ReLU 1 1 1 − 1 Fig. 1. Example of Neural Net work [0 . 2 , 0 . 7] and x 2 with domain [0 . 2 , 0 . 5] . T o simplify the example, w e separate eac h neuron in the hidden lay er into tw o parts: one representing the output of the linear transformation and the other capturing the output of the ReLU activ a- tion. W e denote the upp er neuron in the hidden lay er as y 1 and the low er neuron as y 2 . The weigh ts for the linear transformations are represen ted b y w eights on the edges. The bias for each neuron is 0 and has been omitted to simplify the example. Ab ov e or below some neurons, there is an in terv al representing a tigh t range of v alues the neuron can take. These initial interv als for y 1 and y 2 can b e obtained through optimization, for example. In particular, the upp er b ound of y 1 can b e determined by maximizing: − 1 x 1 + 2 x 2 . A low er b ound for y 1 can b e found using a similar optimization process. This optimization is feasible due to the predefined b ounds on the input features. The upp er b ound ub 1 x, 1 of x 1 1 is, then, the maxim um betw een 0 and the largest input to the ReLU function. In our example, this results in ub 1 x, 1 = max { 0 , 0 . 8 } = 0 . 8 . Similarly , the bound ub 1 s, 1 of s 1 1 is the absolute v alue of the minimum betw een 0 and the smallest in- put to the ReLU function. In this case, we obtain: ub 1 s, 1 = | min { 0 , − 0 . 3 }| = 0 . 3 . A similar reasoning applies to x 1 2 and s 1 2 , where their resp ectiv e b ounds can be determined using the same approac h based on the inputs to the ReLU function. In this example, w e apply the slicing metho d to x 2 , obtaining the t wo sub- domains: D 1 = { 0 . 2 ≤ x 1 ≤ 0 . 7 , 0 . 2 ≤ x 2 ≤ 0 . 35 } and D 2 = { 0 . 2 ≤ x 1 ≤ 0 . 7 , 0 . 35 ≤ x 2 ≤ 0 . 5 } . Then, within each subdomain, w e can simplify the orig- inal NN, p oten tially reducing the time to compute explanations. F or example, the simplification corresp onding to subdomain D 1 is presen ted in Fig. 2. The sub domain D 1 affects the propagation of v alues within the NN. F or y 1 , its original interv al w as [ − 0 . 3 , 0 . 8] . With the new subdomain restriction, the v alues of x 2 are no w constrained within the reduced range [0 . 2 , 0 . 35] . This decreases the influence of x 2 on the linear transformations, lo w ering the upp er bound of y 1 to 0 . 5 . Th us, the updated interv al for y 1 b ecomes [ − 0 . 3 , 0 . 5] . Consequen tly , ub 1 x, 1 = max { 0 , 0 . 5 } = 0 . 5 . Therefore, for sub domain D 1 , the form ula 0 ≤ x 1 1 ≤ 0 . 8 corresp onding to Equation (8) for l = 1 and j = 1 , can b e simplified to 0 ≤ x 1 1 ≤ 0 . 5 . In a MILP problem, tightening the b ounds of a contin uous v ariable Slice and Explain 9 x 1 [0 . 2 , 0 . 7] x 2 [0 . 2 , 0 . 35] y 1 [ − 0 . 3 , 0 . 5] y 2 [ − 0 . 15 , 0 . 5] x 1 1 x 1 2 o 1 o 2 − 1 1 2 − 1 ReLU ReLU 1 1 1 − 1 Fig. 2. Slicing respective to subdomain D 1 x 1 [0 . 2 , 0 . 7] x 2 [0 . 35 , 0 . 5] y 1 [0 . 0 , 0 . 8] y 2 [ − 0 . 3 , 0 . 35] x 1 1 x 1 2 o 1 o 2 − 1 1 2 − 1 ReLU ReLU 1 1 1 − 1 Fig. 3. Slicing respective to subdomain D 2 reduces the feasible region, thereby limiting the search space explored by the solv er and p oten tially improving solv er efficiency . F or y 2 , whose original interv al was [ − 0 . 3 , 0 . 5] , the new restriction on x 2 in sub domain D 1 affects the low er bound. Since the minimum v alue of x 2 is no w 0 . 2 , the linear transformation results in an up dated low er b ound of − 0 . 15 for y 2 . Consequen tly , the new in terv al for y 2 b ecomes [ − 0 . 15 , 0 . 5] . Therefore, ub 1 s, 2 = | min { 0 , − 0 . 15 }| = 0 . 15 . Then, for subdomain D 1 , the form ula 0 ≤ s 1 2 ≤ 0 . 3 corresp onding to Equation (9) for l = 1 and j = 1 , can b e simplified to 0 ≤ s 1 2 ≤ 0 . 15 . The simplification corresp onding to sub domain D 2 is presented in Fig. 3. Initially , the in terv al of neuron y 2 w as [ − 0 . 3 , 0 . 5] . After slicing, due to the new range of x 2 in the subdomain D 2 , the upper b ound of y 2 decreases to 0 . 35 . The lo wer bound of y 2 remains unc hanged at − 0 . 3 . The most in teresting case in this example occurs with neuron y 1 in subdomain D 2 . Initially , its interv al was [ − 0 . 3 , 0 . 8] , but with the sub domain constraint x 2 ≥ 0 . 35 in D 2 , the lo wer bound of y 1 increases from − 0 . 3 to 0 . 0 . In this case, the form ulas − 1 x 1 + 2 x 2 = x 1 1 − s 1 1 , z 1 1 = 1 → x 1 1 ≤ 0 , z 1 1 = 0 → s 1 1 ≤ 0 , z 1 1 = 0 ∨ z 1 1 = 1 , 0 ≤ x l j ≤ 0 . 8 , and 0 ≤ s l j ≤ 0 . 3 corresponding to Equations (4)-(7) for l = 1 and j = 1 , i.e., for neuron x 1 1 , can b e simplified to − 1 x 1 + 2 x 2 = x 1 1 and 10 Queiroz et al. 0 ≤ x 1 1 ≤ 0 . 8 . In other w ords, we remo ve the constrain ts asso ciated with binary v ariable z 1 1 and real v ariable s 1 1 . Since MILP solv ers are generally more efficien t with few er binary v ariables, this reduction potentially improv es computational efficiency . W e will now contin ue exploring the slicing approac h b y extending it to tw o features simultaneously . This will allo w us to understand how the sub domains are formed and how the combined effect of slicing multiple features partitions the input domain. Example 2. Consider an input domain with three features: D = { l b 1 ≤ x 1 ≤ ub 1 , l b 2 ≤ x 2 ≤ ub 2 , l b 3 ≤ x 3 ≤ ub 3 } . Applying this slicing metho d to x 1 , w e obtain tw o sub-domains: D 1 = { l b 1 ≤ x 1 ≤ m 1 , l b 2 ≤ x 2 ≤ ub 2 , l b 3 ≤ x 3 ≤ ub 3 } and D 2 = { m 1 ≤ x 1 ≤ ub 1 , l b 2 ≤ x 2 ≤ ub 2 , l b 3 ≤ x 3 ≤ ub 3 } . Now, applying the same partitioning method to x 2 , each of the previous sub-domains is further divided in to t wo, resulting in four sub-domains: D 1 = { l b 1 ≤ x 1 ≤ m 1 , l b 2 ≤ x 2 ≤ m 2 , l b 3 ≤ x 3 ≤ ub 3 } , D 2 = { lb 1 ≤ x 1 ≤ m 1 , m 2 ≤ x 2 ≤ ub 2 , l b 3 ≤ x 3 ≤ ub 3 } , D 3 = { m 1 ≤ x 1 ≤ ub 1 , l b 2 ≤ x 2 ≤ m 2 , l b 3 ≤ x 3 ≤ ub 3 } , and D 4 = { m 1 ≤ x 1 ≤ ub 1 , m 2 ≤ x 2 ≤ ub 2 , l b 3 ≤ x 3 ≤ ub 3 } . By con tinuing this process, we iteratively refine the input domain, generating a finer partitioning of the domain. In netw orks with a larger num b er of input neurons, the num b er of resulting input sub domains can b ecome prohibitiv ely large. Then, w e will ev aluate the domain slicing tec hnique by limiting the v alues to a maxim um of three features. Next, we explore how analyzing a single sub domain can sometimes b e enough to determine that a feature must b e retained in the explanation. This o ccurs when the constraints within that sub domain inheren tly enforce the necessit y of the feature to ensure the prediction, even without considering the entire inp ut domain. In Algorithm 1, w e test whether ( X \ x i = v i ) ∪ F | = E j . Since the domain of feature x i is in F , i.e., l i ≤ x i ≤ u i ∈ F , w e are effectively testing whether all assignmen ts A that satisfy ( X \ x i = v i ) , F and l i ≤ x i ≤ u i also satisfy E j . If there exists an assignment A where x i 7→ v ′ i ∈ A for some v ′ i ∈ [ l i , u i ] such that A satisfy ( X \ x i = v i ) and F but do es not satisfy E j , then ( X \ x i = v i ) ∪ F | = E j . Consequen tly , Algorithm 1 ensures that x i = v i remains in X . No w, supp ose we apply slicing to feature x i , splitting its domain into tw o sub domains D 1 and D 2 where l i ≤ x i ≤ m i ∈ D 1 and m i ≤ x i ≤ u i ∈ D 2 . T o verify whether ( X \ x i = v i ) ∪ F | = E j it suffices to chec k b oth sub domains separately: ( X \ x i = v i ) ∪ F ∪ { m i ≤ x i } | = E j and ( X \ x i = v i ) ∪ F ∪ { x i ≤ m i } | = E j . Since l i ≤ x i and x i ≤ u i are already present in F , if ( X \ x i = v i ) ∪ F ∪ { x i ≤ m i } | = E j , then there exists an assignment A with x i 7→ v ′ i ∈ A for some v ′ i ∈ [ l i , m i ] suc h that A satisfy ( X \ x i = v i ) and F but does not satisfy E j . Consequently , since v ′ i ∈ [ l i , m i ] ⊆ [ l i , u i ] , the same assignment A satisfies ( X \ x i = v i ) and F but do es not satisfy E j . Thus, we conclude that ( X \ x i = v i ) ∪ F | = E j without needing to ev aluate the entire domain l i ≤ x i ≤ u i . Slice and Explain 11 Similarly , if ( X \ { x i = v i } ) ∪ F ∪ { m i ≤ x i } | = E j , the same reasoning applies, ensuring that ( X \ x i = v i ) ∪ F | = E j . W e now consider the conv erse. Assume that ( X \ x i = v i ) ∪ F | = E j . Then there exists an assignment A where x i 7→ v ′ i ∈ A for some v ′ i ∈ [ l i , u i ] , such that A satisfies ( X \ { x i = v i } ) and F , but do es not satisfy E j . W e ha ve tw o p ossible cases (b oth may hold if v ′ i = m i ). If v ′ i ∈ [ l i , m i ] , then it follows that ( X \ { x i = v i } ) ∪ F ∪ { x i ≤ m i } | = E j . If v ′ i ∈ [ m i , u i ] , then w e conclude that ( X \ { x i = v i } ) ∪ F ∪ { m i ≤ x i } | = E j . F rom the reasoning abov e, w e conclude that v erifying ( X \{ x i = v i } ) ∪F | = E j can b e reduced to c hec king the t wo sub domains separately . F urthermore, w e established that if ( X \ { x i = v i } ) ∪ F | = E j , then at least one of these sub domain c hecks m ust also fail. These observ ations lead to the follo wing prop osition: Prop osition 1. L et m i := ( l i + u i ) / 2 for a fe atur e x i with l i ≤ x i ≤ u i ∈ D . Then, ( X \{ x i = v i } ) ∪ F | = E j if and only if ( X \ { x i = v i } ) ∪ F ∪ { x i ≤ m i } | = E j and ( X \ { x i = v i } ) ∪ F ∪ { m i ≤ x i } | = E j . This observ ation naturally extends to m ultiple features. Sp ecifically , if ( X \ { x i = v i , x p = v p } ) ∪ F ∪ { x i ≤ m i , m p ≤ x p } | = E j , then considering the entire domain also do es not en tail E j , i.e., ( X \ { x i = v i , x p = v p } ) ∪ F | = E j . This suggests a structured wa y to refine the input domain. The follo wing prop osition formalizes this idea for tw o features, showing that verifying entailmen t ov er the full domain can b e reduced to v erifying it o ver smaller subdomains. Prop osition 2. L et m i := ( l i + u i ) / 2 for a fe atur e x i with l i ≤ x i ≤ u i ∈ D , and m p := ( l p + u p ) / 2 for a fe atur e x p with l p ≤ x p ≤ u p ∈ D . Then, ( X \ { x i = v i , x p = v p } ) ∪ F | = E j if and only if ( X \ { x i = v i , x p = v p } ) ∪ F ∪ { x i ≤ m i , x p ≤ m p } | = E j , ( X \ { x i = v i , x p = v p } ) ∪ F ∪ { x i ≤ m i , m p ≤ x p } | = E j , ( X \ { x i = v i , x p = v p } ) ∪ F ∪ { m i ≤ x i , x p ≤ m p } | = E j , and ( X \ { x i = v i , x p = v p } ) ∪ F ∪ { m i ≤ x i , m p ≤ x p } | = E j . The prop osition shows that ins tead of ev aluating entailmen t o v er the en tire in- put space, it suffices to chec k it o ver a partitioned set of subdomains. Moreo ver, eac h sub domain can simplify the constrain ts of the MILP problem, as demon- strated in Example 1. How ever, in NNs with a large num b er of input features, the n umber of resulting sub domains can gro w exp onentially , making the approac h computationally impractical. T o address this, in our experiments, w e limit the domain slicing technique to at most three features, whic h are selected incremen- tally and at random. In the subsequent section, we will ev aluate the impact of domain slicing b y measuring its effect on explanation computation time. 5 Exp erimen ts In our exp eriments, we applied slicing to contin uous input features. F or each trained NN, we considered four slicing cases: no slicing and one to three sliced attributes. The case without any slicing represen ts the baseline model found in other works [9], whereas the cases with one or more slicings represent the 12 Queiroz et al. approac h w e prop ose. The features selected for slicing were chosen randomly , and eac h additional slice reused the sliced features from the previous slicing lev el. All slicing configurations pro duce the same explanations, ensuring correctness and minimalit y . P erformance w as ev aluated b y summing explanation times (in seconds) o ver a fixed set of randomly selected instances. F or each slicing level, we also measure d the n umber of binary v ariables remo ved due to the input domain and sub domains, av eraging across sub domains. These simplifications are applied to the constraints in Equations (4)-(7), as illustrated in Example 1. The exten t of binary v ariable elimination ma y influence the computational efficiency of our approac h. As our method guaran tees p erfect fidelity to the underlying mo del by construction, w e do not ev aluate this metric in our exp erimen ts. W e used fiv e datasets from the UCI Mac hine Learning Repository 1 and the P enn Mac hine Learning Benc hmarks 2 : Auto (25 features), Hepatitis (19 fea- tures), Australian (14 features), Heart-Statlog (13 features), and Glass (9 fea- tures). F or each, we trained three NNs, each with 16 neurons p er la yer: one with t wo hidden lay ers, one with three hidden lay ers, and one with four hidden lay ers. The NNs were implemented with T ensorFlo w, using a 90%-10% data split for training and testing. T raining used a batc h size of 4 and 100 ep o c hs. Explana- tions w ere p erformed on the test set. F or the en tailmen t chec ks in Algorithm 1, w e used IBM-CPLEX 3 via the DOcplex API to handle MILP constraints. All exp erimen ts were run on an Intel Core i5-7200U (2.50 GHz) with 8 GB of RAM. F ull details are a v ailable in our rep ository 4 . The results for 2-, 3-, and 4-la yer NNs are shown in T ables 1–3, resp ectiv ely . The n um b er of features of each dataset is indicated in paren theses. F or 2-lay er NNs (T able 1), slicing did not improv e explanation time and often increased it, with little to no binary v ariable remov al. This suggests that, for smaller arc hitectures, the ov erhead of sli cing outw eighs its b enefits, and performance gains ma y only app ear b ey ond a certain NN size. T able 1. Explanation time (in seconds) and a v erage % binary v ariables remo ved by n umber of slices for 2-lay ers NNs. Dataset Metric 0 slices 1 slice 2 slices 3 slices Auto Exp Time (s) 12.56 13.17 13.32 13.80 (25) % Bin Rem 0.00 0.00 0.00 0.00 Hepatitis Exp Time (s) 4.49 5.83 6.74 9.97 (19) % Bin Rem 0.00 0.00 0.00 0.00 Australian Exp Time (s) 32.20 33.25 33.01 34.09 (14) % Bin Rem 0.00 1.00 1.50 2.13 Heart-Statlog Exp Time (s) 9.65 9.85 10.75 13.12 (13) % Bin Rem 0.00 0.00 1.00 1.38 Glass Exp Time (s) 5.80 5.97 6.60 6.87 (9) % Bin Rem 0.00 0.00 0.00 0.13 1 https://archive.ics.uci.edu/ml/ 2 https://github.com/EpistasisLab/penn- ml- benchmarks/ 3 https://www.ibm.com/docs/en/icos/22.1.2 4 https://github.com/Luizfernandopq/Explications- ANNs Slice and Explain 13 F or 3-la yer NNs (T able 2), slicing sometimes impro ved explanation time, esp ecially when accompanied by notable binary v ariable remov al. In the Aus- tralian dataset, binary v ariable remo v al reac hed 16% with three slices, and the b est explanation time reduction (5.00%) o ccurred with tw o slices (12.75% re- mo v al). Heart-Statlog follo w ed a similar pattern, with up to 3.50% remov al and significan t time reductions of 15.67% (three slices) and 11.22% (tw o slices). In con trast, Hepatitis and Glass show ed low binary v ariable remov al and increasing explanation times with more slices, indicating that slicing introduced o verhead without sufficien t simplification. The Auto dataset sho wed an interesting b eha v- ior: no binary v ariables were remov ed at an y slicing lev el, yet explanation time impro ved (13.40% with one slice, 9.36% with three). This suggests that efficiency gains can also stem from other structural changes induced by slicing, not just from binary v ariable elimination. T able 2. Explanation time (in seconds) and av erage % of binary v ariables remov ed by n umber of slices for 3-lay ers NNs. The v alues in b old represent the slices that achiev ed a reduction in explanation time compared to 0 slices. Dataset Metric 0 slices 1 slice 2 slices 3 slices Auto Exp Time (s) 56.40 48.84 68.86 51.12 (25) % Bin Rem 0.00 0.00 0.00 0.00 Hepatitis Exp Time (s) 15.85 19.82 19.90 18.59 (19) % Bin Rem 0.00 0.00 0.00 0.38 Australian Exp Time (s) 149.92 154.09 142.43 153.18 (14) % Bin Rem 0.00 11.00 12.75 16.00 Heart-Statlog Exp Time (s) 69.50 73.63 61.70 58.61 (13) % Bin Rem 0.00 1.00 1.50 3.50 Glass Exp Time (s) 38.99 53.65 42.44 49.58 (9) % Bin Rem 1.00 1.00 1.00 1.13 Next, w e discuss the results for 4-lay ers NNs from T able 3. The Australian and Heart-Statlog datasets show the most substantial benefits from slicing. In the Australian dataset, explanation times improv e consistently as more slices are added, culminating in a remark able 37.20% reduction with three slices. This aligns with the progressive increase in binary v ariable remo v al, which reac hes 23.38% for three slices. Similarly , the Heart-Statlog dataset b enefits across all slicing configurations, with explanation time reductions exceeding 25% for one slice and reaching 40.46% with three slices. These results highlight the p oten tial of slicing to enhance efficiency in explanation tasks, esp ecially for larger NNs. Con versely , the Auto dataset presen ts a case where slicing consistently increases explanation time, and no binary v ariables are remov ed at any slicing lev el. This reinforces the observ ation that, for this dataset, slicing introduces computational o verhead without pro viding meaningful simplifications. Similarly , the Hepati- tis and Glass datasets exhibit low binary v ariable remo v al (at most 1.50% and 2.00%, resp ectiv ely), and their explanation time reductions are inconsistent. The Hepatitis dataset b enefits sligh tly from one slice (6.28% reduction) but deteri- orates with additional slices. The Glass dataset shows a notable impro vemen t only with three slices (21.39% reduction). 14 Queiroz et al. T able 3. Explanation time (in seconds) and av erage % of binary v ariables remov ed by n umber of slices for 4-lay ers NNs. The v alues in b old represent the slices that achiev ed a reduction in explanation time compared to 0 slices. Dataset Metric 0 slices 1 slice 2 slices 3 slices Auto Exp Time (s) 387.34 454.78 466.26 460.04 (25) % Bin Rem 0.00 0.00 0.00 0.00 Hepatitis Exp Time (s) 463.45 434.36 510.26 625.97 (19) % Bin Rem 1.00 1.00 1.50 1.50 Australian Exp Time (s) 6156.11 6017.00 5195.69 3867.26 (14) % Bin Rem 1.00 15.50 19.00 23.38 Heart-Statlog Exp Time (s) 3317.58 2457.75 2523.63 1974.47 (13) % Bin Rem 3.00 3.00 3.50 4.75 Glass Exp Time (s) 217.89 253.87 233.12 171.31 (9) % Bin Rem 1.00 1.00 1.50 2.00 Ov erall, the results suggest that, for deep er net works, the effectiveness of slicing is strongly influenced by the extent of binary v ariable remov al. When this remo v al is substan tial, as in the Australian and Heart-Statlog datasets, slicing tends to yield consistent efficiency gains. Ho wev er, when the n umber of binary v ariables remov ed is minimal or nonexisten t, slicing may introduce o verhead that out weighs its potential benefits. A dditionally , the relationship betw een binary v ariable remo v al and expla- nation time impro vemen ts appears to b e more dataset-dep enden t than strictly related to NN depth or data dimensionality . F or instance, the Auto and Glass datasets b oth exhibit minimal binary v ariable remov al at any slicing lev el. These datasets ha v e significan tly differen t dimensionalities (25 and 9, respectively), suggesting that the impact of slicing is not solely determined by the n umber of input features, but rather b y dataset-sp ecific characteristics or features selected for slicing. Certain features may b etter capture meaningful structure in the data, leading to greater simplification and more efficien t explanations. 6 Conclusions In this pap er, we prop ose using domain slicing to enhance the scalability of logic- based XAI for NNs. Our approach partitions the input domain into smaller sub- domains, allo wing simplification of logical constrain ts. Exp erimen ts show that domain slicing can reduce explanation time b y ov er 40%. Notably , the benefits of slicing are more pronounced in cases where binary v ariable remov al b ecomes more effectiv e. This impro vemen t suggests that mo dest slicing can enhance effi- ciency without compromising correctness. Moreo ver, the relationship b et ween explanation time impro vemen ts and bi- nary v ariable remov al app ears to dep end more on dataset-sp ecific characteristics than on NN depth or input dimensionalit y . This also suggests that the features selected for slicing can play a key role, highlighting the imp ortance of feature selection in the slicing pro cess. F uture w ork may explore slicing strategies that adapt to dataset characteristics and net work prop erties, as well as tec hniques for automatically iden tifying the most effective features for slicing. Slice and Explain 15 A ckno wledgmen ts. This w ork w as partially funded b y Co ordenação de Aperfeiçoa- men to de Pessoal de Nív el Sup erior (CAPES). References [1] Alghoul, A., Al Ajrami, S., Al Jarousha, G., Harb, G., Abu-Naser, S.S.: Email classification using artificial neural net work. IJAER 2 (11) (2018) [2] Amparore, E., P erotti, A., Ba jardi, P .: T o trust or not to trust an expla- nation: using LEAF to ev aluate lo cal linear XAI metho ds. PeerJ Computer Science 7 (2021) [3] Audemard, G., Bellart, S., Bounia, L., Koric he, F., Lagniez, J.M., Mar- quis, P .: On preferred ab ductiv e explanations for decision trees and random forests. In: 31st IJCAI (2022) [4] Bassan, S., Katz, G.: T ow ards formal XAI: F ormally approximate minimal explanations of neural net works. In: T A CAS (2023) [5] Bénic hou, M., Gauthier, J.M., Giro det, P ., Hentges, G., Ribière, G., Vin- cen t, O.: Experiments in mixed-in teger linear programming. Mathematical Programming 1 , 76–94 (1971) [6] Bjørner, K., Judson, S., Cano, F., Goldman, D., Sho emak er, N., Pisk ac, R., Könighofer, B.: F ormal XAI via syntax-guided syn thesis. In: 1st AISoLA, pp. 119–137 (2023) [7] Elb oher, Y.Y., Gottschlic h, J., Katz, G.: An abstraction-based framework for neural net work v erification. In: 32nd CA V (2020) [8] Go odfellow, I., Bengio, Y., Courville, A.: Deep Learning. MIT Press (2016) [9] Ignatiev, A., Naro dytsk a, N., Marques-Silv a, J.: Ab duction-based explana- tions for mac hine learning mo dels. In: 33rd AAAI (2019) [10] K oh, P .W., Liang, P .: Understanding black-box predictions via influence functions. In: 34th ICML (2017) [11] Kro ening, D., Stric hman, O.: Decision pro cedures. Springer (2016) [12] Laha v, O., Katz, G.: Pruning and slicing neural net w orks using formal v er- ification. In: FMCAD (2021) [13] Musleh, M.M., Ala jrami, E., Khalil, A.J., Abu-Nasser, B.S., Barho om, A.M., Naser, S.A.: Predicting liver patients using artificial neural net work. IJAISR 3 (10) (2019) [14] Rib eiro, M.T., Singh, S., Guestrin, C.: “ Why should I trust you?” explaining the predictions of an y classifier. In: 22nd ACM SIGKDD (2016) [15] Rib eiro, M.T., Singh, S., Guestrin, C.: Anc hors: High-precision model- agnostic explanations. In: 32nd AAAI (2018) [16] Ro c ha Filho, F.M., da Rocha Neto, A.R., Ro c ha, T.A.: Generalizing logic- based explanations for machine learning classifiers via optimization. Exp ert Systems with Applications 289 (2025) [17] Shih, A., Choi, A., Darwiche, A.: A symbolic approach to explaining ba yesian net work classifiers. In: 27th IJCAI (2018) [18] W ang, E., Khosravi, P ., V an den Bro ec k, G.: Probabilistic sufficient expla- nations. In: 30th IJCAI (2021)

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment