PASTA: A Modular Program Analysis Tool Framework for Accelerators

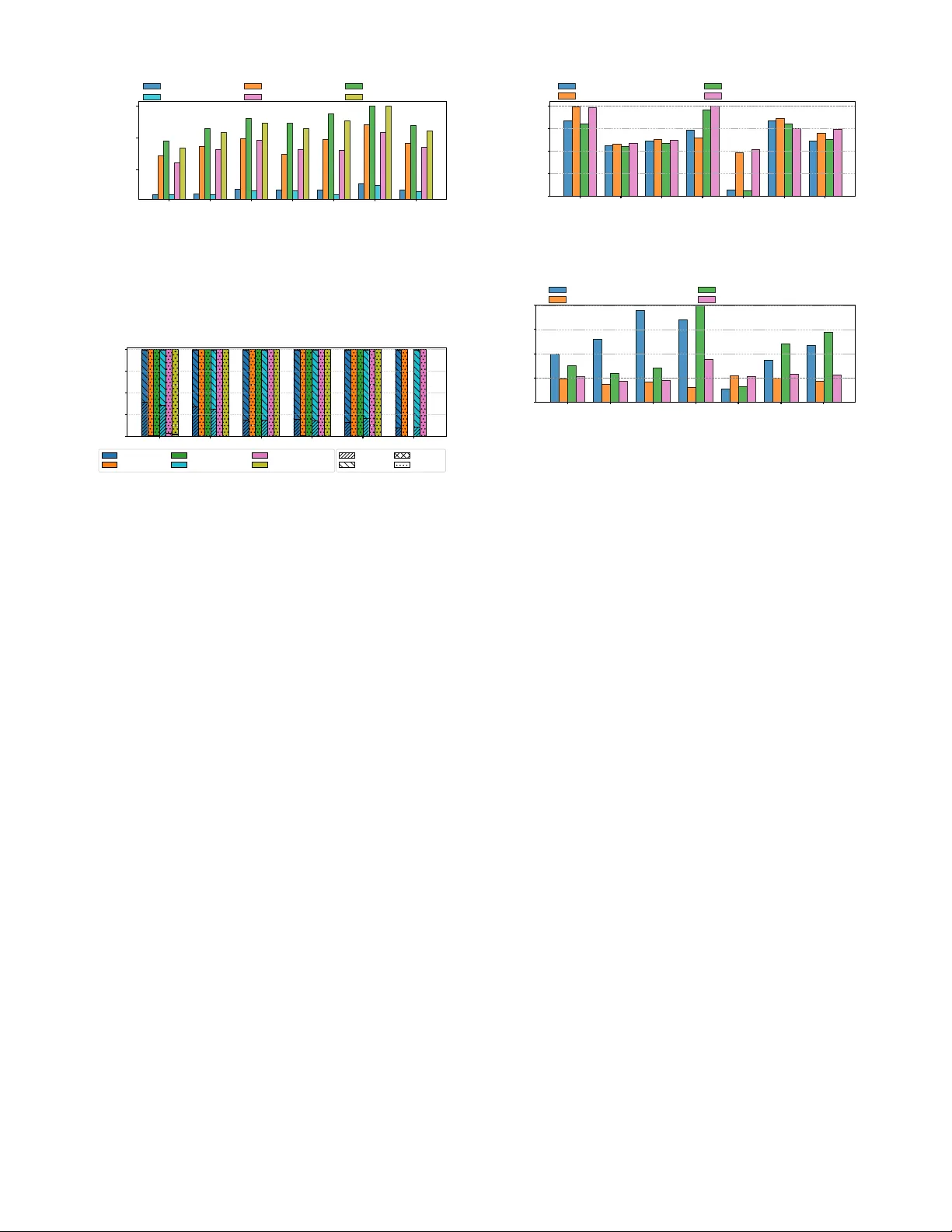

The increasing complexity and diversity of hardware accelerators in modern computing systems demand flexible, low-overhead program analysis tools. We present PASTA, a low-overhead and modular Program AnalysiS Tool Framework for Accelerators. PASTA ab…

Authors: Mao Lin, Hyeran Jeon, Keren Zhou