Understanding Artificial Theory of Mind: Perturbed Tasks and Reasoning in Large Language Models

Theory of Mind (ToM) refers to an agent's ability to model the internal states of others. Contributing to the debate over whether large language models (LLMs) exhibit genuine ToM capabilities, our study investigates their ToM robustness using perturbation…

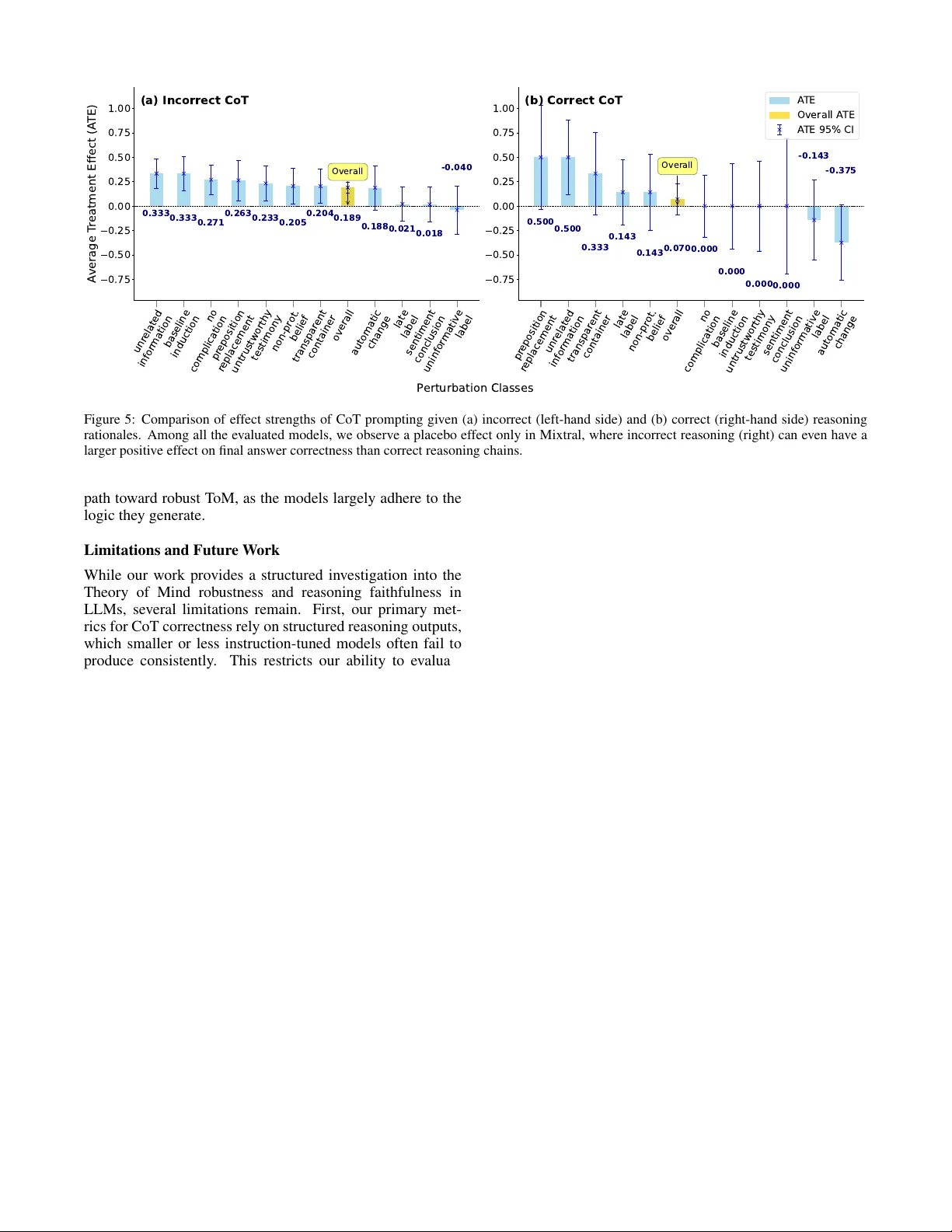

Authors: Christian Nickel, Laura Schrewe, Florian Mai