Adaptive Penalized Doubly Robust Regression for Longitudinal Data

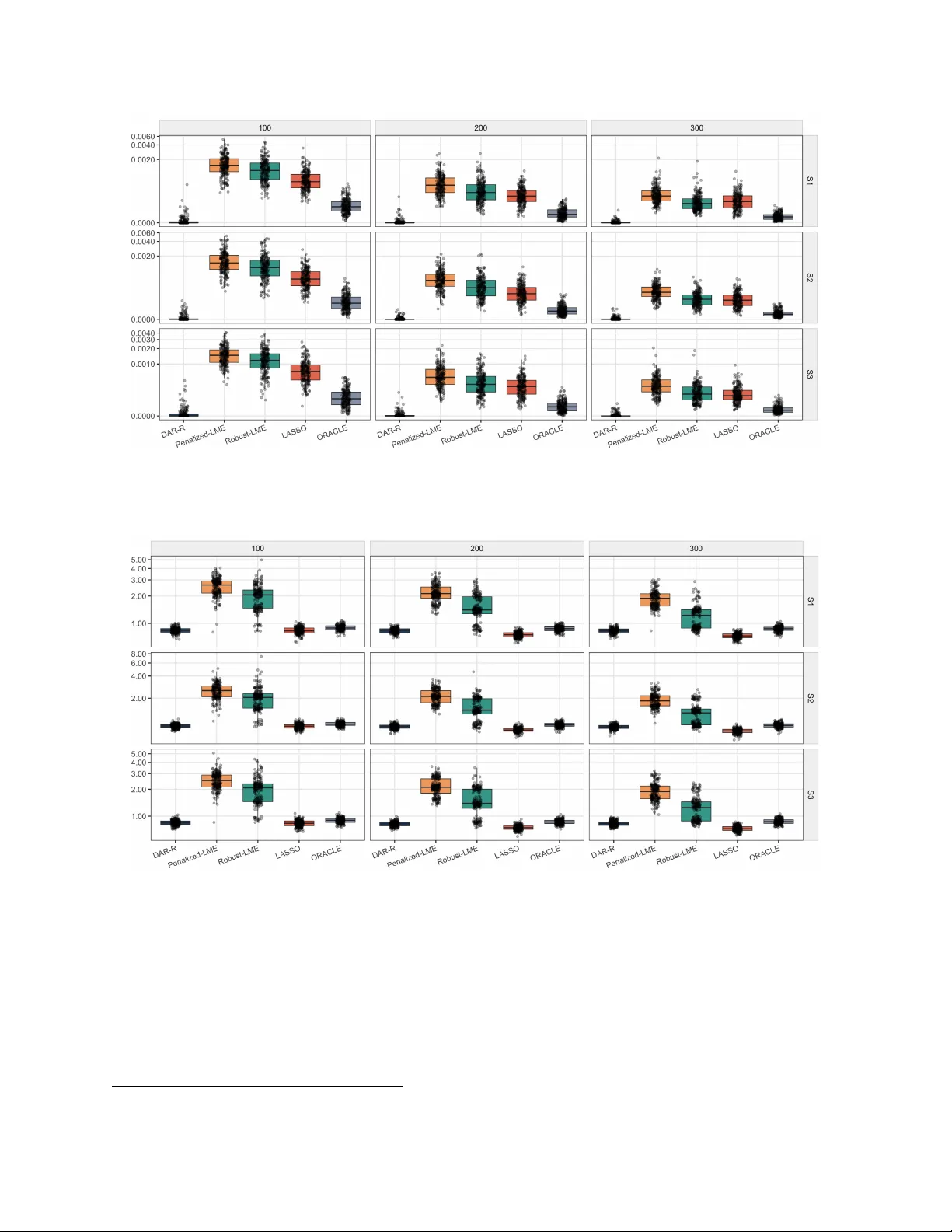

Longitudinal data often involve heterogeneity, sparse signals, and contamination from response outliers or high-leverage observations, especially in biomedical science. Existing methods usually address only part of this problem, either emphasizing pen…

Authors: Yuyao Wang, Yu Lu, Tianni Zhang