AkiraRust: Re-thinking LLM-aided Rust Repair Using a Feedback-guided Thinking Switch

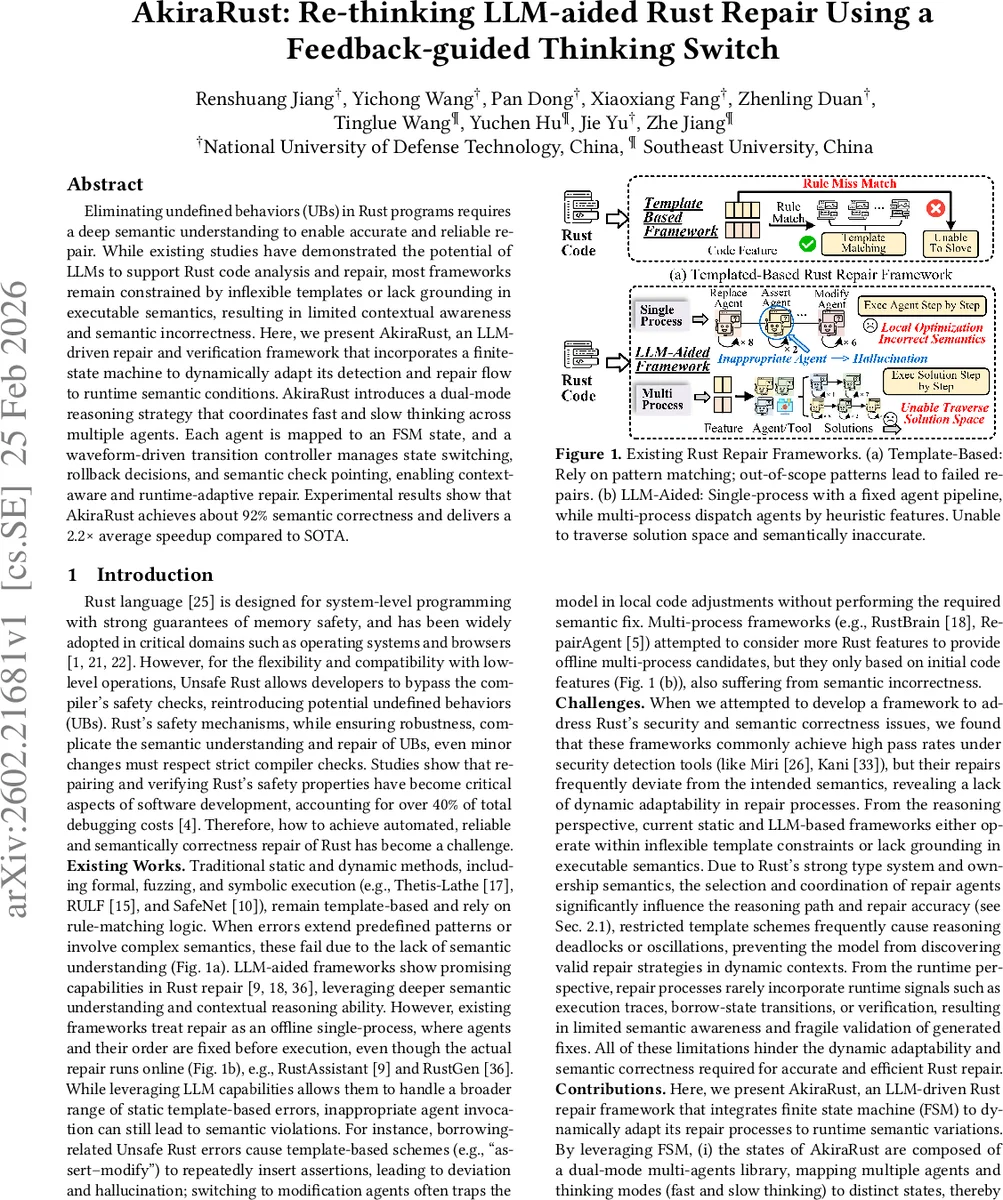

Eliminating undefined behaviors (UBs) in Rust programs requires a deep semantic understanding to enable accurate and reliable repair. While existing studies have demonstrated the potential of LLMs to support Rust code analysis and repair, most frameworks remain constrained by inflexible templates or lack grounding in executable semantics, resulting in limited contextual awareness and semantically incorrect repairs. Here, we present AkiraRust, an LLM-driven repair and verification framework that incorporates a finite-state machine (FSM) to dynamically adapt its detection and repair flow to runtime semantic conditions. AkiraRust introduces a dual-mode reasoning strategy that coordinates fast and slow thinking across multiple agents. Each agent is mapped to an FSM state, and a waveform-driven transition controller manages state switching, rollback decisions, and semantic checkpointing, enabling context-aware and runtime-adaptive repair. Experimental results show that AkiraRust achieves about 92% semantic correctness and delivers a 2.2x average speedup compared to state-of-the-art (SOTA) approaches.

💡 Research Summary

AkiraRust introduces a novel framework for automatically repairing unsafe Rust code by tightly integrating large language models (LLMs) with a finite‑state‑machine (FSM) driven runtime adaptation mechanism. The authors begin by highlighting the shortcomings of existing approaches: static template‑based tools lack semantic depth, while current LLM‑assisted systems typically operate as a single, fixed pipeline of agents, which leads to semantic hallucinations and poor handling of complex ownership or lifetime violations.

The core contribution of AkiraRust is threefold. First, an FSM is used to model the entire repair workflow. Each state encodes a specific combination of an LLM‑based repair agent (e.g., assertion insertion, code modification, safe‑Rust replacement, knowledge‑base query) and a reasoning mode (fast or slow thinking). Transitions are governed by a “waveform‑driven” controller that monitors a continuously updated code‑incorrectness score—derived from the number of undefined behaviors (UBs) reported by tools such as Miri, semantic consistency checks, and structural soundness metrics. When the score exceeds a predefined threshold, the controller triggers a rollback to the last known good version, thereby preventing the propagation of erroneous patches.
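The control loop described above can be sketched in a few lines of Rust. This is a minimal illustration under stated assumptions, not the authors' implementation: the type names (`Agent`, `Mode`, `State`, `Controller`), the score weights, and the rule that a rollback escalates to slow thinking are all hypothetical. Only the general shape (states pairing an agent with a mode, a tracked incorrectness score, rollback past a threshold) comes from the summary.

```rust
/// Repair agents named in the summary; the variant names are illustrative.
#[derive(Debug, Clone, Copy, PartialEq)]
enum Agent {
    AssertionInsertion,
    CodeModification,
    SafeRustReplacement,
    KnowledgeBaseQuery,
}

#[derive(Debug, Clone, Copy, PartialEq)]
enum Mode {
    Fast,
    Slow,
}

/// One FSM state: which agent runs, and in which reasoning mode.
#[derive(Debug, Clone, Copy, PartialEq)]
struct State {
    agent: Agent,
    mode: Mode,
}

#[derive(Debug, Clone, Copy, PartialEq)]
enum Decision {
    Accept,
    Rollback,
}

/// Combine the three signals into one score.
/// ASSUMPTION: the weights 1.0 / 0.5 / 0.25 are invented for this sketch.
fn incorrectness_score(ub_count: u32, semantic_failures: u32, structural_issues: u32) -> f64 {
    f64::from(ub_count) + 0.5 * f64::from(semantic_failures) + 0.25 * f64::from(structural_issues)
}

/// Tracks the score history (the "waveform") and the last known good code.
struct Controller {
    threshold: f64,
    last_good: String,
    waveform: Vec<f64>,
}

impl Controller {
    fn new(initial_code: &str, threshold: f64) -> Self {
        Controller {
            threshold,
            last_good: initial_code.to_string(),
            waveform: Vec::new(),
        }
    }

    /// Record a candidate's score; keep it as the new checkpoint only if the
    /// score stays at or below the threshold, otherwise signal a rollback.
    fn observe(&mut self, candidate: &str, score: f64) -> Decision {
        self.waveform.push(score);
        if score > self.threshold {
            Decision::Rollback
        } else {
            self.last_good = candidate.to_string();
            Decision::Accept
        }
    }

    /// The code the next agent should start from.
    fn current(&self) -> &str {
        &self.last_good
    }

    fn waveform(&self) -> &[f64] {
        &self.waveform
    }
}

/// ASSUMPTION: after a rollback the same agent retries in slow mode.
fn next_state(state: State, decision: Decision) -> State {
    match decision {
        Decision::Accept => state,
        Decision::Rollback => State { agent: state.agent, mode: Mode::Slow },
    }
}
```

In this sketch a rejected patch never replaces the checkpoint, so a later agent always starts from code that passed the score check.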

Second, the framework distinguishes between fast and slow thinking. Fast‑thinking agents generate lightweight, heuristic‑driven patches quickly, suitable for simple defects (complexity level 1–2). Slow‑thinking agents perform deeper semantic analysis, iterating over ownership, lifetimes, and type constraints, which is essential for more intricate bugs (complexity level 3–5). Empirical evaluation on 100 real‑world Rust defects shows that fast thinking attains a 92 % success rate on simple bugs with minimal latency, whereas slow thinking, though slower, dramatically improves accuracy on complex cases, eliminating the need for multiple fast‑thinking iterations that would otherwise cause a three‑fold overhead.
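The mode split by complexity level can be written as a small dispatch function. The sketch below assumes that complexity is available as an integer from 1 to 5, as the summary's level ranges suggest; the names `ThinkingMode` and `select_mode` are hypothetical, and how AkiraRust actually estimates complexity is not described here.

```rust
#[derive(Debug, Clone, Copy, PartialEq)]
enum ThinkingMode {
    Fast,
    Slow,
}

/// Map a defect complexity level to a reasoning mode.
/// Levels 1-2 go to fast thinking and 3-5 to slow thinking, following the
/// ranges quoted in the summary; anything else is rejected with `None`.
fn select_mode(complexity: u8) -> Option<ThinkingMode> {
    match complexity {
        1..=2 => Some(ThinkingMode::Fast),
        3..=5 => Some(ThinkingMode::Slow),
        _ => None,
    }
}
```

Returning `Option` instead of defaulting keeps an out-of-range level from silently falling into one of the two modes.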

Third, the authors address LLM hallucination by introducing a temperature‑controlled experimental setup. High temperature (0.7) increases exploration, raising raw success rates but also inflating hallucination scores. Adding the rollback mechanism under high temperature dramatically reduces hallucination (score approaches zero) while preserving a high success rate (≈90 %). This demonstrates that controlled exploration combined with verification can harness the creative potential of LLMs without sacrificing safety.

The experimental section evaluates AkiraRust on the Miri benchmark suite, comparing it against state‑of‑the‑art tools such as RustBrain, RustAssistant, and RustGen. AkiraRust achieves approximately 92 % semantic correctness and a 2.2× speedup on average. Notably, it successfully repairs complex UB patterns like dangling pointers and atomic access violations that prior tools miss or mishandle.
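To make the dangling-pointer pattern concrete, here is a small hand-written example. It is not taken from the benchmark or from AkiraRust's output: `dangling_ptr` and `owned_value` are hypothetical names, and the repair shown (returning an owned `Box`) is just one plausible safe-Rust replacement.

```rust
/// Returns a raw pointer to a local variable. Creating the pointer is allowed
/// in safe Rust, but the local is gone once the function returns, so the
/// pointer dangles.
fn dangling_ptr() -> *const i32 {
    let x = 42;
    &x as *const i32
}

// Dereferencing that pointer would be undefined behavior, the kind of access
// Miri is designed to report. It is left commented out on purpose:
//
//     let value = unsafe { *dangling_ptr() };

/// One possible safe replacement: hand ownership of the value to the caller,
/// so the data lives as long as the returned `Box` does.
fn owned_value() -> Box<i32> {
    Box::new(42)
}
```

The repaired version needs no `unsafe` block at the call site, so the borrow checker rather than a runtime tool guards the access.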

Key insights distilled from the study are: (1) aligning repair agents with specific defect features dramatically improves accuracy; (2) adaptive switching between fast and slow reasoning based on defect complexity balances efficiency and correctness; (3) hallucination is not merely noise but a source of exploratory power that can be tamed through verification‑driven rollbacks.

The paper acknowledges limitations: the FSM’s state space and transition rules are manually crafted, and scalability to large, multi‑module codebases remains to be proven. Future work is proposed on automated FSM synthesis, tighter integration with static analysis pipelines, and extending the approach to other systems languages such as C++ or Go.

In summary, AkiraRust presents a compelling paradigm for LLM‑assisted program repair in safety‑critical languages. By marrying a formal FSM control loop with dual‑mode reasoning and a verification‑backed rollback strategy, it demonstrates that large language models can be safely leveraged to produce semantically sound patches for Rust’s stringent safety guarantees.
