BARREL: Boundary-Aware Reasoning for Factual and Reliable LRMs

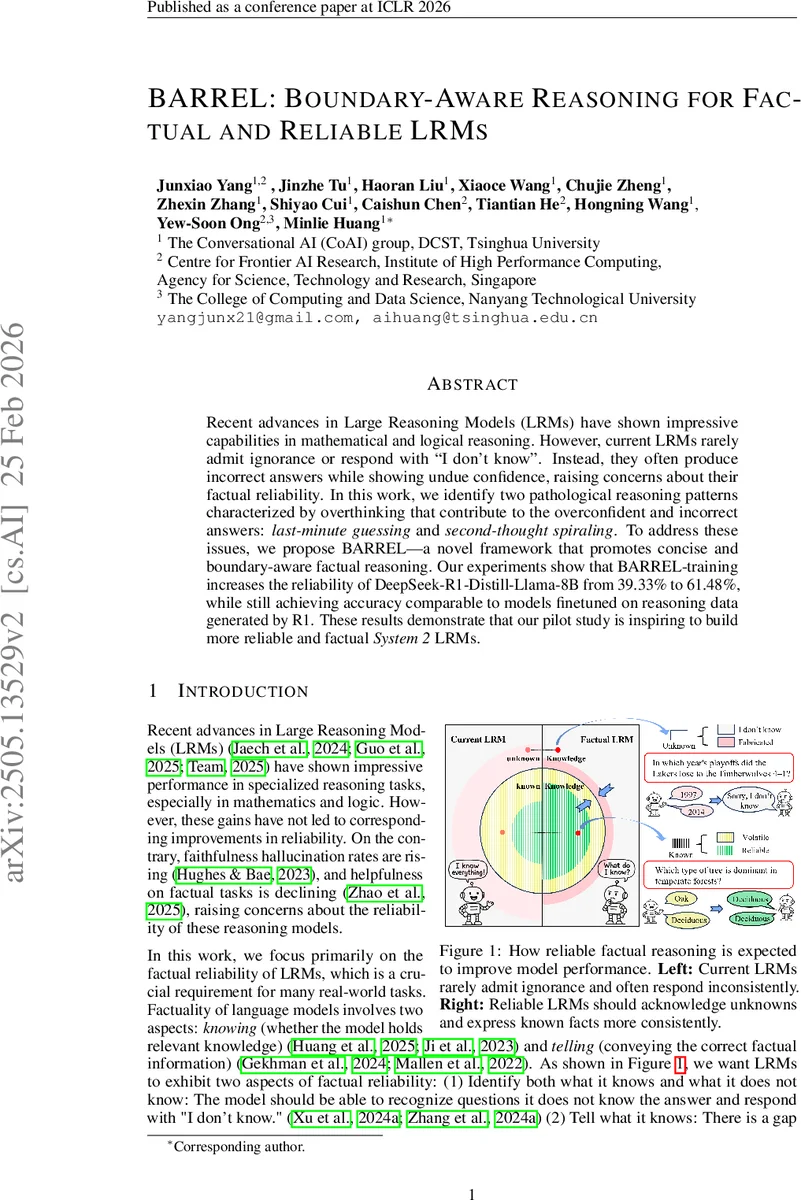

Recent advances in Large Reasoning Models (LRMs) have shown impressive capabilities in mathematical and logical reasoning. However, current LRMs rarely admit ignorance or respond with “I don’t know”. Instead, they often produce incorrect answers while showing undue confidence, raising concerns about their factual reliability. In this work, we identify two pathological reasoning patterns characterized by overthinking that contribute to the overconfident and incorrect answers: last-minute guessing and second-thought spiraling. To address these issues, we propose BARREL-a novel framework that promotes concise and boundary-aware factual reasoning. Our experiments show that BARREL-training increases the reliability of DeepSeek-R1-Distill-Llama-8B from 39.33% to 61.48%, while still achieving accuracy comparable to models finetuned on reasoning data generated by R1. These results demonstrate that our pilot study is inspiring to build more reliable and factual System 2 LRMs.

💡 Research Summary

Large Reasoning Models (LRMs) have achieved impressive performance on mathematical and logical tasks, yet their factual reliability remains poor: they rarely admit ignorance and often produce confident but incorrect answers. This paper identifies a “factual overthinking” phenomenon—models consume more tokens when they are wrong than when they are right—and isolates two pathological reasoning patterns that drive it. Last‑minute Guessing occurs when a model, after extensive but inconclusive reasoning, abruptly commits to an answer in a final burst, typically on questions it does not truly know. Second‑thought Spiraling happens when a model initially finds the correct answer but continues to over‑analyze, eventually overturning its own correct conclusion. Both patterns lead to over‑generation of tokens and reduced factual accuracy.

To remedy these issues, the authors propose BARREL (Boundary‑Aware Reasoning for Reliable and Factual LRMs), a three‑stage training pipeline.

- Knowledge Labeling: Using a sampling‑based probing strategy, each question is labeled as known or unknown for the target model. The method samples K prompts and L repetitions per prompt; if any sampled answer matches the ground‑truth under a strict evaluator, the question is marked known.

- Reasoning Trace Construction for Supervised Fine‑Tuning (SFT): For known questions, BARREL constructs a reasoning trace that first presents the gold answer with strong evidence, then enumerates alternative (weak‑evidence) candidates, and finally reconfirms the correct answer with a confident conclusion. For unknown questions, the trace explores plausible answer‑evidence pairs but, after exhausting reasonable candidates, inserts an uncertainty‑aware refusal such as “Sorry, I don’t know.” These traces are generated automatically by prompting GPT‑4 with detailed instructions, yielding Long‑CoT‑style rationales that encode the desired boundary‑aware behavior. The model is then SFT‑trained to reproduce these traces and the final answer (gold answer for known, refusal for unknown).

- GRPO Stage (Group‑wise Reinforcement Policy Optimization): A rule‑based reward function assigns a high reward (r_c) for correct answers, a medium reward (r_s) for truthful refusals, and a low reward (r_w) for incorrect or hallucinated outputs, ensuring r_c > r_s > r_w. The reward is normalized across a batch, and the policy is updated using the GRPO objective, which incorporates importance‑weight clipping, step‑wise KL regularization, and the normalized advantage. This stage refines the model’s policy to prefer correct answers and honest refusals without requiring explicit known/unknown labels during RL.

Experiments are conducted on three 8‑B‑scale models (DeepSeek‑R1‑Distill‑Llama‑8B, DeepSeek‑R1‑Distill‑Qwen‑7B, Qwen3‑8B) using TriViaQA, SciQ, and NQ‑Open for training, and a 3,000‑question test set sampled from the same sources. Baselines include vanilla in‑context learning (ICL), ICL with refusal examples (ICL‑IDK), a distillation SFT baseline, vanilla GRPO, and two reliability‑enhanced GRPO variants that add verbal confidence extraction or a probing classifier.

Metrics are Accuracy (Acc.), Truthfulness (the proportion of correct answers plus truthful refusals), and Reliability (a weighted combination of Acc. and Truthfulness that penalizes over‑confident errors). BARREL raises reliability from 39.33 % to 61.48 % on DeepSeek‑R1‑Distill‑Llama‑8B, while also improving accuracy from 38.43 % to 40.7 %. Ablation studies show that the medium‑level reward for refusals is crucial: without it, the model rarely generates “I don’t know,” and reliability gains disappear. Qualitative analysis confirms that BARREL reduces both Last‑minute Guessing and Second‑thought Spiraling, leading to shorter, more decisive reasoning traces for known questions and honest uncertainty expressions for unknown ones.

The paper’s contributions are threefold: (1) discovery and formalization of factual overthinking and its two pathological patterns; (2) the first training pipeline that embeds knowledge‑boundary awareness into the reasoning process, enabling LRMs to say “I don’t know” in a principled way; (3) demonstration that intermediate‑level rewards effectively encourage uncertainty‑aware refusals, addressing a fundamental flaw in existing RL‑based LRM training where refusals receive no incentive. BARREL thus offers a practical roadmap for building System 2‑style reasoning models that are both factually accurate and reliably calibrated.

Comments & Academic Discussion

Loading comments...

Leave a Comment