Efficient Online Learning in Interacting Particle Systems

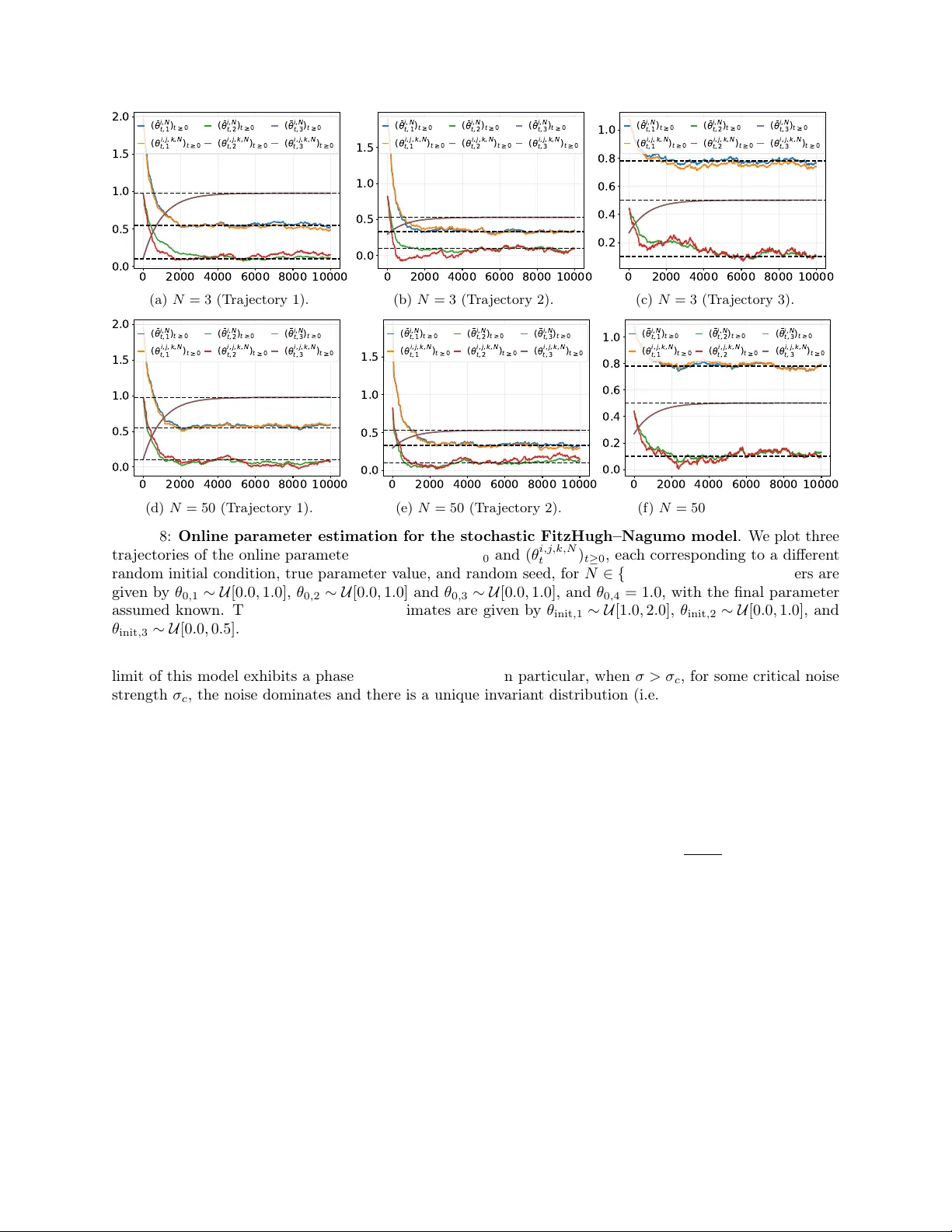

We introduce a new method for online parameter estimation in stochastic interacting particle systems, based on continuous observation of a small number of particles from the system. Our method recursively updates the model parameters using a stochast…

Authors: Louis Sharrock, Nikolas Kantas, Grigorios A. Pavliotis