On the Convergence of Stochastic Gradient Descent with Perturbed Forward-Backward Passes

We study stochastic gradient descent (SGD) for composite optimization problems in which $N$ sequential operators are subject to perturbations in both the forward and backward passes. Unlike classical analyses that treat gradient noise as additive and localiz…

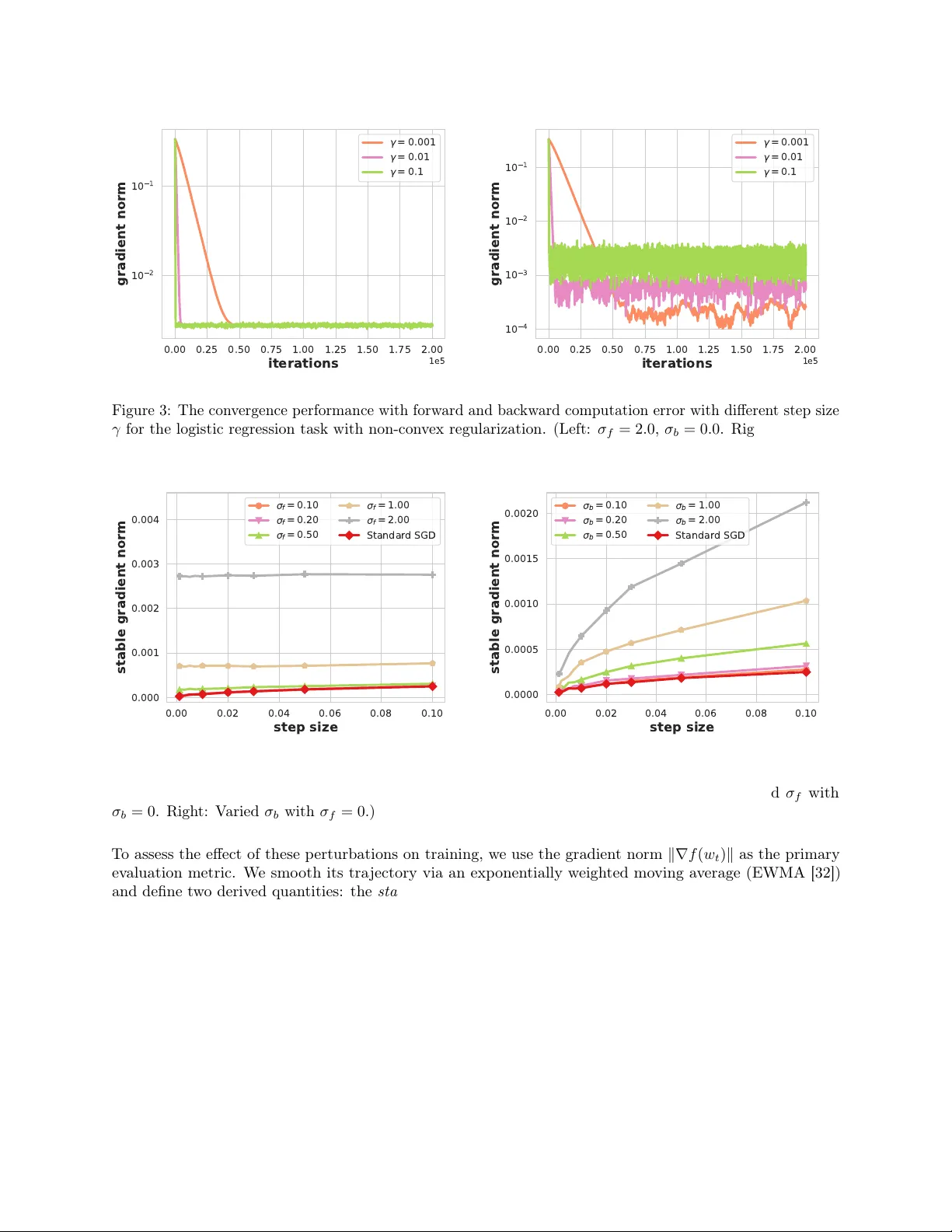

Authors: Boao Kong, Hengrui Zhang, Kun Yuan