Posterior Mode Guided Dimension Reduction for Bayesian Model Averaging in Heavy-Tailed Linear Regression

For large model spaces, the potential entrapment of Markov chain Monte Carlo (MCMC) based methods with spike-and-slab priors poses significant challenges in posterior computation in regression models. On the other hand, maximum a posteriori (MAP) est…

Authors: Shamriddha De, Joyee Ghosh

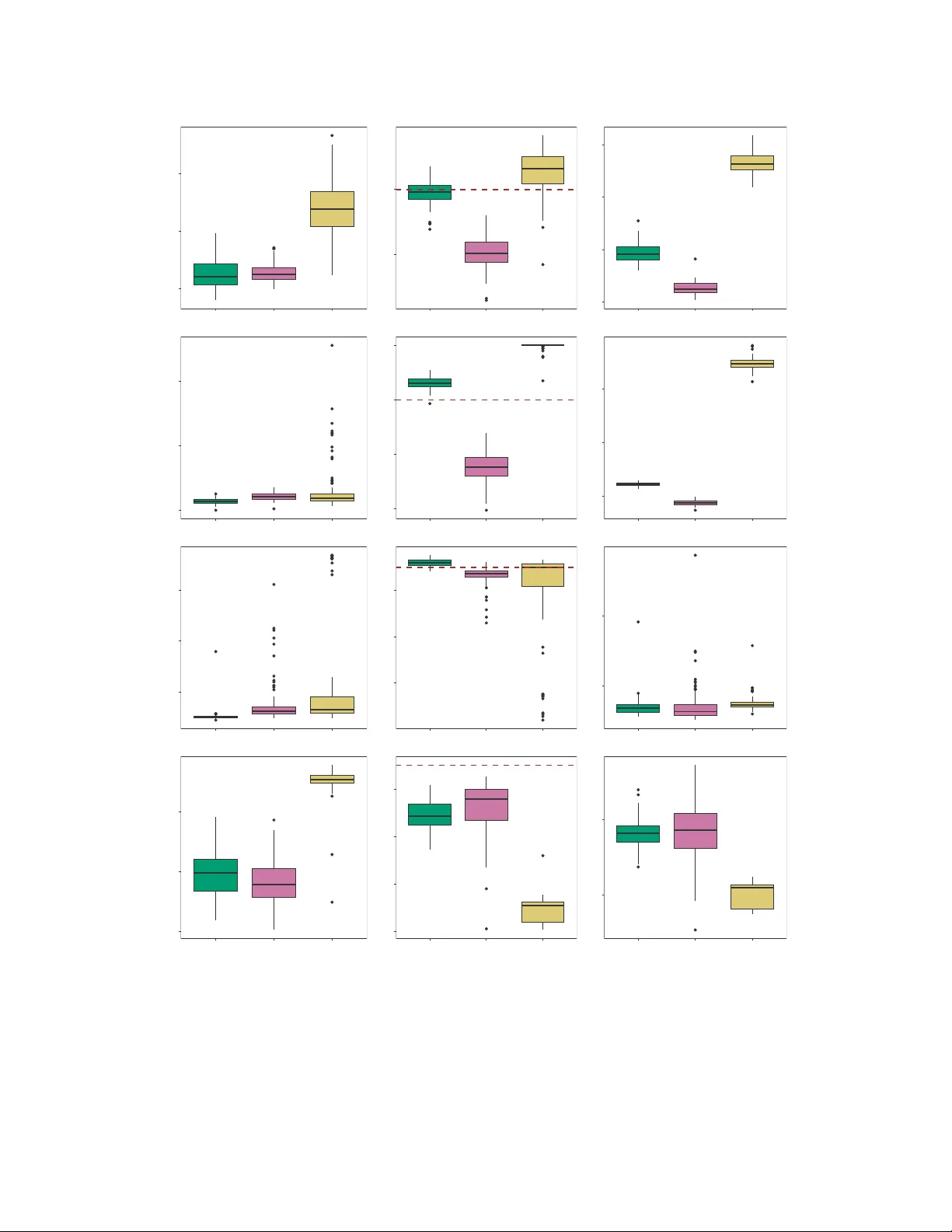

P osterior Mode Guided Dimension Reduction f or Baye sian Model A v eraging in Hea vy-T ailed Linear Regr ession Shamriddha De * and Joyee Ghosh † Abstract For lar ge model spaces, the potential entrap ment of Mark ov chain Monte C arlo (MCMC) based methods with spik e-and-sla b priors poses significa nt challe nges in poster ior comput ation in re- gressi on models. O n the other hand, max imum a po steriori (MA P) estimation , which is a more computa tionally viable alternat iv e, fails to provi de uncert ainty quant ification. T o addres s thes e proble ms si multaneous ly and efficien tly , th is paper proposes a hybrid method that blend s MAP estimatio n with MCMC-based stochas tic search algorithms w ithin a heav y-tailed error frame- work. Under hyperb olic errors, the current wo rk dev elops a two-step expe ctation con ditional maximizati on (ECM) guided MCMC algorithm. In the first step, we conduct an ECM-based poster ior maximizati on and perform variab le selecti on, thereby ide ntifying a redu ced model space in a high posterio r probab ility region . In the secon d step, we e xecut e a Gibbs sampler on the red uced model space for po sterior computat ion. S uch a method is ex pected to impro ve the ef ficiency of posterior computa tion an d enhanc e its inferentia l richn ess. Through simu lation studie s and b enchmark real life exa mples, our pro posed method is sho wn to exh ibit se vera l adv antage s in var iable select ion and uncer tainty quantificatio n o ver vario us state-of-t he-art methods . Ke ywords: Baye sian v ariable se lection, Expectatio n Conditional Maximizat ion (ECM), H y- perbol ic distrib ution, Marko v chain Mo nte Carlo ( MCMC), Maximum a poster iori ( MAP ) estimatio n, Spike- and-slab priors. 1 Introduction The e xtensive literature o n variable selection in linear regression m o d els s pans a broad range of modeling techniques, ranging from the classi cal formulation s based on normal errors to m o re re- cent approaches that em p hasize robustness to departures from normali ty . From a Bayesian p oint * PhD Can d idate, Departm e n t of Statistics an d Actuaria l Science, T he University o f Iowa, Iow a City , USA † Associate Pro fessor , Departm ent o f Statistics and Actuarial Science, The Univ ersity of Io wa, Iow a City , USA 1 of vie w , the problem of v ariable selection is often addressed by putting s p i ke-and-slab priors on the regression coefficients. Se veral authors , in cl u ding Geor ge and McCulloch ( 1993 , 1997 ); Gramacy and Pantaleo ( 20 10 ); Hans ( 2010 , 2011 ); Clyde et al. ( 2011 ); Ghosh and Clyde ( 2011 ); Ghosh and Ghattas ( 2015 ); Zanella and Roberts ( 2019 ); Nie and Ro ˇ ckov ´ a ( 2023a ), ha ve uti lized such priors to incorporate model uncertainty un d er normal error mod els. Spike-and-slab prior formulations hav e also been adopted for Bayesian variable selection and m odel a veraging in li n- ear regression models with hea vy-tailed errors ( Gramacy and Pantaleo , 2010 ; R en et al. , 2023 ; Chakraborty et al. , 2 025 ; De and Ghosh , 2 0 26 ). Under spike-and-slab pri o rs , the size of the model s pace gro ws e xponent i ally with the num - ber of p redictors. Thus, e ven a modest increase in the num ber of predictors results in a l ar ge model space, whos e posterior exploration with Markov chain Mont e Carlo (MCMC) is compu- tationally daunting. Owing to the m ultimod al structure of t he posterior distribution, standard MCMC algorithm s are susceptibl e to entrapment at local m odes. Rather than full posterio r ex- ploration, a more viable alternative is t o focus o n t he restri cted go al of pos terior mode estim a- tion, com monly referre d to as maximum a post eriori (MAP) estimation. The av ail ability of non- stochastic maxi mization techniques like the expec tation maximization (EM ) algorit hm and i t s vari- ants ( Dempster et al. , 1977 ; Meng and Rubi n , 1993 ; W ei and T anner , 1990 ; Ruth , 2024 ) has further inspired many authors t o explore and im p lement ef ficient MAP-based techniques. In particular , Ro ˇ ckov ´ a and G eorge ( 2014 ) proposed the expectation maximization variable selection (EMVS) al- gorithm with continuou s s pike-and-slab priors in a normal error m o del. For the normal likelihood, Ro ˇ ckov ´ a and G eorge ( 2018 ) later proposed the spike-and-slab lasso by combi ning spike-and-slab priors with the Bayesian lasso u s ing Laplace priors ( Park and Casella , 2008 ) on the regression co- ef ficients. A more rob ust v ersion of the spike-and-slab lasso with asymmetric Laplace errors w as recently developed by Liu et al. ( 2024 ) throu g h the spike-and-slab quantil e lasso (SSQLASSO). Despite a s ignificant comp utational gain over MCMC algorith ms, determin istic MAP-based methods in the literature have primarily focused on coefficient estimat i on and var iable selection. They l ack uncertainty qu antification for tail hea viness estimation, which is a core objectiv e of pos- 2 terior sampl i ng via MCMC met h ods. In o ther words, neither MCMC nor MAP-based methods can typically provide a st and -alone pathway to all the aforementioned goals in h i gh-dimensional regression m odels with p o tentially hea vy-tailed errors. Motivated by this lim i tation, we try to si- multaneously address bot h comp utational efficienc y and inferential richness within a flexible m od- eling framew ork by blending MAP estim ati on with MCMC-based stochastic search algorithms. For this purpose, we model t he errors usin g the hyperbolic distribution and p ropose a two-step expectation conditional maximization (ECM) algorithm ( Meng and Rubin , 1993 ) guided MCMC frame work. The hyperbolic error model (HEM) ensures fle xibility in tail h ea viness by typically var ying its s hape parameter η . In th e first s tep of our proposed method, for a suitably chosen degree of tai l hea v iness (fixed value of η ), we cond uct an ECM-based p osterior maximization and perform variable selection usin g spi ke-and-slab pri ors on the regression coef ficients. The tail hea viness parameter η is treated as fixed at this stage, as ECM-based estim ation of t ail hea viness is o ften inaccurate. This approach efficiently identifies a reduced m o del space in a hi g h pos t erior density re gion, based on the chosen predictors. The s econd step is inspired by a special case of the stochastic search v ariable selection approach of De and Ghosh ( 2026 ). More specifically , in the second st ep, we im p lement a Gibbs samp l er with a Metropolis-Hasti n g step on t he reduced model space, and perform post erior inference for tail thi ckn ess b y puttin g a prior on η . W e use the shorthand GECM-HEM to denote our propos ed ECM guided Gib b s (G) sampling algorithm for the h y perbolic error model (HEM). The rest of t he article is organized as follows. In Section 2 , we introduce our proposed two-step GECM-HEM algorithm. In Section 3 , we carry out a simu lation study to compare the performance of our proposed method w i th the stochasti c search HEM ( De and Ghosh , 2026 ), as well as wi th two other s t ate-of-the-art MAP esti mation t echniques. W e analyze three real life datasets in Section 4 . Concluding remarks and possible directions for future work are stated in Section 5 . The proof of a propositi on pertain i ng to the normal scale m ixture representation of the hyperbolic dist ribution is out lined in Appendix A . Some addi tional results for one of the real datasets (Am es Housing dataset) are reported in Appendix B . For clarity on parameterization, the p robability densit y func- 3 tions of som e distributions used in the article are provided in Appendix C . 2 The GECM A pproach T owards the Hyperb olic Err or Model W e begin this section by briefly revisiting the hyperbolic error m odel (HEM) ( De and Ghosh , 202 6 ) implemented with MCMC, which serves as a precursor to our proposed m ethod. Thereafter , we de- scribe our proposed technique and di s cuss the suggested choices for the relev ant hyperparameters in detail. 2.1 Re view of the Stochast ic Sear ch Hyperbolic Err or Model T o induce flexibility in tail hea viness, De and Ghosh ( 2026 ) prop osed a mixture of the hyperbolic and th e Student- t distributions for modelin g the errors, and showed that this mixture m odel reduces to the H E M as a s p ecial case. Given the piv otal role of the HEM in the present paper , we re view it at th e outset. Let us cons i der a l inear regression mo del Y = X β + ǫ , (1) with an n × 1 response vec tor Y , an n × p design matrix X of p covariates, β as the p × 1 vector of unknown regression coeffic ients, and ǫ as the n -dimens i onal vec tor of in dependent and identically distributed hyperbolic errors. More precisely , the error distribution has the form f Hyperb olic ( ǫ i | η , ρ 2 ) = 1 2 p η ρ 2 K 1 ( η ) e − { η ( η + ǫ 2 i /ρ 2 ) } 1 / 2 , −∞ < ǫ i < ∞ , i = 1 , 2 , . . . , n, (2) with shape parameter η > 0 and scale parameter ρ 2 > 0 . Includ ing an intercept term in ( 1 ) is redun- dant, since the cov ariates and the response variables are centered and scaled about their respectiv e means and standard deviations. The model uncertainty in ( 1 ) i s in troduced using a p -dimensi onal latent binary vector γ = ( γ 1 , γ 2 , . . . , γ p ) ⊤ , where γ j ∈ { 0 , 1 } , for every j = 1 , 2 , . . . , p , w i th 4 γ j = 0 and γ j = 1 respecti vely signifyin g t he exclusion or inclusion of the j th co variate in the model. For eve ry model γ in the model space Γ = { 0 , 1 } p , we define β γ to be the p γ -dimensional vector of the non-zero components in β , wh ere p γ = P p j =1 γ j , and let X γ denote the matrix of the corresponding colu m ns of t he desig n matrix X . Gneiting ( 1997 ) expressed the hy p erbolic density as a no rm al scale m ixture wit h a general- ized inv erse Gaussi an (GIG) mixing dens ity , which has been utilized by Park and Casella ( 2008 ); De and Ghosh ( 2024 , 2 0 26 ) to form ulate a computation ally ef ficient likelihood for model ( 1 ). The said likelihood, along wi t h the hierarchical prior specification by De and Ghosh ( 2026 ) for t he unknown parameters, are stated below: Y | γ , β , σ ∼ N( X γ β γ , Σ) , σ 2 i | ρ 2 , η ind ∼ GIG(1 , η /ρ 2 , η ρ 2 ) , i = 1 , 2 , . . . , n, β j | γ j , ρ 2 , τ 2 ind ∼ N(0 , ρ 2 τ 2 ) I ( γ j = 1) + δ { 0 } ( β j ) I ( γ j = 0) , j = 1 , 2 , . . . , p, τ 2 ∼ InvGamma( λ τ / 2 , λ τ / 2) , ρ 2 ∼ InvGamma( a ρ , b ρ ) , η ∼ DiscUniform( G η ) , γ j | θ ind ∼ Bernoulli( θ ) , j = 1 , 2 , . . . , p, θ ∼ Beta( c θ , d θ ) , (3) where σ = ( σ 2 1 , σ 2 2 , . . . , σ 2 n ) ⊤ , Σ = diag ( σ 2 1 , σ 2 2 , . . . , σ 2 n ) , λ τ > 0 , a ρ > 0 , b ρ > 0 , c θ > 0 , d θ > 0 , G η is a finite set o f posi tiv e real numbers, δ { 0 } ( · ) represents a point m ass at zer o, and I ( · ) denotes t he indicator function. Armed with these priors and likelihood , De and Ghosh ( 2026 ) deve loped a Gibbs sampl er with a Metrop olis-Hastings step for po s terior computati o n. As far as the prior su pport G η of η is concerned, De and G h osh ( 2024 , 202 6 ) recomm ended G η = { 0 . 05 , 0 . 1 , 0 . 2 , 0 . 3 , . . . , 0 . 9 , 1 , 2 , 5 , 10 , 2 0 , 50 } as a su i table grid encompassing a wide range of tail hea viness. While the stochastic search HEM can be v i e wed as a gold standard for model a veraging in 5 a heavy-tailed frame work, com putational challenges emerge in probl ems in volving large mo d el spaces. The multi modal nature of the posterior distribution over th e models often causes the Gibbs sampler to get trapped in local modes. Escaping such entrapment demands the execution of ex- tremely long chains of the sampler and com promises the computational ef ficiency of the algorithm. Resorting to a simpli fied goal of MAP estimation th rough EM-like algorit hms appears to be a more practical alternative to address the d rawbacks of the s tochastic search HEM. Howe ver , the lack of uncertainty quantification in s uch deterministic techniques highlights t h e continued need for the Gibbs sam p ler . Accordingly , we propose an algo ri t hm that combines the computati o nal ef ficiency of determi nistic MAP esti m ation with the uncertainty quantification provided by stochastic s earch MCMC. 2.2 The ECM Guided Gibbs Samplin g W e dev elop a two-step method that com bines determi n istic MAP estim ation for com putational speed wit h stochastic searc h MCMC for un certaint y quantification, particularly in the tails, thereby lev eraging the complementary st rengths of both the approaches. Our proposed techni q ue begins with an initial le vel of v ariable selectio n based on posterior m ode estim ation via the ECM algo- rithm ( Meng and Rubin , 1 993 ), followed by a Gibbs samp l er that performs a final le vel of v ariable selection on the reduced mod el space obtained from the modal estimates. Step 1: The ECM Step In the first step, we intend to esti m ate the mode of the pos t erior distribution, m ar ginalized over the model uncertainty parameter γ , that is, p ( β , ρ 2 , η , θ, τ 2 , σ | Y ) = P γ p ( γ , β , ρ 2 , η , θ, τ 2 , σ | Y ) . Howe ver , for a lar ge v alue of p , direct maxim ization th rough complete enumeration of all the 2 p models and taking summatio n over them is computational l y infeasible. Therefore, following Ro ˇ ckov ´ a and G eorge ( 2014 ), we consider γ as the “missing data” and maximi ze the condi tional expectation of t he “complete data” log-post erior log p ( γ , β , ρ 2 , η , θ, τ 2 , σ | Y ) . The condition al expectation is taken over γ , gi ven the observed data Y and th e curr ent parameter estim ates. Due to 6 the unav ailability of closed-form expressions for s imultaneous m aximization for all the parameters, the ECM algorithm ( Meng and Rubin , 1993 ) is applied to maxim ize over bl o cks o f parameters conditionally on others. For computational ease in the ECM step, we make a few changes to ( 3 ). A sligh tly modified version of the scale m ixture representation of the hyperbolic distribution by Gneiting ( 1997 ) is indicated in Proposit ion 1 and is proven in Appendix A . Pr oposition 1. Let A and a b e random variables s uch that A | a 2 ∼ N(0 , ρ 2 a 2 ) and a 2 ∼ GIG(1 , η , η ) . Then A is distri buted as Hyp erb olic ( η , ρ 2 ) . The scale mixture representation in Proposition 1 leads to the fol lowing reformulated likelihood for mo d el ( 1 ), whi ch has been depl oyed in the ECM step: Y | β , σ , ρ 2 ∼ N( X β , ρ 2 Σ) , σ 2 i | η ind ∼ GIG(1 , η , η ) , i = 1 , 2 , . . . , n. (4) Moreover , to ensure di f ferentiabilit y of the objective function, we replace the mixture of point mass at zero and a continuous prior (point mass spike-and-slab prior) o n the re gression coeffi cients by a continuous sp ike-and-slab prior . That is, we consid er a mixture of two contin uous distrib ution s as the prio r , where o ne component (spike) is concentrated around zero, whil e the other component (slab) i s m ore diffuse. Such a prior shrinks the coef ficients for the noise va riables to wards zero, but cannot enforce them to be exactly zero. Therefore, the modal estimate of β does not explicitl y drop any co variate from the model . For the re maini n g parameters γ , ρ 2 , τ 2 and θ , we retain the 7 priors in ( 3 ). In a nutshell, the priors used for the ECM st ep are stated below: β j | γ j , ρ 2 , τ 2 ind ∼ (1 − γ j )N(0 , κ 0 ρ 2 τ 2 ) + γ j N(0 , κ 1 ρ 2 τ 2 ) , j = 1 , 2 , . . . , p, τ 2 ∼ InvGamma( λ τ / 2 , λ τ / 2) , ρ 2 ∼ InvGamma( a ρ , b ρ ) , η ∼ DiscUniform( G η ) , γ j | θ ind ∼ Bernoulli( θ ) , j = 1 , 2 , . . . , p, θ ∼ Beta( c θ , d θ ) , (5) where κ 0 > 0 and κ 1 > 0 are respectiv ely cho s en to be s mall and large, corresponding to the spike and t he slab comp onents. The hyperparameters κ 0 and κ 1 control the strengths of t h e re spec- tiv e spike and s lab components. A small er value o f κ 0 indicates a more peaked spike with more concentration around zero, while a larger value of κ 1 signifies a flatter slab . Preliminary analyses of the ECM algorith m re vealed poor estimation of the tail heaviness for modest sam p le size or weak signal strengt h, under the priors in ( 5 ). According ly , instead of max- imizing wi th respect to the shape parameter η ov er it s prior support G η , we fix its v alue at η = 1 , corresponding to a slightly heavier tail than the normal distribution. This strategy tends t o pro- vide some safeguard against the potent ial adversities of variable selecti o n u nder a miss pecified light-tailed mod el. Let E ∗ γ [ · ] denote the conditional e xpectation E γ [ ·| Y , η = 1 , ˆ β , ˆ ρ 2 , ˆ θ , ˆ τ 2 , ˆ σ ] over γ , given Y , η = 1 , and the current estimates ˆ β , ˆ ρ 2 , ˆ θ , ˆ τ 2 and ˆ σ of the remaining p arameters β , ρ 2 , θ , τ 2 and σ respectively . The goal is to maxim i ze the condit ional expectation E ∗ γ [log p ( γ , β , ρ 2 , θ , τ 2 , σ )] , which, using the likelihood and th e priors in ( 4 ) and ( 5 ), re duces t o i terativ ely maximi zing the 8 function Q = − 1 2 ( n log ρ 2 + (1 + η ) n X i =1 σ 2 i + 1 ρ 2 ( Y − X β ) ⊤ Σ − 1 ( Y − X β ) + η n X i =1 1 σ 2 i + 2 n log K 1 ( η ) ) − 1 2 ( p X j =1 E ∗ γ [log α j ] + p log ρ 2 + 1 ρ 2 τ 2 β ⊤ Ω β + ( p + λ τ + 2) log τ 2 + λ τ τ 2 ) − ( a ρ + 1) ρ 2 + b ρ ρ 2 + ( p + d θ − 1) log (1 − θ ) + ( c θ − 1) log θ + log θ 1 − θ p X j =1 E ∗ γ [ γ j ] , (6) where η = 1 , α j = κ 0 (1 − γ j ) + κ 1 γ j for j = 1 , 2 , . . . , p , and Ω = diag(( E ∗ γ [1 /α j ])) . Th e com- plicated nature of the obj ectiv e fun ction Q prohibits clo s ed-form maximization t o be performed jointly over all the parameters u s ing the traditional EM algorithm ( Dempster et al. , 1977 ). As an alternativ e, we adopt t he ECM algorithm ( Meng and Rubin , 1993 ), which proceeds by maximizing Q sequenti ally over each parameter , conditional on t he current estimates of t he remainin g param- eters. 1. The E-Step: In the expectation (E) step, w e compute the conditional e xpectations E ∗ γ [ γ j ] , E ∗ γ [log α j ] and E ∗ γ [1 /α j ] , for e very j = 1 , 2 , . . . , p . W e note that t h e condit ional poste- rior di s tribution of γ , giv en the response variables Y , η = 1 and the curr ent estimates ( ˆ β , ˆ ρ 2 , ˆ θ , ˆ τ 2 , ˆ σ ) , depends on ( Y , η = 1 , σ ) only through ( ˆ β , ˆ ρ 2 , ˆ θ , ˆ τ 2 ) , and the conditional expectation E ∗ γ [ γ j ] si mplifies to E ∗ γ [ γ j ] = P ( γ j = 1 | Y , η = 1 , ˆ β , ˆ ρ 2 , ˆ θ , ˆ τ 2 , ˆ σ ) = p ( ˆ β j | γ j = 1 , ˆ ρ 2 , ˆ τ 2 ) P ( γ j = 1 | ˆ θ ) P 1 t =0 p ( ˆ β j | γ j = t, ˆ ρ 2 , ˆ τ 2 ) P ( γ j = t | ˆ θ ) = g j , (7) for e very j = 1 , 2 , . . . , p . The prior specification in ( 5 ) ensures comput at i onal tractabilit y of the quanti ties in volv ed in ( 7 ). Furthermo re, for ev ery j = 1 , 2 , . . . , p , the remaining conditional expectations can be ev aluated using ( 7 ) as weighted avera ges o f functions of the 9 spike and the s l ab strength hyperparameters κ 0 and κ 1 in the following manner: E ∗ γ [log α j ] = E ∗ γ [log { κ 0 (1 − γ j ) + κ 1 γ j } ] = (1 − g j ) log κ 0 + g j log κ 1 , E ∗ γ [1 /α j ] = E ∗ γ 1 κ 0 (1 − γ j ) + κ 1 γ j = 1 − g j κ 0 + g j κ 1 . (8) 2. The CM-Ste p: The condi tional m axi mization (CM) step of the ECM algorithm m axi mizes the objective functi on Q for each parameter , given the current estimates of the remaini n g parameters, and yields t he foll owing closed-form est imates at eve ry it eration: ˆ β = X ⊤ ˆ Σ − 1 X + 1 ˆ τ 2 Ω − 1 X ⊤ ˆ Σ − 1 Y , ˆ ρ 2 = 2 b ρ + ( Y − X ˆ β ) ⊤ Σ − 1 ( Y − X ˆ β ) + ˆ β ⊤ Ω ˆ β / ˆ τ 2 n + p + 2 a ρ + 2 , ˆ τ 2 = λ τ + ˆ β ⊤ Ω ˆ β / ˆ ρ 2 p + λ τ + 2 , ˆ θ = c θ + P p j =1 g j − 1 c θ + d θ + p − 2 , ˆ σ 2 i = 1 2 η − 1 + ( 1 + 4 η η + ( y i − x ⊤ i ˆ β ) 2 ˆ ρ 2 !) 1 / 2 , i = 1 , 2 , . . . , n, (9) where η = 1 , ˆ Σ = diag ( ˆ σ 2 1 , ˆ σ 2 2 , . . . , ˆ σ 2 n ) , and for e very i = 1 , 2 , . . . , n , x i is the i t h row of the d esi gn matrix X and y i is the i th com ponent of the response vector Y . Under continuous s pike-and-slab priors, the modal estimates of the regression coef ficients can- not be exactly zero and t herefore d o not allow e xplicit exclusion of a covariate. Moreover , the median probabi lity model ( Barbieri and Berger , 2004 ) and other Bayes factor based approaches ( Ghosh and Ghattas , 2015 ; De and Ghosh , 2026 ), w h ich rely on thresholding the posterior p rob a- bility P ( γ j = 1 | Y ) to select cov ariates, are not appl icable here because the post erior distribution over models is unava ilable. Ro ˇ ckov ´ a and George ( 2014 ) estim ated the most probabl e submodel as the high est probability model condi tional on t he m odal estim ates of th e parameters. Following this strategy , we choose 10 our best submodel as the model with the h ighest conditional probabi lity , given η = 1 and the modal estimates ( ˆ β , ˆ ρ 2 , ˆ θ , ˆ τ 2 , ˆ σ ) from t he ECM algorithm. That is, the estimated model i s ˆ γ = arg max γ P ( γ | η = 1 , ˆ β , ˆ ρ 2 , ˆ θ , ˆ τ 2 , ˆ σ ) , (10) which can be obtained by threshol d ing the conditional probabil ity of each component as ˆ γ j = 1 ⇐ ⇒ P ( γ j = 1 | η = 1 , ˆ β , ˆ ρ 2 , ˆ θ , ˆ τ 2 , ˆ σ ) ≥ 0 . 5 , (11) for e very j = 1 , 2 , . . . , p . In other words, the j t h covariate is d rop ped from our model if the con- ditional probabili ty of the correspondi ng “slab” component, given η = 1 and th e modal estimates ( ˆ β , ˆ ρ 2 , ˆ θ, ˆ τ 2 , ˆ σ ) , falls below a threshold of 0.5. Th i s gi ves rise to a reduced model space consist ing of 2 p ∗ models, where p ∗ ≤ p , based on the design matrix X ∗ with p ∗ columns from the original design matrix X correspon d ing to the included covariates. When the true data generating model contains sub stantially fewer signals than p , the resulting reduced m odel space facilitates bett er mixing and greater stability of the Gibb s sampler comp ared with the o n e operating in the origi nal model space. Accordingly , the reduced model space is util i zed in the Gibbs sampling step (Step 2) of our propos ed algorithm. Step 2: The G ibbs Sampling Ste p In t h e second step, we s tudy the tail beha vi or and perform uncertainty quantification through an MCMC algorithm on the reduced model space deduced from the ECM-based modal esti mates. The likelihood and priors in ( 3 ) corresponding to the stochastic search HEM ( De and Gh o sh , 2026 ) en- able clos ed-form full cond itional distri butions, which can be further ut ilized to deduce an ef ficient Gibbs sampler , along with an MH-step to address model selectio n . Alt h ough a preliminary lev el of model reduction is performed in the ECM st ep, we incorporate this additional step of model uncertainty on t he reduced model space to allo w droppin g of any potent ial false positives/signals. Moreover , the flexibility in tail i s induced t hrough a di screte uniform p ri o r on the shape parameter 11 η , as in dicated i n ( 3 ). 2.3 Choice of Hyperp arameters The propo s ed GECM-HEM algorithm in volves sev eral hyperparameters, whose choices are crucial to the performance of t h e algorithm. For t he ECM step, a vi tal question arises regarding the choice of κ 0 and κ 1 in the prior on the regression coeffic ients β in ( 5 ). Conditional on τ 2 , κ 0 and κ 1 respectiv ely controls the v ariances of the spi ke and the slab comp o nents. Similarly , marginalization over τ 2 leads to a mixture of multivariate Student- t prio rs on β with λ τ degrees of freedom, and scale parameters κ 0 ρ 2 and κ 1 ρ 2 corresponding to t h e spike and th e slab components respecti vely . In this case, κ 0 and κ 1 regulates the scales of the respective Student- t distributions in the spike and the s lab components. Besides, the prior specification in ( 3 ) yields a multivariate Stud ent- t prior with λ τ degrees of freedom and scale ρ 2 on the non-zero re gression coef ficients β γ in the Gibbs sampling step, when marginalized over τ 2 . Since the covariates and response v ariables are standardized, we choose κ 1 = 1 , which also preserves consistency between the priors on the slab comp o nents in the ECM and the Gi b bs sampl ing steps. Furthermore, we take λ τ = 1 for reasonably thick tailed priors on the slab components. The s p ike hyperparameter κ 0 af fects the algorithm eve n more crit ically than the slab . Rather than s u ggesting a s ingle value, it is more app eali n g to tune κ 0 from a grid of v alues, based on the data. Following the grid recommended by Ro ˇ ckov ´ a and George ( 2014 ), we allow κ 0 to be selected from { 0 . 01 , 0 . 02 , . . . , 0 . 5 1 } through 10-fold cross-validation. The optimal κ 0 is chosen to be the value whi ch minim izes the median of the fold-wise medians of absol ute diffe rences between the actual and the predicted values o f the response v ariables. It mi ght be not ed that the cross-validation can be carried out i n parallel to enhance th e computation al ef ficiency of the al g orithm. Since the response variables are st and ardi zed to hav e a un i t variance, an InvGamma( a ρ = 2 . 1 , b ρ = 0 . 1) prior is pl aced on the hyperbolic error scale parameter ρ 2 , such that the prior mass is mo stly concentrated bel ow one. For θ , the probability of t he slab component, we consider a Beta( r θ = 1 , s θ = 1) prior , which has also been widel y used in th e literature. 12 The role of the shape parameter η of the hyperbolic errors requires some di s cussion as well. As indicated in Section 2.2 , the value of η is considered to be fixed at the ECM step, while associatin g a prior t o it i n th e Gibbs sampler . That is , η s erves as a fix ed h y perparameter for the ECM step and as a vital parameter with a prior in the Gib bs sam pling step. Ideally , putting a pri o r on η through o ut the entire algorithm would ha ve been more appropriate for capturing tail uncertainty . Howe ver , owing to th e inabi l ity of suitable tail esti mation by the ECM, as ob served in prelim inary analyses, the value of η is fix ed at η = 1 . It might be not ed that the normal and the Laplace distributions can be obtained as limiti ng cases of the hyperbol ic distri bution for l ar ge and small values of η respectiv ely . A value of η = 1 produces tails heavier than those of a normal distri bution but much lighter than thos e of a Laplace distribution. When the degree of tail heaviness is unknown and cannot be accurately estimated, thi s choice represents a practical compromise and may reduce sensitivity to model misspecification. For the Gibbs sampl ing step, we pu t a discrete uniform prior o n η with prio r supp ort G η = { 0 . 05 , 0 . 1 , 0 . 2 , 0 . 3 , . . . , 0 . 9 , 1 , 2 , 5 , 10 , 20 , 50 } , as sugg est ed by De and Ghosh ( 2026 ), to stu dy the u n k nown t ail beha vior more extensively . 3 Simulatio n Study The ef ficacy of the proposed GECM-HEM technique is demons trated through simulation studies in comparison with se veral competing methods. W e g enerate n = 400 o b serv ations from the regression model y i = β 0 + β 1 x i 1 + β 2 x i 2 + · · · + β p x ip + ǫ i , i = 1 , 2 , . . . , n, (12) under t h e following scenarios: (I) W e take p = 100 0 . The cov ariates x i = ( x i 1 , x i 2 , . . . , x ip ) ⊤ , i = 1 , 2 , . . . , n , are indepen- dently drawn from a multivariate normal di stribution N p ( 0 , Σ X ) with a first order aut o re- gressiv e correlation structure as Σ X = ( 0 . 6 | k − l | ) 1 ≤ k , l ≤ p . The true regression coefficients are 13 β 0 = 2 , β 1 = · · · = β 100 = 1 . 5 , β 101 = · · · = β p = 0 . The errors are assumed t o follow a Hyp erb olic( η = 0 . 5 , ρ 2 = 2) dis tribution. (II) T h is scenario is similar to Scenario (I), exce pt that the errors are normall y di s tributed wit h zero mean and variance 2. (III) Here, we take p = 150 0 , and the co variates x ij s ( i = 1 , 2 , . . . , n ; j = 1 , 2 , . . . , p ) are independently drawn from a s tandard n o rmal dist ri bution. The true regression coefficients are β 0 = 2 , β 1 = · · · = β 50 = 1 . 5 , β 51 = · · · = β p = 0 , and the errors ha ve a Student- t distribution with 2.05 degrees of freedom. (IV) W ith p = 1500 and a design matrix sim ilar to Scenario (III), we consid er the true regression coef ficients as β 0 = 2 , β 1 = · · · = β 50 = 0 . 9 , β 51 = · · · = β p = 0 , and generate errors from a Hyp erb o lic ( η = 0 . 5 , ρ 2 = 2) dis tribution. Each scenar io is r eplicated 100 tim es to capture va riabilit y in the resulting performance mea- sures. Th e studied methods are assessed in terms of estimation and out-of-sample predictiv e performances. For s tudying estimation p erformance, we consider the root-m ean-squared errors (RMSEs) of the regre ssion coefficients, defined as h ( p + 1) − 1 P p j =0 ( β j − ˆ β j ) 2 i 1 / 2 , wh ere ˆ β j de- notes the esti mate of the j th regression coefficient β j ( j = 0 , 1 , 2 , . . . , p ). W e also compare t h e RMSEs of the expected values of the response v ariables, E ( y i | β ) = P p j =0 β j x ij , obt ained as h n − 1 P n i =1 { P p j =0 x ij ( β j − ˆ β j ) } 2 i 1 / 2 , where x i 0 = 1 , for e very i = 1 , 2 , . . . , n . Moreo ver , the true positive rates (TPRs) and th e true negative rates (TNRs) of the methods are examined to inspect the variable selection performance. The TPR denotes th e proportion of signals correctly i dentified by a method among all the true signals, while the TNR is t h e proportion of correctly dropped noise var iables among all the true noise va riables. Theref ore, bot h TPR and TNR should be equal to 1 in an id eal scenario of perfect detection of signals and noise variables. Based o n 100 0 out-of-sample observations for each scenario, the predictive performance is ev aluated using median of absolut e diffe rences (MeADs) between the actual and the predicted values of the response variables, as well as emp i rical coverage probabilities and median widths of 90% prediction intervals. 14 3.1 Comparison With th e Stochastic Search HEM At t he outset, we demonstrate the adv antage of GE CM-HEM ov er th e usual stochastic search HEM (G-HEM) ( De and Ghosh , 2026 ) in Section 2.1 for large model sp aces, usin g t h e simulati on setting in Scenario (I) wit h hyperbolic errors. Our goal is t o highlight the comp u tational benefits of posterior exploration in a reduced model space using GECM-HEM, com pared to e xploration by G-HEM in the ent ire mo del space containing al l the 2 p models. For a thorough in vestigati on, G-HEM is ex ecuted with two diff erent model in i tialization schem es for th e MCMC algorithm. Specifically , G-HEM-Null s t arts the p o sterior exploration from a null model with no cov ariates by setting γ = 0 and β = 0 , whereas G-HEM-Full be gins the MCMC at the full model contai n ing all cov ariates, with γ j = 1 ( j = 1 , 2 , . . . , p ) and β set to the correspond- ing ridge estimate. For the proposed GECM-HEM procedure, model selection is first performed using the ECM step (with cross-validation impl em ented on four CP U cores), after wh i ch the Gibbs sampling is impl em ented und er the reduced m odel for 11000 iterations, di scarding the first 1000 iterations as burnin. In a sim ilar amount of clock tim e, G-HEM-Full runs for 100 0 iterations (after a burnin of 100), while G-HEM -Null runs for 400 0 iterations (after a burnin of 1000). Because of the robustness of median ov er m ean, t he point est i mates ( ˆ β 1 , ˆ β 2 , . . . , ˆ β p ) ⊤ of the regression coef ficients ( β 1 , β 2 , . . . , β p ) ⊤ are taken as the comp o nent-wise medians of the post erior samples. Moreover , for e very MCMC iteration, the p o sterior sam ples of ( β 1 , β 2 , . . . , β p ) ⊤ lead to a draw o f the intercept parameter β 0 as β 0 = ¯ Y − p X j =1 β j ¯ x j , (13) where ¯ Y is the mean o f the non-centered and unscaled response variable, and ¯ x j is the mean of the n on-centered and unscaled j th covariate ( j = 1 , 2 , . . . , p ). The posterior median ˆ β 0 of t h ese MCMC samples provides a po i nt estimate of β 0 . Sim ilarly , MCMC sampl es for t he expected values o f the response variables, E ( y i | β ) s ( i = 1 , 2 , . . . , n ), are obtained at e very iteration using 15 the p o sterior samples of ( β 0 , β 1 , β 2 , . . . , β p ) ⊤ as E ( y i | β ) = β 0 + p X j =0 β j x ij , (14) and their p o s terior medians serve as th e correspondin g p o int estimates. Using these estim ates, the RMSEs of t h e regression coefficients as well as those of the expected response v ariables are computed and compared through boxplots in Figure 1 . Both the plot s in Figure 1 d epict s ignifi- cantly lo wer RMSEs for the proposed GE CM-HEM method than for the G-HEM versions, with G-HEM-Full as the closest com petitor . 0.1 0.2 0.3 0.4 GECM−HEM G−HEM−Null G−HEM−Full RMSE of Regression Coefficients 1 2 3 4 5 GECM−HEM G−HEM−Null G−HEM−Full RMSE of Expected Response E ( y | β ) Figure 1: RMSEs of all regression coef ficients and the e xpected values of the response variables for t h e proposed G E CM-HEM method and d i f ferent versions of G-HEM under true hyperbolic errors in Scenario (I). The boxplo t s are b ased on 100 replicates. T o assess the effica cy of GECM-HEM for variable selection, we examine the inclusion i n dica- tors γ j s ( j = 1 , 2 , . . . , p ), whi ch are estim ated using the median p rob ability mo d el ( Barbieri and Berger , 2004 ). More precisely , the j t h inclusion in dicator is esti mated as ˆ γ j = 1 , if the corresponding marginal posterior i nclusion probability P ( γ j = 1 | Y ) i s at l east 0.5. Th e esti mated inclusion in- dicators are used t o calculate t he TPRs and TNRs against the t rue inclusion indicators, and t h e results are summarized i n T able 1 . G-HEM-Null yields low values of both TPR and TNR throug h incorrect id ent ification of m o st of t h e sign als and noi se variables. Like wise, G-HEM -Full remains entrapped at the full m o del throughout t he MCMC chain and fails to drop any cov ariate, as e vi d ent from i t s TPR of 1 and TNR of 0. In contrast, with both TPR and TNR v alues close to 1, our method 16 GECM-HEM shin es in inclusion and exclusion of appropriate variables. T able 1: TPRs and TNRs of all cova riates for t he proposed GECM-HEM method and different versions of G-HEM under true h yperbolic errors in Scenario (I). The values are av eraged over 100 replications. GECM-HEM G-HEM-Null G-HEM-Full TPR 0.990 0.682 1.000 TNR 0.999 0.815 0.000 W e now proceed to com pare the predictive performance of the three methods with a newly generated test dataset of 1000 observations and the estim ates from the traini ng samples. Us ing the MCMC sam p les based on the trainin g set, we o btain the point predi cti ons for the test sam p les and compute their MeADs from the actual values of t he response va riables. For uncertainty quantifi- cation, we use the estim ated quantiles of the posterio r predi ctive distrib utions to cons t ruct 90% prediction intervals, and measure their empirical cov erage probabilities and median widths. All the results, based on 100 replicati o ns, pertaining to predictiv e performance are presented in Figure 2 . GECM-HEM performs satis factorily in terms of point prediction. As far as predicti o n intervals are concerned, GECM-HEM attains nominal cove rage, while the two ve rsions of G-HEM sho w substantial overcov erage with significantly larger medi an widths. 2 4 6 8 GECM−HEM G−HEM−Null G−HEM−Full Predictive MeAD 0.85 0.90 0.95 1.00 GECM−HEM G−HEM−Null G−HEM−Full Empirical Coverage Probability 10 20 30 40 50 GECM−HEM G−HEM−Null G−HEM−Full Median Width Figure 2: Predictive performance of the proposed GECM-HEM method and di f ferent versions of G-HEM under true hyperbolic errors in Scenar io (I). In the middle plot, t h e dashed li ne marks a cove rage of 90%. The boxplo ts are based on 100 repli cates. T o sum marize, GECM-HEM rem arkabl y outperforms G-HEM in coefficient estimation, v ari- able selection and predictio n, i n th e same running tim e. Th us, for lar ge model spaces, performing 17 MCMC on a posterior mo d e gui d ed reduced model s pace appear s to be a more compu t ationally ef ficient alternativ e to a traditional implementation of M CMC-based posterior exploration ov er the entire m odel space. 3.2 Comparison With MAP Estimators In this sec tion our goal is to comp are t he performance of G E CM-HEM with two st ate-of-the- art MAP e stim ation techniques, namely , EMVS ( Ro ˇ ckov ´ a and G eorge , 2014 ) and SSQLASSO ( Liu et al. , 2024 ). Under dif ferent kin ds o f spike-and-slab priors, bot h t he contenders perform de- terministic model selecti o n th rough posterior maximization us i ng t he EM algorithm. Specifically , the EMVS algorithm assumes a norm al likelihood, wh ile SSQLASSO incorporates a hea vier-tailed asymmetric Laplace likelihood. The competing m o dels thus enable a two-f old comparison. First, the study helps us scrutinize t h e potential adv antages and disadv antages of our prop osed hybrid approach over MAP estimators . Second, we can compare the flexibility o f different likelihoo d s in capturing tail-heaviness. For t he present analys is, SSQLASSO and EMVS are implemented using t he e mBayes ( Liu and W u , 2024 ) and EMVS ( Ro ˇ ckov ´ a and Moran , 2021 ) packages in R respectiv ely . At first, we e valuate t h e methods in t erms of estimation of the regression coefficients and the expected values of the response variables. For GECM-HEM , these are est imated us i ng the respectiv e post erior medians, as stated in Section 3.1 . O n t he other hand, the M AP techni ques estimate the same quantities via posterio r maxi m ization. From the boxpl ots of t he RMSEs of the regression coef ficients in Figure 3 , GECM-HEM typically shows the lo west RMSEs among the t hree method s for the normal and the Stu d ent - t error models, while exhibitin g com petitive performance with SSQLASSO for true hyperbolic errors. EMVS consistently produces the large st RMSEs across all the s cenarios, with hyperbolic errors depicting th e most stark dif ference wi th t he other t wo. A similar observation can be m ade for the expected responses E ( y | β ) fr om the boxpl ots of th ei r RMSEs i n Figure 3 . W e now refer to the TPRs and TNRs o f the m ethods in T able 2 to analyze their variable selec- 18 0.05 0.10 0.15 0.20 0.25 0.30 GECM−HEM SSQLASSO EMVS RMSE of Regression Coefficients 1 2 3 4 5 GECM−HEM SSQLASSO EMVS RMSE of Expected Response E ( y | β ) Scenario I (Hyperbolic) 0.05 0.10 0.15 0.20 GECM−HEM SSQLASSO EMVS RMSE of Regression Coefficients 1 2 3 GECM−HEM SSQLASSO EMVS RMSE of Expected Response E ( y | β ) Scenario II (Normal) 0.0 0.1 0.2 GECM−HEM SSQLASSO EMVS RMSE of Regression Coefficients 0 3 6 9 GECM−HEM SSQLASSO EMVS RMSE of Expected Response E ( y | β ) Scenario III (Student−t) 0.04 0.08 0.12 0.16 GECM−HEM SSQLASSO EMVS RMSE of Regression Coefficients 2 4 6 GECM−HEM SSQLASSO EMVS RMSE of Expected Response E ( y | β ) Scenario IV (Hyperbolic, Weak) Figure 3: RMSEs of all regression coef ficients and the e xpected values of the response variables for the p roposed GECM-HEM method and the other studi ed MAP-based m ethods under different scenarios. The boxplot s are based on 100 replications. 19 tion performance. Sim ilar to Section 3.1 , variable selection for GECM-HEM is carried out u s ing the m edian p rob ability m odel ( Barbieri and Berger , 2004 ). On th e other hand, for the MAP-based methods EMVS and SSQLASSO, we use the models returned by their respective R packages. Un- der Scenarios (I)-(III), the TPR s and TNRs for GECM-HEM are close to 1, i n dicating the effe c- tiv eness of our proposed method in i n cluding signals and excluding noise cov ariates. For Scenario (IV), correspondi n g t o signals with smaller magni tudes and acc ordingl y weaker informati on, we observe a drop in b oth the metrics for GECM-HEM, with the reducti o n bein g less pronounced for the TNR. SSQLASS O p ro d u ces similar TPRs b ut lower TNRs than GECM-HEM across all the scenarios, whil e EMVS sho ws the re verse pattern wi th similar TNRs and lo wer TPRs. In other words, SSQLASSO and EMVS fa vor lar ger and smaller models respectively , while our method tends to make a trade-off , with a sli ght preference towa rds s m aller models. T able 2: TPRs (TNRs) of all regression coef ficients for t h e proposed GECM-HEM method and the other studied MAP-based metho ds un der dif ferent s cenarios. The v alues are a veraged o ver 1 00 replications. GECM-HEM SSQLASSO EMVS Scenario (I) (Hyperbolic) 0.990 (0.999 ) 1.000 (0.956 ) 0.895 (1.000 ) Scenario (II) (Norm al) 1.000 (1.000 ) 1.000 (0.707 ) 0.993 (1.000 ) Scenario (III) (Student- t ) 0.999 (0.998) 0.993 (0.947) 0.893 (0.998) Scenario (IV) (Hyperbolic, W eak) 0.916 (0.973) 0.91 2 (0.944) 0.031 (1.000) Next, we look at t he prediction result s in Figure 4 . The predicti ve M eAD beha ves s i milarly to the RMSEs of the regression coef ficients and the mean response in Figure 3 . That is, in terms of point prediction, GECM-HEM sho ws competitiv e performance with SSQLASSO, with slight improvement for t h e normal and the Student- t generating models. EMVS is consi s tently outper- formed b y yi el d ing larger MeAD values than th e other two methods. W e also study th e predictio n intervals for the m ethods based on the out-of-samp le observations. For GECM-HEM, the predic- tions are ca rried out using the post erior predictive distribution. F or SSQLASSO and EMVS, the predictiv e errors are generated from their respective assumed error distributions, that is, Laplace for SSQLASSO and normal for EMVS, conditional on the obtained posterior m odes. As far as predic- tion int erv als are concerned, the result s in Figure 4 exhibit two distin ct patt erns : one for Scenario 20 (IV) and another for Scenarios (I)-(III). In Scenario (IV), wit h weak er signals than in the remain- ing scenarios, all the meth ods display undercovera ge. While the undercoverage is most s e vere for EMVS, the coverage for SSQLASSO is more variable t han GECM-HEM. The correspond- ing widths of the prediction int erv als beha ve si milarly to the cove rage, with th e shortest interva ls for EM VS and more varying widths for SSQLASSO. For Scenarios (I) and (II) corresponding to hyperbol ic and normal errors, SSQLASSO has a general tendency of sign ificant undercover - age with sho rt er widths comp ared to GECM-HEM, whi l e EMVS produces wider intervals than GECM-HEM with not abl e overcov erage. In these scenarios, G E CM-HEM attains no minal cover - age. Under Student- t errors in Scenario (II I), the t hree methods exhibit si m ilar widths and maintain a coverage close to the nominal lev el. In a nu t shell, GECM-HEM exhibits comp et i tiv e performance in coef ficient estimation and point prediction, and superior performance in variable selecti o n and uncertainty quantification through predicti o n in terva ls. Moreover , the consistency of t he results for GECM-HEM across t h e scenarios indicates its robustness to diffe rent error di s tributions. Howe ver , the comparati vely mod- est performance of GECM-HEM in Scenario (IV), corresponding to lo w signal s trength, suggest s that sim ultaneous tail es t imation and variable selection may require stronger sig n als. 3.3 Computation T ime W e conclude the simulation study by highlighting t he computational adv antage of o u r proposed method. Both the competing methods EMVS and SS QLASSO are imp lemented in C thro u g h th ei r respectiv e softwa re packages, whereas our method is e xecuted in R. Therefore, the computation times may n o t be stri ct l y comparable, si nce lower level programmin g languages such as C are generally faster than higher l e vel languages such as R. Despite this fact, we in vestigate th e potential gain of using parallel computation to perform cross-validation for choosi ng the optimal value of the spike hyperparameter in t h e ECM step of our proposed met h o d, which is a distinctive feature of our algorit hm. The computation times of t h e algorithm s are reported in T able 3 . For GECM-HEM, we run 21 2 3 4 GECM−HEM SSQLASSO EMVS Predictive MeAD 0.8 0.9 GECM−HEM SSQLASSO EMVS Empirical Coverage Probability 8 12 16 20 GECM−HEM SSQLASSO EMVS Median Width Scenario I (Hyperbolic) 1 2 3 GECM−HEM SSQLASSO EMVS Predictive MeAD 0.7 0.8 0.9 1.0 GECM−HEM SSQLASSO EMVS Empirical Coverage Probability 5 10 15 GECM−HEM SSQLASSO EMVS Median Width Scenario II (Normal) 2 4 6 GECM−HEM SSQLASSO EMVS Predictive MeAD 0.4 0.6 0.8 GECM−HEM SSQLASSO EMVS Empirical Coverage Probability 10 20 GECM−HEM SSQLASSO EMVS Median Width Scenario III (Student−t) 2 3 4 GECM−HEM SSQLASSO EMVS Predictive MeAD 0.2 0.4 0.6 0.8 GECM−HEM SSQLASSO EMVS Empirical Coverage Probability 5 10 GECM−HEM SSQLASSO EMVS Median Width Scenario IV (Hyperbolic, Weak) Figure 4: Predicti ve performance of t he propos ed GECM-HEM m eth od and the other s tudied MAP-based m ethods under differ ent scenarios. Th e boxpl ots are based on 100 replications. the m ethod using 1, 4, 6 and 10 CPU cores respectiv ely . Since any standard computer typically consists of 4 or 6 CPU cores, we cho ose these values to stud y t he t ime taken by the proposed 22 method in a traditional machine. The remaining choices, namely 1 and 10 cores, correspond to no parallelization and paralleli zati o n over a larger number of cores than in a s tandard m achine respectiv ely . W e observe from T able 3 that wh ile EMVS turns out to be the fastest among the three methods, GECM-HEM is com putationally competit iv e wi th SSQLASS O and can be made faster through parallel computation, e ven though SSQLASS O is impl emented i n C wh i le GECM-HEM is imp lemented in R. Overall, our propo s ed GECM-HEM method appears to b e a computati onally viable alternativ e for sim ultaneous tail estimati o n, v ariable selection and uncertainty quant ification, compared t o state-of-the-art MAP-based t echniques. T able 3: Computation time (in mi n utes) for the proposed GECM-HEM method u s ing different number of CPU cores, and th e other MAP-based methods, und er a single repli cation of Scenario I with errors generated from hy perbolic dist ri bution. GECM-HEM SSQLASSO EMVS 1 core 4 cores 6 cores 10 cores 23.50 11.65 10.23 6.23 21.87 1.60 4 Real Data Analysi s W e now proceed to demons t rate the performance of GE CM-HEM using three real datasets. Every dataset is divided into traini ng and test sets with a 90%-10% split ratio, and the process is repeated 100 tim es to reduce sens i tivity of th e results to a s pecific choice of split. Our propos ed method i s compared with EMVS and SS QLASSO in terms of predictive accurac y t hrough median absolute deviations of the predicted test sample response v alues, empirical co verage prob abilities and m e- dian widths of 90% prediction int erv als. The results on predictive accurac y are depi ct ed in Figure 5 . Addit i onally , we inspect model parsimony across the methods for each dataset. It i s worthwhile to ment ion that for ou r proposed GECM-HEM method, th e variable selection is carried out us- ing the median probability model ( Barbieri and Berge r , 2004 ), simil ar to th e simulation st udy in Section 3 . 23 4.1 Boston Housing Dataset The Bost on Housi ng dataset from th e MASS package in R, which has been extensive ly used as a benchm ark example for regression m odeling with hea vy-tailed errors, is used as an illust rativ e example in this paper . It consists of 506 obs ervations on m edian house value, regressed on 13 neighborhood characteristics as covar iates. T o achieve an approx i mate sym metry in the distribution of t h e residuals , the logarith m of the median house v alue is considered as the respons e va riable. Follo wing Ghosh and Reiter ( 2013 ); De and Gho s h ( 2024 , 2026 ), we add 10 0 0 noise var iables, independently generated from a standard normal distribution, resulting in p = 1013 , to make the problem more interestin g for v ariable selection. This enables us to compare ho w effecti vely the competing methods can drop the n o i se variables from the model, whi ch can in t u rn aff ect the predictiv e performance. From t he box plots i n the topmost panel in Figure 5 , we observe that while SSQLASSO typ- ically yields the lo west predicti ve MeAD v alues, GECM-HEM i s a close competitor . On the other hand, in terms of empirical covera ges and median widths of 90% prediction i ntervals, both GECM-HEM and SSQLASSO usually attain nominal coverage, with s l ightly wider interva ls for GECM-HEM. EMVS i s outperformed by the other two m ethods in all aspects, with larger pre- dictive MeAD, sub stantial undercoverage, and overly narrow prediction int erv als. In other words, GECM-HEM exhibits sati s factory performance in uncertainty quantification through prediction intervals and a com petitive performance in point predictio n. W e now in vestigate the model size chosen by each method. The desi g n matrix is known to contain 1000 noise var iables, along with the 13 origin al predictors. W it h this information up front, it will b e useful to st udy these two types of cov ariates separately . In particular , for each type, we look at the fi ve-number summary s t atistics of the number of cov ariates included in the model. The summaries are taken ove r the 100 replicates and are reported in T able 4 . It is e v i dent from T able 4 t h at for most o f the replicates, all the three metho ds perform si milarly i n choosing he original cov ariates and dropp i ng the n o i se v ariables. Howe ver , we also observ e that SSQLASSO includes nearly all of the original co variates and retains a large number of noise predictors i n s o m e 24 replicates, i ndicating its tendency to choose bigger models . T able 4: Fi ve-number su mmary statisti cs of the num b er of co variates (original and noise) included in the model by the propos ed GECM-HEM method and the other studied MAP-based m et h ods for the Bost on Housin g dat aset . The st atistics are com puted based on 1 0 0 repli cati ons. Cov ariates Method M inimum 1st Quartile Median 3rd Quartile Maximum Original (13) GECM-HEM 3 4 4 5 5 SSQLASSO 3 4 4 5 12 EMVS 3 3 3 3 5 Noise (1000) GECM-HEM 0 0 0 1 3 SSQLASSO 0 0 0 0 819 EMVS 0 0 0 0 2 4.2 Univ ersity of V erm ont F aculty Salaries Dataset W e analyze data on fa culty salaries for the year 2020 at t he Uni versity o f V ermont obtained from Kaggle, using the log-transformed salary as the response variable, with job title, department and college serving as predictors. Furthermore, since all the 3 co variates are categorical with mul tiple lev els, we create dummy variables for each lev el, and also incorpo rate th e interactions between colleges and job t itles. The result ing dataset consists of 1756 observ ations, wi t h 936 cov ariates and t h e logarithm of salary as the respo n se variable. It is to be noted that 605 of the i n teraction terms are not observed in th e data. Accordingly , these 605 colum ns in the desig n matrix contain only zero entries across all ro ws. Instead of removing these columns manually , we retain them to check if the studied methods can ef fectiv ely discard them. W e observe that while GECM-HEM and EMVS can successfully exclude t hese colu mns, the implementatio n of SSQLASSO via the emBaye s package results in a runtime error . Therefore, we execute SSQLASSO on the smaller design matrix contain i ng the remaining 331 columns , after manually drop ping the 605 colum ns with all zero entries. The middle panel of Figure 5 shows the predictive performance of the methods. Similar to the Boston Housing dataset, the predicti ve M eADs for GECM-HEM and SSQLASSO are close to on e another , with slightly lo wer values for SSQLASSO, while those for EMVS are subs tantially l ar ger . 25 All the methods exhibit u ndercove rage for 90% prediction intervals. The proposed GECM-HEM method attain s a cov erage nearly s imilar to that of EMVS, but wi t h a smaller w i dth. On the other hand, SSQLASSO produces n arrower intervals and notably lower cove rage than the ot her methods. T o compare model si zes, we e xamine the number of predictors selected by each method among the 331 cov ariates used in fitting SSQLASSO. A quick glance at t he fi ve-number summaries in T able 5 rev eals a sharp dist inction i n the model sparsit y across the methods. Both EMVS and GECM-HEM fa vor sm aller models, with E M VS selecting the least number o f covar iates. In con- trast, SSQLASSO exhibits l i mited shrinkage by d ropping only a few co variates in most replicates. T able 5: Fiv e-number summ ary statisti cs of the number of covariates (among the 331 cov ariates used to run SSQLASSO) in cluded in the model by the proposed GECM-HEM method and the other stud i ed MAP-based methods for the Faculty Salaries dataset. The statist ics are computed based on 100 replications. Method Mini mum 1st Quartile Median 3rd Quartil e Maximum GECM-HEM 22 27 28 29 33 SSQLASSO 87 319 323 325 328 EMVS 5 5 5 5 5 4.3 Ames Housing Dataset As an alternative to the Bost on Ho using dataset, De Cock ( 2011 ) proposed the Am es Housing dataset (a vailable in the modeldata package in R) as a touchstone for h ea vy-tailed regression modeling problems. The origi nal dataset contains 2930 observations on 74 variables, incl u d ing house price and 73 co variates potentially af fecting the prices. T o capture potential nonl inear ef- fects, we create bins for some of the numeric cov ariates, thereby con verting them to categorical var iables. In particular , we consider 12 bins each for the year of building a house and the y ear of its remod eli ng. Thereafter , we include i n teraction terms between the neig hborhood, and year of building, year o f remodeling and year of sale (considered to be categorical with sale years as lev els). Using dummy variables for each l e vel of the categorical var iables, the resulti ng design 26 matrix consi sts of 2930 ro ws and 962 columns, and the log-t rans form ed selling price is taken as the respo n se variable. In this example, SSQLASSO can be successfully e xecuted for on l y 11 ou t of the 100 replicates using the emB ayes package in R. Therefore , we report o ur findings for the three method s based on these 11 replicates in the main paper , and sp ecify the results for GE CM-HEM and EM VS based on all t h e 100 replicates in Appendi x B . Using the 11 replicates, the boxpl ots in Figure 5 indicate slightly lower MeAD for SSQLASSO than GECM-HEM. The prediction intervals for GECM-HEM are a bit wider and sho w a slight overco verage, compared to a slight undercovera ge by SSQLASS O. Both the m ethods significantly outp erform EMVS, which yields large predicti ve MeAD, along with wide predicti o n intervals and gross undercoverage. The variable selection results (T able 6 ) based on the 11 replicates repeats a similar story like the Faculty Salaries dataset . EMVS chooses t he sm allest mod el, followed by GECM-HEM, while SSQLASSO retains a susbtanti al number of predictors. T able 6: Fi ve-number summ ary statistics of th e num ber of cova riates i ncluded in the model by the proposed GECM-HEM metho d and the ot h er studied MAP-based method s for t he Ames Housing dataset. The statistics are com puted based on 11 replications for which SSQLAS SO can be suc- cessfully executed. Method Mini mum 1st Quartile Median 3rd Quartil e Maximum GECM-HEM 36 38 39 41 44 SSQLASSO 827 922 929 947 955 EMVS 7 10 14 15 15 5 Conclusio n In this paper , we ha ve propos ed a two-step approach, namely , GECM-HEM, by combini ng MAP estimation through the ECM alg o rithm, alon g wi th a Gib b s sampler , for performing model se- lection and pos t erior computati on within a heavy-tailed frame work. This approach leverages th e computational advantage of M AP estimatio n and inferential benefits of MCMC-based algorithms. Compared wit h t wo representative MAP esti mators and a stand-alone MCMC-based approach, 27 0.02 0.04 0.06 0.08 GECM−HEM SSQLASSO EMVS Predictive MeAD 0.7 0.8 0.9 1.0 GECM−HEM SSQLASSO EMVS Empirical Coverage Probability 0.24 0.26 0.28 0.30 GECM−HEM SSQLASSO EMVS Median Width Boston Housing 0.010 0.015 0.020 0.025 GECM−HEM SSQLASSO EMVS Predictive MeAD 0.80 0.85 0.90 GECM−HEM SSQLASSO EMVS Empirical Coverage Probability 0.10 0.12 0.14 GECM−HEM SSQLASSO EMVS Median Width Faculty Salaries 0.004 0.006 0.008 0.010 GECM−HEM SSQLASSO EMVS Predictive MeAD 0.80 0.85 0.90 0.95 GECM−HEM SSQLASSO EMVS Empirical Coverage Probability 0.025 0.030 0.035 0.040 0.045 0.050 GECM−HEM SSQLASSO EMVS Median Width Ames Housing Figure 5: Predicti ve performance of t he propos ed GECM-HEM m eth od and the other s tudied MAP-based method s for di f ferent real datasets. In the plots for empirical coverage probability , the dash ed lines mark a cov erage of 90%. The boxplots for Boston Housing and Faculty Salaries datasets are based on 100 replications. The boxplots for Ames Housing dataset are based on 11 replications for which SSQLASSO can be successfully executed. the proposed GECM-HEM method has shown competitiv e performance in coefficient estimation and point prediction, and h as demon strated su p erior performance in variable selection and uncer - tainty quantification. Althoug h for a fixed degree of tail-hea viness, M A P-based techniques like SSQLASSO can produce more precise estim ates, ou r met h od performs fa vora bly in sim ultane- 28 ously accomm odating tail flexibilit y and uncertaint y quantification. W e con cl u de this paper with some possi ble directions for future work. Unlike De and Ghosh ( 2026 ), we have focused solely on the hyperbolic distribution rather than a mixture of hyperbolic and Student- t distributions for mo d eling er rors. Fernandez and Steel ( 1999 ) pointed out the poten- tial asymptoti c unboundedness of the Student- t likelihood , and cautioned against using likelihood maximization techni q u es such as EM-like algorithms for this di stribution when the degrees of free- dom are small. A possible future direction is to extend our proposed GECM-HEM technique to the mixture of hyperbolic and Student- t errors ( De and Ghosh , 20 2 6 ), with careful specification of the prior for the tail hea viness parameter . Furthermore, studying the Bayesian bootstrap ( Rubin , 1981 ; Nie and Ro ˇ ckov ´ a , 2023a , b ) as an alternative to the Gibbs sampler for uncertainty quantification in the second step of our proposed method is another possibi l ity . Lastly , instead of using spike- and-slab priors on regression coefficients with beta-binomial priors on the model size, e xploring alternativ e priors like the horseshoe prior ( Carv alho et al. , 2009 , 2010 ; De and Ghosh , 2024 ) and the recently proposed matryoshk a do l l prior ( W omack et al. , 2025 ; Gho s h , 2025 ) represents an- other p o ssible direction for future work. References Barbieri, M. and Ber ger , J. (2004). Optimal predictiv e m odel selection. The An nals of Sta tistics , 32(3):870–897. Carv alho, C., Polson, N., and Scott, J. (2009). Handli ng sparsity via the hors esh oe. In van Dyk, D. and W elling, M., editors, Pr oceeding s o f the T welfth Interna t ional Confer ence on Artificial Intelligence and Statistics , volume 5 of Procee dings of Mac hine Learni n g Resear ch , pages 73– 80. PMLR. Carv alho, C., Polson, N., and Scott, J. (2010). The horseshoe est i mator for sparse sig nals. Biometrika , 97(2):465 – 4 80. 29 Chakraborty , S ., Khare, K., and Michail idis, G. (2025). A gener alized Bayesian app roach for high-dimensi o nal robust regression with serially correlated errors and predict o rs. arXiv pre print , arXiv:2412.05673 . Clyde, M., Ghosh, J., and Littman, M. (2011). Bayesian adapti ve s ampling for variable selection and model av eraging. Journal of Computat ional and Graphi cal S t atistics , 20(1):8 0 –101. De, S. and Ghosh, J. (20 2 4). Horseshoe prior for Bayesian lin ear regression wi th hyperbolic err ors. Statisti cs and Applicat i ons , 22(3):199–209. De, S. and Ghos h , J. (2026). Robust Bayesian model av eraging for linear regression m odels with hea vy-tailed errors. Journal of Appl ied Stati stics , 53(2):304–330. De Cock, D. (2011). Ames, Iowa: Alternative t o t he Boston Housing data as an end of semester regression project. Journal of Stat istics Education , 19 (3):1–15. Dempster , A., Laird, N., and Rubin, D. (1977). Maxi mum likelihood from incomplete data via the EM algorit h m. Journal of the Royal Statisti cal Society: Series B (Methodological) , 39(1):1 –22. Fernandez, C. and Steel, M. (1999). Multivar iate Student -t regression models: Pitfalls and infer - ence. Biometrika , 86(1):15 3 –167. Geor ge, E. and McCulloch, R. (19 93). V ariable selection via Gibbs samplin g . Journal of the American Stat istical Association , 88 (42 3):881–889. Geor ge, E. and McCulloch, R. (1997). Approaches for Bayesian variable selection. Stati stica Sinica , 7(2):339–37 3 . Ghosh, J. (2025). On the choice of model space priors and multipli city cont rol in Bayesian variable selection: an application to streamin g logist i c regre ssion . a r Xiv pr eprint arXiv:2512.22504 . Ghosh, J. and Clyde, M. (2011). Rao-B lackwellization for Bayesian variable selectio n and model a veraging in linear and binary regression: A novel data augmentation approach. Journal of the American Stat istical Association , 10 6 (495):1041–1052. 30 Ghosh, J. and Gh attas, A. (2015). Bayesian v ariable selection under collinearity . The American Statisti ci a n , 69(3):165–173. Ghosh, J. and Reiter , J. (2013). Secure Bayesian mod el a veraging for horizontally partitioned data. Statisti cs and Computing , 23(3): 3 11–322. Gneiting, T . (1997). Normal scale mixt u res and dual probability densities. Journal of Stati stical Computation and Simulati o n , 59(4):375–384. Gramacy , R. and P antaleo, E. (2010). Shrinkage regression for multivar iate inference with missing data, and an app l ication to portfolio balancing. Bayesian A nalysis , 5(2):237–2 6 2. Hans, C. (2010). Model uncertainty and variable sel ecti o n in Bayesian lasso regression. Sta t istics and Computin g , 20:221– 229. Hans, C. (2011). El ast ic net regression modeling with the orthant normal prior . J ournal of the American Stat istical Association , 10 6 (496):1383–1393. Liu, Y ., Ren, J., Ma, S., and W u, C. (2024). The spike-and-slab quantile LASS O for robust variable selection in cancer genomics st udies. St atistics in Medicine , 43(26):4928–498 3. Liu, Y . and W u, C. (2024). emBayes: Robust Bayesian V ariable Selection via Expectation- Maximization . R p ackage version 0.1.6 . Meng, X.-L. and Rubin, D. (1993). Maximum like lihoo d estimati on via the ECM algorithm: A general framework. Biometrika , 80(2):267–278 . Nie, L. and R o ˇ ckov ´ a, V . (2023a). Bayesian boot strap spike-and-slab lasso. Journal of the Am eri can Statisti cal Associat ion , 118(543):2013 –2028. Nie, L. and Ro ˇ ckov ´ a, V . (2023b). Deep boot strap for Bayesian inference. Philosophi- cal T ransactions of the Royal Society A: Mathemati cal, Physical and Engi neering Sciences , 381(2247):20220 154. 31 Park, T . and Ca sella, G. (2008 ). The Bayesian lasso . J o u rnal of the American Statistical Associa- tion , 103(482):68 1–686. Ren, J., Zh ou, F ., Li, X., Ma, S., Jiang, Y ., and W u, C. (202 3). Robust bayesian variable selection for gene–en vironment i n teractions. Biometrics , 79(2):6 8 4–694. Ro ˇ ckov ´ a, V . and George, E. (2014). EMVS: The EM approach to Bayesian va riable selection. J ournal o f the American St atistical Ass o ciation , 109(506):828–84 6 . Ro ˇ ckov ´ a, V . and George, E. (2018). The spike-and-slab lasso. Journal of t he American Statis t ical Association , 113(521):43 1–444. Ro ˇ ckov ´ a, V . and Moran, G. (2021). EMVS: Bayesian V ariable Selection using EM Algorithm . R package version 1.2 . 1 . Rubin, D. (1981). T h e Bayesian bo o tstrap. The Anna l s of Statistics , 9(1): 1 30–134. Ruth, W . (2024). A re view of Monte Carlo-based versions of the EM algorithm. arXiv pr eprint, arXiv:2401.00945 . W ei, G. and T anner , M. (1990). A M o nte Carlo implementati o n of th e EM algorithm and the poor m an’ s data augmentation alg o rithms. J o urnal of the American Statist ical Association , 85(411):699–704 . W om ack, A., T ayl o r -Rodr ´ ıguez, D., and Fuentes, C. (2025). The matryoshk a doll pri o r – principled multipli city correction in Bayesian mo d el comparison. Bayesian Analysis , pages 1–25 . Zanella, G. and Roberts, G. (2019). Scalable i mportance tempering and Bayesian variable sel ec- tion. J ournal of the Royal Statist ical Society: Series B (St atistical Methodology) , 81(3):489–517. 32 A p pendix A P r oof of Proposition 1 Pr oposition 1. Let A and a be random vari ables such tha t A | a 2 ∼ N(0 , ρ 2 a 2 ) and a 2 ∼ G IG(1 , η , η ) . Then A is distri buted as Hyp erb olic ( η , ρ 2 ) . Pr o o f. The condi tional density of A given a 2 is f A ( A | a 2 ) = 1 p 2 π ρ 2 a 2 exp − A 2 2 ρ 2 a 2 , −∞ < A < ∞ , while the density of a 2 is f a 2 ( a 2 ) = 1 2 K 1 ( η ) exp − η 2 a 2 + 1 a 2 , x > 0 . The dens i ty of A , marginalized over a 2 , is obtained as f A ( A ) = Z ∞ 0 f A ( A | a 2 ) f a 2 ( a 2 ) da 2 , = 1 2 K 1 ( η ) p 2 π ρ 2 Z ∞ 0 1 √ a 2 exp − 1 2 η a 2 + η + A 2 /ρ 2 a 2 da 2 . The above integral can be e valuated usin g t he GIG( λ, a, b ) kernel in ( 15 ) with λ = 1 / 2 , a = η , b = η + A 2 /ρ 2 , thereby yielding f A ( A ) = 1 p 2 π ρ 2 K 1 ( η ) η + A 2 /ρ 2 η 1 / 4 K 1 / 2 p η ( η + A 2 /ρ 2 ) , which furt h er simp l ifies to the density fun ction of the Hyp erb olic( η , ρ 2 ) distribution in ( 2 ), using the clo s ed form expression K 1 / 2 ( x ) = p π / 2 x e − x . A p pendix B A dditional Results f or Ames Housing Dataset In this section, we reproduce the results for GECM-HEM and EMVS based on all the 1 00 replicates intended for our analysis. The results on both predicti ve performance (Figure 6 ) and model size 33 (T able 7 ) for t he methods are nearly similar to th o se stated in Section 4.3 , with GECM-HEM visibly outp erforming EMVS i n every aspect of comparison. 0.006 0.008 0.010 0.012 GECM−HEM EMVS Predictive MeAD 0.80 0.85 0.90 0.95 GECM−HEM EMVS Empirical Coverage Probability 0.035 0.040 0.045 0.050 GECM−HEM EMVS Median Width Figure 6: Predictive performance of t h e proposed GECM-HEM m et h od and EMVS for Ames Housing dataset. In t h e middle plot, the dashed line marks a coverage of 90%. The boxplo t s are based on 100 replications. T able 7: Fiv e-number summ ary statist i cs of the num b er of covariates included in th e model by the p roposed GECM-HEM method and EMVS for the Ames Housing dataset. The statistics are computed based on 100 replicatio n s. Method Mini mum 1st Quartile Median 3rd Quartil e Maximum GECM-HEM 33 38 40 41 59 EMVS 3 13 14 14 16 A p pendix C S ome Useful Distributions Some of the frequently encount ered probability distributions in thi s article are revie wed i n t h is section, to remove ambiguity about the p arameterizatio n s of their density functions used herein. 1. T h e generalized in verse Gaussian (GIG) distribution, denoted by GIG( λ, a, b ) , has a densi t y f GIG ( x | λ, a, b ) = ( a/b ) λ/ 2 2 K λ ( √ ab ) x λ − 1 e − ( ax + b/x ) / 2 , x > 0 , (15) where a, b > 0 , p ∈ R and K λ is a modified Bessel function of the s econd ki n d. 34 2. T h e densit y function of the gamma d i stribution Gamma( a, b ) is f Gamma ( x | a, b ) = b a Γ( a ) x a − 1 e − bx , x > 0 , (16) where a > 0 and b > 0 are t he shape and t he rate parameters respectiv ely . 3. Sim ilarly , the i n verse gamm a distribution In vGamma ( a, b ) has t h e density f In vGamma ( x | a, b ) = b a Γ( a ) x − a − 1 e − b/x , x > 0 , (17) where a > 0 and b > 0 respectiv ely denote the shape and the scale parameters. 4. T h e probability mass functi on o f the discrete un iform distribution DiscUniform( G ) on a finite set of real values G ca n be expressed as f DiscUniform ( x ) = 1 |G | , x ∈ G , (18) where |G | represents the number of elements in G . 35

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment