Contextual Safety Reasoning and Grounding for Open-World Robots

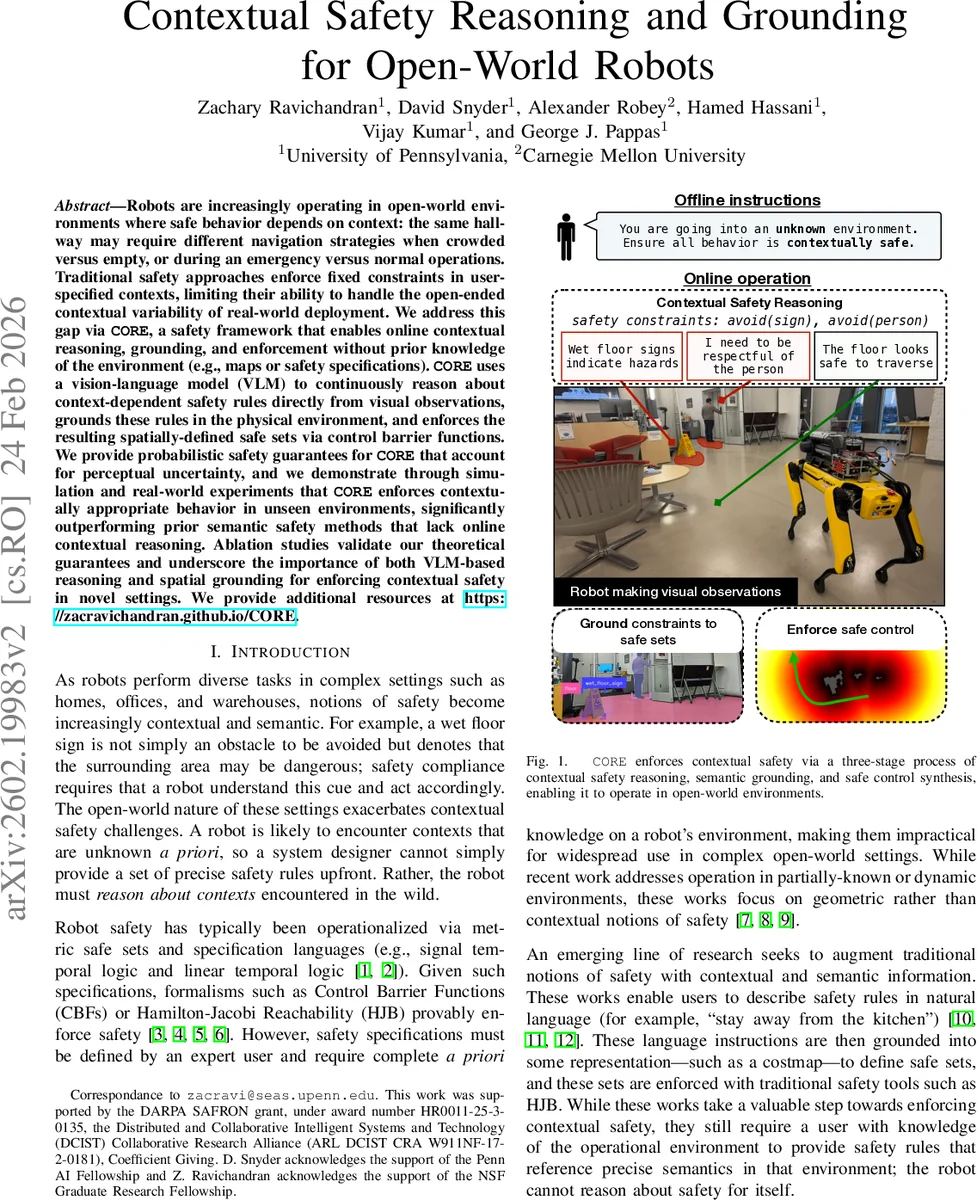

Robots are increasingly operating in open-world environments where safe behavior depends on context: the same hallway may require different navigation strategies when crowded versus empty, or during an emergency versus normal operations. Traditional safety approaches enforce fixed constraints in user-specified contexts, limiting their ability to handle the open-ended contextual variability of real-world deployment. We address this gap via CORE, a safety framework that enables online contextual reasoning, grounding, and enforcement without prior knowledge of the environment (e.g., maps or safety specifications). CORE uses a vision-language model (VLM) to continuously reason about context-dependent safety rules directly from visual observations, grounds these rules in the physical environment, and enforces the resulting spatially-defined safe sets via control barrier functions. We provide probabilistic safety guarantees for CORE that account for perceptual uncertainty, and we demonstrate through simulation and real-world experiments that CORE enforces contextually appropriate behavior in unseen environments, significantly outperforming prior semantic safety methods that lack online contextual reasoning. Ablation studies validate our theoretical guarantees and underscore the importance of both VLM-based reasoning and spatial grounding for enforcing contextual safety in novel settings. We provide additional resources at https://zacravichandran.github.io/CORE.

💡 Research Summary

The paper introduces CORE (Contextual Safety Reasoning and Enforcement), a novel safety framework that enables robots to operate safely in open‑world environments without any prior maps or pre‑specified safety rules. CORE consists of three tightly coupled modules: (1) a vision‑language model (VLM) that continuously extracts context‑dependent safety constraints from raw RGB‑D observations, (2) a semantic grounding pipeline that maps the VLM‑generated predicates into spatially‑defined safe sets in the robot’s workspace, and (3) a control barrier function (CBF) based controller that enforces these safe sets while providing probabilistic safety guarantees that explicitly account for perception uncertainty.

The VLM is prompted with high‑level safety guidelines (e.g., “follow pedestrian social norms”) and the current image. It outputs a structured JSON containing safety predicates such as ON(floor), NEAR(person), AROUND(wet_floor_sign) together with a chain‑of‑thought justification. Four spatial operators (ON, NEAR, AROUND, BETWEEN) are used to encode how each semantic class should influence safety. This structured output is crucial for downstream grounding.

Grounding proceeds by first segmenting the image with an open‑vocabulary segmentation model (e.g., SAM) to obtain pixel masks for each semantic class identified by the VLM. Depending on the operator, morphological operations (dilation for AROUND, convex hull for BETWEEN, etc.) generate pixel‑level safe and unsafe regions. Depth information projects these pixels into a 3‑D point cloud, which is then accumulated into a 2‑D occupancy grid of the robot’s workspace. For each grid cell the algorithm counts safe and unsafe point observations, yielding a Bayesian estimate of the probability that a state lying in that cell is safe. Cells whose probability exceeds a threshold τ form the final safe set S. A signed‑distance barrier function h(x) is constructed over S, enabling standard forward‑invariance CBF constraints.

The key theoretical contribution is a probabilistic safety guarantee: because the barrier function is built from noisy perception, the authors prove that, under mild assumptions, the probability of exiting the safe set within K steps is bounded by a user‑specified δ. This extends existing CBF literature, which typically only models state or dynamics uncertainty, by explicitly handling perception‑driven barrier construction.

Experiments include both simulation scenarios (crowded vs. empty hallways, emergency vs. normal operation) and real‑world trials on a mobile robot platform equipped with an RGB‑D camera. CORE is compared against (a) an oracle that uses ground‑truth context, (b) prior semantic‑safety methods that rely on static language‑specified rules, and (c) a baseline that uses only segmentation without VLM reasoning. CORE matches the oracle’s safety performance while requiring no prior knowledge, and outperforms the baselines by a factor of roughly five in terms of successful safe navigation episodes. Ablation studies confirm that both the VLM reasoning and the spatial grounding steps are essential: removing either dramatically reduces safety success rates.

The authors discuss limitations such as VLM inference errors propagating through the pipeline, computational overhead of running large VLMs and segmentation models in real time, and the current inability to resolve conflicting contextual rules in multi‑agent settings. Future work is outlined to integrate VLM and CBF optimization more tightly, incorporate multimodal cues (speech, textual instructions), and validate the approach in larger, more diverse real‑world deployments.

In summary, CORE demonstrates that contextual safety can be achieved on-the-fly by marrying modern vision‑language understanding with classical control‑theoretic safety tools, offering a promising pathway toward truly autonomous robots that can reason about and respect the nuanced safety expectations of everyday human environments.

Comments & Academic Discussion

Loading comments...

Leave a Comment