DiSPo: Diffusion-SSM based Policy Learning for Coarse-to-Fine Action Discretization

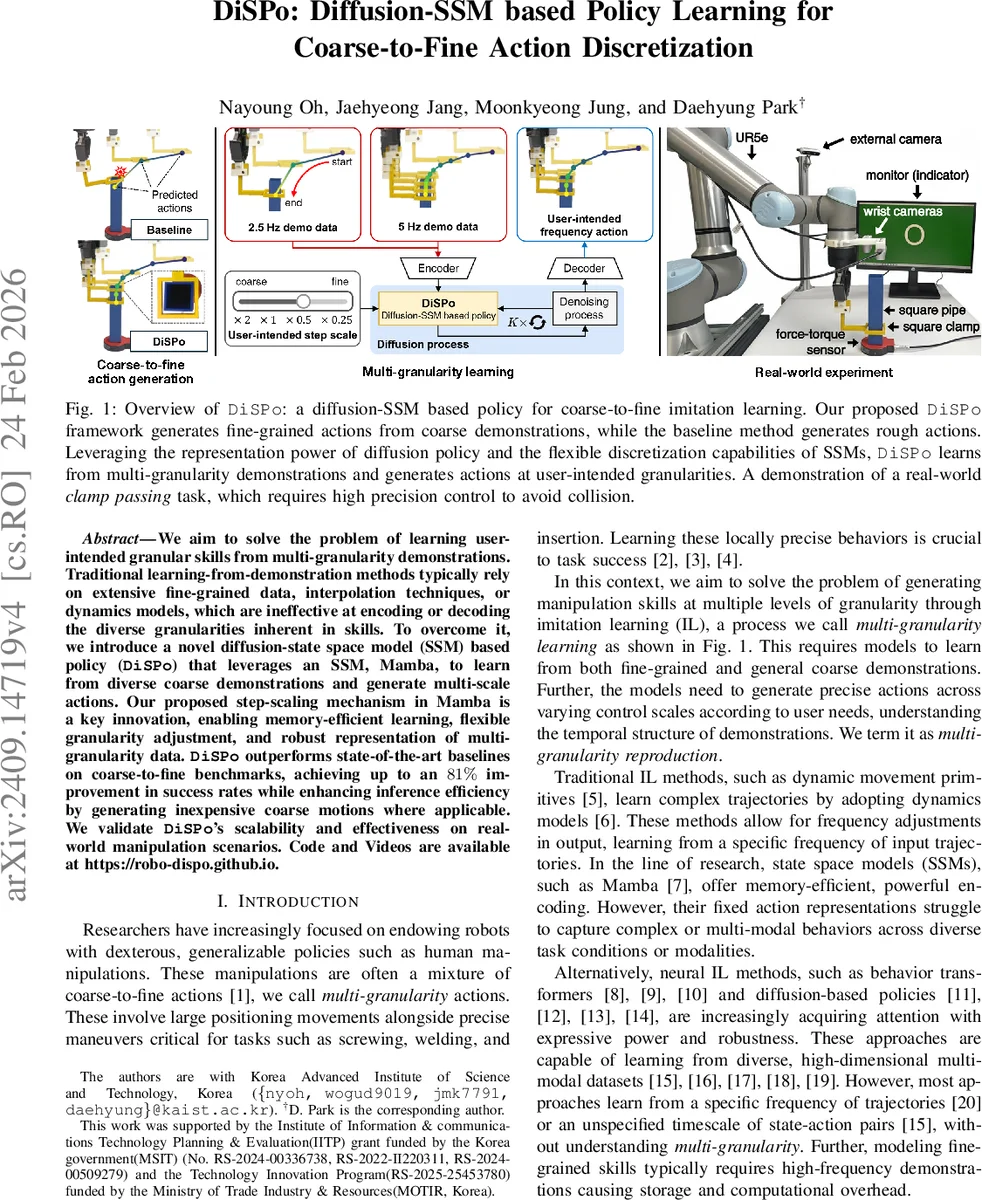

We aim to solve the problem of generating coarse-to-fine skills learning from demonstrations (LfD). To scale precision, traditional LfD approaches often rely on extensive fine-grained demonstrations with external interpolations or dynamics models with limited generalization capabilities. For memory-efficient learning and convenient granularity change, we propose a novel diffusion-state space model (SSM) based policy (DiSPo) that learns from diverse coarse skills and produces varying control scales of actions by leveraging an SSM, Mamba. Our evaluations show the adoption of Mamba and the proposed step-scaling method enable DiSPo to outperform in three coarse-to-fine benchmark tests with maximum 81% higher success rate than baselines. In addition, DiSPo improves inference efficiency by generating coarse motions in less critical regions. We finally demonstrate the scalability of actions with simulation and real-world manipulation tasks. Code and Videos are available at https://robo-dispo.github.io.

💡 Research Summary

The paper introduces DiSPo (Diffusion‑SSM based Policy), a novel framework for learning and executing robot manipulation skills that span multiple temporal granularities, from coarse, large‑scale motions to fine, high‑precision actions. Traditional learning‑from‑demonstration (LfD) approaches either require large amounts of high‑frequency demonstration data or rely on external interpolation and dynamics models, both of which are memory‑intensive and limited in generalization. DiSPo addresses these limitations by integrating two powerful ideas: (1) a diffusion‑based denoising process (DDPM) that progressively removes Gaussian noise from an action sequence, and (2) a step‑scaled version of the state‑space model (SSM) Mamba, which dynamically adjusts the discrete time step Δ based on a user‑provided scale factor r.

The architecture consists of N stacked DiSPo blocks, each built around a modified Mamba block. At each diffusion step k, the model receives a noisy action segment a^(k), a history of observations o, a diffusion‑step embedding k, and a vector of step‑scale factors r. The observations and actions are linearly embedded, the diffusion step is sinusoidally encoded, and all three are combined via adaptive layer normalization (adaLN). The step‑scaled Mamba block then predicts the SSM parameters (A, B, C) and a scaled time step Δ_i = r · SoftPlus(f_Δ(u_i)). This scaling allows the same network to operate on sequences sampled at any frequency, effectively giving the policy a controllable “temporal resolution.” After the internal SSM updates, an action head predicts the noise ε̂^(k) for the current segment. The denoised action for the next diffusion step is computed as a^(k‑1) = α·a^(k) − γ·ε̂^(k) + 𝒩(0,σ²I), following the standard DDPM formulation. An auxiliary observation head reconstructs the raw observation (image + proprioception) to encourage the network to retain fine visual details.

Training proceeds in two stages. First, a pre‑training phase augments the original demonstration dataset by randomly resampling each trajectory with different scale factors r_j, thereby creating a set of sequences at various frequencies ω_j. The loss combines a mean‑squared error on the predicted noise (L_ε) and a reconstruction loss on the observation (L_o), weighted by λ. This stage teaches the model to handle arbitrary granularities without over‑fitting to a single sampling rate. Second, a fine‑tuning phase generates “pseudo demonstrations” at higher frequencies that are not present in the original data. Starting from Gaussian noise, the pretrained DiSPo runs the full diffusion process with a target scale r = ω_target/ω_0, producing a noise‑free high‑frequency action sequence. To improve fidelity, the low‑frequency portion of this generated sequence is replaced with the original demonstration (plus noise) before the next diffusion step, ensuring that the model retains the overall task structure while learning finer motions.

The authors evaluate DiSPo on three simulated benchmarks—clamp passing, passage passing, and button touch—each requiring both coarse positioning and fine adjustments, as well as on a real‑world clamp‑passing task with a physical robot. Baselines include behavior transformers, diffusion‑based policies, and a vanilla Mamba policy without step scaling. DiSPo consistently outperforms all baselines, achieving up to an 81 % increase in success rate. Notably, the step‑scaled mechanism enables users to request actions that are twice as fine or twice as coarse simply by setting r = 2 or r = 0.5, without retraining. Moreover, because the policy can generate inexpensive coarse motions in regions where precision is unnecessary, inference time and computational load are reduced compared with fixed‑step approaches.

Key contributions of the work are: (1) a memory‑efficient SSM encoder combined with diffusion denoising that yields a robust, expressive policy; (2) the introduction of a step‑scale factor that gives direct control over temporal granularity, a capability absent in prior SSM or diffusion policies; (3) a two‑stage training pipeline that leverages random resampling and pseudo‑demonstration generation to overcome the scarcity of high‑frequency data; and (4) extensive empirical validation demonstrating both simulation and real‑world effectiveness. The paper opens several avenues for future research, such as extending the framework to multi‑joint manipulators, integrating non‑visual modalities like tactile feedback, and learning the step‑scale predictor ϕ_r via reinforcement learning for online adaptation. Overall, DiSPo represents a significant step toward flexible, scalable imitation learning that can seamlessly transition between coarse and fine robot behaviors.

Comments & Academic Discussion

Loading comments...

Leave a Comment