QuickGrasp: Responsive Video-Language Querying Service via Accelerated Tokenization and Edge-Augmented Inference

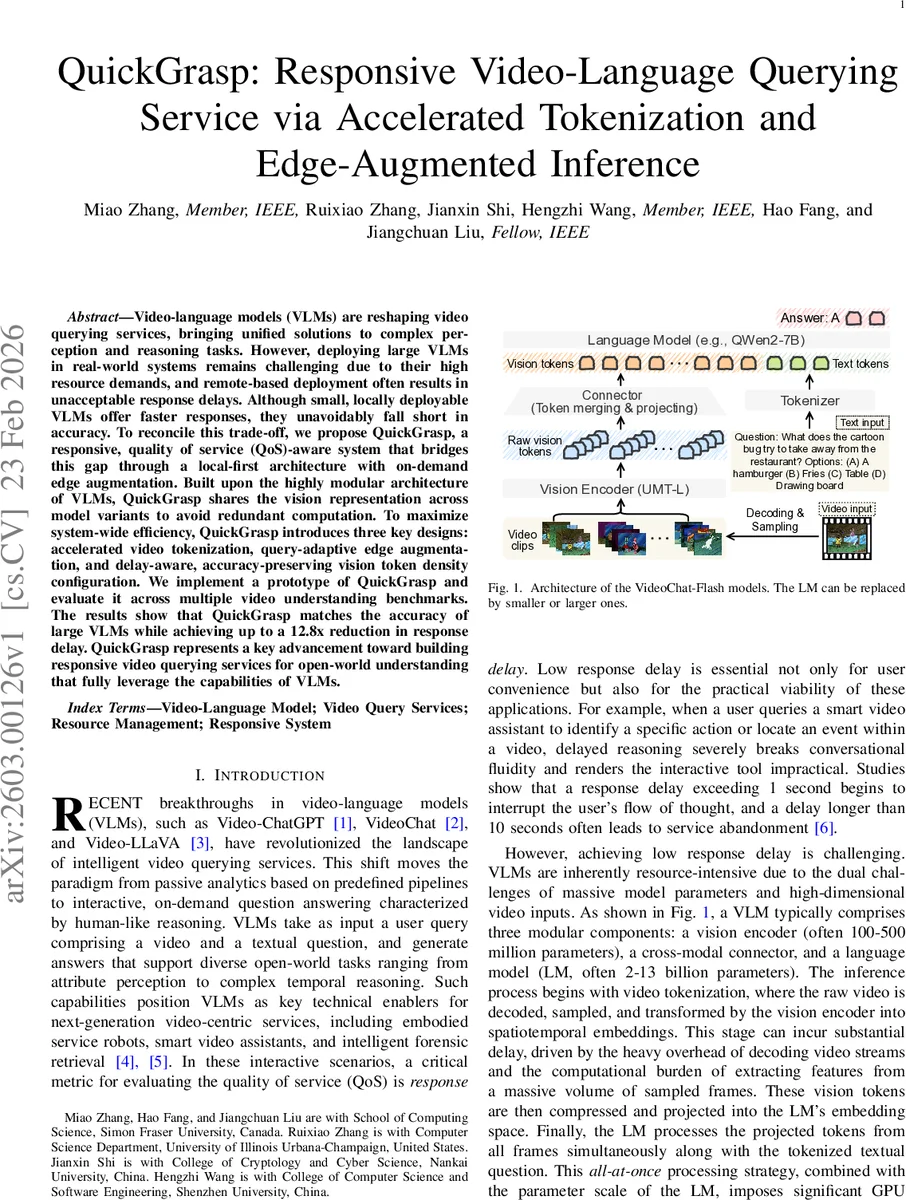

Video-language models (VLMs) are reshaping video querying services, bringing unified solutions to complex perception and reasoning tasks. However, deploying large VLMs in real-world systems remains challenging due to their high resource demands, and remote-based deployment often results in unacceptable response delays. Although small, locally deployable VLMs offer faster responses, they unavoidably fall short in accuracy. To reconcile this trade-off, we propose QuickGrasp, a responsive, quality of service (QoS)-aware system that bridges this gap through a local-first architecture with on-demand edge augmentation. Built upon the highly modular architecture of VLMs, QuickGrasp shares the vision representation across model variants to avoid redundant computation. To maximize system-wide efficiency, QuickGrasp introduces three key designs: accelerated video tokenization, query-adaptive edge augmentation, and delay-aware, accuracy-preserving vision token density configuration. We implement a prototype of QuickGrasp and evaluate it across multiple video understanding benchmarks. The results show that QuickGrasp matches the accuracy of large VLMs while achieving up to a 12.8x reduction in response delay. QuickGrasp represents a key advancement toward building responsive video querying services for open-world understanding that fully leverage the capabilities of VLMs.

💡 Research Summary

The paper introduces QuickGrasp, a responsive video‑language querying service that reconciles the classic speed‑accuracy trade‑off of large versus small vision‑language models (VLMs). Large VLMs (e.g., 7‑billion‑parameter models) deliver state‑of‑the‑art accuracy but require massive GPU memory and incur prohibitive network latency when deployed as remote services. Small, locally‑deployable VLMs (≈2 B parameters) run fast on consumer‑grade GPUs yet suffer a noticeable drop in answer quality, especially on longer or more complex videos. QuickGrasp adopts a “local‑first” architecture with on‑demand edge augmentation, leveraging the modular nature of VLMs to share vision representations across model variants and thereby avoid redundant computation.

Three technical contributions enable this design. First, accelerated video tokenization tackles the dominant bottleneck identified in profiling studies: decoding and frame sampling consume 42 %–73 % of total inference time, especially for long videos. QuickGrasp replaces naïve fixed‑rate sampling with keyframe‑aligned sampling and a pipelined decode‑to‑token conversion that dramatically reduces preprocessing latency. Second, query‑adaptive edge augmentation reuses the locally generated vision tokens at the edge, transmitting only compressed token streams instead of raw video frames. The decision to offload a query is driven by the calibrated confidence score of the local VLM rather than static textual difficulty estimates, ensuring that the edge is invoked only when the local model is likely to err. Empirical analysis shows that the 2 B model matches the 7 B model’s answer in 60 %–76 % of cases, making confidence‑based routing highly effective. Third, QoS‑aware token density configuration treats the number of vision tokens per frame as a controllable resource. Because token density directly trades off network/computational cost against answer quality, QuickGrasp formulates density selection as a contextual multi‑armed bandit (CMAB) problem. The system continuously learns a policy that maximizes long‑term utility—high accuracy with low response delay—by adapting token density to each query’s context (video length, question complexity, local confidence).

The authors implement a prototype on a consumer‑grade NVIDIA RTX 4090 and an edge server equipped with an RTX PRO 6000. Evaluation spans three comprehensive video‑understanding benchmarks, including MVBench (short clips) and Video‑MME (short, medium, and long videos up to one hour). Results demonstrate that QuickGrasp attains the same accuracy as the large 7 B VLM while reducing end‑to‑end response time by up to 12.8×. For long videos, the average latency drops from >15 seconds (remote‑only deployment) to under 2 seconds. Token density adaptation cuts network traffic by roughly 40 % compared with a fixed‑density baseline, and edge offloading occurs for only about 22 % of queries, preserving bandwidth and energy.

In summary, QuickGrasp provides a practical blueprint for building real‑time, open‑world video‑language services. By sharing vision encoders, using confidence‑driven routing, and dynamically tuning token density, it simultaneously captures the reasoning power of large models and the low‑latency advantage of small, locally‑run models. The work opens avenues for further research on multi‑modal extensions (audio, sensor data), heterogeneous edge resources, and more sophisticated bandit‑based QoS optimization.

Comments & Academic Discussion

Loading comments...

Leave a Comment