Behavior Learning (BL): Learning Hierarchical Optimization Structures from Data

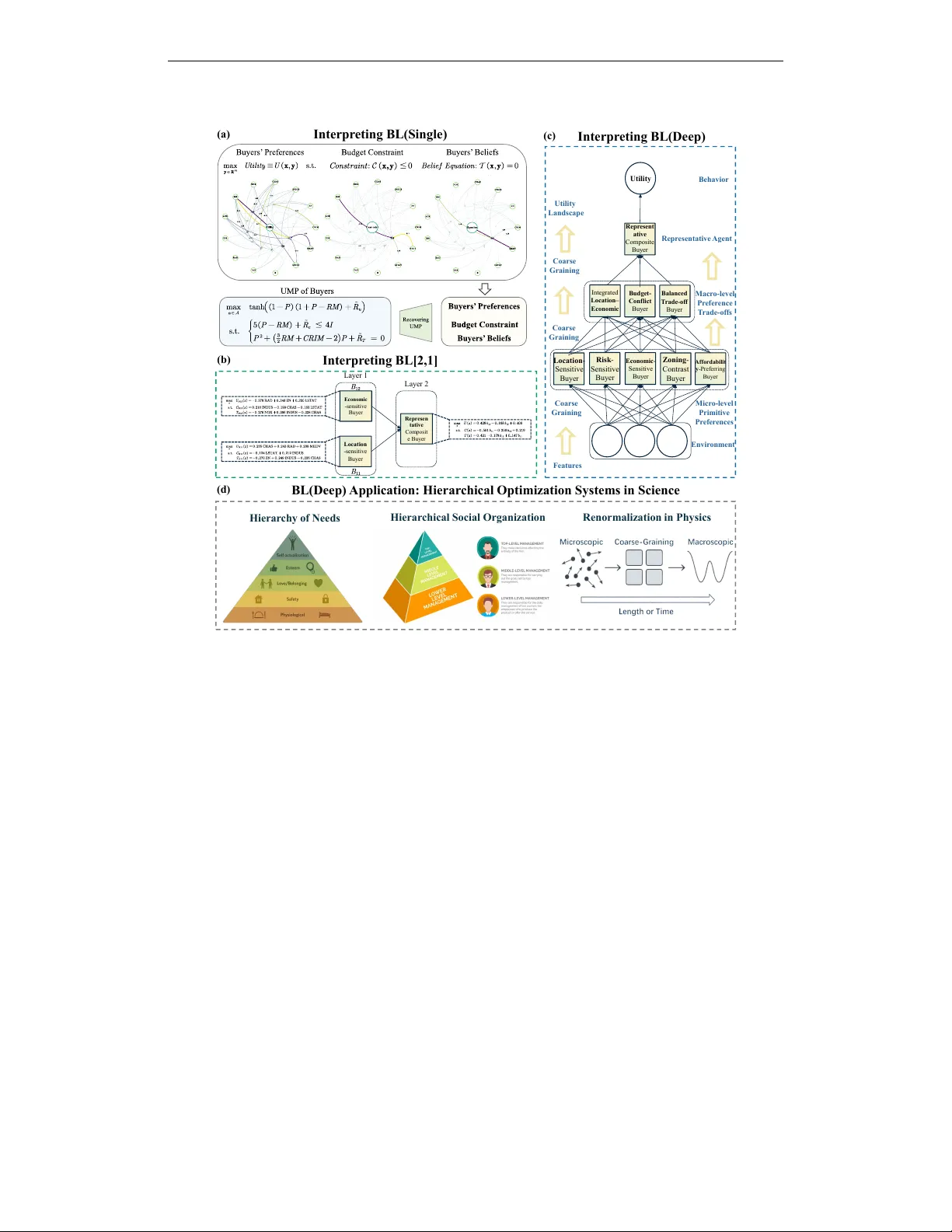

Inspired by behavioral science, we propose Behavior Learning (BL), a novel general-purpose machine learning framework that learns interpretable and identifiable optimization structures from data, ranging from single optimization problems to hierarchi…

Authors: Zhenyao Ma, Yue Liang, Dongxu Li