AdaWorldPolicy: World-Model-Driven Diffusion Policy with Online Adaptive Learning for Robotic Manipulation

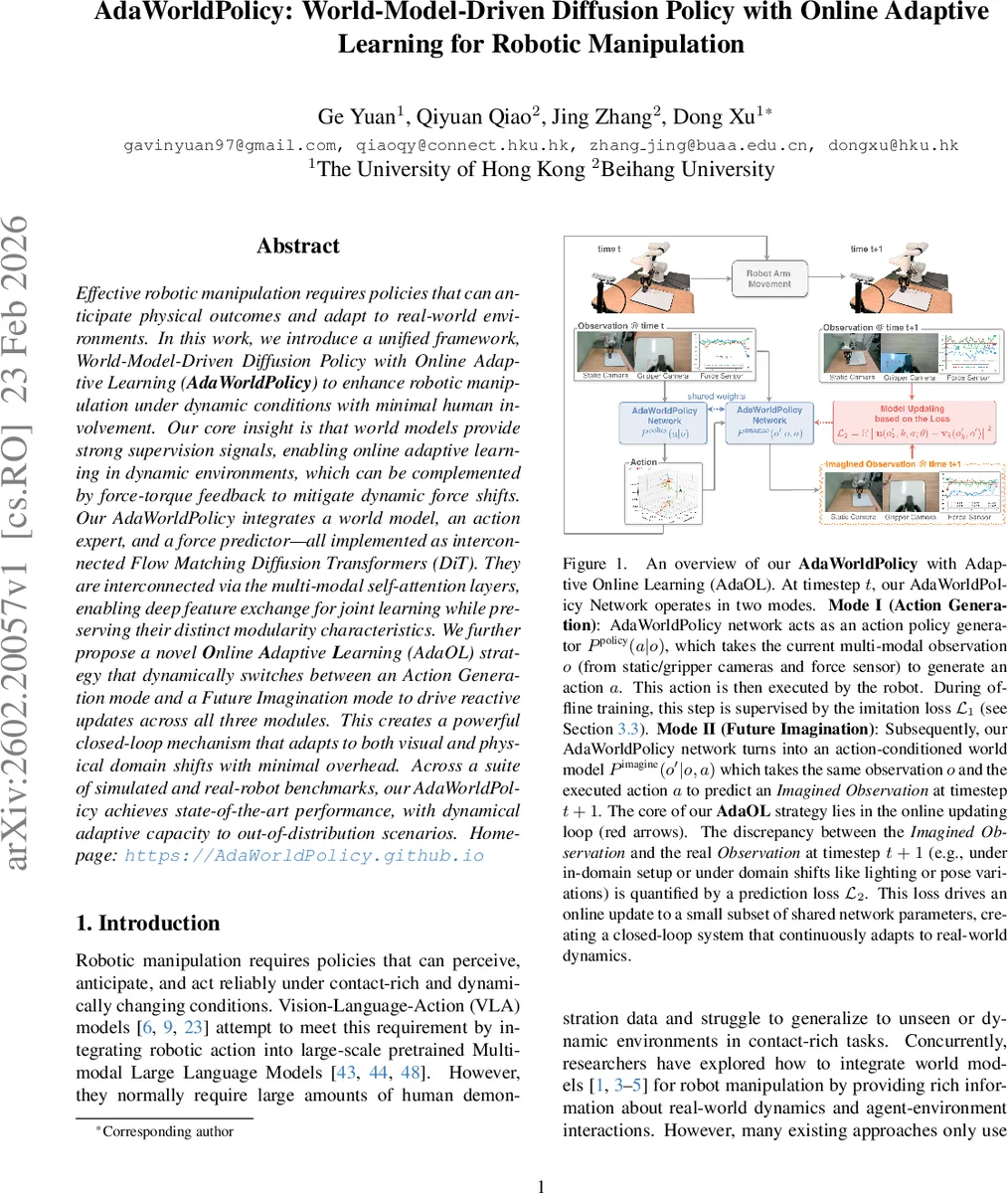

Effective robotic manipulation requires policies that can anticipate physical outcomes and adapt to real-world environments. Effective robotic manipulation requires policies that can anticipate physical outcomes and adapt to real-world environments. In this work, we introduce a unified framework, World-Model-Driven Diffusion Policy with Online Adaptive Learning (AdaWorldPolicy) to enhance robotic manipulation under dynamic conditions with minimal human involvement. Our core insight is that world models provide strong supervision signals, enabling online adaptive learning in dynamic environments, which can be complemented by force-torque feedback to mitigate dynamic force shifts. Our AdaWorldPolicy integrates a world model, an action expert, and a force predictor-all implemented as interconnected Flow Matching Diffusion Transformers (DiT). They are interconnected via the multi-modal self-attention layers, enabling deep feature exchange for joint learning while preserving their distinct modularity characteristics. We further propose a novel Online Adaptive Learning (AdaOL) strategy that dynamically switches between an Action Generation mode and a Future Imagination mode to drive reactive updates across all three modules. This creates a powerful closed-loop mechanism that adapts to both visual and physical domain shifts with minimal overhead. Across a suite of simulated and real-robot benchmarks, our AdaWorldPolicy achieves state-of-the-art performance, with dynamical adaptive capacity to out-of-distribution scenarios.

💡 Research Summary

AdaWorldPolicy presents a unified framework that tightly integrates a world model, an action expert, and a force‑torque predictor within a single diffusion‑based transformer architecture, and equips this system with a novel online adaptive learning mechanism (AdaOL) to handle visual and physical domain shifts in real‑time robotic manipulation. The three components are implemented as Flow Matching Diffusion Transformers (DiTs) and are interconnected through Multi‑modal Self‑Attention (MMSA) layers, allowing deep cross‑modal feature exchange while preserving each module’s specialized representation.

The framework operates in two complementary modes. In Mode I (Action Generation), the action token is initialized as pure Gaussian noise; conditioned on the current multi‑modal observation (static camera, gripper camera, force‑torque readings, textual goal embedding, and robot state), the Action Model denoises this token to produce a robot action. Supervision in this mode uses a Flow Matching loss L₁ that aligns the denoised action field with the ground‑truth action from the offline dataset. In Mode II (Future Imagination), the same action is fed as a conditioning token, and the World Model predicts the next visual observation while the Force Predictor forecasts the subsequent force‑torque vector. A second Flow Matching loss L₂ measures the discrepancy between the imagined observation/force and the actual sensor readings at the next timestep.

AdaOL leverages the prediction error L₂ as a self‑supervised signal to update a small set of shared parameters (implemented as LoRA adapters) after each interaction step. By alternating between the two modes, the system first executes an action, then imagines its consequences, and finally corrects its internal representations based on the real outcome. This closed‑loop adaptation simultaneously mitigates lighting changes, camera pose variations, and dynamic force shifts such as varying contact stiffness or friction, all with only a few milliseconds of additional computation, preserving real‑time control at >30 Hz.

Technical contributions include: (1) turning the world model from a passive “digital twin” into an active supervisor that drives online parameter updates; (2) employing diffusion‑based generation with Flow Matching to embed physical consistency directly into the policy, avoiding the physically implausible actions sometimes produced by pure behavior‑cloning diffusion policies; (3) designing MMSA to enable each modality (vision, force, text, robot state) to query relevant information from the others without collapsing them into a single shared embedding space; and (4) demonstrating that low‑rank adapter updates are sufficient for rapid test‑time adaptation, keeping memory and compute overhead minimal.

Empirical evaluation spans a suite of simulated benchmarks (PushT, CALVIN, LIBERO) and real‑world experiments on a 7‑DoF arm with a parallel‑jaw gripper. In out‑of‑distribution (OOD) scenarios, AdaWorldPolicy surpasses prior state‑of‑the‑art methods by more than 5 % in success rate, while achieving roughly a 1 % gain on in‑distribution tasks. The force‑aware variant reduces force prediction error by over 30 % in contact‑rich tasks such as insertion and assembly, leading to smoother and safer interactions. Ablation studies confirm that both the dual‑mode operation and the MMSA‑mediated feature sharing are essential for the observed performance gains.

Limitations include reliance on a fixed set of LoRA parameters, which may constrain adaptation under extreme domain shifts, and a force predictor that currently models only scalar torque components rather than full contact wrench. Future work aims to extend the architecture to multi‑robot collaboration, richer language‑conditioned goals, and more comprehensive physical sensing (e.g., tactile arrays).

In summary, AdaWorldPolicy demonstrates that a world‑model‑driven diffusion policy, coupled with a lightweight online adaptation loop, can achieve robust, physically consistent, and rapidly adaptable manipulation in dynamic, contact‑rich environments, setting a new benchmark for real‑world robotic learning.

Comments & Academic Discussion

Loading comments...

Leave a Comment