Learn by Reasoning: Analogical Weight Generation for Few-Shot Class-Incremental Learning

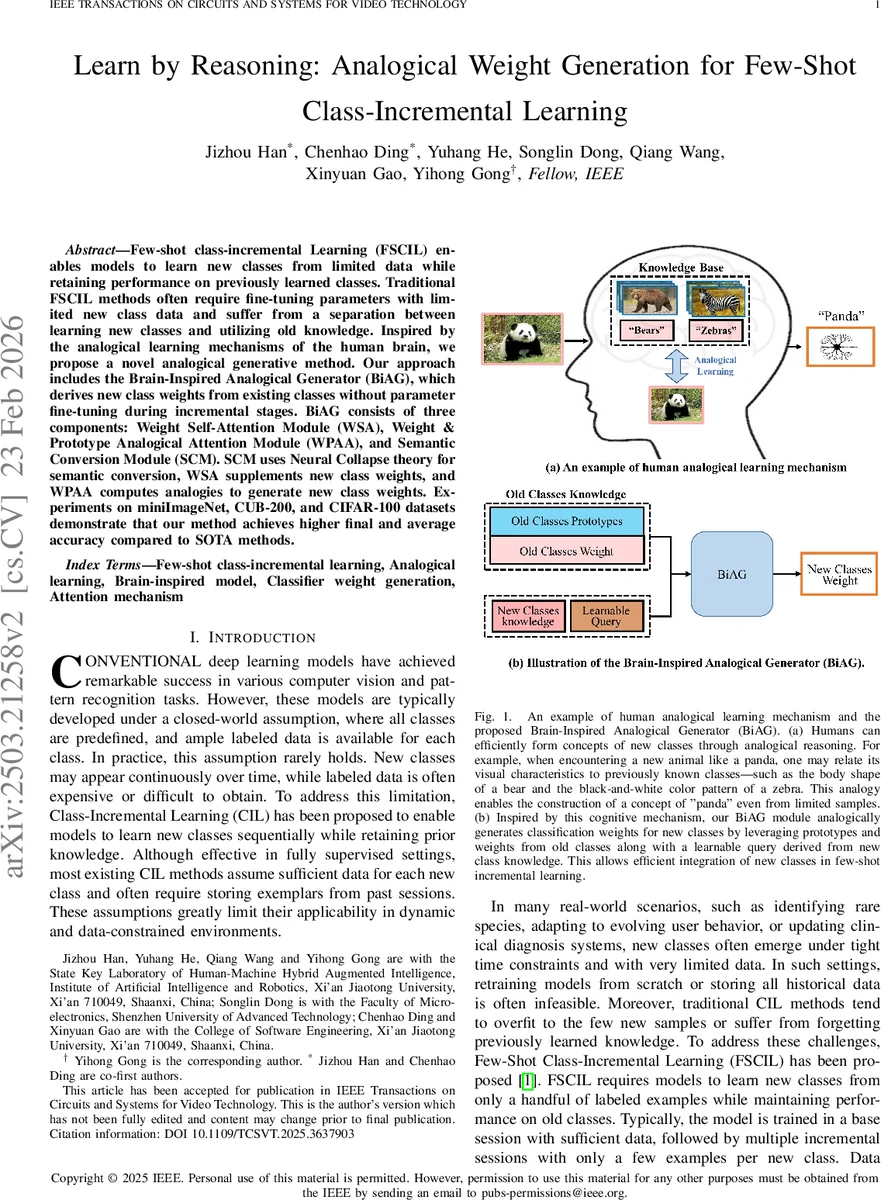

Few-shot class-incremental Learning (FSCIL) enables models to learn new classes from limited data while retaining performance on previously learned classes. Traditional FSCIL methods often require fine-tuning parameters with limited new class data and suffer from a separation between learning new classes and utilizing old knowledge. Inspired by the analogical learning mechanisms of the human brain, we propose a novel analogical generative method. Our approach includes the Brain-Inspired Analogical Generator (BiAG), which derives new class weights from existing classes without parameter fine-tuning during incremental stages. BiAG consists of three components: Weight Self-Attention Module (WSA), Weight & Prototype Analogical Attention Module (WPAA), and Semantic Conversion Module (SCM). SCM uses Neural Collapse theory for semantic conversion, WSA supplements new class weights, and WPAA computes analogies to generate new class weights. Experiments on miniImageNet, CUB-200, and CIFAR-100 datasets demonstrate that our method achieves higher final and average accuracy compared to SOTA methods.

💡 Research Summary

Few‑Shot Class‑Incremental Learning (FSCIL) requires a model to continuously incorporate new categories from only a handful of examples while preserving performance on all previously learned classes. Existing FSCIL approaches either jointly optimize all classes using meta‑learning, replay, or dynamic architectures, or they treat new‑class learning and old‑class retention as separate sequential steps. Both paradigms typically involve fine‑tuning of model parameters during each incremental session, which leads to over‑fitting on the scarce new‑class data and to catastrophic forgetting of earlier knowledge.

Motivated by the human brain’s ability to learn new concepts through analogical reasoning—drawing parallels between a novel item and previously known ones—the authors propose a brain‑inspired analogical generator (BiAG) that synthesizes classifier weights for new classes without any parameter updates in the incremental phases. BiAG operates on three pillars:

-

Semantic Conversion Module (SCM) – Leveraging the Neural Collapse phenomenon, SCM learns a mapping that converts class prototypes (mean feature vectors) into the space of classifier weights. Neural Collapse predicts that, after sufficient training, prototypes and their corresponding linear classifier weights align directionally, enabling a principled semantic bridge between feature and weight spaces.

-

Weight Self‑Attention Module (WSA) – Before analogical reasoning, WSA refines the provisional new‑class weight by applying self‑attention over the new prototype and the set of old class weights. This step highlights salient attributes of the new class that are shared with existing knowledge, analogous to how humans first isolate key features of a novel concept.

-

Weight & Prototype Analogical Attention Module (WPAA) – The core analogical engine, WPAA, treats old class prototypes and weights as a knowledge base. A learnable query derived from the new prototype interacts with this base via attention, producing a weighted recombination of old weights that constitutes the final generated weight for the new class. In effect, WPAA performs a relational mapping (“old is to old‑prototype as new is to new‑prototype”) that mirrors human structure‑mapping theory.

Training proceeds in two stages. First, a conventional base session trains a feature extractor on a large, fully‑labeled dataset, yielding reliable prototypes and classifier weights for the base classes. Second, BiAG is trained in a pseudo‑incremental fashion: base classes are randomly split into “old” and “new” subsets, and the generator learns to reconstruct the true new‑class weights from the simulated old knowledge using a generation loss (L_G). This equips BiAG with the ability to perform analogical weight synthesis for any future incremental session.

During actual incremental inference, the feature extractor and BiAG parameters are frozen. For each new class, only its few‑shot prototype is computed; BiAG then instantly generates the corresponding classifier weight by consulting the stored old prototypes and weights. No replay buffers, exemplar storage, or fine‑tuning is required, dramatically reducing computational overhead and memory footprint.

Extensive experiments on three widely used benchmarks—miniImageNet, CUB‑200, and CIFAR‑100—covering 8–10 incremental sessions demonstrate that BiAG consistently outperforms state‑of‑the‑art FSCIL methods (e.g., TOPIC, ALFSCIL, NC‑FSCIL). The proposed method achieves higher final accuracy (the performance after the last session) and higher average accuracy (mean over all sessions), with improvements ranging from 1.5% to 4% absolute points. Ablation studies confirm that each component (SCM, WSA, WPAA) contributes meaningfully: removing SCM degrades the semantic alignment, omitting WSA harms the initial weight quality, and excluding WPAA eliminates the analogical reasoning capability altogether.

In summary, the paper makes three key contributions: (1) it introduces a biologically motivated analogical learning framework for FSCIL, (2) it operationalizes Neural Collapse theory to bridge prototypes and classifier weights, and (3) it delivers a parameter‑free incremental mechanism that efficiently integrates new classes while preserving prior knowledge. The approach opens avenues for extending analogical weight generation to other modalities (e.g., language, time‑series) and for exploring richer relational mappings such as graph‑based analogies in continual learning scenarios.

Comments & Academic Discussion

Loading comments...

Leave a Comment