Personalized Help for Optimizing Low-Skilled Users' Strategy

AIs can beat humans in game environments; however, how helpful those agents are to humans remains understudied. We augment CICERO, a natural language agent that demonstrates superhuman performance in Diplomacy, to generate both move and message advice based on player intentions. A dozen Diplomacy games with novice and experienced players, with varying advice settings, show that some of the generated advice is beneficial. It helps novices compete with experienced players and in some instances even surpass them. The mere presence of advice can be advantageous, even if players do not follow it.

💡 Research Summary

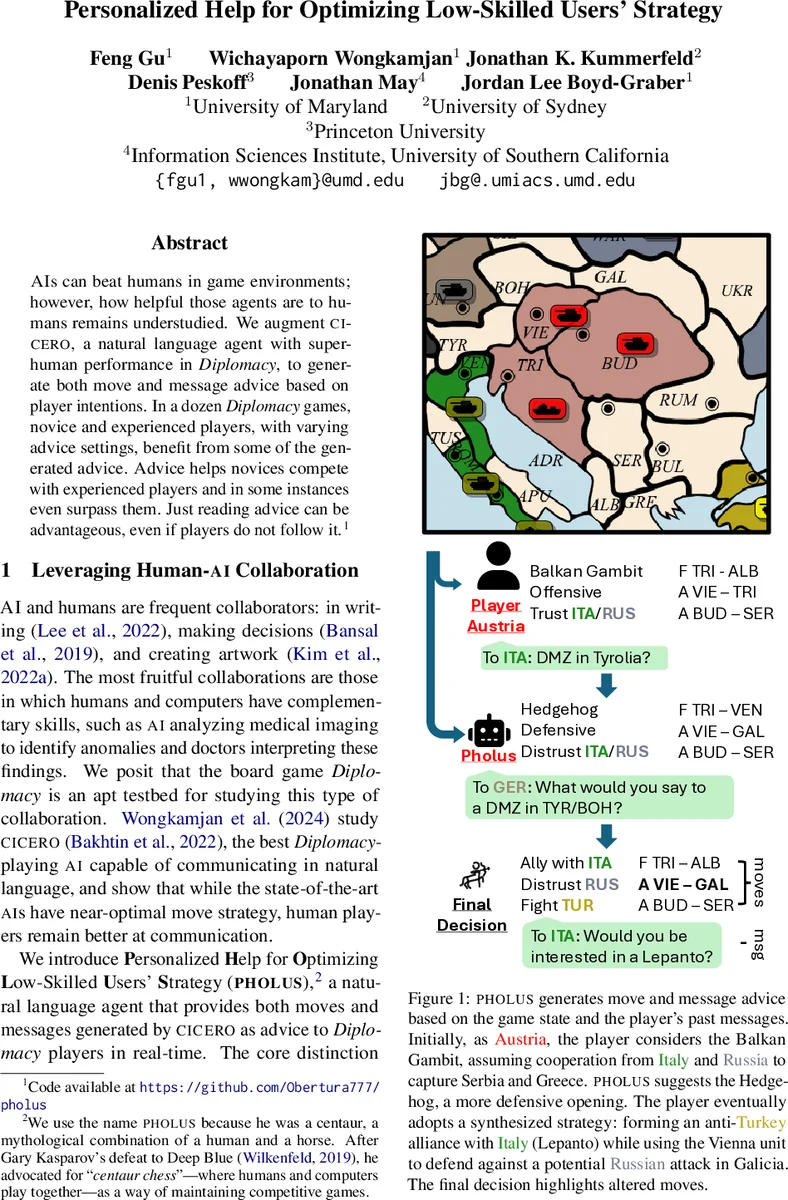

The paper introduces PHOLUS, a natural‑language advisor built on top of the state‑of‑the‑art Diplomacy AI CICERO. While CICERO can play the game at a superhuman level, it is primarily a game‑playing agent. PHOLUS instead observes the game state and the player’s past messages, inferring the player’s short‑term intentions, and then generates two types of real‑time advice: (1) move advice (specific unit orders) and (2) message advice (who to contact and what to say). The system never makes moves itself; it only suggests them to human participants.

To evaluate PHOLUS, the authors recruited 41 participants—both novices with no prior Diplomacy experience and veterans from online Diplomacy communities. Over twelve full games (approximately three hours each), each turn a player was randomly assigned to one of four conditions: no advice, message‑only advice, move‑only advice, or both move and message advice. In total the study collected 1,070 turns, 3,600 move‑advice entries, and 4,300 message‑advice entries.

Quantitative analysis used a regularized linear regression to predict supply‑center gains per turn from features such as power, turn number, player experience, and advice condition. The “no advice” condition had a negative coefficient (~‑0.05), indicating a performance penalty. Move advice showed a modest positive effect, and the combined move‑and‑message condition yielded the largest positive coefficient. Novices accepted move advice 32.6 % of the time and message advice 6.3 % of the time, about five times and nearly twice the corresponding acceptance rates of veterans (6.4 % and 3.4 %, respectively). Importantly, novices who received any advice were able to stay in the game longer and, in several games, ended with more supply centers than they started with, narrowing the gap with experienced players.
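To make the regression setup more concrete, here is a minimal sketch of how advice conditions could be one‑hot encoded and fit with a closed‑form L2 (ridge) penalty. The summary does not say which regularizer the authors used, so ridge is an assumption, as are the helper names (`encode`, `solve`, `ridge_fit`) and the reduced feature set. The six toy rows are invented purely for illustration and are not data from the study.

```python
from typing import List

# Advice settings named in the summary; the string labels are hypothetical.
CONDITIONS = ["none", "message", "move", "both"]

def encode(condition: str, is_novice: bool) -> List[float]:
    """One-hot encode the advice condition and append a novice flag."""
    row = [1.0 if condition == c else 0.0 for c in CONDITIONS]
    row.append(1.0 if is_novice else 0.0)
    return row

def solve(a: List[List[float]], b: List[float]) -> List[float]:
    """Solve a @ x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [x - factor * y for x, y in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]

def ridge_fit(xs: List[List[float]], ys: List[float], lam: float = 1.0) -> List[float]:
    """Closed-form ridge regression: w = (X^T X + lam * I)^-1 X^T y."""
    d = len(xs[0])
    xtx = [[sum(x[i] * x[j] for x in xs) + (lam if i == j else 0.0)
            for j in range(d)] for i in range(d)]
    xty = [sum(x[i] * y for x, y in zip(xs, ys)) for i in range(d)]
    return solve(xtx, xty)

# Invented toy rows: (condition, is_novice, supply-center gain this turn).
toy = [
    ("none", True, -1.0), ("none", False, 0.0), ("message", True, 0.0),
    ("move", False, 1.0), ("both", True, 1.0), ("both", False, 1.0),
]
xs = [encode(cond, novice) for cond, novice, _ in toy]
ys = [gain for _, _, gain in toy]
coefs = ridge_fit(xs, ys, lam=1.0)
weights = dict(zip(CONDITIONS + ["novice"], coefs))
print(weights)
```

On this toy data the fitted coefficients are ordered the same way the summary reports (negative for no advice, largest for combined advice), but only because the invented rows were built that way. The sketch omits an intercept and the power and turn‑number features for brevity.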

Agreement metrics were also computed. At the start of each turn, novices’ moves overlapped with PHOLUS suggestions 80 % of the time (agreement), but full equivalence (identical move sets) was only 46 %. By the end of the turn, agreement dropped by about 10 % and equivalence by 8 %, showing that players often incorporated parts of the advice rather than following it verbatim.
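The two metrics above can be illustrated with a short sketch. It assumes that "agreement" means the player's orders share at least one order with the suggestion and that "equivalence" means the two order sets are identical; the summary only gives the second definition explicitly, so the first is an interpretation. The order strings use a hypothetical shorthand, and the four toy turns are invented.

```python
from typing import Iterable, List, Tuple

Orders = Iterable[str]

def agreement(player: Orders, advice: Orders) -> bool:
    """True if the player and the advisor share at least one order (assumed definition)."""
    return bool(set(player) & set(advice))

def equivalence(player: Orders, advice: Orders) -> bool:
    """True if the player's order set is identical to the advised set."""
    return set(player) == set(advice)

def rates(turns: List[Tuple[Orders, Orders]]) -> Tuple[float, float]:
    """Return (agreement rate, equivalence rate) over (player, advice) pairs."""
    n = len(turns)
    if n == 0:
        return 0.0, 0.0
    agree = sum(agreement(p, a) for p, a in turns)
    equal = sum(equivalence(p, a) for p, a in turns)
    return agree / n, equal / n

# Invented toy turns: (player orders, advised orders).
toy_turns = [
    (["A VIE-GAL", "F TRI-ADR"], ["A VIE-GAL", "F TRI-ADR"]),  # identical sets
    (["A VIE-GAL", "F TRI H"], ["A VIE-GAL", "F TRI-ADR"]),    # partial overlap
    (["A VIE-TYR", "F TRI H"], ["A VIE-GAL", "F TRI-ADR"]),    # no overlap
    (["A BUD-SER"], ["A BUD-SER"]),                            # identical sets
]
agreement_rate, equivalence_rate = rates(toy_turns)
print(agreement_rate, equivalence_rate)
```

Under these definitions equivalence implies agreement, so the equivalence rate can never exceed the agreement rate, and the gap between the two is the share of turns where advice was only partly adopted.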

Qualitative analysis examined message advice. The authors parsed both the AI‑generated suggestions and the actual player messages into Abstract Meaning Representation (AMR) and measured similarity with the SMATCH score. Many pairs scored above 0.7, indicating that players frequently used the AI’s phrasing with minor edits (e.g., “bounce in Galicia again?” vs. “Do you want to bounce in Galicia again?”). In cases where strategic goals diverged—such as a player choosing to seek support from Turkey rather than deflecting blame onto Russia—the SMATCH score was near zero, reflecting a deliberate rejection of the AI’s diplomatic tactic. Survey responses confirmed that participants found the advice helpful for shaping their negotiation strategies, especially novices.
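As a rough illustration of the kind of score SMATCH produces, the sketch below computes an F1 over matching AMR triples. It is a deliberate simplification: real SMATCH also searches for the variable mapping between the two graphs that maximizes the match, whereas this version assumes the variables are already aligned. The function name `triple_f1` and the hand‑written triples are hypothetical and are not output from an actual AMR parser.

```python
from typing import Iterable, Tuple

Triple = Tuple[str, str, str]

def triple_f1(suggested: Iterable[Triple], written: Iterable[Triple]) -> float:
    """F1 over exactly matching triples, assuming variables are pre-aligned."""
    a, b = set(suggested), set(written)
    matched = len(a & b)
    if matched == 0:
        return 0.0
    precision = matched / len(b)
    recall = matched / len(a)
    return 2 * precision * recall / (precision + recall)

# Hypothetical triples for the suggestion "bounce in Galicia again?"
suggestion = [
    ("instance", "b", "bounce-01"),
    ("instance", "g", "Galicia"),
    ("location", "b", "g"),
    ("mod", "b", "again"),
]
# Hypothetical triples for the sent message "Do you want to bounce in Galicia again?"
message = suggestion + [
    ("instance", "w", "want-01"),
    ("ARG1", "w", "b"),
]
score = triple_f1(suggestion, message)
print(round(score, 3))
```

In this toy case all four suggestion triples survive in the longer message, so the score stays high even though the wording was expanded, which mirrors the "minor edits" pattern described above. Two messages pursuing different diplomatic tactics would share few or no triples and score near zero.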

The paper acknowledges several limitations. PHOLUS can only infer short‑term intentions from recent moves; it cannot model higher‑level goals like long‑term alliance formation. Its advice sometimes aligns with CICERO’s optimal utility rather than the player’s preferred style, leading to overly aggressive suggestions that novices may find unsuitable. Moreover, overly generic advice can reduce trust and acceptance.

In conclusion, PHOLUS demonstrates that personalized, real‑time AI advice can substantially improve the performance of low‑skill Diplomacy players, even when the advice is not fully followed. The study contributes to the broader field of human‑AI collaboration by showing that “advisory” interaction—providing strategic insight without taking control—can be more beneficial than direct AI autonomy, especially for learning in complex, communication‑heavy environments. Future work should focus on richer intention modeling, adaptive granularity of advice, and mechanisms to prevent over‑reliance while maximizing educational impact.
