A Causal Framework for Estimating Heterogeneous Effects of On-Demand Tutoring

This paper introduces a scalable causal inference framework for estimating the immediate, session-level effects of on-demand human tutoring embedded within adaptive learning systems. Because students seek assistance at moments of difficulty, conventi…

Authors: Kirk Vanacore, Danielle R Thomas, Digory Smith

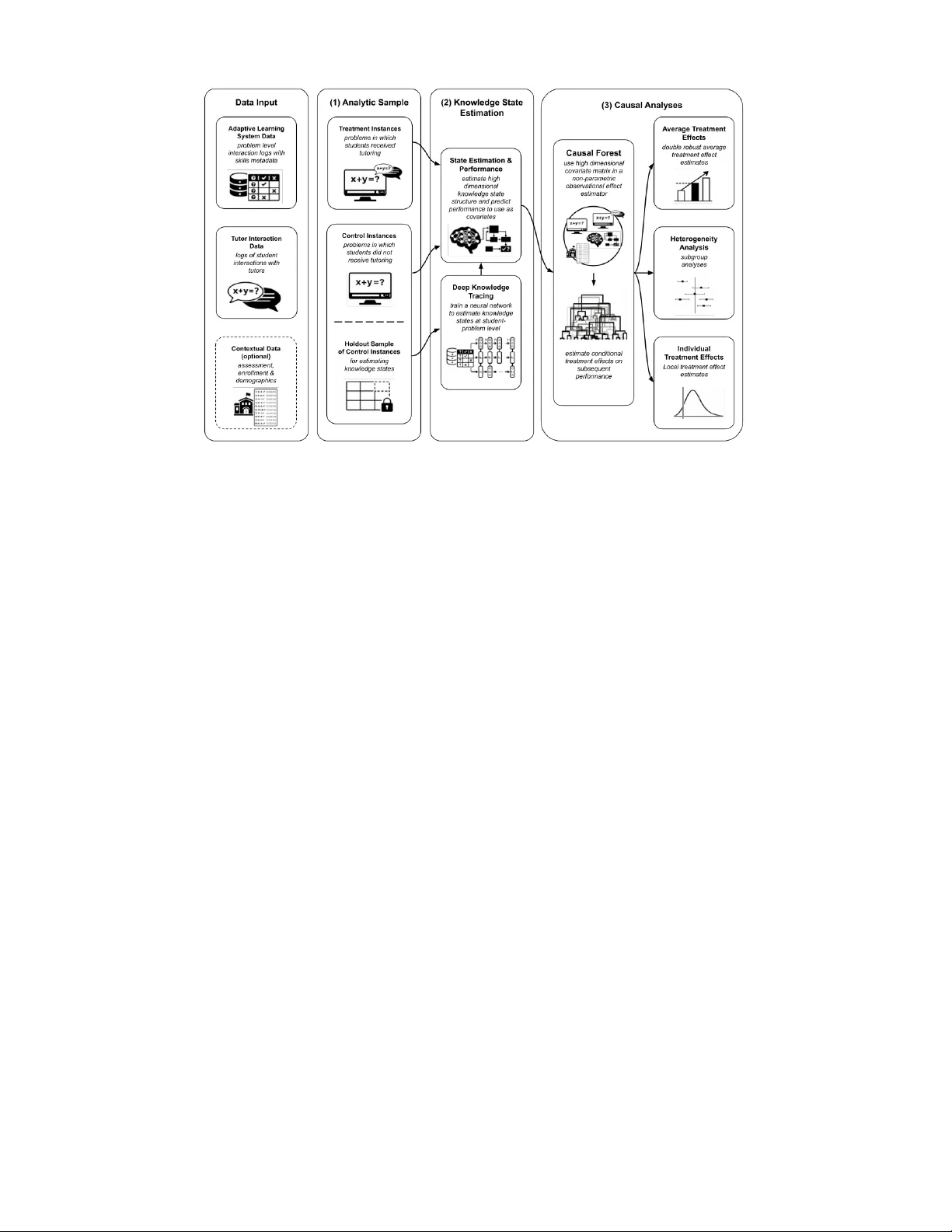

Kirk Vanacore (Cornell University, kpv27@cornell.edu), Danielle R Thomas (Carnegie Mellon University, dchine@andrew.cmu.edu), Digory Smith (Eedi, digory.smith@eedi.com), Bibi Groot (Eedi, bibi.groot@eedi.com), Justin Reich (Massachusetts Institute of Technology, jreich@mit.edu), Rene Kizilcec (Cornell University, kizilcec@cornell.edu)

ABSTRACT

This paper introduces a scalable causal inference framework for estimating the immediate, session-level effects of on-demand human tutoring embedded within adaptive learning systems. Because students seek assistance at moments of difficulty, conventional evaluation is confounded by self-selection and time-varying knowledge states. We address these challenges by integrating principled analytic sample construction with Deep Knowledge Tracing (DKT) to estimate latent mastery, followed by doubly robust estimation using Causal Forests. Applying this framework to over 5,000 middle-school mathematics tutoring sessions, we find that requesting human tutoring increases next-problem correctness by approximately 4 percentage points and accuracy on the subsequent skill encountered by approximately 3 percentage points, suggesting that the effects of tutoring have proximal transfer across knowledge components. These effects are robust to various forms of model specification and to potential unmeasured confounders. Notably, they exhibit significant heterogeneity across sessions and students, with session-level effect estimates ranging from −20.25 pp to +19.91 pp. Our follow-up analyses suggest that typical behavioral indicators, such as student talk time, do not consistently correlate with high-impact sessions. Furthermore, treatment effects are larger for students with lower prior mastery and slightly smaller for low-SES students.
This framework offers a rigorous, practical template for the evaluation and continuous improvement of on-demand human tutoring, with direct applications for emerging AI tutoring systems.

Keywords

Tutoring, Adaptive Learning Systems, Causal Inference.

1. INTRODUCTION

Tutoring is widely recognized as one of the most effective educational interventions, particularly for students struggling academically [11, 16, 24, 22]. However, recent large-scale evidence suggests that the magnitude of K-12 tutoring effects varies widely across contexts, populations, and implementations, complicating efforts to generalize findings and guide policy decisions [21]. Much prior work has focused on "high-dosage" tutoring, which is typically defined as sustained, scheduled sessions delivered multiple times per week [33]. However, a growing class of interventions provides on-demand tutoring: brief support intended to deliver instruction precisely at moments of student difficulty [10, 41]. These interventions are increasingly embedded within adaptive learning platforms and are delivered by human tutors or AI-based agents [30, 41].

These embedded tutoring systems generate rich, fine-grained interaction data, creating unprecedented opportunities for continuous evaluation and improvement. However, they also introduce a fundamental methodological problem: students self-select into tutoring at moments of struggle, creating severe time-varying confounding that undermines naive observational comparisons. As a result, despite the rapid growth of embedded tutoring, the field lacks scalable methods for rigorously estimating the causal impact of individual tutoring interactions in real-world learning platforms.

This paper addresses this gap by introducing a scalable causal inference framework for estimating the effect of on-demand tutoring within an adaptive mathematics learning platform.
Rather than evaluating entire programs or long-term interventions, the framework is designed to estimate the immediate causal effect of individual tutoring interactions on subsequent student performance. Our proposed framework integrates three components into a unified pipeline: (1) principled construction of analytic samples to approximate counterfactual comparisons, (2) latent knowledge state estimation using Deep Knowledge Tracing (DKT; [29]) to address time-varying confounding, and (3) estimation of conditional average treatment effects for local, individual tutoring sessions using Generalized Random Forests (Causal Forests; [3]). In combination, these components provide a practical template for estimating average treatment effects and effect heterogeneity from large-scale learning interaction logs.

We further demonstrate various robustness tests for this framework, showing that the pipeline produces consistent estimates of the tutoring effects with and without contextual variables from outside of the platform (e.g., standardized assessments and demographic variables). Our framework addresses central challenges in observational studies of educational interventions, including self-selection into treatment, unmeasured baseline ability that changes as students learn, and treatment effect heterogeneity. Furthermore, it provides opportunities to better understand when, for whom, and why tutoring is effective. Finally, this method could be used to estimate outcomes for evaluating AI models for tutoring.

Applying our framework to data from an embedded tutoring system, we find that requesting tutoring has a positive impact on immediate performance, increasing the likelihood that students answer the next problem correctly by an average of 4.01 percentage points (pp).
We further find that this effect extends to near transfer of skills: tutoring increases the probability of answering the first problem on the subsequent skill correctly by 2.73 pp. However, there is substantial variability in these effects, with local effect estimates ranging from −20.25 pp to +19.91 pp for immediate performance and from −23.60 pp to +47.70 pp for near transfer. Students with lower estimated prior knowledge tended to benefit more from tutoring, although this relationship was slightly attenuated for lower-income students. We conclude by discussing how causal approaches of this kind can help researchers and practitioners better understand and evaluate the mechanisms that drive effective on-demand tutoring, ultimately informing the design of improved human and AI tutoring systems.

2. BACKGROUND

2.1 Efficacy and Heterogeneity in Tutoring Interventions

Tutoring is widely recognized as one of the most effective academic interventions for improving student learning outcomes [11, 16, 24]. Comprehensive meta-analyses have found that tutoring consistently yields significant positive effects on student learning [22, 24]. These effects hold across grade levels and subject areas, often outperforming other educational interventions such as class-size reduction or extended school days [16, 24]. However, the efficacy of tutoring is rarely uniform; effects are often heterogeneous across different implementations [24]. Impact also varies significantly with student characteristics such as prior achievement and socioeconomic status [11]. Although tutoring often targets lower-performing students to close achievement gaps [16], other studies on voluntary, on-demand models suggest that students with higher baseline engagement or fewer structural barriers are sometimes more likely to access and benefit from support [2, 10].
Understanding this heterogeneity is crucial to determine whether specific tutoring interventions narrow achievement gaps or inadvertently widen them due to differential uptake.

The magnitude of tutoring effects is also inextricably linked to the implementation model. Much of the literature advocates for "high-dosage" tutoring, typically defined as sustained, scheduled sessions occurring three or more times per week, as a primary driver of efficacy [22, 24]. However, scaling such intensive models presents logistical and financial challenges. Consequently, many Adaptive Learning Systems (ALS) have adopted "on-demand" or "just-in-time" models, where brief support is triggered by students making specific errors rather than by a fixed schedule [30, 38, 41]. Although scalable, these embedded interactions complicate the traditional definition of dosage, as exposure to treatment becomes a function of student agency and immediate need rather than administrative assignment.

2.2 Integrating Human Tutoring in Adaptive Learning Systems

To address the scalability and cost constraints of high-dosage human tutoring, recent approaches have integrated human support directly into ALS. "Hybrid" or "human-in-the-loop" models aim to combine the personalized, immediate feedback of AI-driven software with the motivational and complex pedagogical support of human tutors [6, 38]. Research suggests that human tutoring can enhance the benefits of ALS [15]. For instance, human tutors guided by real-time dashboards to intervene when students struggle yield statistically significant additional gains in time-on-task and skill proficiency within an ALS, relative to students working in an ALS alone [15]. These hybrid approaches seem to benefit lower-performing students more than higher-performing ones, suggesting that human intervention may most benefit the students who most need it [38].
Integrated tutoring models often take the form of "on-demand" chat support embedded within the learning environment. In these settings, the ALS identifies a knowledge gap or a specific misconception, and a human tutor enters the loop to provide targeted scaffolding [18]. Evaluations of such systems have shown that students who engage with this hybrid support demonstrate higher learning gains and knowledge transfer than those using the ALS in isolation [18, 41]. Furthermore, interventions explicitly designed to combine human tutoring with ALS personalization have been shown to nearly double math learning gains compared to control groups, providing a scalable mechanism to promote educational equity for marginalized students [6]. By offloading rote instruction to the ALS and reserving human capital for high-value interactions, these systems offer a promising pathway to deliver effective tutoring at scale [6, 38, 42].

2.3 Methodological Challenges in Estimating On-Demand Effects

Although the efficacy of tutoring is well-documented, estimating the causal impact of on-demand help within adaptive systems presents unique methodological hurdles. Unlike scheduled "high-dosage" tutoring, where attendance is often mandated or fixed, "on-demand" support is driven by student agency. This introduces a specific form of confounding: students are more likely to ask for help precisely when they are least likely to succeed without it [2, 33]. Consequently, naive comparisons between tutored and non-tutored problem attempts often yield negative correlations, reflecting the student's struggle rather than the intervention's failure.

To address this, prior Educational Data Mining (EDM) research has largely relied on two approaches: experiments/randomized controlled trials (RCTs) and observational matching strategies.
While RCTs remain the gold standard, they are often implemented at the school or student level to evaluate general program access rather than specific interactions [10, 34, 41]. Randomizing individual help requests, which may require denying help to a struggling student for the sake of experimental control, is often ethically prohibitive and disruptive to the learning experience. Even wait-list designs, in which students are randomly assigned to receive help right away or later, remain disruptive. As a result, granular, problem-level insights must often be derived from observational data. In observational settings, researchers have traditionally employed propensity score matching to balance treatment and control groups on observed covariates such as prior achievement, demographics, and topic difficulty [6, 23]. However, standard matching approaches typically rely on static or slowly changing variables (e.g., pre-test scores, diagnostic assessments). These measures fail to capture the highly dynamic, time-varying nature of a student's knowledge state during a learning session. A student's decision to ask for help is often a function of immediate confusion or a specific misconception, which static or slowly changing covariates cannot detect.

More recent work has attempted to incorporate dynamic measures of mastery into causal estimation. Approaches such as the "Rebar" and "ReLOOP" methods have demonstrated that using predictions from high-dimensional models as covariates can reinforce matching estimators and reduce bias [36, 26]. These methods allow for more precise effect estimation in the context of RCTs but are underutilized in observational studies where conditions are not randomized.
A related significant yet underdeveloped area in Educational Data Mining (EDM) is the integration of knowledge tracing (KT) into observational causal inference frameworks to account for time-varying confounding rooted in a student's historical performance [1, 25, 27]. In particular, DKT extends KT by using recurrent neural networks to generate high-dimensional latent states that capture complex temporal dependencies in student learning [29]. However, even with these richer representations, standard regression or linear adjustments may not fully account for the complex, non-linear heterogeneity in how different students respond to tutoring.

Finally, most causal studies in EDM and related fields focus on average treatment effects [36, 26] or subgroup analyses [28, 30, 19, 20]. A growing body of work also examines heterogeneity by how students interact with learning environments [37, 39]. However, the field would benefit from methods that produce local, session-level estimates of effectiveness. These estimates could be leveraged to flexibly explore the circumstances under which ALS elements, like on-demand tutoring, are most effective, including elements of heterogeneity based on how students respond.

3. CURRENT STUDY

The current study proposes an observational approach for evaluating tutoring sessions embedded within digital learning platforms by estimating their causal impact on students' subsequent performance. The approach integrates DKT-based estimates of students' latent knowledge states into a causal inference framework that combines causal forests for counterfactual outcome estimation with augmented inverse probability weighting to obtain doubly robust, session-level treatment effects.
Together, these methods are designed to yield unbiased estimates of individual tutoring session effectiveness and enable downstream analyses that characterize heterogeneity in tutoring impacts across students, contexts, and instructional conditions. We employ this framework on data from an ALS¹ in which students could access on-demand human tutoring through brief text chats.

4. CONTEXT

4.1 Data

The data used for this analysis came from the treatment arm of a multi-year randomized controlled trial (RCT) designed to estimate the causal impact of Eedi on middle school mathematics achievement. Schools were randomly assigned to treatment or control conditions, with the treatment group receiving access to Eedi for two full academic years while control schools continued with business-as-usual instruction. Our current sample is from the 12 schools and 2,585 students who had access to and used Eedi during the two-year study.

4.2 Adaptive Learning System

This study was run in the ALS Eedi, which is a digital mathematics platform designed to support middle school students by identifying and addressing misconceptions in real time through diagnostic assessment. This ALS uses a large bank of carefully engineered multiple-choice questions, where each incorrect option maps to a specific, well-documented mathematical misconception. This design allows Eedi to pinpoint gaps in understanding at a fine grain and respond with targeted support, including short video explanations, structured practice, and follow-up diagnostic questions. Teachers can deploy the system flexibly in lessons or as homework, using its analytics dashboards to see patterns of misunderstanding across individuals and classes and to adapt instruction accordingly. Students work independently on quizzes of five questions assigned by their teachers.
Based on their answers, Eedi responds adaptively with a sequence of hints, videos, and fluency practice to help students overcome misconceptions. Evidence from a large, school-level randomized controlled trial suggests that the platform can produce modest but meaningful improvements in mathematics attainment when implemented over an academic year [?].

4.3 On-Demand Tutoring in Eedi

Within Eedi, human tutoring was implemented as on-demand, synchronous, one-to-one chat-based support integrated directly into students' ongoing problem-solving activity. At any point, students could initiate a live session that immediately connected them with an expert tutor. Tutors received structured context at the start of each session, including the problem text, the student's answer, and the associated misconception. This context allowed them to provide targeted, dialog-based support focused on diagnosing and resolving misunderstandings before students returned to independent work.

The intervention involved seventeen tutors, each with at least three years of teaching experience and specific training in the Eedi environment. Sessions were designed to be brief and task-focused, enabling students to move fluidly between independent practice and individualized help. Tutors communicated exclusively through text chat and used a Socratic approach that emphasized questioning and guided reasoning rather than direct answer-giving. Sessions concluded once students demonstrated sufficient understanding or chose to resume their lesson, positioning tutoring as a scalable, just-in-time complement to the broader Eedi learning environment.

¹ The name of this system is omitted for review.

Table 1 summarizes the characteristics of the tutoring sessions. Tutoring sessions were relatively brief, with a median duration of 4.2 minutes and 14 messages exchanged.
Tutors contributed approximately 60% of messages on average, consistent with their role in guiding the dialogue.

Table 1: Tutoring Session Characteristics

| Metric | Mean | Median | Q25 | Q75 |
|---|---|---|---|---|
| Total Messages | 17.7 | 14 | 9 | 23 |
| Tutor Messages | 10.5 | 8 | 5 | 14 |
| Student Messages | 7.2 | 6 | 3 | 9 |
| Duration (minutes) | 5.4 | 4.2 | 2.4 | 6.9 |

5. METHODS: CAUSAL FRAMEWORK FOR LOCAL EFFECT ESTIMATION

Our causal inference framework consists of three interconnected stages, illustrated in Figure 1. (1) First, we construct an analytic dataset that links each tutoring intervention to its immediate outcome while defining an appropriate control group. (2) Second, we train a DKT model on a held-out sample of students to generate latent representations of student knowledge for both the treatment and control samples. (3) Third, we apply a Causal Forest estimator that jointly estimates propensity scores and treatment effects using the DKT-derived hidden states and predicted probabilities as covariates. We validate our findings through a series of robustness checks that incorporate contextual covariates (e.g., school assignment, standardized assessments, and demographics), alternative sample configurations to address potential participation bias, and a placebo outcome test. This framework addresses three central challenges in observational tutoring research: confounding from baseline knowledge differences, self-selection into treatment, and heterogeneity in treatment effects across students.

5.1 Analytic Sample Construction

Our method requires three samples: treatment, control, and hold-out. Table 2 presents the sample breakdown. When a student received tutoring on a problem, that instance entered the treatment sample. The next problem the student attempted without help served as our immediate performance outcome, and the next problem on a new skill served as our near transfer outcome.
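The two outcome definitions above can be sketched as a small lookup over a student's ordered attempt history. This is a minimal illustration rather than the study's code: the `Attempt` record, its field names, and the `link_outcomes` helper are hypothetical, and "a new skill" is interpreted here as the first later unassisted attempt whose skill differs from that of the tutored problem.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Attempt:
    """One problem attempt in a student's history (hypothetical schema)."""
    problem_id: str
    skill_id: str
    correct: bool
    tutored: bool  # True if the student received tutoring on this attempt


def link_outcomes(
    attempts: List[Attempt], i: int
) -> Tuple[Optional[bool], Optional[bool]]:
    """Return (immediate, near_transfer) correctness for the tutored attempt at index i.

    Either value is None when no qualifying later attempt exists, in which
    case the treated observation would lack that outcome.
    """
    treated = attempts[i]
    immediate: Optional[bool] = None
    near_transfer: Optional[bool] = None
    for later in attempts[i + 1:]:
        if later.tutored:
            continue  # outcome problems must be attempted without help
        if immediate is None:
            immediate = later.correct  # next unassisted problem
        if near_transfer is None and later.skill_id != treated.skill_id:
            near_transfer = later.correct  # next unassisted problem on a different skill
        if immediate is not None and near_transfer is not None:
            break
    return immediate, near_transfer


# Usage: a tutored attempt followed by one same-skill and one new-skill problem.
history = [
    Attempt("p1", "fractions", False, True),
    Attempt("p2", "fractions", True, False),
    Attempt("p3", "ratios", False, False),
]
immediate, near_transfer = link_outcomes(history, 0)
```

Returning `None` rather than a default keeps treated observations without a subsequent attempt easy to filter out before estimation.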
To ensure comparability, only problems for which at least one student received tutoring were included in the causal analysis. In addition, problem attempts from treated students in which they did not receive tutoring were excluded from the primary analysis. Treated observations were also excluded when no subsequent problem attempt was available to serve as an outcome.

Table 2: Analytic Sample Construction from Raw Data to Final Analytic Sample

| Sample | Students | Problem Attempts | Unique Problems |
|---|---|---|---|
| Original Usage Data | 2,585 | 852,274 | 8,540 |
| Treatment Sample: All Treatment Usage | 1,234 | 465,619 | 6,803 |
| Treatment Sample: Excluded | (13) | (460,456) | (5,337) |
| Treatment Sample: Final Analytic Treated | 1,221 | 5,163 | 1,466 |
| Control Sample: All Control Usage | 1,351 | 386,655 | 8,017 |
| Control Sample: DKT Training Holdout | 676 | 197,332 | 7,485 |
| Control Sample: Control Analysis | 675 | 189,323 | 5,884 |
| Control Sample: Excluded | (10) | (97,874) | (4,418) |
| Control Sample: Final Analytic Control | 665 | 91,449 | 1,466 |
| Total Analytic Sample | 1,886 | 96,612 | 1,466 |

To avoid potential Stable Unit Treatment Value Assumption (SUTVA) violations [35], only students who never received tutoring were eligible for the control sample, as prior exposure to tutoring could influence later outcomes. We relax this restriction in a robustness check to assess whether this sample construction meaningfully affects results (see Section 5.4). Students who were never treated were randomly divided between the control and hold-out samples. The hold-out sample was used exclusively for DKT estimation (Section 5.2), while the control sample was reserved for causal effect estimation. The analytic control sample, therefore, consisted of students who (a) never received tutoring and (b) attempted at least one of the same problems as students in the treatment sample. Because not all problems overlapped with tutoring interventions, control-group attempts on non-overlapping problems were excluded.
Students who lacked any problem attempts overlapping with the treatment sample were removed from the final analytic control sample.

5.2 Knowledge State Estimation

We implemented a DKT model using a Long Short-Term Memory (LSTM) architecture to generate student knowledge estimates used as pre-treatment covariates in the causal analysis [29]. The model uses a student's sequential history of question attempts to predict the probability of correctly answering subsequent questions. Critically, the model maintains a latent hidden state that encodes information from prior performance across time, allowing it to summarize a student's evolving knowledge state even when past interactions involve different constructs than the one being predicted.

The use of DKT serves a substantive causal purpose. Student knowledge is a key confounder in this setting: students are more likely to seek or be offered tutoring when they are struggling, and baseline knowledge strongly predicts future performance. By conditioning on a rich, multidimensional representation of students' latent knowledge states, we aim to reduce bias arising from time-varying confounding in tutoring assignment and outcome generation. Furthermore, DKT can provide specific point-in-time estimates of baseline knowledge that account for recent learning, which may be more robust than global pretest measures.

Figure 1: Causal Framework for Estimating Heterogeneous Effects of On-Demand Tutoring. The pipeline integrates ALS and tutor interaction logs to estimate student knowledge states and causal effects. These estimates drive downstream insights into effect variability. While the framework functions independently of contextual data, we incorporate it here to test robustness and identify variables for heterogeneity analysis.

We implemented the DKT model in PyTorch following the architecture first introduced by Piech et al. [29].
The training data included 197,332 problem attempts across 7,485 problems representing 1,598 distinct knowledge components. Item embeddings were passed to an LSTM with a hidden dimension of 50, which updated its hidden state $h_t$ at each time step. The resulting 50-dimensional hidden state vector $(H_1, \ldots, H_{50})$ was used as the primary knowledge representation in our causal analysis. The output layer applied a sigmoid activation function to produce probability estimates for the next construct. The model achieved an acceptable AUC of 0.72 on the analytic control sample (which was not used for training), consistent with typical performance reported for DKT models [1].

5.2.1 Feature Extraction

For each observation in the treatment and control samples, we extracted the 50-dimensional LSTM hidden state calculated just prior to the intervention. We also use the predicted performance on the current problem, in which the student could receive tutoring support, and on the subsequent problem, which serves as an outcome. Including these as covariates in causal analysis is modeled after the Rebar method, which has been shown to produce precise, unbiased effect estimates by applying models generated from data outside of the treatment and control sample to those samples [36].

5.3 Causal Forest Estimation

We estimated treatment effects using Generalized Random Forests (GRF) as implemented in the `grf` R package [4]. We chose to incorporate causal forests into the pipeline because of their nonparametric flexibility and their ability to model high-dimensional treatment effect heterogeneity without functional form assumptions [3].

Causal forests offer three other advantages that are particularly relevant in our setting. First, they have been shown to perform well under targeted selection, when treatment assignment depends on predictors of the outcome itself [17].
In the context of on-demand tutoring, students are more likely to seek or receive tutoring precisely when they anticipate poor performance absent intervention, rendering treatment assignment endogenous and outcome-driven. Causal forests are explicitly designed to accommodate this form of selection. Second, a key feature is honesty, in which separate subsamples are used for tree construction and treatment effect estimation. This sample-splitting reduces adaptive overfitting and supports valid asymptotic inference by producing out-of-sample counterfactual predictions analogous to leave-one-out estimation [43]. Third, causal forests directly estimate Conditional Average Treatment Effects (CATEs) as flexible functions of a high-dimensional covariate space. Given the richness of our covariates, including the 50-dimensional DKT hidden state representation, this approach enables estimation of fine-grained, unit-specific conditional effects and supports downstream analyses of treatment effect heterogeneity across students and contexts.

5.3.1 Model Specification

The covariate matrix $X$ included 50 DKT hidden state dimensions, cumulative historical accuracy, and DKT-based probability estimates of correct responses. The forest was trained with 500 trees, honesty enabled, and hyperparameters selected via cross-validation. We specified students as clusters in the causal forest, implementing cluster-robust estimation such that all observations from a given student are assigned to the same subsample during tree construction. As a result, treatment effect estimates account for within-student dependence, and predictions for each student are generated without using that student's own data.
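The cluster constraint described above, in which every observation from one student lands in the same subsample, can be illustrated with a small grouping routine. This is a simplified sketch of the idea only and does not reproduce how `grf` draws its subsamples; the function name `assign_cluster_folds` and its parameters are hypothetical.

```python
import random
from typing import Hashable, List, Sequence


def assign_cluster_folds(
    student_ids: Sequence[Hashable], n_folds: int = 2, seed: int = 0
) -> List[int]:
    """Return one fold index per observation, constant within each student.

    Students (clusters), not individual observations, are shuffled and dealt
    into folds, so no student's attempts are split across subsamples.
    """
    if n_folds < 1:
        raise ValueError("n_folds must be at least 1")
    # Sort first so the result depends only on the seed, not on set ordering.
    unique_students = sorted(set(student_ids), key=str)
    rng = random.Random(seed)
    rng.shuffle(unique_students)
    fold_of_student = {s: k % n_folds for k, s in enumerate(unique_students)}
    return [fold_of_student[s] for s in student_ids]


# Usage: six observations from four students, split into two subsamples.
ids = ["s1", "s1", "s2", "s3", "s2", "s4"]
folds = assign_cluster_folds(ids, n_folds=2, seed=1)
```

Splitting at the student level is what allows a prediction for a given student to be formed from trees that never saw that student's data.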
5.3.2 Estimation Engine: Robinson Decomposition and Propensity Modeling

We estimate heterogeneous treatment effects using Causal Forests, which rely on the Robinson decomposition (also known as a residual-on-residual or orthogonalization strategy). Let $Y_i$ denote the observed outcome for unit $i$, $Z_i \in \{0, 1\}$ the treatment indicator, and $X_i$ the vector of pre-treatment covariates. For each outcome definition (next-problem correctness and next-skill correctness), the procedure first fits two functions using separate regression forests: the conditional mean outcome model $\hat{m}(X) = \mathbb{E}[Y \mid X]$ and the propensity score model $\hat{e}(X) = P(Z = 1 \mid X)$. These estimates are then used to construct orthogonalized (residualized) variables:

$$\tilde{Y}_i = Y_i - \hat{m}(X_i), \qquad \tilde{Z}_i = Z_i - \hat{e}(X_i), \tag{1}$$

where $\tilde{Y}_i$ represents the deviation of the observed outcome from its covariate-expected value, and $\tilde{Z}_i$ represents the deviation of the observed treatment assignment from its predicted probability. This transformation removes outcome variation explained by baseline covariates and treatment variation explained by selection on observables, yielding Neyman-orthogonal signals for effect estimation. The Causal Forest is then trained to model the relationship between $\tilde{Y}_i$ and $\tilde{Z}_i$ as a function of $X_i$, which isolates the causal signal after adjusting for baseline outcome differences ($\hat{m}$) and treatment selection ($\hat{e}$).

Propensity scores are therefore estimated internally as part of the Causal Forest procedure via a regression forest for $\hat{e}(X)$. Valid estimation requires overlap (positivity), meaning each unit must have a non-negligible probability of both treatment and control conditional on $X$. In our analytic sample, estimated propensity scores ranged from 0.03 to 0.89, with most observations falling between 0.05 and 0.20.
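The residual-on-residual idea in Equation 1 can be made concrete with a deliberately simplified sketch that assumes a single constant treatment effect. A Causal Forest instead solves a locally weighted version of this regression so that the effect can vary with $X_i$. The nuisance estimates `m_hat` and `e_hat` are taken as given inputs here (in the paper they come from regression forests), and the function names are hypothetical.

```python
from typing import List, Sequence


def residualize(values: Sequence[float], predictions: Sequence[float]) -> List[float]:
    """Subtract a model's predictions from observed values, element-wise."""
    return [v - p for v, p in zip(values, predictions)]


def robinson_constant_effect(
    y: Sequence[float],
    z: Sequence[float],
    m_hat: Sequence[float],
    e_hat: Sequence[float],
) -> float:
    """Residual-on-residual estimate of a constant treatment effect.

    Regresses Y - m_hat(X) on Z - e_hat(X) without an intercept:
    tau = sum(Y_res * Z_res) / sum(Z_res ** 2).
    """
    y_res = residualize(y, m_hat)
    z_res = residualize(z, e_hat)
    denom = sum(r * r for r in z_res)
    if denom == 0:
        raise ValueError("no residual treatment variation; overlap fails")
    return sum(a * b for a, b in zip(y_res, z_res)) / denom


# Usage with noise-free toy data where the true effect is 0.04.
tau_true = 0.04
e_hat = [0.1, 0.2, 0.1, 0.2]        # assumed propensity scores
z = [1, 0, 0, 1]                    # observed treatment
baseline = [0.5, 0.6, 0.5, 0.6]     # outcome level absent treatment
y = [b + tau_true * zi for b, zi in zip(baseline, z)]
m_hat = [b + tau_true * e for b, e in zip(baseline, e_hat)]  # E[Y | X]
tau_hat = robinson_constant_effect(y, z, m_hat, e_hat)
```

The zero-denominator guard mirrors the overlap requirement: if treatment is perfectly predicted by the covariates, no residual treatment variation remains from which to estimate an effect.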
Appendix A.1 presents the full propensity score distribution and associated overlap and robustness diagnostics.

5.3.3 Effect Estimates

We report three types of effects. The Conditional Average Treatment Effect (CATE) provides unit-level predictions, where $\tau(x)$ represents the difference in expected potential outcomes for an individual with covariates $x$. Specifically, it captures the contrast between the expected outcome if the student receives tutoring, $Y(1)$, and the expected outcome if the student does not receive tutoring, $Y(0)$:

$$\tau(x) = \mathbb{E}[Y(1) - Y(0) \mid X = x] \tag{2}$$

The Average Treatment Effect (ATE) is the population-level effect, computed using Augmented Inverse Probability Weighting (AIPW) scores:

$$\hat{\tau}_{\mathrm{ATE}} = \frac{1}{n} \sum_{i=1}^{n} \left[ \hat{\tau}(X_i) + \frac{Z_i - \hat{e}(X_i)}{\hat{e}(X_i)\,\bigl(1 - \hat{e}(X_i)\bigr)} \bigl( Y_i - \hat{m}_{Z_i}(X_i) \bigr) \right] \tag{3}$$

where $\hat{m}_{Z_i}(X_i)$ denotes the predicted outcome for unit $i$ under its observed treatment condition. The Average Treatment Effect on the Treated (ATT) is determined by restricting this calculation to treated units and represents the average effect for those who chose the treatment. The use of AIPW makes the ATE and ATT doubly robust, as they combine the outcome predictions with propensity weighting to correct for bias [13]. Thus, even if one of the outcome model or the propensity score model is misspecified, the estimates remain consistent and asymptotically unbiased, provided the other is correctly specified.

5.3.4 Heterogeneity Analysis

To test for moderators of the treatment effect, we treat the estimated unit-level CATEs ($\hat{\tau}_i$) as the outcome variable in a multilevel linear mixed model (MLM):

$$\hat{\tau}_i = \beta_0 + \beta_1 \cdot \text{Moderator} + \mu_s + \epsilon_i \tag{4}$$

where $\mu_s$ accounts for student-level clustering. This allows us to determine (via significance testing) which moderators explain the variability in treatment effects.

5.4 Robustness and Sensitivity Checks

To assess whether the model is robust to misspecification and potential unobserved confounders, we conducted a series of robustness checks.
Our first set of robustness checks determines whether adding covariates or changing the sample configuration influences the results.

5.4.1 External Variables Added

First, we incorporated additional covariates not derived from platform usage. These included standardized pretest scores of mathematical knowledge (NWEA MAP RIT), which provide an external measure of baseline ability independent of platform performance; a gender indicator with three categories (male, female, or neither); and Pupil Premium Funding (PPF) eligibility, a government designation for students from disadvantaged backgrounds that serves as a proxy for socioeconomic status. We also included school identifiers.

The goal of this analysis was to test whether the estimated Average Treatment Effect (ATE) remains stable after adding external covariates, which would suggest that the DKT-based features may adequately capture relevant confounding and that the estimated treatment effect is not driven by unobserved demographic or institutional factors.

5.4.2 Washout Sample Included

The second robustness test evaluates potential selection bias arising from defining the control group as students who have never requested tutoring. These students may differ systematically from treated students in ways not fully captured by DKT features or other observed covariates. Although restricting the control group in this way helps prevent SUTVA violations (see Section 5.1), it may introduce confounding if never-treated students are fundamentally different from those who seek tutoring. To examine this possibility, we re-estimate the model using an expanded control group that includes students who previously received tutoring, provided that the prior session did not occur within the previous two skills (i.e., a washout sample).
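The two control-group definitions can be expressed as a simple eligibility rule. The sketch below is one reading of the description above, not the authors' code: the `Opportunity` record, its field names, and the interpretation of "not within the previous two skills" as "more than two skills ago" are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Opportunity:
    """One problem attempt at which tutoring could have been requested (hypothetical schema)."""
    student_id: str
    requested_tutoring: bool
    # Number of skills completed since the student's last tutoring session;
    # None if the student has not been tutored before this point.
    skills_since_last_session: Optional[int]


WASHOUT_SKILLS = 2  # assumed reading of "not within the previous two skills"


def eligible_control(o: Opportunity, include_washout: bool) -> bool:
    """Return True if this opportunity may serve as a control observation."""
    if o.requested_tutoring:
        return False  # treated observations are never controls
    if o.skills_since_last_session is None:
        return True  # never tutored: eligible under both definitions
    # Previously tutored: eligible only in the washout specification,
    # and only once the washout window has passed.
    return include_washout and o.skills_since_last_session > WASHOUT_SKILLS


never = Opportunity("s1", False, None)
recent = Opportunity("s2", False, 1)
washed_out = Opportunity("s3", False, 5)
for o in (never, recent, washed_out):
    print(o.student_id, eligible_control(o, False), eligible_control(o, True))
```

Under this reading, the washout specification only adds previously tutored students whose last session is far enough in the past, which enlarges the control pool while limiting carryover.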
The washout specification allows us to account for potential carryover effects while reducing selection bias by increasing overlap between treatment and control observations.

5.4.3 Pre-Intervention Placebo Test

Next, we conducted a placebo test using an outcome for which no causal effect is possible: student performance on a problem that occurs before the tutoring intervention. Placebo or "negative control" outcomes are widely used in causal inference as a diagnostic tool for detecting residual confounding or model misspecification. If the identification strategy is valid, estimated treatment effects on such outcomes should be null, since they temporally precede treatment and cannot be causally influenced by it [12]. We chose a problem three items before the intervention as our placebo outcome to avoid any interference with the intervention. Finding no significant effect on this pre-treatment outcome would therefore provide additional evidence that the method is not simply capturing baseline differences between students who choose tutoring and those who do not, but is instead identifying a genuine causal effect of tutoring.

5.4.4 Additional Checks

Finally, we employ two additional robustness checks that are common in quasi-experimental analyses; both are presented in detail in Appendix A. We tested the sensitivity of our results to propensity score model specification and to trimming of the distribution tails to ensure common support [9]. To quantify the robustness of our estimates to omitted variable bias, we calculate the Robustness Value (RV) using the sensemakr framework [8]. The RV represents the minimum strength of association that an unobserved confounder would need to have with both the treatment and the outcome (measured in terms of partial $R^2$) to reduce the estimated treatment effect to zero.
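For reference, the RV has a closed form in the linear-regression setting of [7]: with partial Cohen's $f = |t| / \sqrt{\mathrm{df}}$ and $f_q = q f$, $RV_q = \tfrac{1}{2}\bigl(\sqrt{f_q^4 + 4 f_q^2} - f_q^2\bigr)$. The Python sketch below implements that formula only; how it was applied to the Causal Forest estimates is not specified here, and the t-statistic and degrees of freedom in the usage line are hypothetical.

```python
import math


def robustness_value(t_stat: float, dof: float, q: float = 1.0) -> float:
    """Robustness Value for a regression coefficient (linear-model closed form).

    q = 1 asks how strong a confounder must be to reduce the estimate to zero;
    smaller q asks about a reduction of the estimate by the fraction q.
    """
    f_q = q * abs(t_stat) / math.sqrt(dof)  # scaled partial Cohen's f
    return 0.5 * (math.sqrt(f_q**4 + 4 * f_q**2) - f_q**2)


# Hypothetical inputs, for illustration only.
rv = robustness_value(t_stat=5.0, dof=5000)
print(f"RV_q=1 = {rv:.3f}")
```

An RV of, say, 0.07 would mean that a confounder explaining 7% of the residual variance of both the treatment and the outcome would be enough to drive the estimate to zero; weaker confounders could not.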
The RV allows us to benchmark the required strength of an unmeasured confounder against observed covariates such as prior mastery or student SES.

6. RESULTS

6.1 Treatment Effect Estimates

Table 3 presents the doubly robust average treatment effect estimates from the Causal Forest analysis, with p-values adjusted for multiple comparisons. We find a statistically significant positive effect of tutoring on immediate performance and near transfer. The estimated average treatment effect (ATE) of tutoring on next-problem correctness was 4.01 percentage points (pp) (95% CI = [2.51, 5.51], p < 0.001), and the estimated ATE on first-attempt accuracy on the next skill was 2.73 pp (95% CI = [1.12, 4.35]). The effects on the treated group (ATT) were also significant and similar in magnitude.

6.1.1 Robustness and Sensitivity

Table 3 also presents results from several robustness checks designed to evaluate whether specific modeling choices, such as covariate selection and sample construction, influence the stability of the effect estimates. The inclusion of external demographic covariates yielded a nearly identical ATE of 3.93 pp (95% CI = [2.49, 5.37]). This stability suggests that our Deep Knowledge Tracing (DKT)-derived features capture the requisite student heterogeneity, rendering the additional demographic data largely redundant for the purposes of confounding control. The specification that included the washout sample (in which the control group also contains students who were tutored in the past, but not within the previous two skills) produced a lower but still statistically significant estimate of 2.17 pp (95% CI = [1.17, 3.16]). The convergence and overlapping confidence intervals across these disparate design assumptions provide robust evidence for a consistent causal effect.
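As a rough consistency check on the reported intervals, one can back out the implied standard errors and p-values. The sketch below assumes the intervals are symmetric, unadjusted 95% Wald intervals and uses a hypothetical family size of five tests for the Bonferroni step, since the number of tests in the family is not stated in the text.

```python
from statistics import NormalDist

STD_NORMAL = NormalDist()
Z_95 = STD_NORMAL.inv_cdf(0.975)  # about 1.96


def se_from_ci(lower: float, upper: float) -> float:
    """Standard error implied by a symmetric 95% Wald interval."""
    return (upper - lower) / (2 * Z_95)


def two_sided_p(estimate: float, se: float) -> float:
    """Two-sided normal-approximation p-value."""
    return 2 * (1 - STD_NORMAL.cdf(abs(estimate) / se))


def bonferroni(p: float, n_tests: int) -> float:
    """Bonferroni-adjusted p-value, capped at 1."""
    return min(1.0, p * n_tests)


N_TESTS = 5  # hypothetical family size; not reported in the text

# (estimate, lower, upper) in percentage points, as reported for the ATE.
reported = {
    "next problem": (4.01, 2.51, 5.51),
    "next skill": (2.73, 1.12, 4.35),
}
results = {}
for name, (est, lo, hi) in reported.items():
    se = se_from_ci(lo, hi)
    p = two_sided_p(est, se)
    results[name] = (se, p, bonferroni(p, N_TESTS))
    print(f"{name}: SE = {se:.2f} pp, p = {p:.2g}, adjusted p = {results[name][2]:.2g}")
```

Under these assumptions the implied standard errors are a little under one percentage point, and both primary estimates remain significant after the adjustment.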
The robustness checks were replicated for the near-transfer outcome with similarly significant results.

The effect estimate for the placebo outcome, a measure theoretically unaffected by the treatment, was negative and non-significant; however, it is non-significant only after applying a Bonferroni correction to account for family-wise error. This result suggests that, if anything, students in the treatment group may be biased toward lower baseline performance; consequently, the observed positive treatment effects are unlikely to be artifacts of model-driven upward bias.

Two additional robustness tests are detailed in Appendix A.1. We used paired matching as a diagnostic tool for propensity score estimation, finding that a Random Forest propensity model achieved better covariate balance than linear specifications. Furthermore, we confirmed that trimming the tails of the propensity distribution to ensure stricter overlap did not significantly alter the treatment effect estimates.

Finally, to address the potential for omitted variable bias (e.g., unobserved student motivation), we conducted a formal sensitivity analysis using the sensemakr framework [7]. We calculated the Robustness Value ($RV_{q=1}$) required to reduce the estimated treatment effect to zero [8]. Our results indicate that an unmeasured confounder would need to be three times as predictive of both treatment assignment and the outcome as our most influential observed covariates to nullify the current findings.

6.2 Treatment Effect Heterogeneity

While the average effects are positive, we observe substantial heterogeneity in local treatment effects across intervention sessions. Figure 2 displays the distribution of local, session-level treatment effect estimates on students' immediate performance and near transfer. The CATE for immediate performance has a standard deviation of 3.36 pp and ranges from −20.25 pp to 19.91 pp. The CATE for near transfer has a standard deviation of 3.41 pp and ranges from −47.70 pp to 23.60 pp. Notably, there is a strong correlation between these effects (r = 0.69, p < 0.001), suggesting that the immediate performance CATE may be a good surrogate measure for near transfer; if a tutoring session has a positive effect on next-problem correctness, it is likely to have a positive effect on the student's ability to solve problems on other similar skills as well.

Table 3: Average treatment effect estimates in percentage points across different model specifications.

| Model Specification | ATE (95% CI) | ATT (95% CI) |
|---|---|---|
| Primary Specification | | |
| Immediate Performance (Next Problem) | 4.01*** (2.51, 5.51) | 3.98*** (2.43, 5.54) |
| Near Transfer (Next Skill) | 2.73** (1.12, 4.35) | 2.65* (0.99, 4.31) |
| Robustness Checks | | |
| External Variables Added (Next Problem) | 3.93*** (2.49, 5.37) | 4.71*** (2.92, 6.50) |
| Washout Sample Included (Next Problem) | 2.17*** (1.17, 3.16) | 2.76*** (1.91, 3.61) |
| Placebo Test (Pre-Intervention) | −1.67 (−3.10, −0.24) | −0.71 (−2.15, 0.74) |

Bonferroni-corrected significance: * p < 0.05, ** p < 0.01, *** p < 0.001

Figure 2: Distribution of Conditional Average Treatment Effects (CATE) on both Immediate Performance and Near Transfer. The solid black line indicates the ATE, while the dashed red line indicates zero effect.

6.3 Heterogeneity by Tutoring Session Characteristics

To illustrate how this method can be used to examine why some tutoring sessions are more effective than others, we analyzed three potential moderators: session length, proportion of student talk, and the number of prior tutoring sessions. We chose these moderators because each of them has been studied in more traditional tutoring environments. Longer and more frequent sessions are consistently pointed to as hallmarks of high-impact tutoring [33].
Eliciting student talk is often associated with higher quality tutoring sessions [5]. Notably, we view this as a preliminary analysis toward understanding what might drive effectiveness in on-demand tutoring, which will lead to future directions for this line of work.

Because these variables were non-normally distributed, each was divided into quartiles and compared using the heterogeneity model (Section 5.3.4) with Tukey HSD post-hoc tests (see footnote 2). Figure 3 presents the differences in effect size on immediate performance by quartile (quartile descriptive statistics and results tables are presented in Appendix C).

Footnote 2: We also ran the models with transformed variables and found similar results, but chose to present the results in terms of quartiles to ease interpretation.

Session length, measured by the number of messages sent between tutors and students, showed the clearest and most consistent pattern. Moderately long sessions (Q3) produced significantly larger effects than very short sessions (Q1; p < 0.001), indicating that brief interactions are often insufficient to support learning. Sessions in the longest quartile (Q4) also outperformed the shortest sessions (p < 0.05), but did not yield reliably greater benefits than moderately long sessions, suggesting diminishing returns beyond a certain point.

As a proxy for student talk, we used the percentage of total words in the session that were sent by the student. This measure of the proportion of student talk exhibited minimal and inconsistent associations with tutoring effectiveness. Only one comparison reached significance, indicating slightly lower effects in sessions with the highest levels of student talk (Q4 vs. Q2; p < 0.01), with all other contrasts non-significant. Students who sent between 14% and 20% of the words during the session had higher estimated effects than those who sent between 29.8% and 85.7%.
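The quartile comparison can be sketched descriptively in Python. This is only the binning and averaging step: the analysis described above additionally fits the mixed model of Section 5.3.4 and applies Tukey HSD tests, which are not reproduced here. The data values and the names `quartile_labels` and `mean_cate_by_quartile` are hypothetical.

```python
from statistics import mean, quantiles


def quartile_labels(values):
    """Assign each value to Q1..Q4 using the sample quartile cut points."""
    q1, q2, q3 = quantiles(values, n=4, method="inclusive")

    def label(v):
        if v <= q1:
            return "Q1"
        if v <= q2:
            return "Q2"
        if v <= q3:
            return "Q3"
        return "Q4"

    return [label(v) for v in values]


def mean_cate_by_quartile(moderator, cate):
    """Average session-level CATE within each moderator quartile."""
    groups = {}
    for lab, tau in zip(quartile_labels(moderator), cate):
        groups.setdefault(lab, []).append(tau)
    return {lab: mean(vals) for lab, vals in sorted(groups.items())}


# Hypothetical sessions: message counts and estimated CATEs (in pp).
messages = [2, 4, 6, 8, 10, 12, 14, 16]
cate_pp = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 5.0, 6.0]
by_quartile = mean_cate_by_quartile(messages, cate_pp)
print(by_quartile)
```

With these made-up values the mean effect rises from Q1 to Q3 and then flattens, the same qualitative shape as the diminishing-returns pattern reported for session length.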
The student-talk result contrasts with much of the work on student talk in tutoring, which often finds that getting students to engage is an important part of tutoring [5]. However, this analysis suggests that student talk may be less important in on-demand sessions that focus on specific problems.

The number of tutoring sessions that preceded the intervention session was strongly and positively associated with impact. Students with more previous sessions (Q3 and Q4) experienced substantially larger gains than first-time or infrequent users (Q1; both p < 0.001), with effect sizes increasing monotonically across quartiles. Taken together, these results suggest that tutoring is most effective when sessions are of at least moderate length and when students have prior experience engaging with on-demand support, whereas simply increasing the amount of student talk does not reliably translate into greater learning gains.

Figure 3: Effect Sizes on Immediate Performance by Tutoring Session Characteristics. Each effect is decomposed by quartiles of the characteristics. Quartile ranges are presented in brackets.

6.4 Heterogeneity by Student Characteristics

The CATE estimates also provide opportunities to evaluate which students benefit more from this form of tutoring. To assess this variability, we estimated a series of regression models predicting session-level CATEs (the full table of the model output can be found in Appendix B). Students with lower baseline ability exhibited larger treatment effects, with DKT mastery showing the strongest association (β = −1.87, p < 0.001), indicating that a one standard deviation decrease in predicted mastery corresponded to a 1.87 percentage point increase in effect size. Once DKT was included, NWEA RIT scores showed only a small positive association (β = 0.08), suggesting that the more proximal DKT-based measure of knowledge was the primary driver of heterogeneity.
This pattern is consistent with both a ceiling effect, where higher-performing students have less room to improve, and the hypothesis that just-in-time tutoring is most valuable for students who are struggling.

Low-SES students benefited slightly less on average (β = −0.21, p < 0.05), and interaction terms revealed that the relationship between prior knowledge and treatment effects was weaker for these students (DKT × PPF: β = 0.25, p < 0.001; RIT × PPF: β = 0.29, p < 0.001). Figure 4 presents a visualization of this interaction. While lower-performing students generally benefited more from tutoring, this gradient was attenuated among PPF-eligible students. One interpretation is that low-SES students may face additional barriers, such as limited access to supplementary resources or greater competing demands on attention, that constrain the potential benefits of tutoring regardless of baseline ability.

7. DISCUSSION & CONCLUSION

We introduced a scalable causal framework for estimating problem-level effects of on-demand human tutoring embedded in an adaptive learning system. Across specifications, requesting tutoring produces modest but reliable gains in immediate performance (next-problem correctness; ATE of 4 percentage points) and near transfer (first attempt on the next skill; ATE of 3 percentage points). These effects are similar in magnitude to other ALS features [14, 32, 31], but the large treatment-effect heterogeneity suggests substantial room to optimize tutoring quality. The framework enables session-level effect estimation, supporting feature mining of tutoring interactions associated with higher or lower impact.

A central contribution is methodological.
We show how time-varying knowledge representations derived from platform logs can be used as pretreatment covariates to address confounding driven by evolving mastery and task difficulty in observational on-demand tutoring studies. By integrating Deep Knowledge Tracing features [29] into a doubly robust causal forest estimator [40, 3, 13], the framework targets the core selection problem that tutoring is typically requested when students anticipate poor performance. The stability of ATE estimates after adding external covariates (test scores, SES proxy, school identifiers) suggests that platform-derived learning traces capture much of the relevant confounding structure. A placebo effect that is statistically indistinguishable from zero after Bonferroni correction further supports the identification strategy.

The framework also supports development and benchmarking of AI tutoring systems. Session-level Conditional Average Treatment Effect (CATE) estimates provide large sets of labeled examples indicating which tutoring interactions are likely to produce meaningful gains. These can serve as supervision signals for training AI tutors to emulate high-impact human strategies and avoid low-impact patterns. CATE distributions also define a principled benchmark: AI systems can be evaluated not only against average human outcomes but also relative to the upper range of observed human session effects.

A key substantive result is that the ATE alone masks wide heterogeneity, including strongly positive and negative session-level effects. Although the average tutoring effect is comparable to lower-cost ALS features such as on-demand hints [31], individual effects range from roughly +20 to −20 percentage points. This indicates that some tutoring sessions are highly effective while others are neutral or counterproductive. The proposed framework enables identification and analysis of these high- and low-impact sessions.

Figure 4: Interactions between prior knowledge in terms of predicted mastery (A) or standardized test scores (B) and socioeconomic status (SES) based on Pupil Premium Funding (PPF) eligibility.

Our analysis of heterogeneity provides a preliminary, though not comprehensive, attempt to identify the factors associated with higher-impact tutoring. Evaluating session characteristics highlights several potential drivers of effectiveness. Session length exhibits the clearest pattern: moderately long sessions (Q3) outperform very short sessions (Q1), while the longest sessions do not reliably improve upon moderately long ones. This suggests a diminishing marginal return on tutor time beyond a certain threshold. Furthermore, prior intervention experience is strongly and monotonically associated with larger impacts, indicating that students may "learn how to use" tutoring more effectively over time. Alternatively, students who access tutoring more frequently may possess inherent characteristics, such as higher baseline engagement, that render the tutoring more beneficial. In contrast, the proportion of student talk shows minimal associations with impact. This finding is directionally inconsistent with classic tutoring accounts that emphasize student explanation and constructive engagement as drivers of learning [5], and suggests that in brief, problem-focused, on-demand chats, the quantity of student talk may be less informative than the specificity, timing, and instructional content of tutor moves. However, the crude metric of the proportion of words used by students versus tutors does not capture the quality of the conversation; more work can be conducted in this area using the effect estimates from our framework.
Together, these patterns point to a practical implication for embedded systems: effectiveness may depend less on maximizing interaction volume and more on ensuring sessions are long enough to resolve a misconception while supporting students in developing productive help-seeking routines.

Student-level heterogeneity shows larger benefits for learners with lower prior mastery, consistent with just-in-time support models and prior tutoring evidence [24]. However, low-SES students benefit slightly less on average and show a weaker mastery-impact gradient, aligning with prior work suggesting that on-demand tutoring may require complementary design supports to ensure equitable benefit [22, 10].

Finally, the robustness checks illustrate both the promise and the challenges of observational evaluation in embedded interventions. Estimates attenuate under alternative control-group constructions that expand overlap while introducing potential carryover, highlighting the difficulty of defining appropriate counterfactuals when treatment is episodic and histories are longitudinal. This reinforces the value of frameworks that (i) explicitly encode time-varying knowledge and (ii) provide diagnostics (e.g., overlap, placebo outcomes) to probe residual bias [35, 13].

8. LIMITATIONS & FUTURE WORK

Several limitations of this study provide productive avenues for future research. First, while our causal identification strategy relies on the conditional ignorability assumption, our sensitivity analysis suggests that unmeasured factors, such as student motivation or teacher practices, would need to be substantially more influential than our platform-derived measures to invalidate the primary findings. Second, modeling tutoring as a binary exposure necessarily marginalizes the granular variations in instructional quality and conversational strategy that likely drive effect heterogeneity.
While this work identifies correlates of impact, subsequent inquiries must deconstruct the "black box" of the tutoring exchange by integrating process-level data, such as dialogue transcripts or specific instructional moves, to isolate the latent causal mechanisms mediating effectiveness. Third, the outcome measures used here are intentionally proximal; while they capture immediate impact and near transfer, they may not reflect long-term persistence or durable achievement. Future research should examine the compounding effects of these just-in-time interventions to determine if session-level gains translate into cumulative improvements in learning trajectories. Finally, as this study examines a single platform and implementation, future research should address generalizability by applying this framework across diverse domains and utilizing experimental or quasi-experimental designs that more directly probe equity implications and pedagogical mechanisms.

APPENDIX

Anonymized digital appendices: https://osf.io/vdf8q/files/bez4n?view_only=e45c14c4cc1a4f9e958eeb8e87535ee3

A. REFERENCES

[1] G. Abdelrahman, Q. Wang, and B. Nunes. Knowledge tracing: A survey. ACM Computing Surveys, 55(11):224:1–224:37, 2023.
[2] V. Aleven, I. Roll, B. M. McLaren, and K. R. Koedinger. Help helps, but only so much: Research on help seeking with intelligent tutoring systems. International Journal of Artificial Intelligence in Education, 26(1):205–223, 2016.
[3] S. Athey, J. Tibshirani, and S. Wager. Generalized random forests. The Annals of Statistics, 47(2):1148–1178, 2019.
[4] S. Athey and S. Wager. Estimating treatment effects with causal forests: An application. Observational Studies, 5(2):37–51, 2019.
[5] M. T. Chi, S. A. Siler, H. Jeong, T. Yamauchi, and R. G. Hausmann. Learning from human tutoring. Cognitive Science, 25(4):471–533, 2001.
[6] D. R. Chine, C. Brentley, C. Thomas-Browne, J. E. Richey, A. Gul, P. F. Carvalho, and K. R. Koedinger. Educational equity through combined human-AI personalization: A propensity matching evaluation. In International Conference on Artificial Intelligence in Education, pages 366–377. Springer, 2022.
[7] C. Cinelli and C. Hazlett. Making sense of sensitivity: Extending omitted variable bias. Journal of the Royal Statistical Society Series B: Statistical Methodology, 82(1):39–67, 2020.
[8] C. Cinelli and C. Hazlett. sensemakr: Sensitivity analysis tools for omitted variable bias in R. Journal of Statistical Software, 94(11):1–34, 2020.
[9] R. K. Crump, J. V. Hotz, G. W. Imbens, and O. A. Mitnik. Dealing with limited overlap in estimation of average treatment effects. Biometrika, 96(1):187–199, 2009.
[10] G. Deacon and G. Chojnacki. Impacts of UPchieve on-demand tutoring on students' math knowledge and perceptions. Technical report, Mathematica, 2023.
[11] J. Dietrichson, M. Bøg, T. Filges, and A. M. Klint Jørgensen. Academic interventions for elementary and middle school students with low socioeconomic status: A systematic review and meta-analysis. Review of Educational Research, 87(2):243–282, 2017.
[12] A. C. Eggers, G. Tuñón, and A. Dafoe. Placebo tests for causal inference. American Journal of Political Science, 68(3):1106–1121, 2024.
[13] A. N. Glynn and K. M. Quinn. An introduction to the augmented inverse propensity weighted estimator. Political Analysis, 18:36–56, 2010.
[14] A. Gurung, S. Baral, M. P. Lee, A. C. Sales, A. Haim, K. P. Vanacore, A. A. McReynolds, H. Kreisberg, C. Heffernan, and N. T. Heffernan. How common are common wrong answers? Crowdsourcing remediation at scale. In Proceedings of the Tenth ACM Conference on Learning @ Scale, pages 70–80, Copenhagen, Denmark, 2023. ACM.
[15] A. Gurung, J. Lin, J. Gutterman, D. R. Thomas, A. Houk, S. Gupta, and K. Koedinger. Human tutoring improves the impact of AI tutor use on learning outcomes. In International Conference on Artificial Intelligence in Education, pages 393–407. Springer, 2025.
[16] J. Guryan, J. Ludwig, M. P. Bhatt, P. J. Cook, J. M. Davis, K. Dodge, and G. Stoddard. Not too late: Improving academic outcomes among adolescents. American Economic Review, 113(3):738–765, 2023.
[17] P. R. Hahn, J. S. Murray, and C. M. Carvalho. Bayesian regression tree models for causal inference: Regularization, confounding, and heterogeneous effects (with discussion). Bayesian Analysis, 15(3):965–1056, 2020.
[18] D. W. Harrison, D. J. Brown, and S. Higgins. A pilot impact study to evaluate the effectiveness of Eedi on raising attainment in mathematics at KS3. Technical report, What Worked Education, 2023.
[19] R. F. Kizilcec, G. M. Davis, and G. L. Cohen. Towards equal opportunities in MOOCs: Affirmation reduces gender & social-class achievement gaps in China. In Proceedings of the Fourth (2017) ACM Conference on Learning @ Scale, pages 121–130, 2017.
[20] R. F. Kizilcec, A. Saltarelli, P. Bonfert-Taylor, M. Goudzwaard, E. Hamonic, and R. Sharrock. Welcome to the course: Early social cues influence women's persistence in computer science. In Proceedings of the 2020 CHI Conference on Human Factors in Computing Systems, pages 1–13, 2020.
[21] M. A. Kraft, D. S. Edwards, and M. Cannata. The scaling dynamics and causal effects of a district-operated tutoring program. EdWorkingPaper No. 24-1030. Annenberg Institute for School Reform at Brown University, 2024.
[22] M. A. Kraft, B. E. Schueler, and G. Falken. What impacts should we expect from tutoring at scale? Exploring meta-analytic generalizability, 2024.
[23] S. Mojarad, A. Essa, S. Mojarad, and R. S. Baker. Studying adaptive learning efficacy using propensity score matching. In Companion Proceedings of the 8th International Conference on Learning Analytics and Knowledge (LAK'18), pages 5–9, 2018.
[24] A. Nickow, P. Oreopoulos, and V. Quan. The promise of tutoring for preK–12 learning: A systematic review and meta-analysis of the experimental evidence. American Educational Research Journal, 61(1):74–107, 2024.
[25] Z. A. Pardos and N. T. Heffernan. Modeling individualization in a Bayesian networks implementation of knowledge tracing. In International Conference on User Modeling, Adaptation, and Personalization, pages 255–266. Springer, 2010.
[26] Y. Pei, A. Sales, and J. Gagnon-Bartsch. Boosting precision in educational A/B tests using auxiliary information and design-based estimators. In Proceedings of the 17th International Conference on Educational Data Mining, pages 990–993, 2024.
[27] R. Pelánek. Bayesian knowledge tracing, logistic models, and beyond: An overview of learner modeling techniques. User Modeling and User-Adapted Interaction, 27(3):313–350, 2017.
[28] D. M. Pham, K. P. Vanacore, A. C. Sales, and J. A. Gagnon-Bartsch. Lool: Towards personalization with flexible & robust estimation of heterogeneous treatment effects. In Proceedings of the 17th International Conference on Educational Data Mining, pages 376–384. International Educational Data Mining Society, 2024.
[29] C. Piech et al. Deep knowledge tracing. In Advances in Neural Information Processing Systems, pages 505–513, 2015.
[30] E. Prihar, A. Moore, and N. Heffernan. Identifying explanations within student-tutor chat logs. In Proceedings of the 15th International Conference on Educational Data Mining, pages 773–777, 2022.
[31] E. Prihar, T. Patikorn, A. Botelho, A. Sales, and N. Heffernan. Toward personalizing students' education with crowdsourced tutoring. Pages 37–45. Association for Computing Machinery, Inc, 2021.
[32] E. Prihar, M. Syed, and K. Ostrow. Exploring common trends in online educational experiments. Page 12, 2022.
[33] C. D. Robinson and S. Loeb. High-impact tutoring: State of the research and priorities for future learning. National Student Support Accelerator, 21(284):1–53, 2021.
[34] C. D. Robinson, C. Pollard, S. Novicoff, S. White, and S. Loeb. The effects of virtual tutoring on young readers: Results from a randomized controlled trial. Educational Evaluation and Policy Analysis, 47(4):1245–1265, 2025.
[35] D. B. Rubin. Estimating causal effects of treatments in randomized and nonrandomized studies. Journal of Educational Psychology, 66:688–701, 1974.
[36] A. C. Sales, B. B. Hansen, and B. Rowan. Rebar: Reinforcing a matching estimator with predictions from high-dimensional covariates. Journal of Educational and Behavioral Statistics, 43(1):3–31, 2018.
[37] A. C. Sales and J. F. Pane. The effect of teachers reassigning students to new Cognitive Tutor sections. International Educational Data Mining Society, 2020.
[38] D. R. Thomas, J. Lin, E. Gatz, A. Gurung, S. Gupta, K. Norberg, and K. R. Koedinger. Improving student learning with hybrid human-AI tutoring: A three-study quasi-experimental investigation. In Proceedings of the 14th Learning Analytics and Knowledge Conference, pages 404–415, 2024.
[39] K. Vanacore, A. Sales, A. Liu, and E. Ottmar. Benefit of gamification for persistent learners: Propensity to replay problems moderates algebra-game effectiveness. In Tenth ACM Conference on Learning @ Scale (L@S '23), Copenhagen, Denmark, 2023. ACM.
[40] S. Wager and S. Athey. Estimation and inference of heterogeneous treatment effects using random forests. Journal of the American Statistical Association, 113(523):1228–1242, 2018.
[41] A. Wang, A. Rysbek, A. Huber, A. Nambiar, A. Kenolty, B. Caulfield, B. Lilley-Draper, B. Groot, B. Veprek, C. Burdett, C. Willis, C. Barton, D. Smith, G. Mu, H. Walters, I. Jurenka, I. Hulls, and V. Brazão. AI tutoring can safely and effectively support students: An exploratory RCT in UK classrooms, 2025. arXiv preprint.
[42] R. E. Wang, A. T. Ribeiro, C. D. Robinson, S. Loeb, and D. Demszky. Tutor CoPilot: A human-AI approach for scaling real-time expertise. arXiv preprint arXiv:2410.03017, 2024.
[43] E. Wu and J. A. Gagnon-Bartsch. The loop estimator: Adjusting for covariates in randomized experiments. Evaluation Review, 42(4):458–488, 2018.
