Proximity-Based Multi-Turn Optimization: Practical Credit Assignment for LLM Agent Training

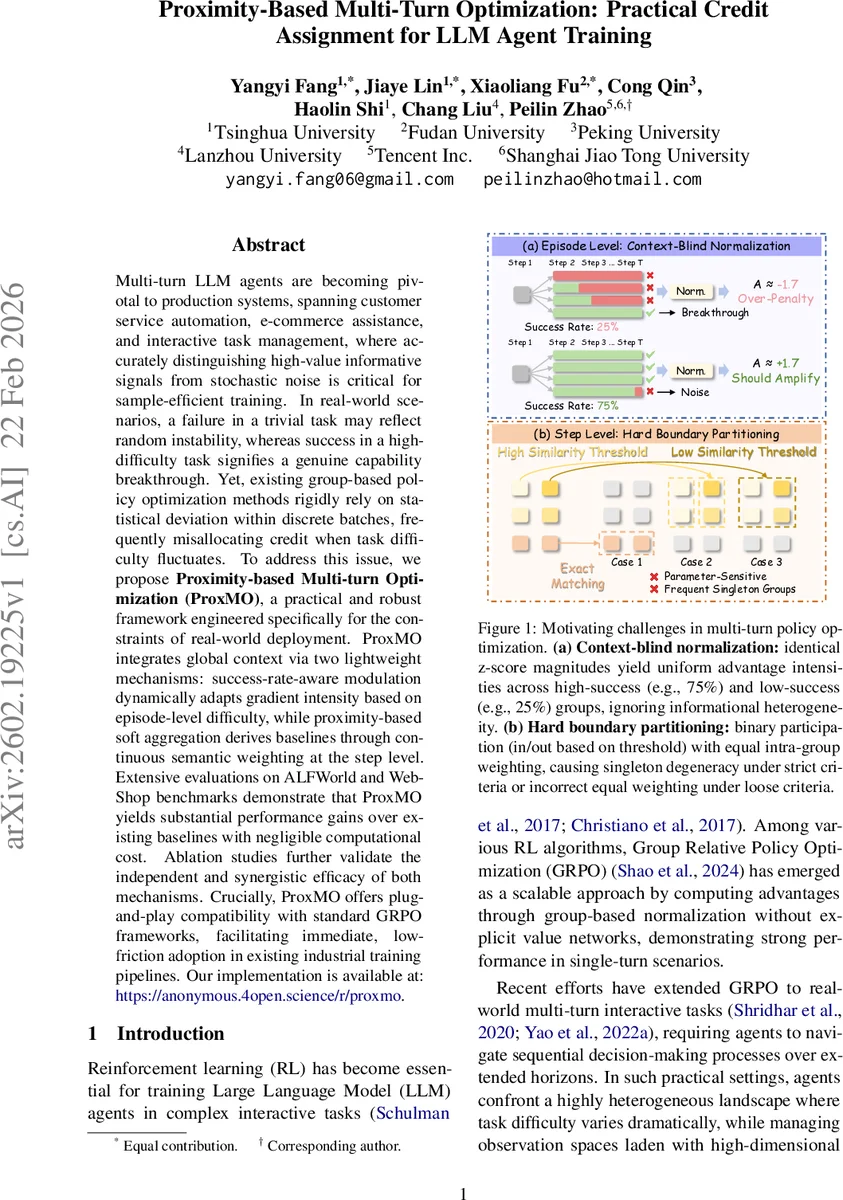

Multi-turn LLM agents are becoming pivotal to production systems, spanning customer service automation, e-commerce assistance, and interactive task management, where accurately distinguishing high-value informative signals from stochastic noise is critical for sample-efficient training. In real-world scenarios, a failure in a trivial task may reflect random instability, whereas success in a high-difficulty task signifies a genuine capability breakthrough. Yet, existing group-based policy optimization methods rigidly rely on statistical deviation within discrete batches, frequently misallocating credit when task difficulty fluctuates. To address this issue, we propose Proximity-based Multi-turn Optimization (ProxMO), a practical and robust framework engineered specifically for the constraints of real-world deployment. ProxMO integrates global context via two lightweight mechanisms: success-rate-aware modulation dynamically adapts gradient intensity based on episode-level difficulty, while proximity-based soft aggregation derives baselines through continuous semantic weighting at the step level. Extensive evaluations on ALFWorld and WebShop benchmarks demonstrate that ProxMO yields substantial performance gains over existing baselines with negligible computational cost. Ablation studies further validate the independent and synergistic efficacy of both mechanisms. Crucially, ProxMO offers plug-and-play compatibility with standard GRPO frameworks, facilitating immediate, low-friction adoption in existing industrial training pipelines. Our implementation is available at: \href{https://anonymous.4open.science/r/proxmo-B7E7/README.md}{https://anonymous.4open.science/r/proxmo}.

💡 Research Summary

The paper addresses a fundamental credit‑assignment problem in training multi‑turn large language model (LLM) agents for real‑world applications such as customer service, e‑commerce assistance, and interactive task management. In these settings, a failure on an easy task may be mere stochastic noise, while a success on a hard task signals a genuine capability breakthrough. Existing group‑based policy optimization methods, particularly Group Relative Policy Optimization (GRPO), compute advantages solely from intra‑group statistics (mean and standard deviation) and therefore treat identical statistical deviations as equally informative regardless of task difficulty. This leads to systematic misallocation of learning signals, especially when task difficulty varies widely across episodes.

ProxMO (Proximity‑based Multi‑turn Optimization) is proposed to inject global contextual information at two hierarchical levels: (1) episode‑level and (2) step‑level.

Episode‑level Success‑Rate‑Aware Modulation (PSC). For each batch of N trajectories sampled from the same instruction, the empirical success rate p is computed. A scaling weight w(R, p)=1+β·f(R, p) is applied to the standard GRPO advantage, where f(R, p) uses a sigmoid function of either the failure rate (d = 1 − p) for successes or the success rate p for failures. The hyperparameters α (sigmoid steepness) and β (overall modulation strength) control how strongly successes in low‑success groups are amplified and how much failures in high‑success groups are attenuated. The resulting modulated episode advantage ˜A_E(τ_i)=w(R(τ_i), p)·A_E(τ_i) reflects task difficulty, thereby providing a more informative learning signal than raw z‑scores.

Step‑level Proximity‑based Soft Aggregation (PSA). To avoid hard‑boundary partitioning of states (exact matching or fixed similarity thresholds) that cause singleton groups or indiscriminate weighting, PSA computes a continuous, similarity‑weighted baseline for each step. Each state s_i^t is represented by a TF‑IDF vector v_i^t; cosine similarity sim(s_i^t, s_j^t) is calculated and transformed into a temperature‑scaled weight w_ij = exp(sim/τ) / Σ_{k∈G(i)} exp(sim(s_i^t, s_k^t)/τ), where G(i) denotes all trajectories sharing the same task. The soft baseline B_i^t = Σ_j w_ij R_j^t (discounted return from step t onward) is then subtracted from the trajectory’s own return to obtain the step advantage A_S(a_i^t)=R_i^t−B_i^t. When τ→0 the method approximates exact nearest‑neighbor matching; when τ→∞ it collapses to a uniform baseline identical to the episode‑level baseline. Empirically τ=0.1 yields a good balance between sensitivity and stability.

Unified Objective. The final advantage for each action is a weighted sum of the modulated episode advantage and the soft step advantage: A(a_i^t)=˜A_E(τ_i)+ω·A_S(a_i^t), with ω defaulting to 1. This advantage is plugged into the standard clipped PPO loss, together with a KL‑penalty term, yielding a fully differentiable training objective that requires no value network.

Experiments. The authors evaluate ProxMO on two challenging multi‑turn benchmarks: ALFWorld (embodied household tasks, 3,827 instances across six categories) and WebShop (web‑based shopping, 1.1 M products, 12 K instructions). They use Qwen2.5‑1.5B‑Instruct and Qwen2.5‑7B‑Instruct as backbones, keeping all hyperparameters identical across methods for fairness. Baselines include closed‑source LLMs (GPT‑4o, Gemini‑2.5‑Pro), prompting agents (ReAct, Reflection), and RL methods (GRPO, GiGPO). ProxMO consistently outperforms GRPO by 8–18 percentage points in overall success rate, with especially large gains on long‑horizon tasks (e.g., “Look”, “Cool”, “Pick2”). It also surpasses GiGPO, demonstrating that the soft aggregation avoids the sparsity problems of hard grouping. Remarkably, the 1.5 B model trained with ProxMO matches or exceeds the performance of much larger proprietary models, highlighting its cost‑effectiveness.

Ablation & Sensitivity. Removing the PSC module degrades performance across all tasks, confirming that difficulty‑aware scaling is essential. Removing PSA leads to even larger drops, particularly on tasks requiring fine‑grained step‑level credit. Hyperparameter sweeps show that the method is robust: α∈

Comments & Academic Discussion

Loading comments...

Leave a Comment