Multiclass Calibration Assessment and Recalibration of Probability Predictions via the Linear Log Odds Calibration Function

Machine-generated probability predictions are essential in modern classification tasks such as image classification. A model is well calibrated when its predicted probabilities correspond to observed event frequencies. Despite the need for multicateg…

Authors: Amy Vennos, Xin Xing, Christopher T. Franck

Amy Vennos∗, Xin Xing, and Christopher T. Franck†
Department of Statistics, Virginia Polytechnic Institute and State University
February 24, 2026

Abstract

Machine-generated probability predictions are essential in modern classification tasks such as image classification. A model is well calibrated when its predicted probabilities correspond to observed event frequencies. Despite the need for multicategory recalibration methods, existing methods are limited to (i) comparing calibration between two or more models rather than directly assessing the calibration of a single model, (ii) requiring under-the-hood model access, e.g., accessing logit-scale predictions within the layers of a neural network, and (iii) providing output which is difficult for human analysts to understand. To overcome (i)-(iii), we propose Multicategory Linear Log Odds (MCLLO) recalibration, which (i) includes a likelihood ratio hypothesis test to assess calibration, (ii) does not require under-the-hood access to models and is thus applicable on a wide range of classification problems, and (iii) can be easily interpreted. We demonstrate the effectiveness of the MCLLO method through simulations and three real-world case studies involving image classification via convolutional neural network, obesity analysis via random forest, and ecology via regression modeling. We compare MCLLO to four comparator recalibration techniques utilizing both our hypothesis test and the existing calibration metric Expected Calibration Error to show that our method works well alone and in concert with other methods.

Keywords: Multiclass Classification, Calibration, Machine Learning, Confidence Scores, Likelihood Ratio Test

∗ corresponding author
† This material is based upon work supported, in whole or in part, by the U.S.
Department of Defense (DoD) through the Office of the Under Secretary of Defense for Acquisition and Sustainment (OUSD(A&S)) and the Office of the Under Secretary of Defense for Research and Engineering (OUSD(R&E)) under Contract HQ003424D0023. The Acquisition Innovation Research Center (AIRC) is a multi-university partnership led and managed by the Stevens Institute of Technology through the Systems Engineering Research Center (SERC), a federally funded University Affiliated Research Center. Any views, opinions, findings and conclusions or recommendations expressed in this material are those of the author(s) and do not necessarily reflect the views of the United States Government (including the DoD and any government personnel).

1 Introduction

Multicategory probability predictions arise from statistical and machine classifiers and estimate the probability that objects belong to each of $c$ categories. For example, an image classifier may be used to predict which animals or vehicles are contained within a set of images. Multicategory predictions are used widely in image classification (Sambyal et al. 2023, Rajaraman et al. 2022, Le Coz et al. 2024, Kuppers et al. 2020) including for satellite images (Karalas et al. 2016, Shiuan Wan & Chou 2012), medical diagnoses (Hameed et al. 2020, Mi et al. 2021), geological hazard mapping (Goetz et al. 2015), speech recognition (Ganapathiraju et al. 2004), remote sensing (Aaron E. Maxwell & Fang 2018), defense applications (Machado et al. 2021), and sorting through gene expression datasets for cancer research (Panca & Rustam 2017, Yeung & Bumgarner 2003, Ramaswamy et al. 2001).

The value of probability predictions to decision makers depends on calibration, which describes how well empirical observations agree with the claimed probability predictions.
If a model is well calibrated, then the probability predictions in all categories agree with the observed frequencies of observations in each category. For example, suppose a diagnostic model predicts a 40% chance that a patient has cancer. Clinical risk is only properly conveyed by the model if 4 in 10 patients who are claimed to have a 40% chance of cancer actually have cancer. More generally, we say that a model's predictions are well calibrated if this property holds for all predictions between 0% and 100%. Perhaps surprisingly, there are no guarantees that probability predictions from statistical and machine learning models are well calibrated, especially when predictions are made outside of the data set used to train the model. We want to know whether predictions are well calibrated, and if they aren't calibrated, we need methods to recalibrate them. Recalibration methods adjust probability predictions so they are calibrated.

Figure 1 provides an example of calibration in the context of multicategory image classification. This figure shows two images (indices 5037 and 4526) of planes from the CIFAR-10 data set (Krizhevsky 2009). The image on the left is more clearly a plane than the image on the right. A neural net classified both images as planes and outputted confidence scores that reflect the neural net's certainty in each of the ten possible labels. Note that 0-1 probability scale predictions outputted by machine learning algorithms are sometimes called confidence scores. We include the top five outputted confidence scores from the Visual Geometry Group neural net (VGGNet) (Simonyan & Zisserman 2014) followed by recalibrated versions of those predictions furnished by our Multicategory Linear Log Odds (MCLLO) method, which is described in Section 2.
In this example, the image on the left received a confidence score of 1.00 for plane and a confidence score of 0.00 for all other categories. This image is obviously a plane. If we assess the empirical rate at which objects with the same confidence scores are actually planes, it is within rounding error of 100%, thus the recalibrated probability is also 1.00. By contrast, for the more ambiguous image on the right, the neural net assigns a confidence score of 0.94. However, on the basis of available training data, scores of 94% as in this case only truly contain planes in 80% of cases. The distinction between a 94% probability of an event versus an 80% actual probability of the event would be crucial in many decision making tasks. Our MCLLO method can be used to detect the uncalibrated nature of these predictions and adjust them so that, e.g., a confidence score of 94% in this example is recalibrated to 80%, the empirical rate at which such images are actually planes.

[Figure 1 panels, both with true label "plane". Left image: VGG Net confidence scores Plane 1.00, Ship 0.00, Bird 0.00, Deer 0.00, Cat 0.00; recalibrated confidence scores Plane 1.00, Ship 0.00, Bird 0.00, Deer 0.00, Cat 0.00. Right image: VGG Net confidence scores Plane 0.94, Cat 0.04, Bird 0.01, Ship 0.01, Dog 0.01; recalibrated confidence scores Plane 0.80, Cat 0.12, Bird 0.03, Dog 0.02, Ship 0.02.]

Figure 1: Two images with observed label "plane" from the CIFAR-10 data set. A neural net outputted confidence scores for a set of ten labels for each image and reported high confidence scores for the label "plane" for both images. However, the image on the right is less recognizable as a "plane" than the image on the left. Direct recalibration via MCLLO as described in Section 2 adjusts the probability of each label to correspond better with the rates at which planes occur given data such as these images.

Much work has been done to ensure that machine learning models produce well-calibrated predictions (Niculescu-Mizil & Caruana 2005, Guo et al. 2017, Kumar et al. 2018). In the binary setting, much research has been published on improving calibration, including empirical binning (Zadrozny & Elkan 2001, Pakdaman Naeini et al. 2015a), isotonic regression (Barlow & Brunk 1972, Naeini & Cooper 2016), Platt scaling (Platt et al. 1999), and Beta calibration (Kull et al. 2017). In our literature review of recalibration methods, we were surprised to learn that many efforts to improve calibration in binary cases do not appear to have a straightforward extension to the multicategory setting: for example, the multicategory setting introduces varieties in the definition of calibration. The strongest of these varieties is multiclass calibration, which considers all probability predictions for every category and every observation, and is preferred over other definitions of calibration (Silva Filho et al. 2023). Two popular methods of multiclass recalibration are temperature scaling and vector scaling (Hinton et al. 2015, Guo et al.
2017), which are extensions of Platt scaling that linearly scale the logits, or scores outputted from the last layer of the neural net before the softmax function, instead of probability predictions. Since the softmax function is not invertible, the logits in the layer before the softmax function cannot be recovered from the probability predictions. Consequently, temperature scaling and vector scaling are limited in their scope in that the methods can only be applied when the logits are available. This may involve under-the-hood access to, say, a convolutional neural network model in order to access and recalibrate the model logits in the appropriate layer of the network. We show an example of this in Section 4.2. Some methods, such as random forests, produce confidence scores by vote counting trees and do not have natural logits with which to employ temperature and vector scaling. These scaling methods are thus unusable for probability scale predictions. We show such an example in Section 4.3. Additionally, certain shifts of the logits that produce the same probability predictions are recalibrated differently by these methods. Our MCLLO method overcomes this issue by operating directly on probability scale predictions, which are available in virtually all modern classification techniques. Unlike temperature and vector scaling, MCLLO methods do not require under-the-hood access to models in order to implement recalibration.

Another shortcoming among existing methods is that there are no recalibration techniques that include hypothesis testing for calibration status. This brings about a limitation in that many recalibration techniques rely on outside measures of calibration, such as the Hosmer-Lemeshow (HL) test (which can test for calibration but does not prescribe a recalibration approach) (Fagerland et al. 2008), a visualization of calibration called reliability diagrams (Vaicenavicius et al. 2019), Widmann et al.
(2019)'s matrix-valued kernel-based quantification of calibration, and the popular Expected Calibration Error (ECE) (Guo et al. 2017, Pakdaman Naeini et al. 2015b) to determine calibration status. These measures of calibration are limited in that they cannot simultaneously determine with a prescribed significance level if a model is well-calibrated and also prescribe a recalibration approach if necessary. Methods such as the ECE score are useful for comparing competing models (lower score is better) but offer less insight as to whether a single model is performing adequately. Many practitioners may appreciate the hypothesis testing framework that can make calibration status more clear on the basis of a single model's predictions, thus informing the need to recalibrate or not.

Additionally, binning-based metrics of calibration like ECE and Maximum Calibration Error (MCE) (Guo et al. 2017) are particularly limited by their dependence on a user-specified number of bins, as a different number of bins can impact the resulting calibration metric greatly. For example, in some applications, ECE and MCE can produce either the same metric or vastly different results depending on the chosen bin size for the same data set (Reinke et al. 2023). See the discussion in Section 5 for commentary on how the number of bins affects ECE results. Heuristics for the number of bins in calculating ECE vary, ranging from a recommendation of 10 to 20 bins (Nixon et al. 2019), to using the square root of the number of observations (Posocco & Bonnefoy 2021). By contrast, our MCLLO method works directly on the probability scale with no need for binning. We will adopt the square root rule for this paper when comparing binning-based methods such as ECE with the MCLLO method.

A recalibration technique with built-in hypothesis testing is advantageous to decision-makers in several ways.
First, a recalibrator with built-in hypothesis testing guarantees inclusion of the identity map, a property noted by Vaicenavicius et al. (2019) that describes recalibration techniques that identify well-calibrated probability predictions. Recalibrators that do not include the identity function will attempt to recalibrate well-calibrated data. For example, Xu et al. (2015) implement a multiclass calibration approach that utilizes Maximum Likelihood Estimates (MLEs), but their approach does not include the identity map and thus would at least slightly perturb even well calibrated probabilities. Second, even though some existing recalibrators without the capability of hypothesis testing include the identity map, a built-in hypothesis-testing-based metric of calibration controls the type I error rate, gains power as sample size or departure from calibration grows, and is not dependent on a user-specified number of bins. These are properties that existing measures of calibration lack. Finally, a recalibration method that does not depend on a user-specified bin size and that applies to all confidence outputs by machine learning models and data models allows decision-makers to better trust a wider scope of probability predictions.

Through our simulation study and case studies, we demonstrate that MCLLO recalibration can (1) identify well-calibrated data, (2) apply to a wider scope of machine-generated predictive outputs than temperature scaling or vector scaling, and (3) improve calibration of uncalibrated data. The effectiveness of recalibration is shown not only using our likelihood ratio test (LRT), but also via the existing measures of calibration ECE and reliability diagrams. Furthermore, we compare MCLLO recalibration to four existing recalibration techniques. We implement an extension of binning described by Guo et al.
(2017), and include temperature and vector scaling as described in that paper, which otherwise compares existing methods of recalibration instead of proposing a new method of recalibration. We also implement a multiclass calibration technique developed by Xu et al. (2015). We implement and assess these techniques in three case studies, showing virtues and drawbacks of all methods under comparison.

This paper is organized as follows. Section 2 introduces MCLLO recalibration and its properties. Section 3 demonstrates the capabilities of MCLLO recalibration and the LRT through a simulation study. Section 4 applies MCLLO recalibration to image classification, obesity, and ecology datasets and compares its functionality to four comparator recalibration techniques. Section 5 provides a discussion of our proposed recalibration technique and results.

2 Multicategory Linear Log Odds Methodology

We address the multicategory classification problem with $c > 1$ classes. Each observation $Y_i$ belongs in one and only one class. Thus we consider the random vector $Y_{i\cdot} \sim \mathrm{MN}(p_i, \text{size} = 1)$, where $p_i \in \mathbb{R}^{c}$ is the $c \times 1$ vector of probabilities that the observation will assume the value of each category. The goal of calibration assessment and recalibration is to ensure that probability predictions $x_i$ correspond to the actual event rates $p_i$. If necessary the $x_i$ can be adjusted, i.e., recalibrated, to resemble the $p_i$ so that the recalibrated predictions ultimately describe the actual events $Y_i$. This paper considers the possibilities $p_i = x_{i\cdot}$, the original probability predictions, versus $p_i = g_{i\cdot}$, the recalibrated predictions. The original and recalibrated predictions are stored in matrices $X$ and $G$, respectively.
In this section, we consider the possibility that the recalibrated set of predictions $G$ describing observations $Y$ differs from the original predictions $X$ according to a linear function on the log odds scale.

In our multicategory framework, odds are defined between each category and the baseline category. We follow the convention of setting the baseline category as the last category $c$ (Agresti 2006). In the MCLLO model, the analysis is not technically invariant to the choice of baseline category, although empirically we show that the baseline choice has a small effect on the analysis. See supplementary material for more details.

We propose a transformation that maps each prediction $x_{ij}$ to a recalibrated prediction $g_{ij}$ for each observation $i \in \{1, \ldots, n\}$ and category $j \in \{1, \ldots, c\}$. This is done via a transformation of the log odds of each event probability prediction $x_{ij}$ versus the probability prediction corresponding to the baseline category $x_{ic}$, denoted $\log(x_{ij}/x_{ic})$. The log odds of event probability predictions are mapped to the log odds of the recalibrated predictions $g_{ij}$ and $g_{ic}$, denoted $\log(g_{ij}/g_{ic})$. The mapping is a linear function involving shift and scale parameters. Define two length $c - 1$ vectors $\delta$ and $\gamma$ that contain the parameter values for the recalibration shift and scale, respectively. More details about the interpretation of $\delta$ and $\gamma$ can be found in the supplementary material. The goal of recalibration is to find optimal parameter estimates $\hat{\delta}$ and $\hat{\gamma}$ so that the recalibrated predictions better reflect the observed frequencies in $Y$. We obtain $\hat{\delta}$ and $\hat{\gamma}$ by maximizing a likelihood that associates probability predictions $x_i$ with event data $Y_i$.

Mathematically, this shift and scale follows the system of baseline-category log odds formulas defined by

$$\log\left(\frac{g_{ij}}{g_{ic}}\right) = \log(\delta_j) + \gamma_j \log\left(\frac{x_{ij}}{x_{ic}}\right), \tag{1}$$

for $i \in \{1, \ldots, n\}$ and $j \in \{1, \ldots, c - 1\}$. The recalibrated probability prediction for the baseline category follows from the axioms of probability, shown in Equation (2):

$$\sum_{j=1}^{c} g_{ij} = 1. \tag{2}$$

Our MCLLO approach involves $c$ categories and therefore utilizes a system of $c$ equations with $2(c - 1)$ parameters. Equation (1) is called the Multicategory Linear Log Odds (MCLLO) recalibration formula. Manipulating this formula with the constraint provided by Equation (2) reveals a recalibrator for multicategory predictions.

Another advantage of MCLLO recalibration is the availability of hypothesis testing, as parameter values of $\delta = \gamma = 1$ represent well calibrated predictions that do not require recalibration. MCLLO recalibration is therefore valuable to any multicategory classification application, as a researcher can (1) test for well-calibrated probability predictions via the methodology in Section 2.4, and if the predictions are not well-calibrated, (2) produce optimally calibrated probability predictions via equations provided in Section 2.1.

2.1 Analytic Form of Recalibrated Probability Predictions

We algebraically re-express Equations (1) and (2) to obtain Equation (3), which provides the formula that produces recalibrated probability predictions $g_{ij}$ as a function of uncalibrated predictions and parameter vectors $\gamma$ and $\delta$. The recalibrated probability predictions take the form

$$g_{ij} = \frac{\delta_j x_{ij}^{\gamma_j}}{x_{ic}^{\gamma_j} + \sum_{m=1}^{c-1} \delta_m x_{im}^{\gamma_m} x_{ic}^{\gamma_j - \gamma_m}}, \tag{3}$$

for any observation $i \in \{1, \ldots, n\}$ and category $j \in \{1, \ldots, c - 1\}$. The recalibrated probability prediction for the baseline category in observation $i$ is

$$g_{ic} = 1 - \sum_{j=1}^{c-1} g_{ij}. \tag{4}$$

Given any parameter values $\delta$, $\gamma$ and original probability predictions $X$, the recalibrated predictions in $G$ are directly calculated from Equations (3) and (4). A derivation is provided in the supplementary material.
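As an illustration of Equations (1), (3) and (4), the following is a minimal Python sketch, not the authors' R code. The function name `mcllo_recalibrate` and the example vector are hypothetical, and the sketch assumes every entry of the prediction vector is strictly positive so that the log odds are finite. It builds the baseline-category log odds of Equation (1) and normalises their exponentials, which is algebraically equivalent to Equations (3) and (4).

```python
import math

def mcllo_recalibrate(x, delta, gamma):
    """Recalibrate one probability vector x of length c (entries > 0).

    delta and gamma are length c-1 shift and scale parameters; the last
    entry of x is treated as the baseline category.
    """
    c = len(x)
    log_base = math.log(x[-1])
    # Equation (1): log(g_j / g_c) = log(delta_j) + gamma_j * log(x_j / x_c)
    eta = [math.log(delta[j]) + gamma[j] * (math.log(x[j]) - log_base)
           for j in range(c - 1)]
    eta.append(0.0)  # the baseline has log odds 0 against itself
    # Normalising exp(eta) reproduces Equations (3) and (4);
    # subtracting the maximum first guards against overflow.
    m = max(eta)
    w = [math.exp(e - m) for e in eta]
    total = sum(w)
    return [v / total for v in w]

# Hypothetical three-category prediction (not data from the paper).
x = [0.94, 0.04, 0.02]
identity = mcllo_recalibrate(x, delta=[1.0, 1.0], gamma=[1.0, 1.0])
softened = mcllo_recalibrate(x, delta=[1.0, 1.0], gamma=[0.7, 0.7])
```

With $\delta = \gamma = 1$ the function returns the input unchanged, which is the identity-map property discussed in Section 1, while scale parameters below 1 pull an overconfident top prediction downward.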
Section 2.3 describes a maximum likelihood-based approach to recalibrate a set of probability predictions $x_{ij}$ to $g_{ij}$.

2.2 MCLLO Likelihood

Recall the random $n \times c$ matrix of observations $Y$ and the random $n \times c$ matrix $X$ of uncalibrated probability predictions on $c$ categories. The Multicategory Linear Log Odds likelihood function is defined by

$$L(\delta, \gamma; X, Y) = \prod_{i=1}^{n} \prod_{j=1}^{c} g_{ij}^{Y_{ij}}, \tag{5}$$

where $g_{ij}$ is defined by Equations (3) and (4). This is a multinomial likelihood with recalibrated probabilities $g_{ij}$ as event probabilities.

2.3 Recalibration of Probability Predictions

Equation (5) allows us to obtain maximum likelihood estimates (MLEs) $\hat{\delta}_{MLE}$ and $\hat{\gamma}_{MLE}$. We have found the Broyden-Fletcher-Goldfarb-Shanno (BFGS) algorithm (Nocedal & Wright 2006) in R to be effective in calculating $\hat{\delta}_{MLE}$ and $\hat{\gamma}_{MLE}$. See the supplementary material for our reproducible code. Employing $\hat{\delta}_{MLE}$ and $\hat{\gamma}_{MLE}$ in Equations (3) and (4) allows a researcher to recalibrate probability predictions in the MCLLO framework.

2.4 Likelihood Ratio Test

Our MCLLO approach includes a likelihood ratio test (LRT) to assess the plausibility that the probability predictions $x_{ij}$ are well calibrated. The hypotheses are

$H_0: \delta = \gamma = 1$ (probability predictions are well-calibrated), versus
$H_1: \delta_j \neq 1$ and/or $\gamma_j \neq 1$ for some $1 \leq j \leq c - 1$.

The LRT statistic for $H_0$ is

$$\lambda_{LR} = -2 \log\left(\frac{L(\delta = \gamma = 1 \mid X, Y)}{L(\delta = \hat{\delta}_{MLE}, \gamma = \hat{\gamma}_{MLE} \mid X, Y)}\right). \tag{6}$$

The test statistic asymptotically follows a $\chi^2$ distribution with $2(c - 1)$ degrees of freedom.

2.5 Theoretical Properties

In this section we provide justification for the asymptotic consistency of the MLEs $\hat{\delta}$ and $\hat{\gamma}$. Lemma 1 asserts the convexity of the negative log of the MCLLO likelihood shown in Equation (5).
This property of the likelihood is important as it guarantees that the Hessian matrix of the negative log likelihood with respect to the parameters is positive semi-definite, and therefore has non-negative eigenvalues.

Lemma 1. The negative log of the MCLLO likelihood in Equation (5) is convex in $\tau = \log \delta$ and $\gamma$.

Proof. See Appendix.

Lemma 1 leads to the following theorem on the asymptotic consistency of the MLEs $\hat{\delta}$ and $\hat{\gamma}$. This characteristic of $\hat{\delta}$ and $\hat{\gamma}$ is especially important as the MLEs are estimates and thus it is necessary to show that $\hat{\delta}$ and $\hat{\gamma}$ converge to the true parameter values $\delta$ and $\gamma$ as sample size $n$ becomes large.

Theorem 1. The maximum likelihood estimators $\hat{\theta}_n = (\hat{\delta}_n, \hat{\gamma}_n)$ for the log of the MCLLO likelihood shown in Equation (5), $\ell(\theta)$, are asymptotically consistent. In other words, $\hat{\theta}_n \xrightarrow{p} \theta_0$, where $\hat{\theta}_n = (\hat{\delta}_n, \hat{\gamma}_n)$ is the MLE based on a sample of size $n$, and $\theta_0 = (\delta_0, \gamma_0)$ is the true parameter value.

Proof. See Appendix.

3 Simulation Study

3.1 Simulation Study Results: LRT

We demonstrate how the statistical power of our LRT is impacted by sample size $n$ and effect size (i.e., the distance of $\delta$ and $\gamma$ from 1). This is implemented through a Monte Carlo simulation study with $c = 10$ categories. We work with thirty sets of simulated data, with sample sizes $n$ from the set $\{400, 450, 500, 600, 1000, 5000\}$. The data are perfectly well calibrated except for the shift parameter $\delta_1$, which takes values from the set $\{1, 1.1, 1.2, 1.3, 1.4\}$. More information about the simulation process can be found in the supplementary material. We perform our LRT described in Section 2.4, calculate a $p$-value for each repetition, and reject at significance level $\alpha = 0.05$. The rejection rate for each simulation is plotted in Figure 2. The results show that our LRT becomes more powerful as sample size and effect size grow.
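The fit-then-test step carried out in each repetition can be sketched in Python as follows. This is a hedged illustration under stated assumptions, not the authors' implementation: all function names are hypothetical, the data are simulated with an arbitrary effect (every $\gamma_j = 0.5$, $c = 3$, $n = 200$), and a crude gradient descent with step halving stands in for the BFGS optimizer the paper uses in R. The parameterisation $\tau = \log \delta$ follows Lemma 1. Because the degrees of freedom $2(c - 1)$ are always even, the $\chi^2$ tail probability has a closed form as a finite Poisson sum, so no statistics library is needed.

```python
import math
import random

def log_odds(x):
    """log(x_j / x_c) for j = 1..c-1, with the last category as baseline."""
    return [math.log(v) - math.log(x[-1]) for v in x[:-1]]

def probs(z, tau, gamma):
    """Recalibrated probabilities of Equations (3)-(4), with tau = log(delta)."""
    eta = [t + g * zj for t, g, zj in zip(tau, gamma, z)] + [0.0]
    m = max(eta)
    w = [math.exp(e - m) for e in eta]
    s = sum(w)
    return [v / s for v in w]

def neg_log_lik(Z, labels, tau, gamma):
    """Negative log of Equation (5); labels[i] is the observed category index."""
    return -sum(math.log(probs(z, tau, gamma)[y]) for z, y in zip(Z, labels))

def gradient(Z, labels, tau, gamma):
    """Gradient of the negative log likelihood in (tau, gamma)."""
    k = len(tau)
    d_tau, d_gam = [0.0] * k, [0.0] * k
    for z, y in zip(Z, labels):
        g = probs(z, tau, gamma)
        for j in range(k):
            resid = g[j] - (1.0 if y == j else 0.0)
            d_tau[j] += resid
            d_gam[j] += resid * z[j]
    return d_tau, d_gam

def fit(Z, labels, iters=100):
    """Gradient descent with step halving, started at delta = gamma = 1."""
    k, n = len(Z[0]), len(Z)
    tau, gamma = [0.0] * k, [1.0] * k
    best = neg_log_lik(Z, labels, tau, gamma)
    for _ in range(iters):
        d_tau, d_gam = gradient(Z, labels, tau, gamma)
        step = 1.0 / n
        for _halving in range(40):
            t_new = [t - step * d for t, d in zip(tau, d_tau)]
            g_new = [g - step * d for g, d in zip(gamma, d_gam)]
            value = neg_log_lik(Z, labels, t_new, g_new)
            if value < best:
                tau, gamma, best = t_new, g_new, value
                break
            step /= 2.0
        else:
            break  # no improving step found: treat as converged
    return tau, gamma, best

def chi2_sf_even_df(stat, df):
    """P(chi-square with even df > stat), via the closed-form Poisson sum."""
    half = stat / 2.0
    term, total = 1.0, 1.0
    for k in range(1, df // 2):
        term *= half / k
        total += term
    return math.exp(-half) * total

# Simulated, hypothetical data: labels are drawn from a truth with gamma = 0.5.
random.seed(1)
c, n = 3, 200
X = []
for _ in range(n):
    raw = [random.gammavariate(2.0, 1.0) for _ in range(c)]
    X.append([v / sum(raw) for v in raw])
Z = [log_odds(x) for x in X]
truth = [probs(z, [0.0] * (c - 1), [0.5] * (c - 1)) for z in Z]
labels = [random.choices(range(c), weights=p)[0] for p in truth]

tau_hat, gamma_hat, nll_hat = fit(Z, labels)
nll_null = neg_log_lik(Z, labels, [0.0] * (c - 1), [1.0] * (c - 1))
lam = 2.0 * (nll_null - nll_hat)             # Equation (6)
p_value = chi2_sf_even_df(lam, 2 * (c - 1))  # compare with alpha = 0.05
```

Because the descent may stop short of the exact maximum, the statistic from this sketch can only understate the true LRT statistic; a quasi-Newton routine such as BFGS would be the natural choice in practice.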
[Figure 2 plot, titled "Rejection rate as Parameters Increase". Horizontal axis: Effect Size rho (1.0 to 1.4). Vertical axis: Rejection Rate (0.0 to 1.0). Legend: n=400, n=450, n=500, n=600, n=1000, n=5000.]

Figure 2: Thirty simulations of 1000 Monte Carlo repetitions were run with varying sample sizes and effect sizes. The rejection rates for each simulation are tabulated in the supplementary material and visualized in this figure, showing the effect of sample size and effect size on the power of our LRT.

3.2 Simulation Study: ECE

To show how our recalibration strategy achieves calibration according to existing metrics (and not just our own LRT approach), we assess ECE (Guo et al. 2017, Pakdaman Naeini et al. 2015b), showing it improves as our MCLLO recalibration approach is applied. We analyze the uncalibrated predictions $X$ and MCLLO-recalibrated predictions $X^*$. In each simulation, we calculate the ECE of $X$ and $X^*$, denoted $ECE(X)$ and $ECE(X^*)$. Figure 3 displays boxplots of $ECE(X)$ (in navy) and $ECE(X^*)$ (in green) for the five simulations of sample size $n = 5000$ with effect sizes $\{1, 1.1, 1.2, 1.3, 1.4\}$. The boxplots show that though the ECE of probability predictions $X$ increases with greater effect size, the MCLLO recalibrated predictions $X^*$ are more stable no matter how uncalibrated the original probability predictions $X$ are. The supplementary material contains visualizations of the comparisons for the other simulations conducted with different sample sizes.

We also show ECE fluctuates as sample size and effect size vary, making articulation of meaningful ECE thresholds difficult. Figure 4 plots the mean difference in the ECE of the uncalibrated versus MCLLO-recalibrated probability predictions $ECE(X) - ECE(X^*)$ for the 1000 repetitions of twenty of the simulations. Figure 4 shows that MCLLO recalibration has little effect on the ECE of the original probability predictions $X$ when the data are already well calibrated.
As effect size grows, MCLLO recalibration increasingly lowers the ECE of the probability predictions. Unlike the LRT analysis in the previous subsection, the effectiveness of recalibration via ECE is not a function of sample size.

Figure 3: Five simulations of 1000 Monte Carlo repetitions (with sample size $n = 5000$) were run with varying effect sizes. The ECE of the original probability predictions $ECE(X)$ for each repetition are plotted in navy, and the ECE of the MCLLO recalibrated probability predictions $ECE(X^*)$ for each repetition are plotted in green.

Figure 4: Twenty simulations of 1000 Monte Carlo repetitions were run with varying sample sizes and effect sizes. The mean difference in ECE scores $ECE(X) - ECE(X^*)$ for each simulation are visualized.

4 Case Studies

We demonstrate the capabilities of MCLLO recalibration through three case studies: image classification, obesity, and an ecology example in the supplementary material. In each example, we apply MCLLO recalibration to probability predictions and assess calibration using both ECE and the MCLLO LRT described in Section 2.4. Note that in all three studies, we select the baseline category to be the last category alphabetically. We compare MCLLO recalibration to four existing recalibration techniques in each setting.

The case studies showcase several properties of MCLLO recalibration that some recalibration techniques lack. First, they demonstrate the wide range of applications that MCLLO recalibration can be applied to, as it does not require under-the-hood access to the underlying model. Second, we show the interpretability of the MCLLO LRT relative to ECE. The LRT is designed specifically to assess whether a set of probability predictions is poorly calibrated, whereas a standalone ECE score is not. We also show that MCLLO recalibration does not depend on a user-specified bin size.
Finally, we demonstrate that MCLLO recalibration produces probability predictions that are comparable to or more well-calibrated than those obtained from competing recalibration methods, as confirmed by both the MCLLO LRT and ECE.

4.1 Comparator Methods

We compare MCLLO recalibration to four comparator methods. The first comparator method is a multiclass calibration technique developed by Xu et al. (2015) that utilizes a likelihood-based belief function to recalibrate a set of probability predictions. The second is a binning procedure described by Guo et al. (2017) as a comparison for the methods they propose. The final two comparator methods are temperature scaling (Hinton et al. 2015) and vector scaling (Silva Filho et al. 2023). Temperature scaling involves a single parameter for recalibration while vector scaling uses $2c$ parameters. Both scaling approaches require under-the-hood access to pre-softmax layer logits and thus cannot be used in the obesity case study, which relies on random forests. More information on these methods can be found in the supplementary material.

4.2 Case Study: Image Classification

We utilize the CIFAR-10 dataset (Krizhevsky 2009), which consists of $n = 10{,}000$ images of objects that belong to one of ten categories: airplane, automobile, bird, cat, deer, dog, frog, horse, ship, or truck. The observed categories for each observation specified by CIFAR-10 are encoded in $Y$. The set of probability predictions, $X$, are outputted from VGG Net (Simonyan & Zisserman 2014), a famous image classification model. More details about our neural network implementation can be found in the supplementary material. The outputted probability predictions have a holdout set accuracy of 93.00% on the basis of the most probable prediction.
We randomly split the probability predictions $X$ into an 80% training set (with $n_t = 8{,}000$ observations) and a 20% holdout set (with $n_h = 2{,}000$ observations). Denote the training set of probability predictions as $X_t$ and holdout set of probability predictions as $X_h$. The corresponding observed labels are denoted $Y_t$ and $Y_h$.

4.2.1 Calibration Assessment for Image Classification

We compute MLEs and apply the MCLLO Likelihood Ratio Test described in Section 2.4 to determine if the original, uncalibrated confidence scores $X_t$ and $X_h$ and observed values encoded in $Y_t$ and $Y_h$ are well-calibrated. These estimates are shown in Table 1 for both the training [Top] and holdout [Bottom] sets of confidence scores. The standard errors for these estimates are also listed in parentheses. The standard errors were obtained by inverting the Hessian matrix outputted by the optim() function, applied to the negative of the log likelihood function described by Equation (5). The square roots of the diagonal entries of the inverse Hessian matrix are the standard errors of the $2(c - 1)$ parameter estimates.

To quantify the calibration of both sets, we conduct the MCLLO LRT to test the calibration status of $X_t, Y_t$ and $X_h, Y_h$. The test statistic is calculated to be $\lambda_{LR,t} = 533$ on 18 degrees of freedom for the training set and $\lambda_{LR,h} = 123$ for the holdout set, yielding $p$-values of $< 0.0001$ for both sets of uncalibrated confidence scores. We conclude that the confidence scores $X_t$, $X_h$ and observed values $Y_t$ and $Y_h$ are not well-calibrated (even within the training set) and would benefit from recalibration.

Table 1: Maximum likelihood estimates $\hat{\delta}$ and $\hat{\gamma}$ for probability predictions and observed values for the CIFAR-10 case study training set $X_t$ and holdout set $X_h$ of confidence scores, with baseline category truck. The standard errors for each of these parameter estimators are shown in parentheses.
Image Classification Parameter Estimates that Maximize Likelihood: $X_t$

| | j = 1 | j = 2 | j = 3 | j = 4 | j = 5 | j = 6 | j = 7 | j = 8 | j = 9 |
|---|---|---|---|---|---|---|---|---|---|
| $\hat{\delta}_j$ | 1.461 (0.315) | 0.710 (0.135) | 1.275 (0.346) | 1.966 (0.456) | 0.908 (0.306) | 3.095 (0.769) | 1.166 (0.370) | 1.785 (0.539) | 1.111 (0.240) |
| $\hat{\gamma}_j$ | 0.705 (0.028) | 0.679 (0.043) | 0.692 (0.025) | 0.711 (0.022) | 0.732 (0.028) | 0.685 (0.021) | 0.659 (0.030) | 0.729 (0.031) | 0.621 (0.030) |

Image Classification Parameter Estimates that Maximize Likelihood: $X_h$

| | j = 1 | j = 2 | j = 3 | j = 4 | j = 5 | j = 6 | j = 7 | j = 8 | j = 9 |
|---|---|---|---|---|---|---|---|---|---|
| $\hat{\delta}_j$ | 0.693 (0.338) | 0.665 (0.211) | 0.912 (0.496) | 1.588 (0.708) | 0.312 (0.234) | 1.613 (0.824) | 1.037 (0.635) | 0.603 (0.439) | 0.711 (0.331) |
| $\hat{\gamma}_j$ | 0.746 (0.061) | 0.539 (0.060) | 0.719 (0.052) | 0.740 (0.047) | 0.872 (0.073) | 0.727 (0.046) | 0.676 (0.060) | 0.768 (0.077) | 0.679 (0.075) |

4.2.2 Image Classification: MCLLO Recalibration

We recalibrate the holdout set of probability predictions as follows. We apply Equations (3) and (4) to the holdout set $X_h$ using the parameter estimates learned from the training set, as shown in Table 1. The matrix $X^*_{h,MCLLO}$ contains the recalibrated probabilities of the holdout set of confidence scores.

MCLLO recalibration of the confidence scores $X_h$ can be visualized with reliability diagrams (Silva Filho et al. 2023) created with 10 bins, in which well-calibrated confidence scores are plotted closer to the $x = y$ line. Figure 5 displays the reliability diagram for the uncalibrated confidence scores $X_h$ [Top Left] and the diagram for the MCLLO-recalibrated confidence scores $X^*_{h,MCLLO}$ [Top Middle]. A quick comparison of the two plots shows that the reliability diagram for the uncalibrated confidence scores lies slightly further from the $x = y$ line than the diagram for the MCLLO-recalibrated confidence scores. We calculate the ECE of $X^*_{h,MCLLO}$ to be 0.021, improved from 0.035 for the uncalibrated confidence scores $X_h$.

Further, the MCLLO LRT for this set of MCLLO-recalibrated probability predictions produces a test statistic of $\lambda = 24.70$ on 18 degrees of freedom, corresponding to a $p$-value of 0.133. We therefore conclude that applying MCLLO recalibration to the holdout set of confidence scores yielded well-calibrated probability predictions.

4.2.3 CIFAR: MCLLO versus Comparator Methods

We compare the MCLLO-recalibrated confidence scores $X^*_{h,MCLLO}$ to all the recalibration techniques discussed in Section 4.1, denoting $X^*_{h,Xu}$, $X^*_{h,EB}$, $X^*_{h,TS}$, and $X^*_{h,VS}$ for the technique by Xu et al. (2015), the extension of binning as described by Guo et al. (2017), temperature scaling (Hinton et al. 2015), and vector scaling (Silva Filho et al. 2023), respectively.

Table 2 lists the ECE (calculated with $\sqrt{n_t}$ bins for the training set and $\sqrt{n_h}$ bins for the holdout set) and LRT calibration metrics calculated from $X^*_{h,MCLLO}$, $X^*_{h,Xu}$, $X^*_{h,EB}$, $X^*_{h,TS}$, and $X^*_{h,VS}$ for the image classification confidence scores. The recalibrated probabilities from all methods report slightly higher or equal accuracy compared to the original probability predictions $X_h$. In terms of calibration, the ECE results show that all recalibration techniques reduce ECE relative to the original, uncalibrated confidence scores $X_h$, with the exception of the technique by Xu et al. (2015). $X^*_{h,MCLLO}$ reports an ECE of 0.021, comparable to that of $X^*_{h,TS}$ and $X^*_{h,VS}$ at 0.020. This result is somewhat surprising, as vector scaling and MCLLO recalibration utilize $2c$ and $2(c-1)$ parameters, respectively, whereas temperature scaling uses only a single parameter to recalibrate the confidence scores for all the categories. We would therefore not expect temperature scaling to calibrate as well as vector scaling or MCLLO recalibration. On the other hand, the LRT $p$-values indicate that $X^*_{h,MCLLO}$, $X^*_{h,VS}$, and $X^*_{h,EB}$ are well calibrated, while $X^*_{h,TS}$ is not.
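The ECE values discussed above can be illustrated with a short sketch. It assumes the common top-label definition of ECE with equal-width confidence bins, i.e., the bin-weighted average of |accuracy − confidence|. The implementation used for the reported numbers may differ in details such as bin edges. The names `top_label_ece` and `sqrt_bins` and the toy data are hypothetical, and the use of a ceiling in `sqrt_bins` is an assumption that happens to reproduce the 20 and 12 bins reported in Section 4.3.3 for n = 396 and n = 132.

```python
import math

def sqrt_bins(n):
    """Number of bins as ceil(sqrt(n)); the rounding rule is an assumption."""
    return math.ceil(math.sqrt(n))

def top_label_ece(probs, labels, n_bins):
    """Top-label ECE with equal-width bins on [0, 1].

    probs  : list of per-observation probability vectors
    labels : list of observed category indices
    """
    n = len(probs)
    conf_sum = [0.0] * n_bins
    correct = [0] * n_bins
    count = [0] * n_bins
    for p, y in zip(probs, labels):
        conf = max(p)                      # only the largest probability is used
        pred = p.index(conf)
        b = min(int(conf * n_bins), n_bins - 1)
        conf_sum[b] += conf
        correct[b] += int(pred == y)
        count[b] += 1
    ece = 0.0
    for b in range(n_bins):
        if count[b]:
            acc = correct[b] / count[b]
            avg_conf = conf_sum[b] / count[b]
            ece += (count[b] / n) * abs(acc - avg_conf)
    return ece

# Toy data: four observations, two categories.
probs = [[0.95, 0.05], [0.95, 0.05], [0.65, 0.35], [0.65, 0.35]]
labels = [0, 0, 0, 1]
ece_5_bins = top_label_ece(probs, labels, n_bins=5)
ece_2_bins = top_label_ece(probs, labels, n_bins=2)
```

On this toy input the two bin counts give different ECE values for the same predictions, which is one reason a metric that does not rely on binning is a useful complement.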
The LRT-based conclusion is more reasonable than the one based on ECE results, as MCLLO recalibration and vector scaling utilize more parameters than temperature scaling.

Figure 5 also shows reliability diagrams for the recalibrated confidence scores $X^*_{h,TS}$ [top right], $X^*_{h,VS}$ [bottom left], $X^*_{h,EB}$ [bottom middle], and $X^*_{h,Xu}$ [bottom right], along with their corresponding ECE and LRT $p$-value from Table 2. It is not surprising that MCLLO and vector scaling produce confidence scores that are similarly calibrated, as the two methods maximize a likelihood with respect to a similar number of parameters. Judging from the reliability diagrams alone, temperature scaling appears to achieve remarkable calibration at a low cost in complexity: the reliability diagram representing $X^*_{h,TS}$ follows the $x = y$ line and its ECE is comparable to that of more complex methods like MCLLO and vector scaling. However, the LRT $p$-values indicate that temperature scaling does not provide well-calibrated predictions. An examination of which parameter estimates underlying these $p$-values differ ($\delta_4$ and $\delta_6$) indicates that the respective categories are poorly handled by temperature scaling. Thus, the MCLLO approach shows that temperature scaling handles the dog and cat categories poorly, with those specific categories driving the temperature scaling results towards a lack of calibration. See the supplementary material for more details.

Table 2: Comparison of calibration metrics from applying recalibration techniques on CIFAR-10 confidence scores. Reported are the $p$-value from MCLLO's likelihood ratio test, the ECE score with $\sqrt{n}$ bins, accuracy, and the percentage of first-place labels that changed after recalibration.

| Calibration set | n | MCLLO p-value | ECE ($\sqrt{n}$ bins) | Accuracy | % label change |
|---|---|---|---|---|---|
| $X_t$ | 8000 | < 0.001 | 0.033 | 92.7% | – |
| $X_h$ | 2000 | < 0.001 | 0.035 | 93.0% | – |
| $X^*_{h,MCLLO}$ | 2000 | 0.133 | 0.021 | 93.1% | 1.95% |
| $X^*_{h,Xu}$ | 2000 | < 0.001 | 0.052 | 93.0% | 0% |
| $X^*_{h,EB}$ | 2000 | 0.337 | 0.025 | 93.2% | 0.8% |
| $X^*_{h,TS}$ | 2000 | 0.002 | 0.020 | 93.0% | 0% |
| $X^*_{h,VS}$ | 2000 | 0.142 | 0.020 | 93.1% | 1.8% |

4.3 Case Study: Obesity

4.3.1 Random forest as predictive model

We obtain probability predictions by fitting a random forest to a publicly available data set of 2,111 participants that examined 7 obesity classifications as a function of participant characteristics. We used a 75% training and 25% holdout split for this analysis, resulting in probability predictions $X$ for $n = 528$ individuals. More detail on the implementation of this random forest can be found in the supplementary material.

[Figure 5 image: six reliability-diagram panels, each plotting Accuracy against Confidence on axes from 0.0 to 1.0. Panel annotations: Uncalibrated (ECE 0.035, p < 0.001); MCLLO (ECE 0.021, p = 0.133); Temperature Scaling (ECE 0.020, p = 0.002); Vector Scaling (ECE 0.020, p = 0.142); Extension of Binning (ECE 0.025, p = 0.337); Xu et al. (ECE 0.052, p < 0.001).]

Figure 5: Reliability diagrams for the CIFAR holdout confidence scores before [top left] and after different methods of recalibration, where well-calibrated predictions lie along the $x = y$ line. The top row shows (left to right) the original uncalibrated holdout confidence scores $X_h$, MCLLO-recalibrated confidence scores $X^*_{h,MCLLO}$, and temperature scaled confidence scores $X^*_{h,TS}$. The bottom row shows (left to right) vector scaled confidence scores $X^*_{h,VS}$, confidence scores obtained through the extension of binning as described by Guo et al. (2017), denoted $X^*_{h,EB}$, and those obtained via the recalibration method by Xu et al. (2015), denoted $X^*_{h,Xu}$.
4.3.2 Recalibration of Obesity Case Study Probability Predictions

We recalibrate the probability predictions $X$ as follows. We split $X$ into an additional 75% training and 25% holdout split, resulting in $X_t$ and $X_h$ (with $n_t = 396$ and $n_h = 132$ observations, respectively). We then obtain the MCLLO MLEs, $\hat{\delta}$ and $\hat{\gamma}$, learned from the training set $X_t$, which are displayed in Table 3 with baseline category "Normal Weight." The standard errors for these estimates, calculated in the same way as those in Section 4.2.1, are listed in parentheses. Finally, we apply MCLLO recalibration with baseline category "Normal Weight" and the learned parameters to the holdout set $X_h$: in other words, we apply Equations (3) and (4) with the parameters in Table 3 to the holdout set to calculate recalibrated probabilities, which are stored in the matrix $X^*_{h,MCLLO}$.

Table 3: Maximum likelihood estimates $\hat{\delta}$ and $\hat{\gamma}$ for probability predictions and observed values for the obesity case study, with baseline category Normal Weight. The standard errors for these estimates are provided in parentheses.

Obesity Parameter Estimates that Maximize Likelihood: $X_t$

| | j = 1 | j = 2 | j = 3 | j = 4 | j = 5 | j = 6 |
|---|---|---|---|---|---|---|
| $\hat{\delta}_j$ | 0.527 (0.241) | 1.002 (0.406) | 2.714 (1.404) | 0.002 (0.001) | 1.167 (0.391) | 1.529 (0.524) |
| $\hat{\gamma}_j$ | 2.785 (0.399) | 2.211 (0.214) | 1.719 (0.175) | 4.698 (0.960) | 2.387 (0.247) | 2.020 (0.188) |

We similarly recalibrate the holdout probability predictions with the technique by Xu et al. (2015) and the extension of binning as described by Guo et al. (2017), learning parameter estimates on the training set and applying those estimates to the holdout set. This results in the recalibrated probability predictions $X^*_{h,Xu}$ and $X^*_{h,EB}$.
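The MCLLO recalibration step can be sketched as follows. Equations (3) and (4) are not reproduced in this section, so the sketch assumes the form implied by the activation vector in the proof of Lemma 2 in the Appendix: log(g_ij / g_ic) = log δ_j + γ_j log(x_ij / x_ic) for each non-baseline category j, with c the baseline category. This is an illustration under that assumption, not the authors' code. The function name `mcllo_recalibrate` and the toy inputs are hypothetical.

```python
import math

def mcllo_recalibrate(x, delta, gamma, baseline=-1):
    """Recalibrate one probability vector x (all entries > 0, summing to 1).

    delta, gamma : c - 1 shift and scale parameters, listed in the order of the
                   non-baseline categories.
    baseline     : index of the baseline category (default: the last one).
    """
    c = len(x)
    base = baseline % c
    others = [j for j in range(c) if j != base]
    # Linear log odds relative to the baseline: log(delta_j) + gamma_j * log(x_j / x_base).
    a = [math.log(d) + g * math.log(x[j] / x[base])
         for j, d, g in zip(others, delta, gamma)]
    # Softmax over the c - 1 log odds plus an implicit 0 for the baseline category.
    m = max(a + [0.0])
    exp_a = [math.exp(v - m) for v in a]
    exp_base = math.exp(-m)
    total = sum(exp_a) + exp_base
    g_out = [0.0] * c
    for j, e in zip(others, exp_a):
        g_out[j] = e / total
    g_out[base] = exp_base / total
    return g_out

# Toy 3-category prediction; the last category is the baseline.
x = [0.7, 0.2, 0.1]
g_identity = mcllo_recalibrate(x, delta=[1.0, 1.0], gamma=[1.0, 1.0])
g_shrunk = mcllo_recalibrate(x, delta=[1.0, 1.0], gamma=[0.7, 0.7])
```

With all δ and γ equal to 1 the input is returned unchanged, which is the identity-map property noted in Section 5. In this toy input, γ below 1 pulls the largest probability down. Because the map works on log ratios, entries of exactly zero would need separate handling, which the sketch does not attempt.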
Since the uncalibrated probability predictions are outputs from a random forest and pre-softmax logits are not attainable, temperature scaling and vector scaling do not apply, and we therefore cannot include recalibrated probability predictions from these methods in our analysis.

4.3.3 Results and Calibration Metrics of Obesity Probability Predictions

Figure 6 displays the reliability diagrams (Silva Filho et al. 2023), created with 10 bins, for $X_h$, $X^*_{h,MCLLO}$, $X^*_{h,EB}$, and $X^*_{h,Xu}$, along with their corresponding ECE and $p$-values from the MCLLO LRT. Visually, the recalibrated probability predictions follow the $x = y$ line more closely than $X_h$, which agrees with the displayed calibration metrics.

[Figure 6 image: four reliability-diagram panels, each plotting Accuracy against Confidence on axes from 0.0 to 1.0. Panel annotations: Uncalibrated (ECE 0.127, p = 0.014); MCLLO (ECE 0.073, p = 0.167); Extension of Binning (ECE 0.083, p = 0.068); Xu et al. (ECE 0.078, p = 0.014).]

Figure 6: Reliability diagrams for the obesity holdout confidence scores before [top left] and after different methods of recalibration, where well-calibrated predictions lie along the $x = y$ line. [Left] to [Right] displays diagrams for the uncalibrated holdout confidence scores $X_h$ and the recalibrated confidence scores $X^*_{h,MCLLO}$, $X^*_{h,EB}$, and $X^*_{h,Xu}$.

Table 4 lists the calibration metrics computed for the uncalibrated and recalibrated (via MCLLO and comparator methods) probability predictions. For all sets of probability predictions, we report ECE with 20 or 12 bins (for the training and holdout sets, respectively) and the MCLLO $p$-value. The MCLLO LRT concludes that the uncalibrated holdout probability predictions $X_h$ are not well calibrated under a traditional $\alpha = 0.05$ decision rule.
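To make the decision rule concrete, the sketch below converts an LRT statistic into a p-value. It assumes the usual chi-square reference distribution with 2(c − 1) degrees of freedom, which matches the 18 degrees of freedom reported for c = 10 in Section 4.2. The helper name `chi2_sf_even_df` is hypothetical. Because 2(c − 1) is always even, the chi-square survival function has a closed form and no statistics library is needed. The statistics used are the ones reported for the CIFAR-10 holdout set.

```python
import math

def chi2_sf_even_df(stat, df):
    """P(chi-square_df > stat) for a positive even df, via the closed form
    exp(-stat/2) * sum_{i=0}^{df/2 - 1} (stat/2)^i / i!."""
    if df <= 0 or df % 2 != 0:
        raise ValueError("closed form requires a positive even df")
    half = stat / 2.0
    term = 1.0      # (stat/2)^0 / 0!
    total = 1.0
    for i in range(1, df // 2):
        term *= half / i
        total += term
    return math.exp(-half) * total

c = 10                      # CIFAR-10 categories
df = 2 * (c - 1)            # 18 degrees of freedom
alpha = 0.05

p_recalibrated = chi2_sf_even_df(24.70, df)   # MCLLO-recalibrated holdout set
p_uncalibrated = chi2_sf_even_df(123.0, df)   # uncalibrated holdout set

reject_recalibrated = p_recalibrated < alpha
reject_uncalibrated = p_uncalibrated < alpha
```

Under these assumptions the first p-value agrees with the 0.133 reported in Section 4.2.2, so calibration is not rejected at α = 0.05, while the uncalibrated statistic leads to rejection.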
The extension of binning as described by Guo et al. (2017) and, as expected, MCLLO recalibration produce well-calibrated probability predictions according to the MCLLO LRT. MCLLO recalibration also results in the lowest ECE among the recalibration techniques. It is noteworthy that the MCLLO LRT can identify successful recalibration achieved by other methods. Our LRT approach can help researchers learn which recalibration strategy may be best in a given situation. Also, see the supplementary material for a discussion of the tradeoff between accuracy and calibration.

Table 4: Calibration metrics for the obesity case study. Probability predictions were obtained by applying a random forest to the obesity data set and recalibrated using MCLLO, the method of Xu et al., and an extension of binning described by Guo et al. Reported are the $p$-values from MCLLO's likelihood ratio test, the ECE score with $\sqrt{n}$ bins, accuracy, and the percentage of first-place predicted labels that changed after recalibration.

| Calibration set | n | MCLLO p-value | ECE ($\sqrt{n}$ bins) | Accuracy | % label change |
|---|---|---|---|---|---|
| $X_t$ | 396 | < 0.001 | 0.160 | 85.1% | – |
| $X_h$ | 132 | 0.014 | 0.127 | 78.8% | – |
| $X^*_{h,MCLLO}$ | 132 | 0.167 | 0.073 | 81.1% | 2.27% |
| $X^*_{h,EB}$ | 132 | 0.068 | 0.083 | 81.0% | 3.03% |
| $X^*_{h,Xu}$ | 132 | 0.014 | 0.078 | 81.1% | 3.0% |

5 Discussion and Conclusion

We have shown that the proposed MCLLO approach is a valuable contribution to the literature because it (i) directly tests the calibration of a single model and furnishes a recalibration strategy for faulty model predictions, (ii) does not require under-the-hood model access, e.g., accessing logit-scale predictions within the layers of a neural network, and (iii) provides output which is easy for human analysts to understand. This is important because reliable probability predictions in the multicategory realm are crucial for making effective decisions.
For example, classifying an image as a "plane" with a 40% probability prediction versus a 60% probability prediction may cause humans to make different decisions.

Though the general idea of hypothesis testing to identify well-calibrated probability predictions has been mentioned in the literature by Vaicenavicius et al. (2019), previous literature does not fully describe the methodology for conducting a statistical hypothesis test. In particular, previous work does not explicitly define a likelihood function, a null hypothesis equivalent to well-calibrated probability predictions, or a methodology to obtain a specific test statistic or the distribution needed to calculate a $p$-value. Additionally, Vaicenavicius et al. (2019) describe assessing calibration but do not offer a solution to recalibrate a set of probability predictions. In contrast, the proposed MCLLO approach is, to our knowledge, the first fully described and demonstrated method to test whether a set of probability predictions is plausibly well calibrated. Further, MCLLO MLEs can be used to recalibrate faulty predictions in the event that the corresponding test rejects calibration.

The MCLLO LRT has some specific advantages over other popular calibration metrics such as ECE. First, the LRT based on the test statistic in Equation (6) controls the type I error rate and gains power as sample size or departure from calibration grows, as shown in the simulation study in the supplementary material. Second, the interpretation of ECE is limited: though lower ECE scores signify better calibration, ECE alone cannot determine at any level of significance whether a set of probability predictions is well calibrated. The MCLLO LRT can directly test the calibration hypothesis.
Additionally, ECE does not take into account every probability prediction: it considers only the maximum probability prediction of each observation, while the MCLLO LRT utilizes the probability predictions for all the categories for each observation. Finally, ECE does not prescribe a strategy to recalibrate predictions, while MCLLO does. ECE also depends on a user-specified number of bins, and different choices of bin number can lead to different ECE scores. MCLLO recalibration does not require binning, thus removing one more subjective specification that may influence results. Despite these drawbacks, we note that ECE is a useful and popular metric, and we have shown (see supplementary material) that our MCLLO recalibration approach also improves ECE. The metrics can be used in concert to gain the advantages of each.

The MCLLO approach includes the identity map. If $\delta = \gamma = 1$, then $X = G$ and the original predictions are unchanged. That is, MCLLO recalibration can leave well-calibrated predictions alone. This feature is not present in some existing recalibration techniques. For example, the recalibration method by Xu et al. (2015) described in Section 4 does not include the identity map. Thus, even well-calibrated predictions will be adjusted at least slightly by the Xu et al. (2015) method.

MCLLO recalibration performs comparably to, and sometimes better than, temperature and vector scaling. We have also demonstrated in Section 4.3 and the supplementary material that it applies to a broader set of applications than temperature and vector scaling, as it does not require under-the-hood access to the logits of a model that generates probability predictions. For example, MCLLO recalibration can be used for random forests where temperature and vector scaling cannot.
This is important, as our case study shows that random forests are not guaranteed to produce well-calibrated probability predictions (Table 4). Thus the case studies show that if a predictive model does not produce well-calibrated probability predictions, MCLLO recalibration can map the output predictions to well-calibrated probability predictions.

We close by addressing a limitation of MCLLO recalibration. In other multicategory models, such as multinomial logistic regression (Agresti 2006), baseline category choice is completely arbitrary, meaning that the likelihood, MLEs, recalibrated probabilities, and testing results are invariant to the choice of baseline category. Perhaps surprisingly, this property does not extend to MCLLO recalibration when the alternative hypothesis $H_1$ is true. The shift parameter $\delta$ of the MCLLO formula (Equation (1)) under one baseline category choice cannot be directly represented as a function of the parameters under another baseline category choice. Thus, it is possible that specification of the baseline category could affect results. Empirically, we have found these effects to be very small (see supplementary material).

6 Disclosure statement

The authors declare no conflict of interest exists.

7 Data Availability Statement

Data have been made available at the following URL: This will be established upon article acceptance.

References

Aaron E. Maxwell, T. A. W. & Fang, F. (2018), ‘Implementation of machine-learning classification in remote sensing: an applied review’, International Journal of Remote Sensing 39(9), 2784–2817.

Agresti, A. (2006), An introduction to categorical data analysis, in ‘Wiley Series in Probability and Statistics’, John Wiley & Sons, Inc., chapter 6. First published: 7 August 2006, Online ISBN: 9780470114759, Copyright © 2007 John Wiley & Sons, Inc.

Barlow, R. E. & Brunk, H. D. (1972), ‘The isotonic regression problem and its dual’, J. Am. Stat. Assoc. 67(337), 140.

Fagerland, M. W., Hosmer, D. W. & Bofin, A. M. (2008), ‘Multinomial goodness-of-fit tests for logistic regression models’, Stat. Med. 27(21), 4238–4253.

Ganapathiraju, A., Hamaker, J. & Picone, J. (2004), ‘Applications of support vector machines to speech recognition’, IEEE Transactions on Signal Processing 52(8), 2348–2355.

Goetz, J., Brenning, A., Petschko, H. & Leopold, P. (2015), ‘Evaluating machine learning and statistical prediction techniques for landslide susceptibility modeling’, Computers & Geosciences 81, 1–11.

Guo, C., Pleiss, G., Sun, Y. & Weinberger, K. Q. (2017), ‘On calibration of modern neural networks’.

Hameed, N., Shabut, A. M., Ghosh, M. K. & Hossain, M. (2020), ‘Multi-class multi-level classification algorithm for skin lesions classification using machine learning techniques’, Expert Systems with Applications 141, 112961.

Hinton, G., Vinyals, O. & Dean, J. (2015), ‘Distilling the knowledge in a neural network’.

Karalas, K., Tsagkatakis, G., Zervakis, M. & Tsakalides, P. (2016), ‘Land classification using remotely sensed data: Going multilabel’, IEEE Transactions on Geoscience and Remote Sensing 54(6), 3548–3563.

Krizhevsky, A. (2009), Learning multiple layers of features from tiny images.

Kull, M., Filho, T. S. & Flach, P. (2017), Beta calibration: a well-founded and easily implemented improvement on logistic calibration for binary classifiers, in A. Singh & J. Zhu, eds, ‘Proceedings of the 20th International Conference on Artificial Intelligence and Statistics’, Vol. 54 of Proceedings of Machine Learning Research, PMLR, pp. 623–631.

Kumar, A., Sarawagi, S. & Jain, U. (2018), Trainable calibration measures for neural networks from kernel mean embeddings, in J. Dy & A. Krause, eds, ‘Proceedings of the 35th International Conference on Machine Learning’, Vol. 80 of Proceedings of Machine Learning Research, PMLR, pp. 2805–2814.

Kuppers, F., Kronenberger, J., Shantia, A. & Haselhoff, A. (2020), Multivariate confidence calibration for object detection, in ‘Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) Workshops’.

Le Coz, A., Herbin, S. & Adjed, F. (2024), Confidence calibration of classifiers with many classes, in A. Globerson, L. Mackey, D. Belgrave, A. Fan, U. Paquet, J. Tomczak & C. Zhang, eds, ‘Advances in Neural Information Processing Systems’, Vol. 37, Curran Associates, Inc., pp. 77686–77725.

Machado, G. R., Silva, E. & Goldschmidt, R. R. (2021), ‘Adversarial machine learning in image classification: A survey toward the defender's perspective’, ACM Comput. Surv. 55(1).

Mi, W., Li, J., Guo, Y., Ren, X., Liang, Z., Zhang, T. & Zou, H. (2021), ‘Deep learning-based multi-class classification of breast digital pathology images’, Cancer Management and Research 13, 4605–4617. PMID: 34140807.

Naeini, M. P. & Cooper, G. F. (2016), Binary classifier calibration using an ensemble of near isotonic regression models, in ‘2016 IEEE 16th International Conference on Data Mining (ICDM)’, IEEE.

Niculescu-Mizil, A. & Caruana, R. (2005), Predicting good probabilities with supervised learning, in ‘Proceedings of the 22nd International Conference on Machine Learning’, ICML '05, Association for Computing Machinery, New York, NY, USA, pp. 625–632.

Nixon, J., Dusenberry, M. W., Zhang, L., Jerfel, G. & Tran, D. (2019), Measuring calibration in deep learning, in ‘Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) Workshops’, pp. 38–41.

Nocedal, J. & Wright, S. (2006), Numerical optimization, Springer series in operations research and financial engineering, 2nd edn, Springer, New York, NY.

Pakdaman Naeini, M., Cooper, G. & Hauskrecht, M. (2015a), ‘Obtaining well calibrated probabilities using bayesian binning’, Proc. Conf. AAAI Artif. Intell. 29(1).

Pakdaman Naeini, M., Cooper, G. & Hauskrecht, M. (2015b), ‘Obtaining well calibrated probabilities using bayesian binning’, Proceedings of the AAAI Conference on Artificial Intelligence 29(1).

Panca, V. & Rustam, Z. (2017), Application of machine learning on brain cancer multiclass classification, Author(s).

Platt, J. et al. (1999), ‘Probabilistic outputs for support vector machines and comparisons to regularized likelihood methods’, Advances in large margin classifiers 10(3), 61–74.

Posocco, N. & Bonnefoy, A. (2021), Estimating expected calibration errors, in I. Farkaš, P. Masulli, S. Otte & S. Wermter, eds, ‘Artificial Neural Networks and Machine Learning – ICANN 2021’, Springer International Publishing, Cham, pp. 139–150.

Rajaraman, S., Ganesan, P. & Antani, S. (2022), ‘Deep learning model calibration for improving performance in class-imbalanced medical image classification tasks’, PLOS ONE 17(1), e0262838.

Ramaswamy, S., Tamayo, P., Rifkin, R., Mukherjee, S., Yeang, C. H., Angelo, M., Ladd, C., Reich, M., Latulippe, E., Mesirov, J. P., Poggio, T., Gerald, W., Loda, M., Lander, E. S. & Golub, T. R. (2001), ‘Multiclass cancer diagnosis using tumor gene expression signatures’, 98(26), 15149–15154.

Reinke, A., Tizabi, M. D., Baumgartner, M., Eisenmann, M., Heckmann-Nötzel, D., Kavur, A., Rädsch, T., Sudre, C., Acion, L., Antonelli, M., Arbel, T., Bakas, S., Benis, A., Blaschko, M., Büttner, F., Cardoso, M. J., Cheplygina, V., Chen, J., Christodoulou, E. & Maier-Hein, L. (2023), ‘Understanding metric-related pitfalls in image analysis validation’.

Rychlik, M. (2021), ‘A proof of convergence of multi-class logistic regression network’.

Sambyal, A. S., Niyaz, U., Krishnan, N. C. & Bathula, D. R. (2023), ‘Understanding calibration of deep neural networks for medical image classification’, Computer Methods and Programs in Biomedicine 242, 107816.

Shiuan Wan, T.-C. L. & Chou, T.-Y. (2012), ‘A landslide expert system: image classification through integration of data mining approaches for multi-category analysis’, International Journal of Geographical Information Science 26(4), 747–770.

Silva Filho, T., Song, H. & Perello-Nieto, M. et al. (2023), ‘Classifier calibration: A survey on how to assess and improve predicted class probabilities’, Machine Learning 112, 3211–3260.

Simonyan, K. & Zisserman, A. (2014), ‘Very deep convolutional networks for large-scale image recognition’, CoRR abs/1409.1556.

Vaicenavicius, J., Widmann, D., Andersson, C., Lindsten, F., Roll, J. & Schön, T. (2019), Evaluating model calibration in classification, in K. Chaudhuri & M. Sugiyama, eds, ‘Proceedings of the Twenty-Second International Conference on Artificial Intelligence and Statistics’, Vol. 89 of Proceedings of Machine Learning Research, PMLR, pp. 3459–3467.

Widmann, D., Lindsten, F. & Zachariah, D. (2019), Calibration tests in multi-class classification: A unifying framework, in H. Wallach, H. Larochelle, A. Beygelzimer, F. d'Alché-Buc, E. Fox & R. Garnett, eds, ‘Advances in Neural Information Processing Systems’, Vol. 32, Curran Associates, Inc.

Xu, P., Davoine, F. & Denœux, T. (2015), Evidential multinomial logistic regression for multiclass classifier calibration, in ‘2015 18th International Conference on Information Fusion (Fusion)’, pp. 1106–1112.

Yeung, K. Y. & Bumgarner, R. E. (2003), ‘Multiclass classification of microarray data with repeated measurements: application to cancer’, Genome Biol. 4(12), R83.

Zadrozny, B. & Elkan, C. (2001), Obtaining calibrated probability estimates from decision trees and naive bayesian classifiers, in ‘Icml’, Vol. 1, pp. 609–616.

8 Appendix

8.1 Proofs of Lemmas and Theorems

Lemma 2. The negative log of the MCLLO likelihood in Equation (5) is convex in $\tau = \log \delta$ and $\gamma$.

Proof. Choose an arbitrary $i \in \{1, \ldots, n\}$.
Define $W = \begin{bmatrix} \tau & \mathrm{Diag}(\gamma) \end{bmatrix}$ and

$$x = \begin{bmatrix} 1 & \log\dfrac{x_{i1}}{x_{ic}} & \cdots & \log\dfrac{x_{i,c-1}}{x_{ic}} \end{bmatrix}^{\top},$$

resulting in the activation vector

$$a = Wx = \begin{bmatrix} \log\dfrac{g_{i1}}{g_{ic}} & \cdots & \log\dfrac{g_{i,c-1}}{g_{ic}} \end{bmatrix}^{\top}.$$

Then

$$y = \mathrm{softmax}(a) = \begin{bmatrix} \dfrac{g_{i1}}{1 - g_{ic}} & \cdots & \dfrac{g_{i,c-1}}{1 - g_{ic}} \end{bmatrix}^{\top}$$

is a parallel from Rychlik (2021) to MCLLO recalibration. By Corollary 3 in Rychlik (2021), the negative log of the MCLLO likelihood is convex in $\tau$ and $\gamma$.

Theorem 2. The maximum likelihood estimators $\hat{\theta}_n = (\hat{\delta}_n, \hat{\gamma}_n)$ for the log of the MCLLO likelihood shown in Equation (5), $\ell(\theta)$, are asymptotically consistent. In other words,

$$\hat{\theta}_n \xrightarrow{p} \theta_0,$$

where $\hat{\theta}_n = (\hat{\delta}_n, \hat{\gamma}_n)$ is the MLE based on a sample of size $n$, and $\theta_0 = (\delta_0, \gamma_0)$ is the true parameter value.

Proof. We verify two assumptions required for this proof. First, the negative log of the MCLLO likelihood function is

$$-\ell(\theta) = -\sum_{j=1}^{c}\sum_{i=1}^{n} y_{ij}\log\delta_j - \sum_{j=1}^{c}\sum_{i=1}^{n} y_{ij}\gamma_j\log x_{ij} - \sum_{j=1}^{c}\sum_{i=1}^{n} y_{ij}\left(\sum_{z=1}^{c}\gamma_z - \gamma_j\right)\log x_{ic} \tag{7}$$

$$\qquad + \sum_{i=1}^{n}\sum_{j=1}^{c} y_{ij}\log\left(\sum_{m=1}^{c}\delta_m\, x_{im}^{\gamma_m}\, x_{ic}^{\sum_{z=1}^{c}\gamma_z - \gamma_m}\right), \tag{8}$$

and therefore is twice continuously differentiable. Second, the expected value of the score function (i.e., the gradient of the negative log-likelihood function) at the true parameter value is zero:

$$E\left[-\frac{\partial \ell(\theta_0)}{\partial\theta}\right] = 0.$$

This is a property of the likelihood function as an objective function that has a minimum value.

By definition of a minimum, which is numerically approximated by the Broyden–Fletcher–Goldfarb–Shanno (BFGS) algorithm (Nocedal & Wright 2006), the MLE $\hat{\theta}_n$ satisfies the first-order condition:

$$-\frac{\partial \ell(\hat{\theta}_n)}{\partial\theta} = 0.$$

Using a Taylor series expansion of the score function around the true parameter value $\theta_0$ and evaluating it at the MLE $\hat{\theta}_n$,

$$0 = -\frac{\partial \ell(\hat{\theta}_n)}{\partial\theta} = -\frac{\partial \ell(\theta_0)}{\partial\theta} - \frac{\partial^2 \ell(\theta^*)}{\partial\theta\,\partial\theta^{T}}\,(\hat{\theta}_n - \theta_0),$$

where $\theta^*$ is a point between $\hat{\theta}_n$ and $\theta_0$, and $\frac{\partial^2 \ell(\theta^*)}{\partial\theta\,\partial\theta^{T}}$ is a matrix with $ij$th entry $\frac{\partial^2 \ell(\theta^*)}{\partial\theta_i\,\partial\theta_j}$.

Rearrange the terms and multiply both sides by $\sqrt{n}$. If the Hessian matrix $-\frac{\partial^2 \ell(\theta)}{\partial\theta\,\partial\theta^{T}}$ of $-\ell(\theta)$ is positive definite, use the inverse. Otherwise, by Lemma 2 the Hessian matrix is positive semi-definite and we use the generalized inverse to obtain

$$\sqrt{n}\,(\hat{\theta}_n - \theta_0) = \left(-\frac{1}{n}\frac{\partial^2 \ell(\theta)}{\partial\theta\,\partial\theta^{T}}\right)^{-1}\left(\frac{1}{\sqrt{n}}\frac{\partial \ell(\theta_0)}{\partial\theta}\right).$$

By Lemma 2, the Hessian matrix $-\frac{\partial^2 \ell(\theta)}{\partial\theta\,\partial\theta^{T}}$ is positive semi-definite, which implies that all of its eigenvalues are non-negative. This justifies that $-\frac{1}{n}\frac{\partial^2 \ell(\theta)}{\partial\theta\,\partial\theta^{T}}$ converges to the Fisher information matrix $\mathcal{I}(\theta_0)$, as the non-negative values along the diagonal of this matrix are valid estimates for the variances of the parameters. Thus, by the Law of Large Numbers (LLN),

$$\frac{1}{n}\frac{\partial^2 \ell(\theta)}{\partial\theta\,\partial\theta^{T}} \xrightarrow{p} -\mathcal{I}(\theta_0),$$

and, by the Central Limit Theorem (CLT),

$$-\frac{1}{\sqrt{n}}\frac{\partial \ell(\theta_0)}{\partial\theta} \xrightarrow{d} N(0, \mathcal{I}(\theta_0)).$$

Applying Slutsky's theorem,

$$-\sqrt{n}\,(\hat{\theta}_n - \theta_0) \xrightarrow{d} N(0, \mathcal{I}^{-1}(\theta_0)),$$

which implies that $\hat{\theta}_n \xrightarrow{p} \theta_0$.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

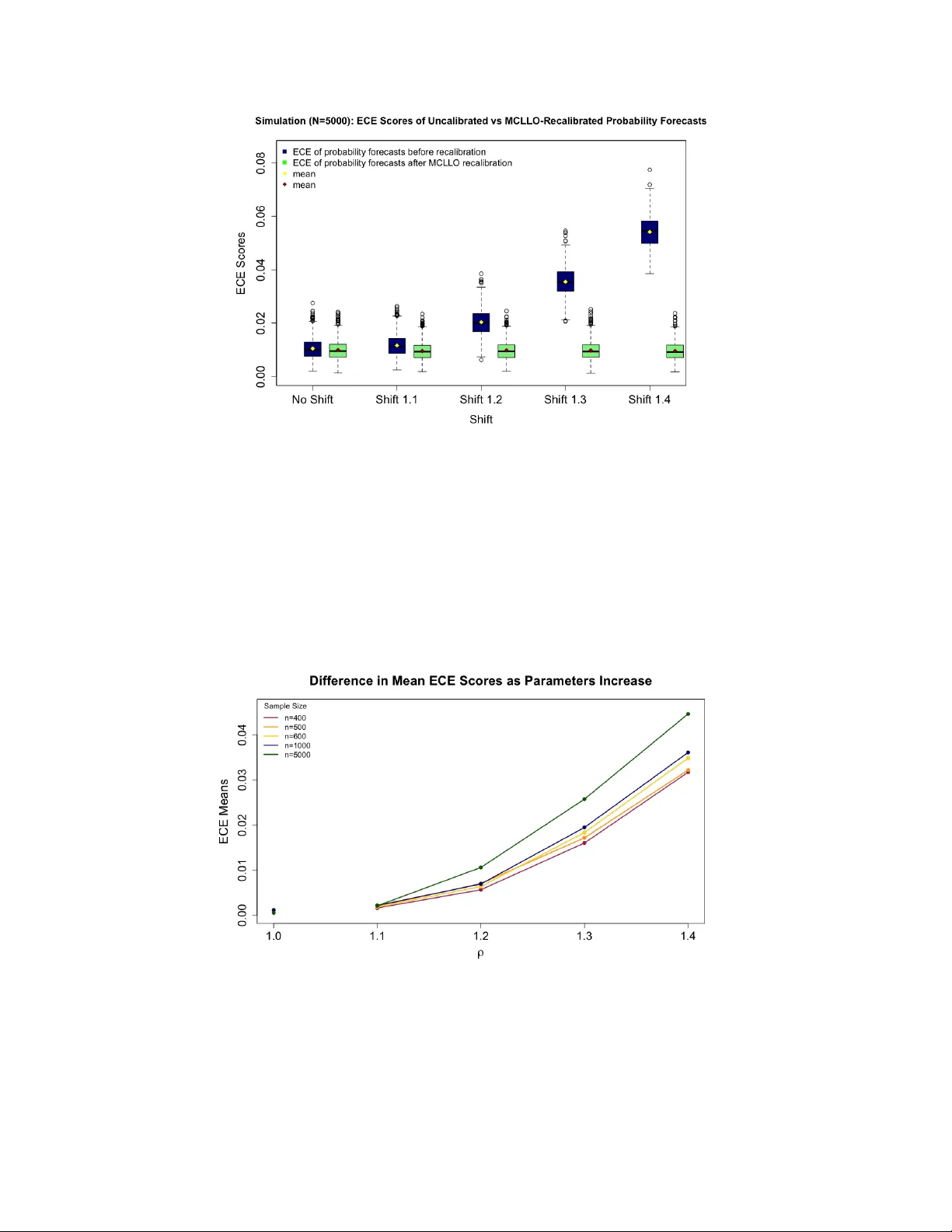

Leave a Comment