PRISM: Parallel Reward Integration with Symmetry for MORL

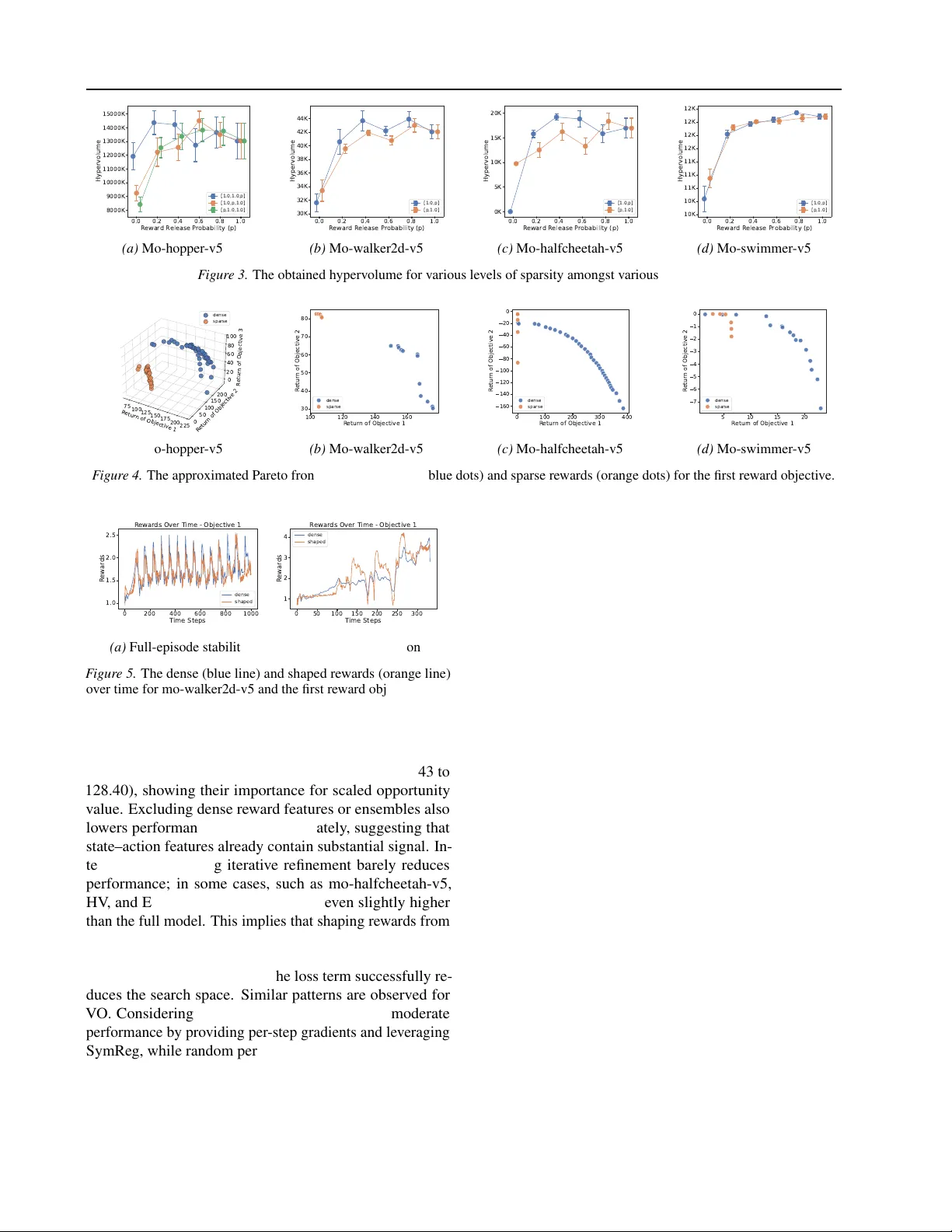

This work studies heterogeneous Multi-Objective Reinforcement Learning (MORL), where objectives can differ sharply in temporal frequency. Such heterogeneity allows dense objectives to dominate learning, while sparse long-horizon rewards receive weak …

Authors: Finn van der Knaap, Kejiang Qian, Zheng Xu