LERD: Latent Event-Relational Dynamics for Neurodegenerative Classification

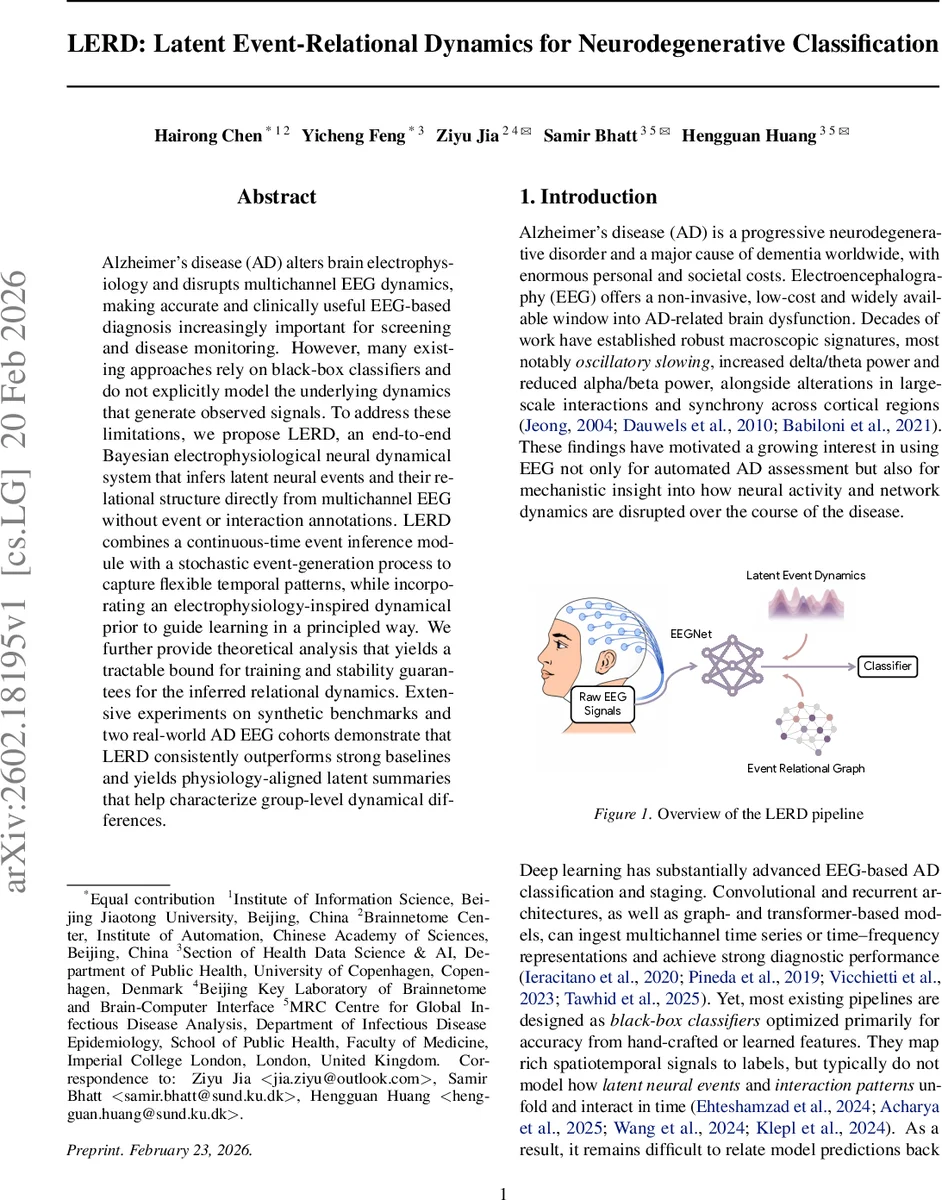

Alzheimer’s disease (AD) alters brain electrophysiology and disrupts multichannel EEG dynamics, making accurate and clinically useful EEG-based diagnosis increasingly important for screening and disease monitoring. However, many existing approaches rely on black-box classifiers and do not explicitly model the underlying dynamics that generate observed signals. To address these limitations, we propose LERD, an end-to-end Bayesian electrophysiological neural dynamical system that infers latent neural events and their relational structure directly from multichannel EEG without event or interaction annotations. LERD combines a continuous-time event inference module with a stochastic event-generation process to capture flexible temporal patterns, while incorporating an electrophysiology-inspired dynamical prior to guide learning in a principled way. We further provide theoretical analysis that yields a tractable bound for training and stability guarantees for the inferred relational dynamics. Extensive experiments on synthetic benchmarks and two real-world AD EEG cohorts demonstrate that LERD consistently outperforms strong baselines and yields physiology-aligned latent summaries that help characterize group-level dynamical differences.

💡 Research Summary


The paper introduces LERD (Latent Event‑Relational Dynamics), a novel Bayesian neural dynamical system designed to extract and model latent neural events and their directed interactions directly from multichannel scalp EEG, with the goal of improving Alzheimer’s disease (AD) classification and providing mechanistic insight. Traditional EEG‑based AD classifiers rely on hand‑crafted spectral or connectivity features or on black‑box deep networks that map raw signals to diagnostic labels without explicating the underlying generative dynamics. LERD addresses this gap by jointly learning (i) per‑channel latent event time distributions, (ii) a stochastic process governing inter‑event intervals, and (iii) a conditional event‑relational graph (ERG) that captures how events in one channel influence the timing of events in another.

Core Architecture

- Event Posterior Differential Equation (EPDE) – For each channel, EPDE solves a continuous‑time differential equation whose solution yields a posterior distribution over event times p_c(t). The EPDE is parameterized by a neural network that conditions on the observed EEG trace, thereby providing a differentiable estimate of when latent “spikes” are likely to occur.

- Mean‑Evolving Lognormal Process (MELP) – The EPDE’s posterior means serve as parameters for a log‑normal mixture that samples inter‑event intervals τ_{c,k}. This formulation guarantees positivity, accommodates multimodality, and captures the heavy‑tailed timing variability typical of neural firing.

- dLIF Prior (differentiable Leaky‑Integrate‑and‑Fire) – To embed electrophysiological realism, each channel’s latent events are regularized by a dLIF prior. The membrane potential obeys du/dt = b(t) – u(t) with b(t) > 1, leading to an instantaneous firing rate r(t) = [−log(1 − 1/b(t))]^{−1}, which is the reciprocal of the time the potential needs to rise from u = 0 to a unit threshold under constant drive. This rate defines a hazard function for a renewal process, encouraging biologically plausible firing rates, refractory periods, and frequency bands. Because the spike function is non‑differentiable, the model penalizes the squared difference between a differentiable rate proxy extracted from EPDE and the dLIF‑derived rate (R_LIF term).

- Event‑Relational Graph (ERG) – Cross‑channel event lags Δt_{ij}=t_i−t_j are passed through a smooth, STDP‑inspired mapping φ_η(·) to produce time‑varying directed edge weights A_{ij}(t). The ERG thus encodes a probabilistic, causal influence matrix that evolves with the inferred event stream. A weak regularizer (R_ERG) nudges the graph toward observable EEG statistics (e.g., correlation) without dictating its fine structure.
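To make the last three components more concrete, the following is a minimal standard-library Python sketch, not the authors' implementation. It assumes the dLIF rate is the reciprocal of the time to reach a unit threshold from a reset at u = 0, checks that closed form against a direct Euler simulation of du/dt = b − u, draws inter-event intervals from a two-component log-normal mixture, and turns cross-channel lags into directed weights. The function names, the mixture parameters, and the Gaussian-windowed sigmoid used in place of φ_η are all hypothetical choices made for illustration; the summary does not specify them.

```python
import math
import random


def lif_rate(b, threshold=1.0):
    """Firing rate of du/dt = b - u with reset to u = 0 and constant drive b."""
    if b <= threshold:
        raise ValueError("drive must exceed the threshold, otherwise u never fires")
    # u(t) = b * (1 - exp(-t)) reaches the threshold at T = -log(1 - threshold / b).
    time_to_threshold = -math.log(1.0 - threshold / b)
    return 1.0 / time_to_threshold


def simulate_lif_period(b, threshold=1.0, dt=1e-4):
    """Euler-integrate du/dt = b - u from u = 0 and return the first crossing time."""
    u, t = 0.0, 0.0
    while u < threshold:
        u += dt * (b - u)
        t += dt
    return t


def sample_intervals(n, weights, mus, sigmas, rng):
    """Draw n strictly positive inter-event intervals from a log-normal mixture."""
    intervals = []
    for _ in range(n):
        k = rng.choices(range(len(weights)), weights=weights, k=1)[0]
        intervals.append(rng.lognormvariate(mus[k], sigmas[k]))
    return intervals


def stdp_kernel(lag, tau=0.05, sharpness=0.01):
    """Hypothetical smooth STDP-like map: large for small positive lags, near 0 otherwise."""
    gate = 0.5 * (1.0 + math.tanh(lag / (2.0 * sharpness)))  # numerically stable sigmoid
    window = math.exp(-(lag ** 2) / (2.0 * tau ** 2))         # discounts distant events
    return gate * window


def event_relational_graph(event_times, tau=0.05, sharpness=0.01):
    """A[i][j] averages the kernel over all lags t_i - t_j (assumed reading: j drives i)."""
    n = len(event_times)
    A = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            if i == j or not event_times[i] or not event_times[j]:
                continue
            values = [stdp_kernel(ti - tj, tau, sharpness)
                      for ti in event_times[i] for tj in event_times[j]]
            A[i][j] = sum(values) / len(values)
    return A


# Closed-form rate versus simulated period for a constant drive b = 2.
print("rate:", lif_rate(2.0), "simulated period:", simulate_lif_period(2.0))

# Mixture of a fast (~0.1 s) and a slow (~0.5 s) interval mode; values are made up.
rng = random.Random(0)
intervals = sample_intervals(5, [0.7, 0.3], [math.log(0.1), math.log(0.5)], [0.2, 0.4], rng)
print("intervals:", intervals)

# Channel 1 repeats channel 0 with a 20 ms delay, so the 0 -> 1 edge should dominate.
lead = [0.1, 0.4, 0.7, 1.0]
follow = [t + 0.02 for t in lead]
A = event_relational_graph([lead, follow])
print("A[1][0] =", A[1][0], "A[0][1] =", A[0][1])
```

In this toy setup the edge from the leading channel to the delayed one comes out larger than the reverse edge, which is the asymmetry an STDP-style mapping is meant to capture. In LERD itself these quantities would be produced by learned, time-varying modules rather than fixed constants.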

Learning Objective

LERD is trained end‑to‑end via variational inference, minimizing a negative ELBO‑style loss:

J = Σ_n
