Box Thirding: Anytime Best Arm Identification under Insufficient Sampling

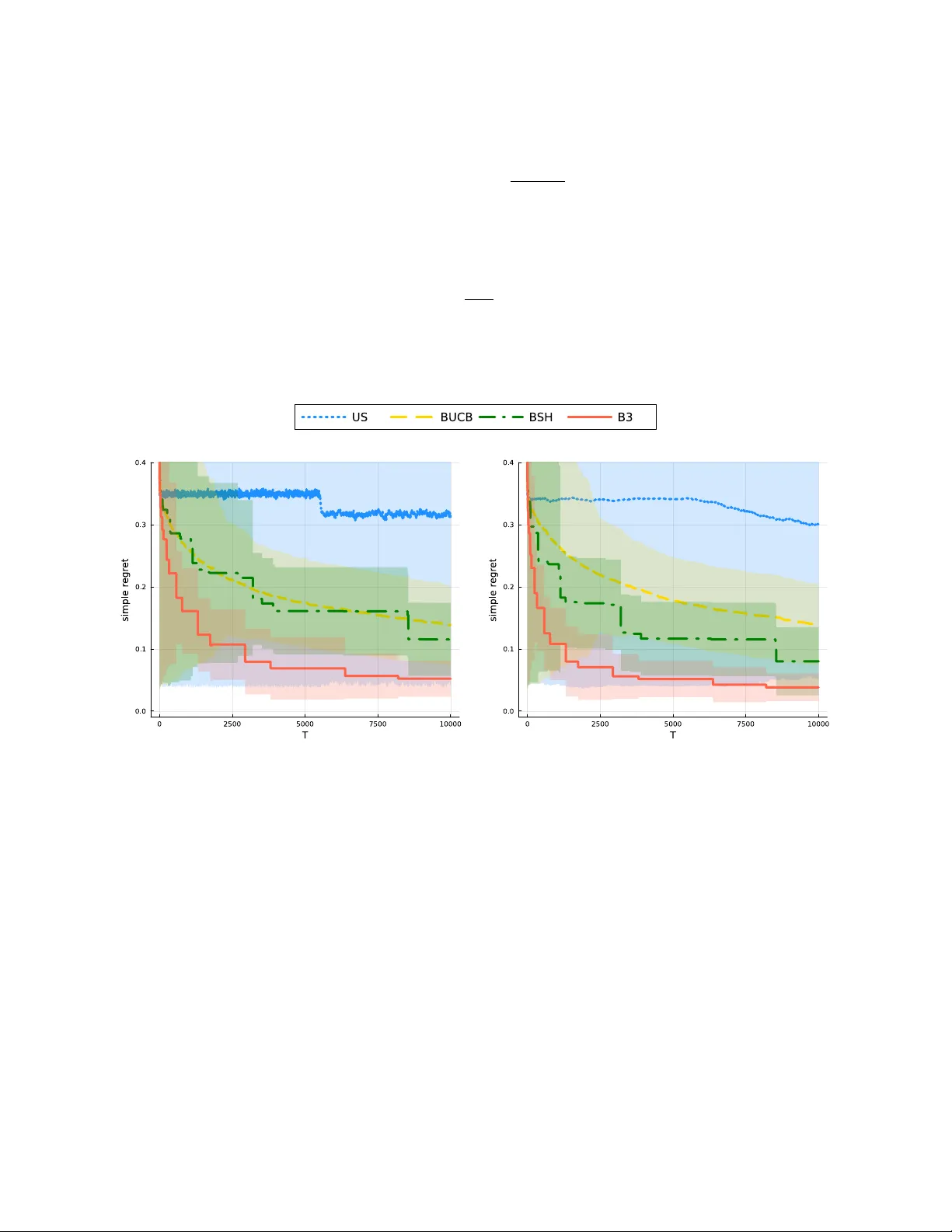

We introduce Box Thirding (B3), a flexible and efficient algorithm for Best Arm Identification (BAI) under fixed-budget constraints. It is designed for both anytime BAI and settings where the number of arms N is too large for exhaustive …

Authors: Seohwa Hwang, Junyong Park