OODBench: Out-of-Distribution Benchmark for Large Vision-Language Models

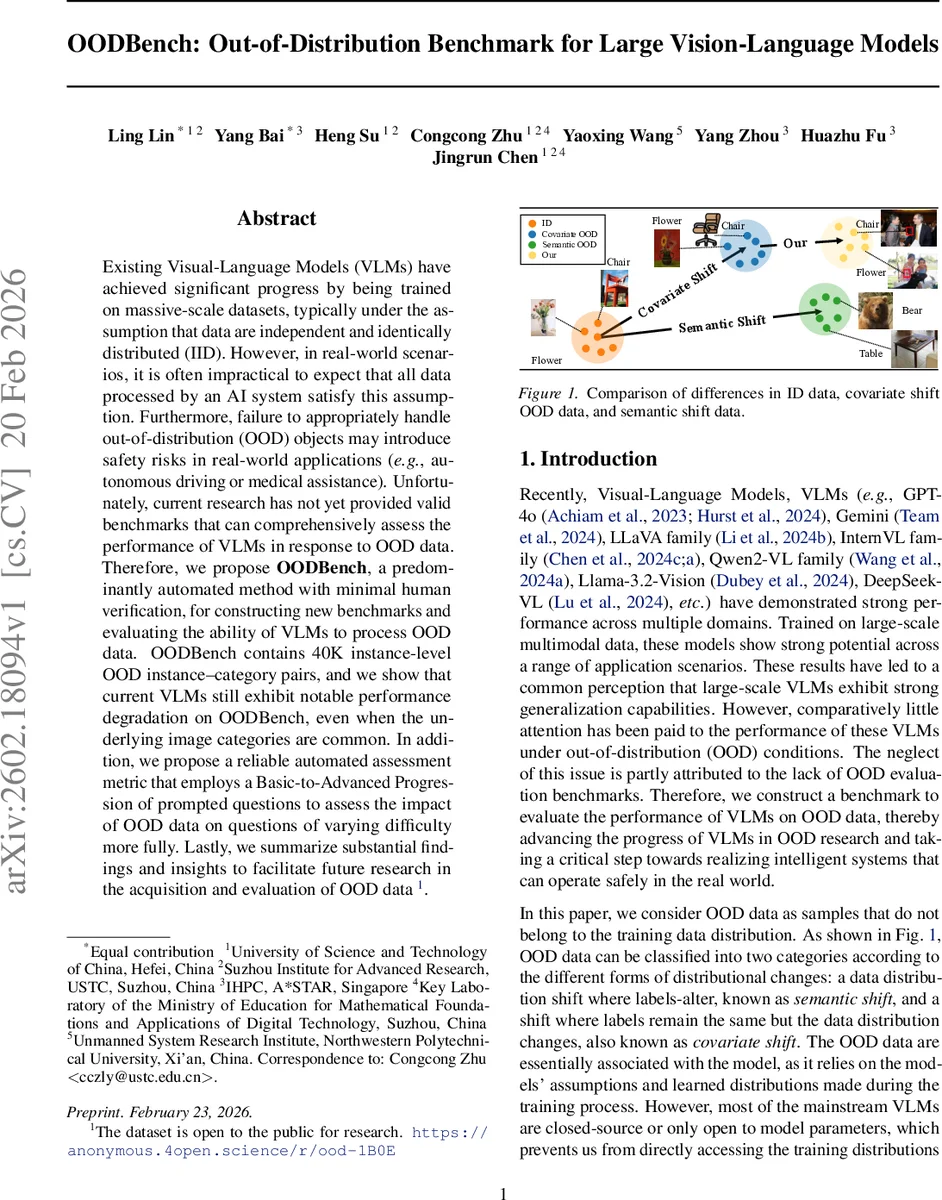

Existing Visual-Language Models (VLMs) have achieved significant progress by being trained on massive-scale datasets, typically under the assumption that data are independent and identically distributed (IID). However, in real-world scenarios, it is often impractical to expect that all data processed by an AI system satisfy this assumption. Furthermore, failure to appropriately handle out-of-distribution (OOD) objects may introduce safety risks in real-world applications (e.g., autonomous driving or medical assistance). Unfortunately, current research has not yet provided valid benchmarks that can comprehensively assess the performance of VLMs in response to OOD data. Therefore, we propose OODBench, a predominantly automated method with minimal human verification, for constructing new benchmarks and evaluating the ability of VLMs to process OOD data. OODBench contains 40K instance-level OOD instance-category pairs, and we show that current VLMs still exhibit notable performance degradation on OODBench, even when the underlying image categories are common. In addition, we propose a reliable automated assessment metric that employs a Basic-to-Advanced Progression of prompted questions to assess the impact of OOD data on questions of varying difficulty more fully. Lastly, we summarize substantial findings and insights to facilitate future research in the acquisition and evaluation of OOD data.

💡 Research Summary

The paper introduces OODBench, a large‑scale benchmark designed to evaluate the out‑of‑distribution (OOD) robustness of modern vision‑language models (VLMs). While recent VLMs such as GPT‑4o, Gemini, LLaVA, InternVL, Qwen2‑VL, DeepSeek‑VL, and others have demonstrated impressive performance on standard IID benchmarks, their behavior under realistic distribution shifts remains under‑explored. Existing OOD benchmarks focus mainly on semantic shift (new classes) and are ill‑suited for VLMs that have already been trained on massive, diverse image‑text corpora covering most common categories. Consequently, the authors argue that covariate shift—where the label space stays the same but the visual appearance of objects changes—is a more relevant failure mode for VLMs in safety‑critical domains such as autonomous driving and medical assistance.

To construct OODBench, the authors start from a publicly available pool of ~77 k yes/no image‑question pairs drawn from natural‑scene datasets (COCO, LVIS) and autonomous‑driving datasets (nuScenes, Cityscapes). They employ two off‑the‑shelf VLMs, CLIP and BLIP‑2, as generalized OOD detectors. For each image, the detectors compute logits for all candidate category labels; a “purify” operation eliminates cross‑label interference by setting logits of non‑target labels to negative infinity, thereby obtaining independent match probabilities for each label. An image is flagged as OOD if (i) a non‑present label receives a higher probability than any true label, or (ii) the probability of the true label falls below a predefined threshold T. Samples identified by both detectors are labeled OOD‑Hard, while those identified by only one are OOD‑Simple. A lightweight human spot‑check validates the automated labeling, ensuring high quality with minimal labor.

The final benchmark comprises 40 k yes/no instances, split roughly 22 k OOD‑Simple and 18 k OOD‑Hard, covering both “Simple” covariate shifts (e.g., lighting, background changes) and “Hard” shifts (e.g., anomalous object forms, non‑main objects). To assess model performance across difficulty levels, the authors propose the Basic‑Advanced Process Metric. Questions are organized into three tiers: Basic (direct factual retrieval), Advanced‑1 (quantity perception and simple reasoning), and Advanced‑2 (complex logical inference). This progression allows a fine‑grained view of how OOD data affect different reasoning capabilities.

Extensive experiments on eight state‑of‑the‑art VLMs reveal substantial degradation under OOD conditions. On OOD‑Hard samples, average accuracy drops by 20‑35 % compared with in‑distribution performance, with the most pronounced decline observed on Advanced‑2 questions where error rates can exceed 50 %. Models also struggle with subtle covariate shifts such as altered viewpoints, occlusions, or color variations, even though the underlying categories remain common. The results demonstrate that current VLMs, despite their large scale, lack robust generalization to covariate shifts that are likely to appear in real deployments.

The paper discusses several implications. First, the automated OOD detection pipeline, combined with minimal human verification, dramatically reduces the cost of building large OOD datasets while preserving label fidelity. Second, the benchmark highlights a gap between VLMs’ apparent IID generalization and their safety‑critical OOD reliability. Third, the authors note that the OOD‑Hard/‑Simple split depends on the chosen detectors; however, supplementary experiments with alternative classifiers show comparable splits, suggesting the method’s robustness.

Limitations include the focus on binary yes/no questions, which may not capture the full spectrum of multimodal reasoning tasks, and the reliance on CLIP/BLIP‑2 for OOD detection, which could introduce detector‑specific bias. Future work is suggested in three directions: (1) data augmentation strategies tailored to OOD‑Hard cases, (2) development of dedicated multimodal OOD detectors that jointly consider visual and textual cues, and (3) integration of OOD awareness into VLM inference pipelines, enabling safe‑fail or uncertainty‑aware responses in high‑risk applications.

In summary, OODBench provides the first comprehensive, large‑scale benchmark for evaluating covariate‑shift robustness of vision‑language models, exposing critical weaknesses in current systems and offering a concrete platform for the community to develop safer, more reliable multimodal AI.

Comments & Academic Discussion

Loading comments...

Leave a Comment