Achieving Robust Extrapolation in Materials Property Prediction via Decoupled Transfer Learning

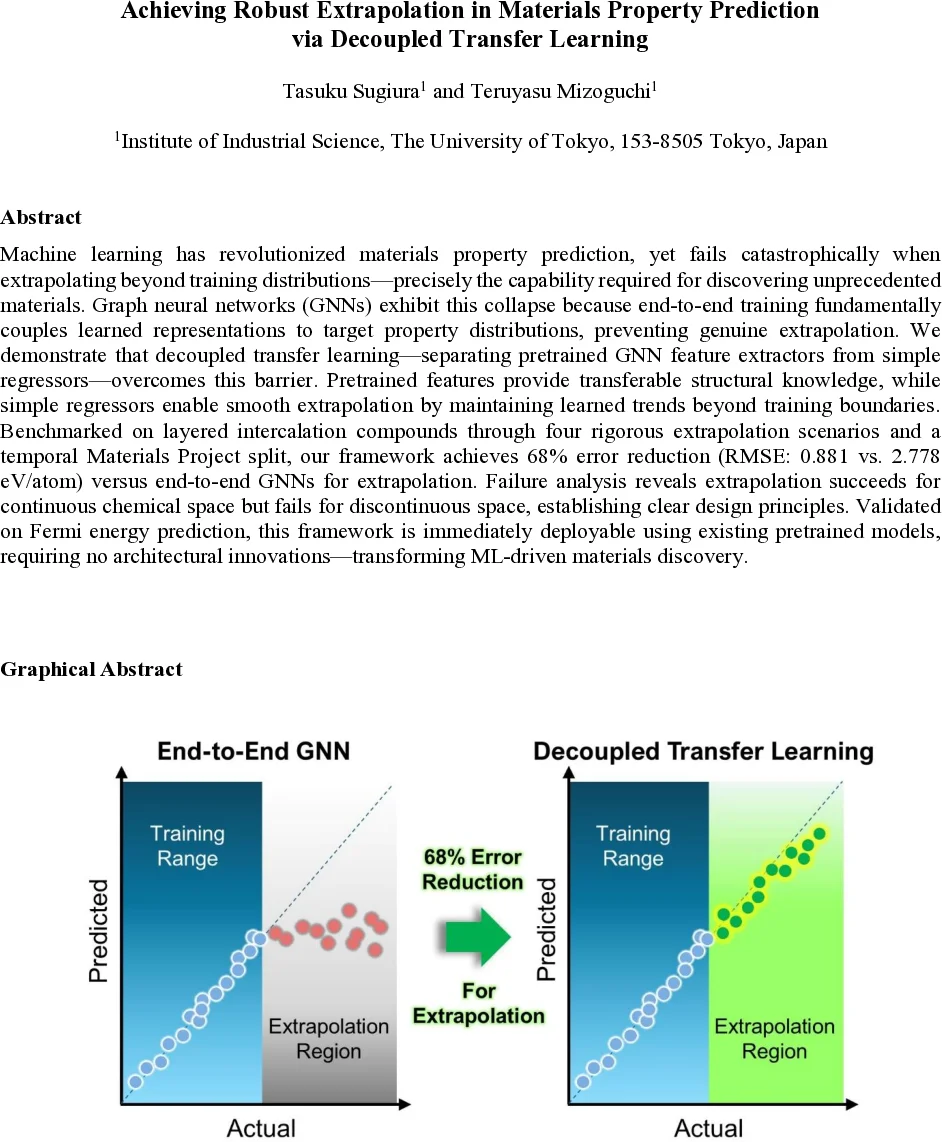

Machine learning has revolutionized materials property prediction, yet it fails catastrophically when extrapolating beyond training distributions, which is precisely the capability required for discovering unprecedented materials. Graph neural networks (GNNs) exhibit this collapse because end-to-end training fundamentally couples learned representations to target property distributions, preventing genuine extrapolation. We demonstrate that decoupled transfer learning, which separates pretrained GNN feature extractors from simple regressors, overcomes this barrier. Pretrained features provide transferable structural knowledge, while simple regressors enable smooth extrapolation by maintaining learned trends beyond training boundaries. Benchmarked on layered intercalation compounds through four rigorous extrapolation scenarios and a temporal Materials Project split, our framework achieves 68% error reduction (RMSE: 0.881 vs. 2.778 eV/atom) versus end-to-end GNNs for extrapolation. Failure analysis reveals extrapolation succeeds for continuous chemical space but fails for discontinuous space, establishing clear design principles. Validated on Fermi energy prediction, this framework is immediately deployable using existing pretrained models, requiring no architectural innovations, transforming ML-driven materials discovery.

💡 Research Summary

The paper tackles a fundamental limitation of current machine‑learning models for materials discovery: catastrophic failure when asked to predict properties of compounds that lie outside the distribution of the training data. While graph neural networks (GNNs) have set the state‑of‑the‑art for interpolative property prediction, the authors argue that end‑to‑end training intrinsically couples the learned representation to the target property distribution. This coupling forces the model’s outputs to remain within the range seen during training, making genuine extrapolation impossible.

To break this coupling, the authors propose a decoupled transfer‑learning framework. First, they freeze the parameters of large, publicly‑available pretrained GNNs (CGCNN, SchNet, and DimeNet++) that have been trained on millions of structures from the Open Catalyst Project. These networks act solely as feature extractors, converting crystal graphs into high‑dimensional vectors that encode coordination environments, bond angles, and other structural motifs. Because the feature extractor is frozen, the structural knowledge acquired during pretraining is preserved and can be transferred to any downstream task.

Second, the extracted features are fed into a simple regression head, either support‑vector regression (SVR) or Ridge regression. The authors argue that such linear or kernel‑based regressors extrapolate more gracefully than deep output layers: a linear head predicts a weighted sum of the input features, so its output is not bounded by activation functions or output normalisation. Consequently, when the regression head is trained on a modest downstream dataset, it can produce values that lie far beyond the training range while still respecting the learned structural trends.
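The two-stage idea can be illustrated with a minimal, dependency-free sketch. Everything in it is an assumption made for illustration: `frozen_features` is a hypothetical stand-in for a frozen pretrained GNN, the data are synthetic, and the ridge head is a small closed-form implementation rather than the authors' code. In practice one would more likely use a library regressor such as scikit-learn's `Ridge` or `SVR`.

```python
import math
import random


def frozen_features(structure):
    """Hypothetical stand-in for a frozen pretrained GNN; nothing here is trained."""
    a, b = structure
    return [a, b, a * b]


def solve(A, rhs):
    """Solve A w = rhs by Gauss-Jordan elimination with partial pivoting."""
    n = len(rhs)
    M = [row[:] + [rhs[i]] for i, row in enumerate(A)]
    for c in range(n):
        p = max(range(c, n), key=lambda r: abs(M[r][c]))
        M[c], M[p] = M[p], M[c]
        for r in range(n):
            if r != c:
                factor = M[r][c] / M[c][c]
                M[r] = [u - factor * v for u, v in zip(M[r], M[c])]
    return [M[i][n] / M[i][i] for i in range(n)]


def ridge_fit(F, y, alpha=1e-3):
    """Closed-form ridge regression on centered features; returns (weights, bias)."""
    n, d = len(F), len(F[0])
    mu = [sum(row[j] for row in F) / n for j in range(d)]
    y_mean = sum(y) / n
    Fc = [[row[j] - mu[j] for j in range(d)] for row in F]
    A = [[sum(r[i] * r[j] for r in Fc) + (alpha if i == j else 0.0)
          for j in range(d)] for i in range(d)]
    rhs = [sum(r[i] * (t - y_mean) for r, t in zip(Fc, y)) for i in range(d)]
    w = solve(A, rhs)
    bias = y_mean - sum(wi * mi for wi, mi in zip(w, mu))
    return w, bias


def predict(w, bias, F):
    return [bias + sum(wi * fi for wi, fi in zip(w, row)) for row in F]


# Synthetic data (assumption): the target is linear in the frozen features.
random.seed(0)
structures = [(random.uniform(-2, 2), random.uniform(-2, 2)) for _ in range(300)]
F = [frozen_features(s) for s in structures]
target = [2.0 * f[0] - f[1] + 0.5 * f[2] + random.gauss(0, 0.01) for f in F]

# Property extrapolation: train only on low targets, test on high ones.
train_idx = [i for i, t in enumerate(target) if t <= 1.0]
test_idx = [i for i, t in enumerate(target) if t > 3.0]
y_train = [target[i] for i in train_idx]
y_test = [target[i] for i in test_idx]

w, bias = ridge_fit([F[i] for i in train_idx], y_train)
pred_test = predict(w, bias, [F[i] for i in test_idx])
rmse = math.sqrt(sum((p - t) ** 2 for p, t in zip(pred_test, y_test)) / len(y_test))

print(f"max training target: {max(y_train):.2f}")
print(f"max test prediction: {max(pred_test):.2f}")
print(f"extrapolation RMSE:  {rmse:.3f}")
```

The sketch works because the synthetic target is, by construction, linear in the frozen features; whether real pretrained GNN features behave this way for a given property is exactly what the paper's benchmarks examine.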

The authors evaluate the approach on layered intercalation compounds (LIC) and a temporal split of the Materials Project (MP) alloy dataset. Four extrapolation scenarios are defined for the LIC data: (1) random split (interpolation baseline), (2) host‑based split (novel crystal hosts), (3) energy‑threshold split (extreme formation energies), and (4) combined split (simultaneous structural and energetic extrapolation). The MP temporal split (MP18 → MP21) mimics real‑world discovery by training on 2018 data and testing on materials added up to 2021, which contain many more unstable structures.
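The split scenarios above can be sketched as simple filters over a list of records. This is a hedged illustration only: the field names (`host`, `energy`, `year`), the toy records, the threshold direction (test set above the threshold), and the rule that the combined split drops records meeting only one criterion are all assumptions, not details taken from the paper.

```python
import random


def random_split(records, test_fraction=0.2, seed=0):
    """Interpolation baseline: shuffle and cut."""
    shuffled = records[:]
    random.Random(seed).shuffle(shuffled)
    n_test = max(1, int(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]


def host_split(records, held_out_hosts):
    """Structural extrapolation: test hosts never appear in training."""
    train = [r for r in records if r["host"] not in held_out_hosts]
    test = [r for r in records if r["host"] in held_out_hosts]
    return train, test


def energy_split(records, threshold):
    """Property extrapolation: test targets lie beyond the training range."""
    train = [r for r in records if r["energy"] <= threshold]
    test = [r for r in records if r["energy"] > threshold]
    return train, test


def combined_split(records, held_out_hosts, threshold):
    """Both at once; records meeting only one criterion are dropped (assumption)."""
    train = [r for r in records
             if r["host"] not in held_out_hosts and r["energy"] <= threshold]
    test = [r for r in records
            if r["host"] in held_out_hosts and r["energy"] > threshold]
    return train, test


def temporal_split(records, cutoff_year):
    """Train on entries up to the cutoff year, test on later additions."""
    train = [r for r in records if r["year"] <= cutoff_year]
    test = [r for r in records if r["year"] > cutoff_year]
    return train, test


# Toy records with hypothetical hosts, energies (eV/atom), and years.
records = [
    {"id": "A1", "host": "host_A", "energy": -1.2, "year": 2017},
    {"id": "A2", "host": "host_A", "energy": 0.4, "year": 2018},
    {"id": "B1", "host": "host_B", "energy": -0.6, "year": 2018},
    {"id": "B2", "host": "host_B", "energy": 0.9, "year": 2020},
    {"id": "C1", "host": "host_C", "energy": -0.3, "year": 2019},
    {"id": "C2", "host": "host_C", "energy": 1.5, "year": 2021},
]

host_train, host_test = host_split(records, {"host_C"})
energy_train, energy_test = energy_split(records, 0.5)
comb_train, comb_test = combined_split(records, {"host_C"}, 0.5)
time_train, time_test = temporal_split(records, 2018)
```

Keeping each scenario as its own function makes it easy to check that no test host, or no test energy value, leaks into the corresponding training set.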

Results show that the decoupled framework retains excellent interpolative performance (R² > 0.995, RMSE ≈ 0.055 eV/atom), comparable to end‑to‑end CGCNN. In the structural extrapolation task, it achieves RMSE = 0.099 eV/atom, outperforming fine‑tuned CGCNN (0.120 eV/atom). For property extrapolation, it reaches an RMSE of 0.205 eV/atom, whereas the end‑to‑end model degrades to 0.378 eV/atom and clusters its predictions near the training range. The most demanding combined extrapolation scenario shows the same trend, demonstrating that the regression head can extend predictions both in chemical space and in property magnitude. The headline extrapolation result is a 68 % reduction in root‑mean‑square error (0.881 eV/atom vs. 2.778 eV/atom) relative to end‑to‑end GNNs, a more than three‑fold reduction in error.
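The percentages follow directly from the reported RMSE pairs. The short check below only uses the numbers quoted in this summary; the helper name `relative_reduction` and the scenario labels are illustrative.

```python
def relative_reduction(baseline, improved):
    """Fractional error reduction of `improved` relative to `baseline`."""
    return 1.0 - improved / baseline


# (baseline RMSE, decoupled RMSE) in eV/atom, as quoted in the summary.
reported = {
    "structural extrapolation (vs. fine-tuned CGCNN)": (0.120, 0.099),
    "property extrapolation (vs. end-to-end model)": (0.378, 0.205),
    "headline extrapolation (vs. end-to-end GNNs)": (2.778, 0.881),
}

for name, (baseline, decoupled) in reported.items():
    reduction = 100 * relative_reduction(baseline, decoupled)
    ratio = baseline / decoupled
    print(f"{name}: {reduction:.1f}% lower RMSE, baseline is {ratio:.2f}x higher")
```

For the headline pair this gives a reduction of about 68 % and a baseline error roughly 3.15 times higher, consistent with the figures in the abstract.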

A detailed failure analysis reveals two systematic limitations. First, when target elements are sparsely represented in the training set, the frozen GNN features lack sufficient information, leading to poorer extrapolation. Second, discontinuous electronic‑structure transitions—such as moving from typical bulk bonding to layered graphene‑like motifs—are rarely present in the pretraining corpus, so the GNN cannot encode the necessary descriptors. In these cases, adding targeted examples or enriching the pretraining dataset markedly improves performance.

The authors also validate the approach on Fermi‑energy prediction, confirming that the method generalizes beyond formation energy to other density‑functional‑theory properties. Importantly, the framework requires no architectural changes: researchers can take any publicly available pretrained GNN, freeze it, and attach a standard regression model. This makes the method immediately deployable, computationally cheap, and compatible with a wide range of downstream tasks.

In summary, the paper establishes two design principles for robust materials‑property extrapolation: (1) leverage massive, diverse pretraining to obtain transferable structural representations, and (2) use simple, extrapolation‑capable regression heads for property prediction. By decoupling representation learning from property fitting, the authors demonstrate that high‑accuracy interpolation and reliable extrapolation can coexist, offering a practical pathway to accelerate the discovery of novel, high‑performance materials across energy storage, catalysis, and sustainable technologies.
