LeafNet: A Large-Scale Dataset and Comprehensive Benchmark for Foundational Vision-Language Understanding of Plant Diseases

Foundation models and vision-language pre-training have significantly advanced Vision-Language Models (VLMs), enabling multimodal processing of visual and linguistic data. However, their application in domain-specific agricultural tasks, such as plant pathology, remains limited due to the lack of large-scale, comprehensive multimodal image–text datasets and benchmarks. To address this gap, we introduce LeafNet, a comprehensive multimodal dataset, and LeafBench, a visual question-answering benchmark developed to systematically evaluate the capabilities of VLMs in understanding plant diseases. The dataset comprises 186,000 leaf digital images spanning 97 disease classes, paired with metadata, generating 13,950 question-answer pairs spanning six critical agricultural tasks. The questions assess various aspects of plant pathology understanding, including visual symptom recognition, taxonomic relationships, and diagnostic reasoning. Benchmarking 12 state-of-the-art VLMs on our LeafBench dataset, we reveal substantial disparity in their disease understanding capabilities. Our study shows performance varies markedly across tasks: binary healthy–diseased classification exceeds 90% accuracy, while fine-grained pathogen and species identification remains below 65%. Direct comparison between vision-only models and VLMs demonstrates the critical advantage of multimodal architectures: fine-tuned VLMs outperform traditional vision models, confirming that integrating linguistic representations significantly enhances diagnostic precision. These findings highlight critical gaps in current VLMs for plant pathology applications and underscore the need for LeafBench as a rigorous framework for methodological advancement and progress evaluation toward reliable AI-assisted plant disease diagnosis. Code is available at https://github.com/EnalisUs/LeafBench.

💡 Research Summary

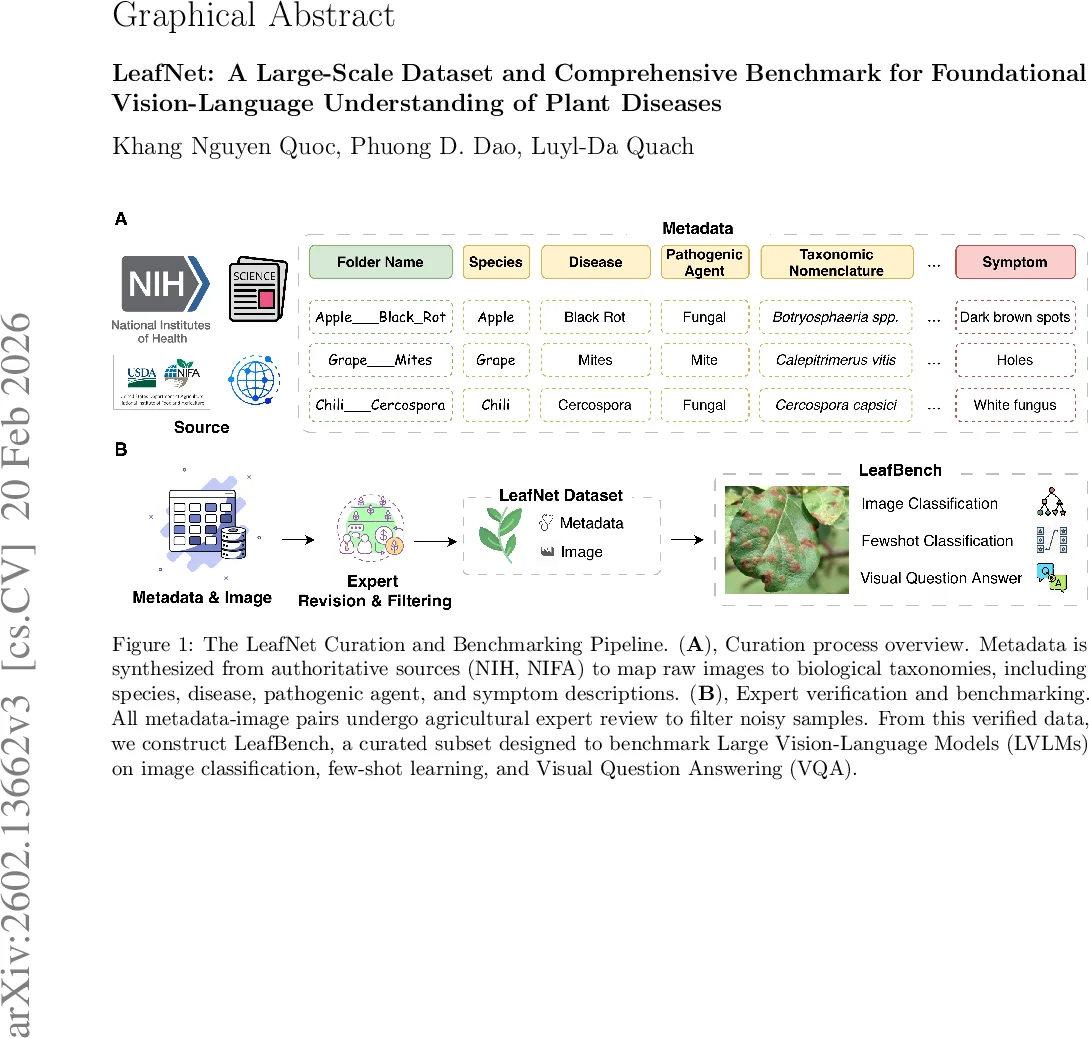

The paper introduces LeafNet, a large‑scale multimodal dataset for plant disease research, and LeafBench, a comprehensive visual‑question‑answering (VQA) benchmark built on top of LeafNet. LeafNet contains 186,000 high‑resolution leaf images covering 22 common crop species and 97 disease classes (62 distinct diseases in the original description, expanded to 97 through fine‑grained taxonomy). Each image is paired with rich expert‑annotated metadata that maps to authoritative taxonomies from NIH, NIFA, and other agricultural databases, including crop species, disease name, pathogen type, and symptom description. All image‑metadata pairs underwent expert verification to filter noisy samples, ensuring high data quality.

LeafBench extracts 13,950 question‑answer pairs from the curated dataset, organized into six critical agricultural tasks: (1) crop species identification, (2) healthy vs. diseased binary classification, (3) disease name identification, (4) symptom identification, (5) pathogen classification (fungi, bacteria, virus), and (6) taxonomic name classification. The questions span multiple formats—multiple‑choice, open‑ended, and reasoning‑based—to evaluate not only visual perception but also linguistic understanding and logical inference in vision‑language models (VLMs).

The authors benchmark 12 state‑of‑the‑art VLMs, including closed‑source giants such as GPT‑4o and Gemini 2.5 Pro, as well as open‑source models (e.g., BLIP‑2, LLaVA‑1.5, Qwen2.5‑VL). Evaluation is performed under three regimes: zero‑shot (no task‑specific fine‑tuning), few‑shot (5‑shot, 10‑shot), and full fine‑tuning on the entire LeafNet training split. For comparison, several vision‑only CNNs/transformers (ResNet‑50, EfficientNet‑B7, ViT) are also evaluated.

Key findings:

- Binary healthy‑diseased classification is relatively easy; most models exceed 90 % accuracy even in zero‑shot mode.

- Fine‑grained tasks (pathogen, species, symptom identification) show a steep performance drop, with accuracies below 65 % for most VLMs in zero‑shot settings.

- Vision‑only models lag behind VLMs on the fine‑grained tasks by 15‑30 % absolute points, confirming that linguistic context provides valuable cues.

- Domain‑specific fine‑tuning dramatically improves results. The agricultural‑focused VLM SCOLD reaches 99.15 % disease identification and 94.92 % symptom identification, outperforming generic VLMs by up to 27.8 % absolute on semantically demanding queries.

- Few‑shot learning yields promising gains: with only 5 examples per class, several models approach 90 % accuracy on disease identification, yet zero‑shot performance remains limited (~70 %).

- Error analysis reveals that many failures stem from visually similar lesions (e.g., brown spot vs. rice blast) and from ambiguous textual descriptions, underscoring the need for richer multimodal cues.

The paper also discusses dataset biases (geographic and climatic concentration), potential taxonomy drift in metadata, and ethical considerations surrounding AI‑driven disease diagnosis. The authors argue that while LeafNet provides a solid foundation, real‑world deployment will require broader environmental coverage, integration of multispectral or temporal data, and clear human‑AI collaboration protocols.

In conclusion, LeafNet and LeafBench constitute a new benchmark ecosystem for evaluating and advancing vision‑language understanding in plant pathology. The work highlights both the promise of multimodal AI—especially when fine‑tuned on domain data—and the current gaps in general‑purpose VLMs for fine‑grained agricultural tasks. Future research directions include expanding data diversity, incorporating additional sensor modalities, improving zero‑shot generalization, and developing explainable, trustworthy AI assistants for precision agriculture.

Comments & Academic Discussion

Loading comments...

Leave a Comment