MUCH: A Multilingual Claim Hallucination Benchmark

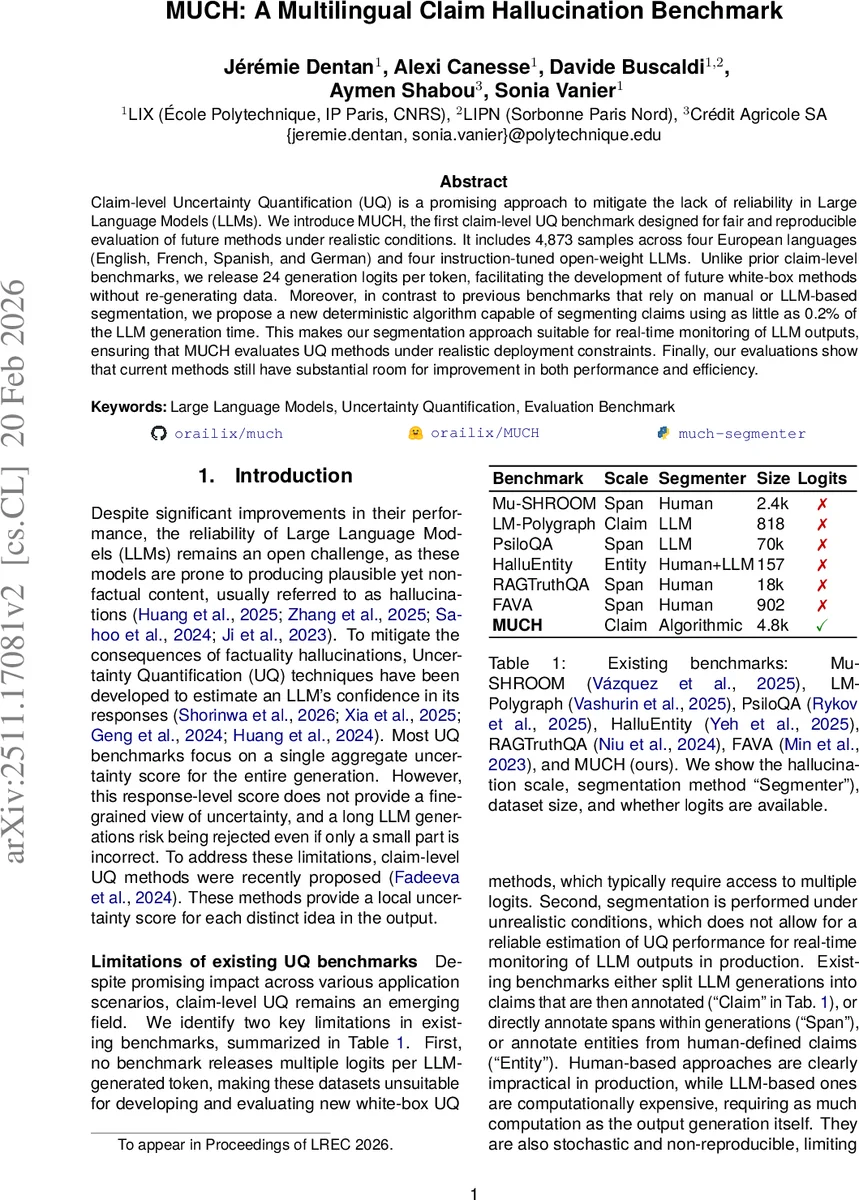

Claim-level Uncertainty Quantification (UQ) is a promising approach to mitigate the lack of reliability in Large Language Models (LLMs). We introduce MUCH, the first claim-level UQ benchmark designed for fair and reproducible evaluation of future methods under realistic conditions. It includes 4,873 samples across four European languages (English, French, Spanish, and German) and four instruction-tuned open-weight LLMs. Unlike prior claim-level benchmarks, we release 24 generation logits per token, facilitating the development of future white-box methods without re-generating data. Moreover, in contrast to previous benchmarks that rely on manual or LLM-based segmentation, we propose a new deterministic algorithm capable of segmenting claims using as little as 0.2% of the LLM generation time. This makes our segmentation approach suitable for real-time monitoring of LLM outputs, ensuring that MUCH evaluates UQ methods under realistic deployment constraints. Finally, our evaluations show that current methods still have substantial room for improvement in both performance and efficiency.

💡 Research Summary

The paper introduces MUCH (Multilingual Claim Hallucination Benchmark), the first claim‑level uncertainty quantification (UQ) benchmark designed for realistic evaluation of future methods. The authors identify two major shortcomings in existing claim‑level benchmarks: (1) the lack of multiple logits per token, which prevents the development and fair comparison of white‑box UQ approaches that rely on log‑probability information, and (2) the reliance on manual or LLM‑based claim segmentation, which is either impractical for production or introduces stochasticity, high computational cost, and mapping difficulties.

MUCH addresses these gaps by providing a dataset of 4,873 question‑answer pairs across four European languages (English, French, Spanish, German) generated by four instruction‑tuned open‑weight LLMs (Llama 3.1 8B‑Instruct, Llama 3.2 3B‑Instruct, Ministral 8B‑Instruct, Gemma 3.4B‑Instruct) at two temperature settings (1.0 and 0.7). For every generated token, the authors release the top‑24 logits, which is sufficient for most existing white‑box methods (e.g., CCP uses only 10 logits). This eliminates the need to regenerate data when testing new algorithms.

A key contribution is the deterministic, rule‑based claim segmenter called much_segmenter. It operates by tokenizing the LLM output into words using NLTK’s TreebankWordTokenizer, detecting claim boundaries via a set of language‑agnostic punctuation and keyword cues, and then mapping character indices back to token indices. The entire dataset can be segmented in only 0.2 % of the computation time required for LLM generation, making it suitable for real‑time monitoring scenarios. The segmenter is language‑agnostic for the four target languages and produces a list of token‑index groups, each representing a claim, guaranteeing a one‑to‑one alignment with the original generation.

The annotation pipeline uses the Mu‑SHROOM test set as the source of factual questions, each linked to a specific Wikipedia page that contains the correct answer. After generation, claim‑level factuality is automatically labeled using two strong LLMs (GPT‑4o and GPT‑4.1) by comparing each claim to the reference Wikipedia content. Only samples where both annotators agree on every claim are retained, resulting in 20,751 binary fact‑check labels (‑1 for false, +1 for true). A small subset was double‑annotated by humans, showing that the automatic labels are as reliable as human inter‑annotator agreement.

The benchmark evaluates five state‑of‑the‑art white‑box claim‑level UQ methods: CCP, SAR, Token Likelihood, Token Entropy, and Maximum Likelihood. Performance is measured using ROC‑AUC, PR‑AUC, and TPR@10 % (among others). All methods achieve modest scores, generally below 70 % across metrics, indicating substantial room for improvement. Moreover, the computational overhead associated with extracting logits and computing token‑level scores is non‑trivial, highlighting efficiency as an additional challenge for deployment.

The authors discuss several future research directions: (1) designing novel uncertainty estimators that exploit the rich 24‑logit information per token, (2) extending the deterministic segmenter to other languages with similar punctuation and stop‑word patterns, (3) exploring hybrid human‑LLM annotation schemes to further improve label quality, and (4) developing lightweight UQ metrics suitable for on‑device or low‑latency settings.

In summary, MUCH provides a comprehensive, reproducible, and multilingual benchmark that includes (i) LLM generations, (ii) per‑token logits, (iii) a fast deterministic claim segmenter, and (iv) high‑quality claim‑level factuality annotations. By addressing the two primary limitations of prior benchmarks, MUCH establishes a solid foundation for the next generation of claim‑level uncertainty quantification research, enabling fair comparison, reproducibility, and realistic assessment of both performance and efficiency.

Comments & Academic Discussion

Loading comments...

Leave a Comment