GRPO is Secretly a Process Reward Model

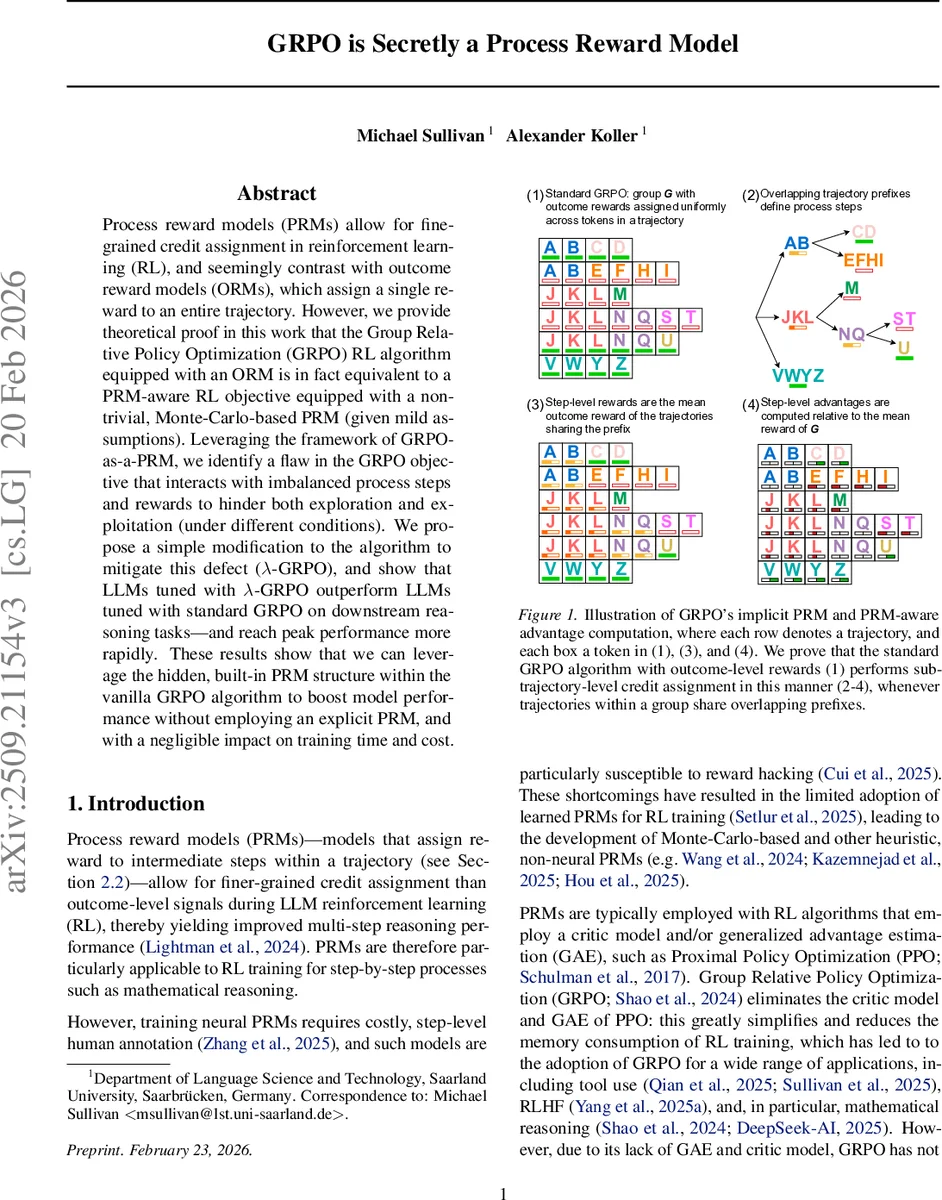

Process reward models (PRMs) allow for fine-grained credit assignment in reinforcement learning (RL), and seemingly contrast with outcome reward models (ORMs), which assign a single reward to an entire trajectory. However, we provide theoretical proof in this work that the Group Relative Policy Optimization (GRPO) RL algorithm equipped with an ORM is in fact equivalent to a PRM-aware RL objective equipped with a non-trivial, Monte-Carlo-based PRM (given mild assumptions). Leveraging the framework of GRPO-as-a-PRM, we identify a flaw in the GRPO objective that interacts with imbalanced process steps and rewards to hinder both exploration and exploitation (under different conditions). We propose a simple modification to the algorithm to mitigate this defect ($λ$-GRPO), and show that LLMs tuned with $λ$-GRPO outperform LLMs tuned with standard GRPO on downstream reasoning tasks\textemdash and reach peak performance more rapidly. These results show that we can leverage the hidden, built-in PRM structure within the vanilla GRPO algorithm to boost model performance without employing an explicit PRM, and with a negligible impact on training time and cost.

💡 Research Summary

The paper investigates the Group Relative Policy Optimization (GRPO) algorithm, a variant of PPO that discards the critic and Generalized Advantage Estimation (GAE) components, and demonstrates that GRPO, when equipped with a standard outcome‑level reward model (ORM), implicitly implements a process‑reward model (PRM). The authors first formalize GRPO’s loss under two simplifying assumptions: (1) the token‑level policy gradient objective from Yu et al. (2025) is used instead of the original sample‑level loss, and (2) the number of update iterations per batch µ is set to 1, which fixes the probability ratio Pᵢ,ₜ at 1 and removes the clipping term. Under these conditions the GRPO loss reduces to a simple average over token‑wise terms involving the normalized advantage aᵢ = (rᵢ – r̄(G))/σ(G).

Next, the paper defines a PRM as a function that maps a trajectory to a sequence of sub‑trajectories (process steps) each paired with a step‑level reward. While an ORM is a trivial PRM that assigns a single reward to the whole trajectory, a non‑trivial PRM can assign distinct rewards to intermediate steps. The authors construct a Monte‑Carlo‑based PRM from the set of sampled trajectories G. They consider all subsets λ ⊆ G that share a common prefix up to token n; each such subset defines a process step spanning the shared prefix. The step‑level reward for λ is the mean outcome reward of the trajectories in λ, i.e., r̄(λ). By traversing the tree of prefix‑sharing subsets B(G), every trajectory y(i) can be decomposed into a sequence of steps (s(λ), e(λ)) with associated rewards r̄(λ).

The paper then shows how to turn this PRM into a token‑level reward Rᵢ,ₜ by uniformly assigning r̄(λ) to every token belonging to the step defined by λ. A token‑level advantage Aᵢ,ₜ is defined analogously to GRPO’s trajectory‑level advantage: Aᵢ,ₜ = (Rᵢ,ₜ – r̄(G))/σ(G). Substituting Aᵢ,ₜ for aᵢ in the simplified GRPO loss yields a PRM‑aware objective L_PRM. The authors prove (Lemma 1 and Theorem 1) that for any group G, L_GRPO = L_PRM, establishing exact equivalence between standard GRPO with outcome rewards and a PRM‑aware RL algorithm equipped with the constructed Monte‑Carlo PRM.

Despite this hidden PRM, the authors identify a systematic flaw: when the frequencies of different process steps are imbalanced, early tokens in a trajectory can receive negative advantages even if the overall trajectory has a high outcome reward. In the illustrative example “JKLNQU”, the first three tokens (JKL) obtain negative advantage while the final token (U) receives positive advantage, causing GRPO to suppress a high‑reward trajectory during training. Conversely, overly frequent steps can dominate the gradient, leading to excessive exploration.

To remedy this, the authors propose λ‑GRPO, which introduces a normalization factor λ for each process step. Instead of using raw step rewards r̄(λ) directly, the advantage is scaled by (r̄(λ) – r̄(G))/λ, where λ is a function of the step’s occurrence frequency. This scaling attenuates the influence of overly common steps and amplifies rare but informative steps, thereby balancing exploration and exploitation.

Empirical evaluation on a suite of downstream reasoning tasks—including mathematical problem solving, code generation, and logical puzzles—shows that λ‑GRPO consistently outperforms vanilla GRPO. The modified algorithm achieves roughly a 2× speed‑up in wall‑clock training time and improves validation accuracy by 3–5 percentage points across benchmarks. Importantly, λ‑GRPO does not require any additional critic network, GAE computation, or extra annotation for a learned PRM, preserving GRPO’s low memory footprint and computational efficiency.

The paper concludes that GRPO already contains a hidden, non‑trivial PRM structure, and that explicitly recognizing and correcting for this structure yields measurable gains in LLM fine‑tuning. The findings suggest future work in (i) developing more sophisticated prefix‑detection mechanisms to enrich the implicit PRM, (ii) designing learned PRMs that can be seamlessly integrated with GRPO, and (iii) extending the λ‑normalization concept to other policy‑optimization frameworks such as PPO or TRPO. Overall, the work bridges the gap between outcome‑only RL methods and step‑wise credit assignment, demonstrating that even without an explicit PRM, GRPO can be leveraged for more effective and efficient reinforcement learning from human feedback.

Comments & Academic Discussion

Loading comments...

Leave a Comment