Learning Compact Video Representations for Efficient Long-form Video Understanding in Large Multimodal Models

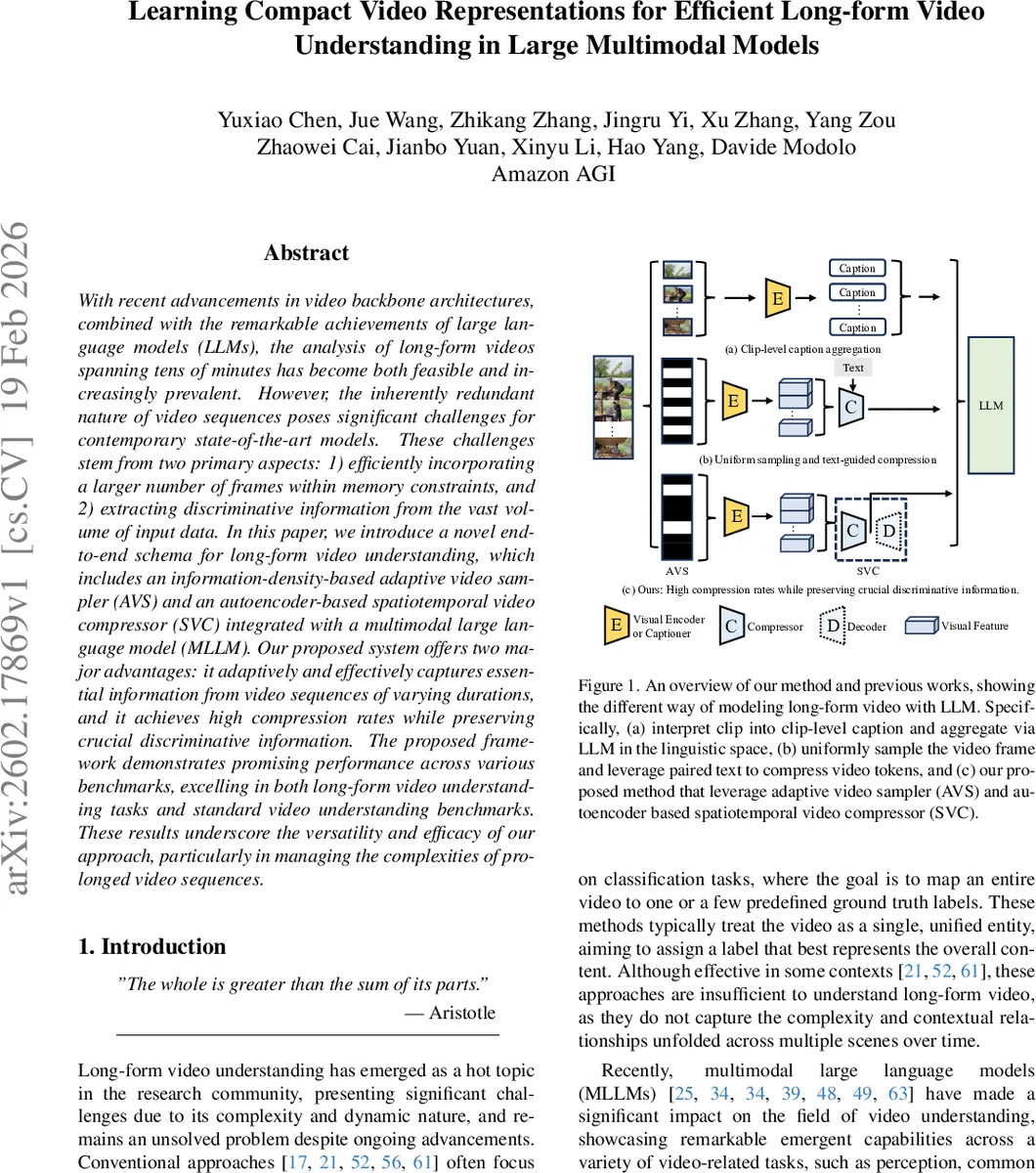

With recent advancements in video backbone architectures, combined with the remarkable achievements of large language models (LLMs), the analysis of long-form videos spanning tens of minutes has become both feasible and increasingly prevalent. However, the inherently redundant nature of video sequences poses significant challenges for contemporary state-of-the-art models. These challenges stem from two primary aspects: 1) efficiently incorporating a larger number of frames within memory constraints, and 2) extracting discriminative information from the vast volume of input data. In this paper, we introduce a novel end-to-end schema for long-form video understanding, which includes an information-density-based adaptive video sampler (AVS) and an autoencoder-based spatiotemporal video compressor (SVC) integrated with a multimodal large language model (MLLM). Our proposed system offers two major advantages: it adaptively and effectively captures essential information from video sequences of varying durations, and it achieves high compression rates while preserving crucial discriminative information. The proposed framework demonstrates promising performance across various benchmarks, excelling in both long-form video understanding tasks and standard video understanding benchmarks. These results underscore the versatility and efficacy of our approach, particularly in managing the complexities of prolonged video sequences.

💡 Research Summary

The paper tackles the pressing problem of efficiently processing long‑form videos (tens of minutes) with multimodal large language models (MLLMs). Existing approaches either convert video clips into textual captions—losing low‑level visual detail and accumulating hallucinations—or employ simple token reduction techniques such as uniform sampling, average pooling, or token merging, which struggle with the high redundancy and diversity of long videos. To address both the memory/compute bottleneck and the need to preserve discriminative visual information, the authors propose a unified pipeline consisting of three components: (1) an Adaptive Video Sampler (AVS), (2) a Spatio‑Temporal Video Compressor (SVC), and (3) a state‑of‑the‑art MLLM (Qwen‑2).

Adaptive Video Sampler (AVS).

AVS first runs a shot‑boundary detector over the entire video, producing a confidence score for each frame that reflects the likelihood of a content change. After non‑maximum suppression to remove redundant detections, the top‑k frames with the highest scores are selected and temporally ordered. This information‑density‑driven sampling mirrors the “chapter‑scene‑shot” structure of movies, assuming that each long video consists of several homogeneous information tubelets separated by distinct transitions. By focusing on frames where the information gradient is steep, AVS captures the most informative moments while discarding the bulk of redundant frames.

Spatio‑Temporal Video Compressor (SVC).

The frames selected by AVS are encoded by a ViT‑based visual encoder, yielding a spatio‑temporal token tensor f (T × H × W × C). SVC is an auto‑encoder that compresses f into a compact latent representation h (t ≤ T, h ≤ H, w ≤ W). The compressor C and decoder D are jointly trained to minimize a reconstruction loss L_rec = |f − D(C(f))|₁, while the visual encoder remains frozen. This design enables training solely on video data, without any paired text, and learns to retain essential motion and appearance cues in the latent space. Empirically, the auto‑encoder‑based compressor outperforms average pooling and token‑merging baselines, achieving a 64‑fold reduction in visual tokens with minimal loss of discriminative information.

Integration with MLLM.

Compressed tokens h are linearly projected into the LLM’s input space and fed together with textual prompts to Qwen‑2. Because the token budget is dramatically reduced, the LLM can attend to the entire video sequence rather than a truncated subset, enabling sophisticated reasoning tasks such as open‑ended dialogue, contextual QA, and long‑range temporal inference. The pipeline thus transforms a potentially billions‑of‑token video into a manageable set of tokens while preserving the semantic richness required for high‑level language understanding.

Experimental Validation.

The authors evaluate the framework on a suite of benchmarks covering both long‑form video understanding (EgoSchema, PercepTest) and standard video classification/recognition datasets (ActivityNet, Kinetics‑400). Key results include:

- EgoSchema: +2.6 % absolute accuracy over LLaVA‑OV, using only 20 % of the visual tokens.

- PercepTest: +3.3 % absolute accuracy with an 80 % reduction in token count.

- Generalization: Competitive performance on short‑video tasks despite the aggressive compression.

Ablation studies show that AVS alone yields a 10–15 % gain, SVC alone a 12 % gain, and their combination delivers >25 % overall improvement, confirming a synergistic effect. Visualizations of shot‑boundary detections and reconstructed features illustrate that critical scenes are retained after compression.

Limitations and Future Directions.

The current backbone is ViT; exploring linear‑complexity sequence models such as Mamba could further improve efficiency. Moreover, the latent tokens could be directly incorporated into the LLM’s self‑attention mechanism, or the compressor could be trained with weak multimodal alignment signals to boost compression quality. Scaling the approach to even longer videos (hours) and multimodal streams (audio, subtitles) is also an open avenue.

Conclusion.

By jointly addressing adaptive sampling and deep spatio‑temporal compression, the proposed framework reduces the visual token budget by 64× while preserving the discriminative cues essential for long‑form video understanding. The integration with a powerful MLLM demonstrates that high‑level reasoning over hours‑long video content is feasible without prohibitive computational costs, establishing a new baseline for future multimodal AI systems handling extensive video data.

Comments & Academic Discussion

Loading comments...

Leave a Comment